Chapter 9. Testing Infrastructure Stacks

This chapter applies the core practice of continuously testing and delivering code to infrastructure stacks. It uses the ShopSpinner example to illustrate how to test a stack project. This includes using online and offline test stages and making use of test fixtures to decouple the stack from dependencies.

Example Infrastructure

The ShopSpinner team uses reusable stack projects (see “Pattern: Reusable Stack”) to create consistent instances of application infrastructure for each of its customers. It can also use this to create test instances of the infrastructure in the pipeline.

The infrastructure for these examples is a standard three-tier system. The infrastructure in each tier consists of:

- Web server container cluster

-

The team runs a single web server container cluster for each region and in each test environment. Applications in the region or environment share this cluster. The examples in this chapter focus on the infrastructure that is specific to each customer, rather than shared infrastructure. So the shared cluster is a dependency in the examples here. For details of how changes are coordinated and tested across this infrastructure, see Chapter 17.

- Application server

-

The infrastructure for each application instance includes a virtual machine, a persistent disk volume, and networking. The networking includes an address block, gateway, routes to the server on its network port, and network access rules.

- Database

-

ShopSpinner runs a separate database instance for each customer application instance, using its provider’s DBaaS (see “Storage Resources”). ShopSpinner’s infrastructure code also defines an address block, routing, and database authentication and access rules.

The Example Stack

To start, we can define a single reusable stack that has all of the infrastructure other than the web server cluster. The project structure could look like Example 9-1.

Example 9-1. Stack project for ShopSpinner customer application

stack-project/└── src/├── appserver_vm.infra├── appserver_networking.infra├── database.infra└── database_networking.infra

Within this project, the file appserver_vm.infra includes code along the lines of what is shown in Example 9-2.

Example 9-2. Partial contents of appserver_vm.infra

virtual_machine:name:appserver-${customer}-${environment}ram:4GBaddress_block:ADDRESS_BLOCK.appserver-${customer}-${environment}storage_volume:STORAGE_VOLUME.app-storage-${customer}-${environment}base_image:SERVER_IMAGE.shopspinner_java_server_imageprovision:tool:servermakerparameters:maker_server:maker.shopspinner.xyzrole:appservercustomer:${customer}environment:${environment}storage_volume:id:app-storage-${customer}-${environment}size:80GBformat:xfs

A team member or automated process can create or update an instance of the stack by running the stack tool. They pass values to the instance using one of the patterns from Chapter 7.

As described in Chapter 8, the team uses multiple test stages (“Progressive Testing”), organized in a sequential pipeline (“Infrastructure Delivery Pipelines”).

Pipeline for the Example Stack

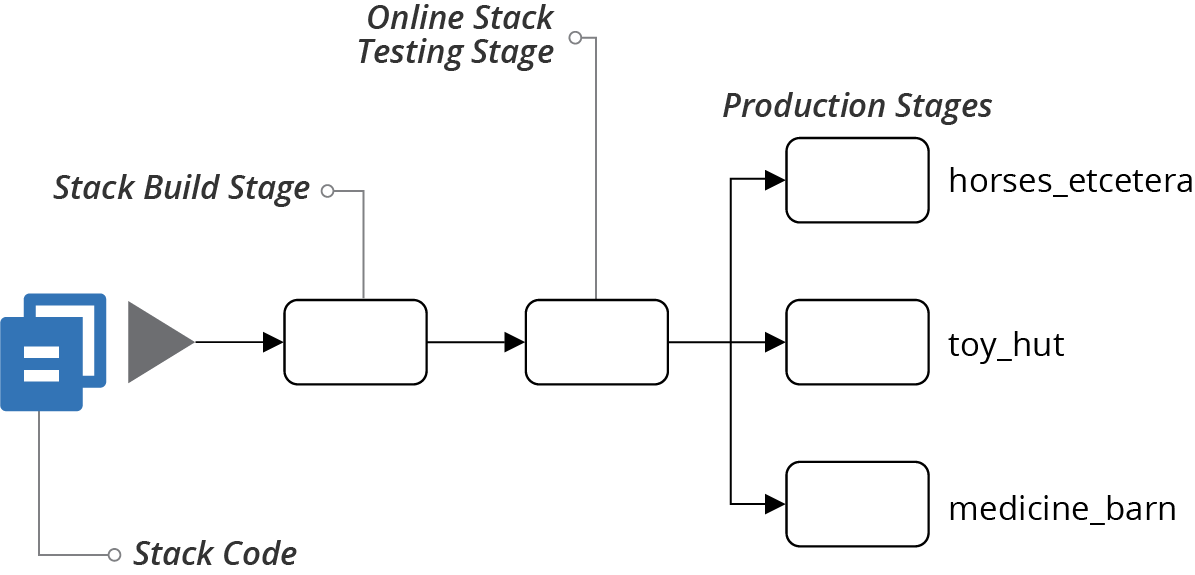

A simple pipeline for the ShopSpinner application infrastructure stack has two testing stages,1 followed by a stage that applies the code to each customer’s production environment (see Figure 9-1).

Figure 9-1. Simplified example pipeline for a stack

The first stage of the pipeline is the stack build stage. A build stage for an application usually compiles code, runs unit tests (described in “Test Pyramid”), and builds a deployable artifact. See “Building an Infrastructure Project” for more details of a typical build stage for infrastructure code. Because earlier stages in a pipeline should run faster, the first stage is normally used to run offline tests.

The second stage of the example pipeline runs online tests for the stack project. Each of the pipeline stages may run more than one set of tests.

Offline Testing Stages for Stacks

An offline stage runs “locally” on an agent node of the service that runs the stage (see “Delivery Pipeline Software and Services”), rather than needing to provision infrastructure on your infrastructure platform. Strict offline testing runs entirely within the local server or container instance, without connecting to any external services such as a database. A softer offline stage might connect to an existing service instance, perhaps even a cloud API, but doesn’t use any real stack infrastructure.

An offline stage should:

-

Run quickly, giving fast feedback if something is incorrect

-

Validate the correctness of components in isolation, to give confidence in each component, and to simplify debugging failures

-

Prove the component is cleanly decoupled

Some of the tests you can carry out on your stack code in an offline stage are syntax checking, offline static code analysis, static code analysis with the platform API, and testing with a mock API.

Syntax Checking

With most stack tools, you can run a dry run command that parses your code without applying it to infrastructure. The command exits with an error if there is a syntax error. The check tells you very quickly when you’ve made a typo in your code change, but misses many other errors. Examples of scriptable syntax checking tools include terraform validate and aws cloudformation validate-template.

The output of a failing syntax check might look like this:

$stack validateError: Invalid resource typeon appserver_vm.infra line 1, in resource "virtual_mahcine":stack does not support resource type "virtual_mahcine".

Offline Static Code Analysis

Some tools can parse and analyze stack source code for a wider class of issues than just syntax, but still without connecting to an infrastructure platform. This analysis is often called linting.2 This kind of tool may look for coding errors, confusing or poor coding style, adherence to code style policy, or potential security issues. Some tools can even modify code to match a certain style, such as the terraform fmt command. There are not as many tools that can analyze infrastructure code as there are for application programming languages. Examples include tflint, CloudFormation Linter, cfn_nag, tfsec, and checkov.

Here’s an example of an error from a fictional analysis tool:

$stacklint1 issue(s) found:Notice: Missing 'Name' tag (vms_must_have_standard_tags)on appserver_vm.infra line 1, in resource "virtual_machine":

In this example, we have a custom rule named vms_must_have_standard_tags that requires all virtual machines to have a set of tags, including one called Name.

Static Code Analysis with API

Depending on the tool, some static code analysis checks may connect to the cloud platform API to check for conflicts with what the platform supports. For example, tflint can check Terraform project code to make sure that any instance types (virtual machine sizes) or AMIs (server images) defined in the code actually exist. Unlike previewing changes (see “Preview: Seeing What Changes Will Be Made”), this type of validation tests the code in general, rather than against a specific stack instance on the platform.

The following example output fails because the code declaring the virtual server specifies a sever image that doesn’t exist on the platform:

$stacklint1 issue(s) found:Notice: base_image 'SERVER_IMAGE.shopspinner_java_server_image' doesn'texist (validate_server_images)on appserver_vm.infra line 5, in resource "virtual_machine":

Testing with a Mock API

You may be able to apply your stack code to a local, mock instance of your infrastructure platform’s API. There are not many tools for mocking these APIs. The only one I’m aware of as of this writing is Localstack. Some tools can mock parts of a platform, such as Azurite, which emulates Azure blob and queue storage.

Applying declarative stack code to a local mock can reveal coding errors that syntax or code analysis checks might not find. In practice, testing declarative code with infrastructure platform API mocks isn’t very valuable, for the reasons discussed in “Challenge: Tests for Declarative Code Often Have Low Value”. However, these mocks may be useful for unit testing imperative code (see “Programmable, Imperative Infrastructure Languages”), especially libraries (see “Dynamically Create Stack Elements with Libraries”).

Online Testing Stages for Stacks

An online stage involves using the infrastructure platform to create and interact with an instance of the stack. This type of stage is slower but can carry out more meaningful testing than online tests. The delivery pipeline service usually runs the stack tool on one of its nodes or agents, but it uses the platform API to interact with an instance of the stack. The service needs to authenticate to the platform’s API; see “Handling Secrets as Parameters” for ideas on how to handle this securely.

Although an online test stage depends on the infrastructure platform, you should be able to test the stack with a minimum of other dependencies. In particular, you should design your infrastructure, stacks, and tests so that you can create and test an instance of a stack without needing to integrate with instances of other stacks.

For example, the ShopSpinner customer application infrastructure works with a shared web server cluster stack. However, the ShopSpinner team members implement their infrastructure, and testing stages, using techniques that allow them to test the application stack code without an instance of the web server cluster.

I cover techniques for splitting stacks and keeping them loosely coupled in Chapter 15. Assuming you have built your infrastructure in this way, you can use test fixtures to make it possible to test a stack on its own, as described in “Using Test Fixtures to Handle Dependencies”.

First, consider how different types of online stack tests work. The tests that an online stage can run include previewing changes, verifying that changes are applied correctly, and proving the outcomes.

Preview: Seeing What Changes Will Be Made

Some stack tools can compare stack code against a stack instance to list changes it would make without actually changing anything. Terraform’s plan subcommand is a well-known example.

Most often, people preview changes against production instances as a safety measure, so someone can review the list of changes to reassure themselves that nothing unexpected will happen. Applying changes to a stack can be done with a two-step process in a pipeline. The first step runs the preview, and a person triggers the second step to apply the changes, once they’ve reviewed the results of the preview.

Having people review changes isn’t very reliable. People might misunderstand or not notice a problematic change. You can write automated tests that check the output of a preview command. This kind of test might check changes against policies, failing if the code creates a deprecated resource type, for example. Or it might check for disruptive changes—fail if the code will rebuild or destroy a database instance.

Another issue is that stack tool previews are usually not deep. A preview tells you that this code will create a new server:

virtual_machine:name:myappserverbase_image:"java_server_image"

But the preview may not tell you that "java_server_image" doesn’t exist, although the apply command will fail to create the server.

Previewing stack changes is useful for checking a limited set of risks immediately before applying a code change to an instance. But it is less useful for testing code that you intend to reuse across multiple instances, such as across test environments for release delivery. Teams using copy-paste environments (see “Antipattern: Copy-Paste Environments”) often use a preview stage as a minimal test for each environment. But teams using reusable stacks (see “Pattern: Reusable Stack”) can use test instances for more meaningful validation of their code.

Verification: Making Assertions About Infrastructure Resources

Given a stack instance, you can have tests in an online stage that make assertions about the infrastructure in the stack. Some examples of frameworks for testing infrastructure resources include:

A set of tests for the virtual machine from the example stack code earlier in this chapter could look like this:

givenvirtual_machine(name:"appserver-testcustomerA-staging"){it{exists}it{is_running}it{passes_healthcheck}it{has_attachedstorage_volume(name:"app-storage-testcustomerA-staging")}}

Most stack testing tools provide libraries to help write assertions about the types of infrastructure resources I describe in Chapter 3. This example test uses a

virtual_machine resource to identify the VM in the stack instance for the staging environment. It makes several assertions about the resource, including whether it has been created (exists), whether it’s running rather than having terminated

(is_running), and whether the infrastructure platform considers it healthy (passes_healthcheck).

Simple assertions often have low value (see “Challenge: Tests for Declarative Code Often Have Low Value”), since they simply restate the infrastructure code they are testing. A few basic assertions (such as exists) help to sanity check that the code was applied successfully. These quickly identify basic problems with pipeline stage configuration or test setup scripts. Tests such as is_running and passes_healthcheck would tell you when the stack tool successfully creates the VM, but it crashes or has some other fundamental issue. Simple assertions like these save you time in troubleshooting.

Although you can create assertions that reflect each of the VM’s configuration items in the stack code, like the amount of RAM or the network address assigned to it, these have little value and add overhead.

The fourth assertion in the example, has_attached storage_volume(), is more interesting. The assertion checks that the storage volume defined in the same stack is attached to the VM. Doing this validates that the combination of multiple declarations works correctly (as discussed in “Testing combinations of declarative code”). Depending on your platform and tooling, the stack code might apply successfully but leave the server and volume correctly tied together. Or you might make an error in your stack code that breaks the attachment.

Another case where assertions can be useful is when the stack code is dynamic. When passing different parameters to a stack can create different results, you may want to make assertions about those results. As an example, this code creates the infrastructure for an application server that is either public facing or internally facing:

virtual_machine:name:appserver-${customer}-${environment}address_block:if(${network_access} == "public")ADDRESS_BLOCK.public-${customer}-${environment}elseADDRESS_BLOCK.internal-${customer}-${environment}end

You could have a testing stage that creates each type of instance and asserts that the networking configuration is correct in each case. You should move more complex variations into modules or libraries (see Chapter 16) and test those modules separately from the stack code. Doing this simplifies testing the stack code.

Asserting that infrastructure resources are created as expected is useful up to a point. But the most valuable testing is proving that they do what they should.

Outcomes: Proving Infrastructure Works Correctly

Functional testing is an essential part of testing application software. The analogy with infrastructure is proving that you can use the infrastructure as intended. Examples of outcomes you could test with infrastructure stack code include:

-

Can you make a network connection from the web server networking segment to an application hosting network segment on the relevant port?

-

Can you deploy and run an application on an instance of your container cluster stack?

-

Can you safely reattach a storage volume when you rebuild a server instance?

-

Does your load balancer correctly handle server instances as they are added and removed?

Testing outcomes is more complicated than verifying that things exist. Not only do your tests need to create or update the stack instance, as I discuss in “Life Cycle Patterns for Test Instances of Stacks”, but you may also need to provision test fixtures. A test fixture is an infrastructure resource that is used only to support a test (I talk about test fixtures in “Using Test Fixtures to Handle Dependencies”).

This test makes a connection to the server to check that the port is reachable, and returns the expected HTTP response:

givenstack_instance(stack:"shopspinner_networking",instance:"online_test"){can_connect(ip_address:stack_instance.appserver_ip_address,port:443)http_request(ip_address:stack_instance.appserver_ip_address,port:443,url:'/').response.codeis('200')}

The testing framework and libraries implement the details of validations like can_connect and http_request. You’ll need to read the documentation for your test tool to see how to write actual tests.

Using Test Fixtures to Handle Dependencies

Many stack projects depend on resources created outside the stack, such as shared networking defined in a different stack project. A test fixture is an infrastructure resource that you create specifically to help you provision and test a stack instance by itself, without needing to have instances of other stacks. Test doubles, mentioned in “Challenge: Dependencies Complicate Testing Infrastructure”, are a type of test fixture.

Using test fixtures makes it much easier to manage tests, keep your stacks loosely coupled, and have fast feedback loops. Without test fixtures, you may need to create and maintain complicated sets of test infrastructure.

A test fixture is not a part of the stack that you are testing. It is additional infrastructure that you create to support your tests. You use test fixtures to represent a stack’s dependencies.

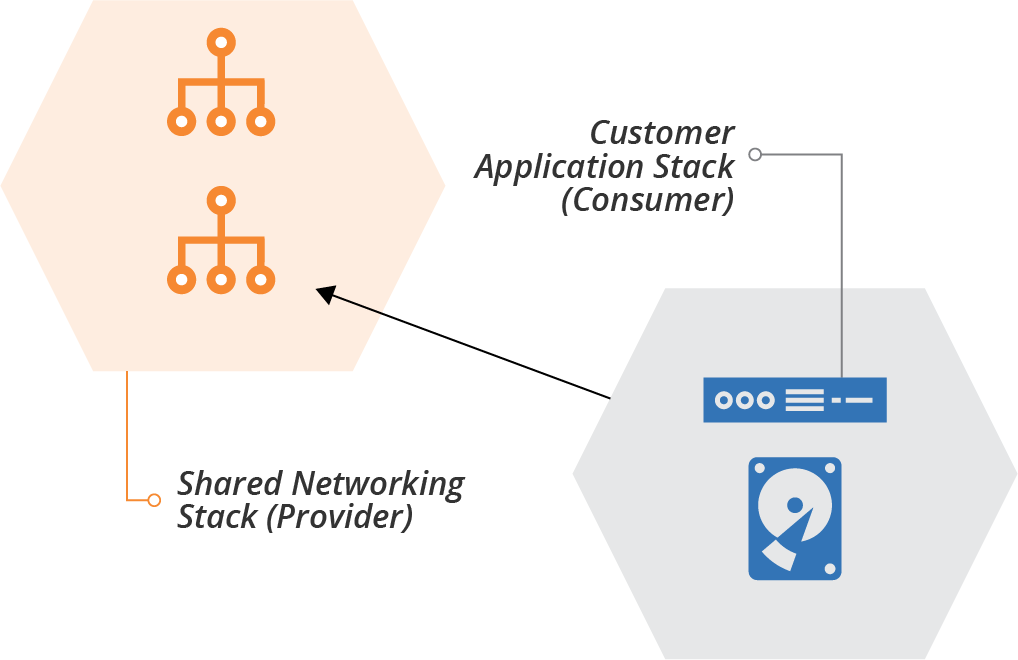

A given dependency is either upstream, meaning the stack you’re testing uses resources provided by another stack, or it is downstream, in which case other stacks use resources from the stack you’re testing. People sometimes call a stack with downstream dependencies the provider, since it provides resources. A stack with upstream dependencies is then called the consumer (see Figure 9-2).

Figure 9-2. Example of a provider stack and consumer stack

Our ShopSpinner example has a provider stack that defines shared networking structures. These structures are used by consumer stacks, including the stack that defines customer application infrastructure. The application stack creates a server and assigns it to a network address block.3

A given stack may be both a provider and a consumer, consuming resources from another stack and providing resources to other stacks. You can use test fixtures to stand in for either upstream or downstream integration points of a stack.

Test Doubles for Upstream Dependencies

When you need to test a stack that depends on another stack, you can create a test double. For stacks, this typically means creating some additional infrastructure. In our example of the shared network stack and the application stack, the application stack needs to create its server in a network address block that is defined by the network stack. Your test setup may be able to create an address block as a test fixture to test the application stack on its own.

It may be better to create the address block as a test fixture rather than creating an instance of the entire network stack. The network stack may include extra infrastructure that isn’t necessary for testing. For instance, it may define network policies, routes, auditing, and other resources for production that are overkill for a test.

Also, creating the dependency as a test fixture within the consumer stack project decouples it from the provider stack. If someone is working on a change to the networking stack project, it doesn’t impact work on the application stack.

A potential benefit of this type of decoupling is to make stacks more reusable and composable. The ShopSpinner team might want to create different network stack projects for different purposes. One stack creates tightly controlled and audited networking for services that have stricter compliance needs, such as payment processing subject to the PCI standard, or customer data protection regulations. Another stack creates networking that doesn’t need to be PCI compliant. By testing application stacks without using either of these stacks, the team makes it easier to use the stack code with either one.

Test Fixtures for Downstream Dependencies

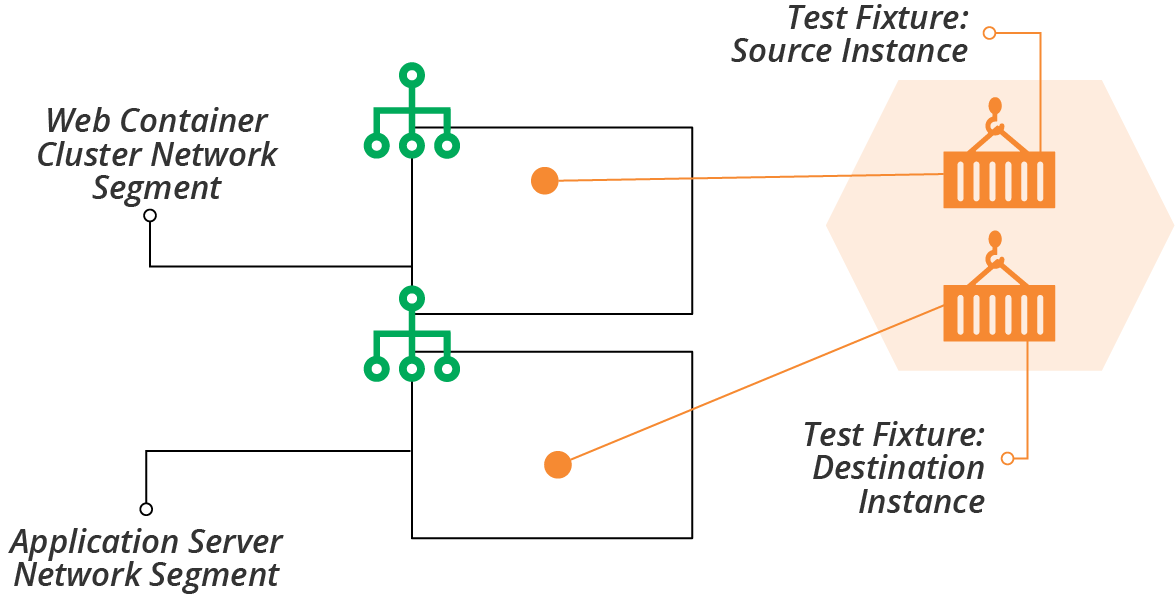

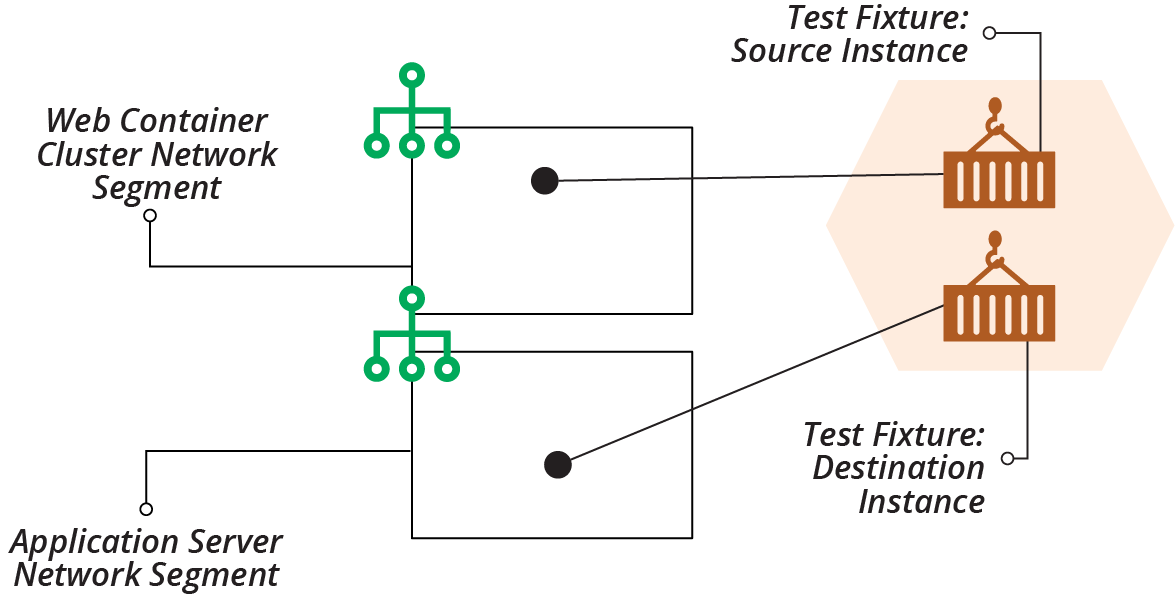

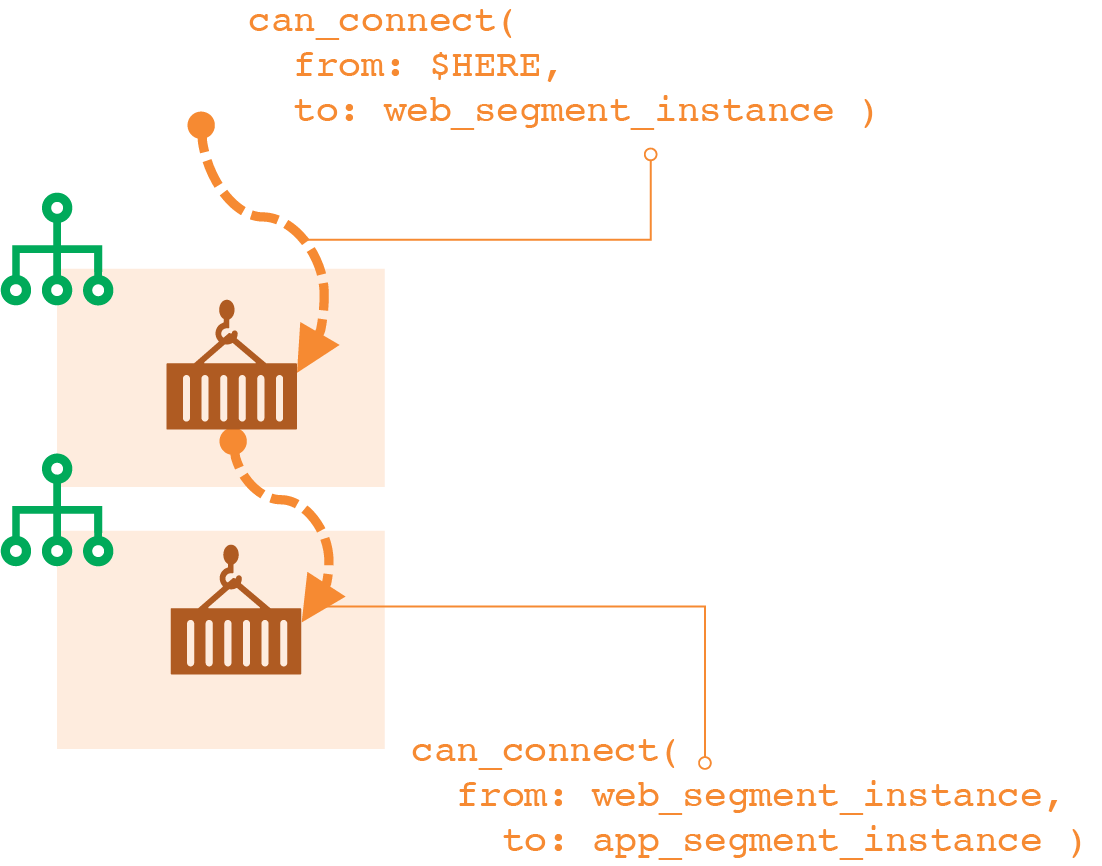

You can also use test fixtures for the reverse situation, to test a stack that provides resources for other stacks to use. In Figure 9-3, the stack instance defines networking structures for ShopSpinner, including segments and routing for the web server container cluster and application servers. The network stack doesn’t provision the container cluster or application servers, so to test the networking, the setup provisions a test fixture in each of these segments.

Figure 9-3. Test instance of the ShopSpinner network stack, with test fixtures

The test fixtures in these examples are a pair of container instances, one assigned to each of the network segments in the stack. You can often use the same testing tools that you use for verification testing (see “Verification: Making Assertions About Infrastructure Resources”) for outcome testing. These example tests use a fictional stack testing DSL:

givenstack_instance(stack:"shopspinner_networking",instance:"online_test"){can_connect(from:$HERE,to:get_fixture("web_segment_instance").address,port:443)can_connect(from:get_fixture("web_segment_instance"),to:get_fixture("app_segment_instance").address,port:8443)}

The method can_connect executes from $HERE, which would be the agent where the test code is executing, or from a container instance. It attempts to make an HTTPS connection on the specified port to an IP address. The get_fixture() method fetches the details of a container instance created as a test fixture.

The test framework might provide the method can_connect, or it could be a custom method that the team writes.

You can see the connections that the example test code makes in Figure 9-4.

Figure 9-4. Testing connectivity in the ShopSpinner network stack

The diagram shows the paths for both tests. The first test connects from outside the stack to the test fixture in the web segment. The second test connects from the fixture in the web segment to the fixture in the application segment.

Refactor Components So They Can Be Isolated

Sometimes a particular component can’t be easily isolated. Dependencies on other components may be hardcoded or simply too messy to pull apart. One of the benefits of writing tests while designing and building systems, rather than afterward, is that it forces you to improve your designs. A component that is difficult to test in isolation is a symptom of design issues. A well-designed system should have loosely coupled components.

So when you run across components that are difficult to isolate, you should fix the design. You may need to completely rewrite components, or replace libraries, tools, or applications. As the saying goes, this is a feature, not a bug. Clean design and loosely coupled code is a byproduct of making a system testable.

There are several strategies for restructuring systems. Martin Fowler has written about refactoring and other techniques for improving system architecture. For example, the Strangler Application prioritizes keeping the system fully working while restructuring it over time.

Part IV of this book involves more detailed rules and examples for modularizing and integrating infrastructure.

Life Cycle Patterns for Test Instances of Stacks

Before virtualization and cloud, everyone maintained static, long-lived test environments. Although many teams still have these environments, there are advantages to creating and destroying environments on demand. The following patterns describe the trade-offs of keeping a persistent stack instance, creating an ephemeral instance for each test run, and ways of combining both approaches. You can also apply these patterns to application and full system test environments as well as to testing infrastructure stack code.

Pattern: Persistent Test Stack

Also known as: static environment.

A testing stage can use a persistent test stack instance that is always running. The stage applies each code change as an update to the existing stack instance, runs the tests, and leaves the resulting modified stack in place for the next run (see Figure 9-5).

Figure 9-5. Persistent test stack instance

Motivation

It’s usually much faster to apply changes to an existing stack instance than to create a new instance. So the persistent test stack can give faster feedback, not only for the stage itself but for the full pipeline.

Applicability

A persistent test stack is useful when you can reliably apply your stack code to the instance. If you find yourself spending time fixing broken instances to get the pipeline running again, you should consider one of the other patterns in this chapter.

Consequences

It’s not uncommon for stack instances to become “wedged” when a change fails and leaves it in a state where any new attempt to apply stack code also fails. Often, an instance gets wedged so severely that the stack tool can’t even destroy the stack so you can start over. So your team spends too much time manually unwedging broken test instances.

You can often reduce the frequency of wedged stacks through better stack design. Breaking stacks down into smaller and simpler stacks, and simplifying dependencies between stacks, can lower your wedge rate. See Chapter 15 for more on this.

Implementation

It’s easy to implement a persistent test stack. Your pipeline stage runs the stack tool command to update the instance with the relevant version of the stack code, runs the tests, and then leaves the stack instance in place when finished.

You may rebuild the stack completely as an ad hoc process, such as someone running the tool from their local computer, or using an extra stage or job outside the routine pipeline flow.

Related patterns

The periodic stack rebuild pattern discussed in “Pattern: Periodic Stack Rebuild” is a simple tweak to this pattern, tearing the instance down at the end of the working day and building a new one every morning.

Pattern: Ephemeral Test Stack

Also known as: quick and dirty plus slow and clean.

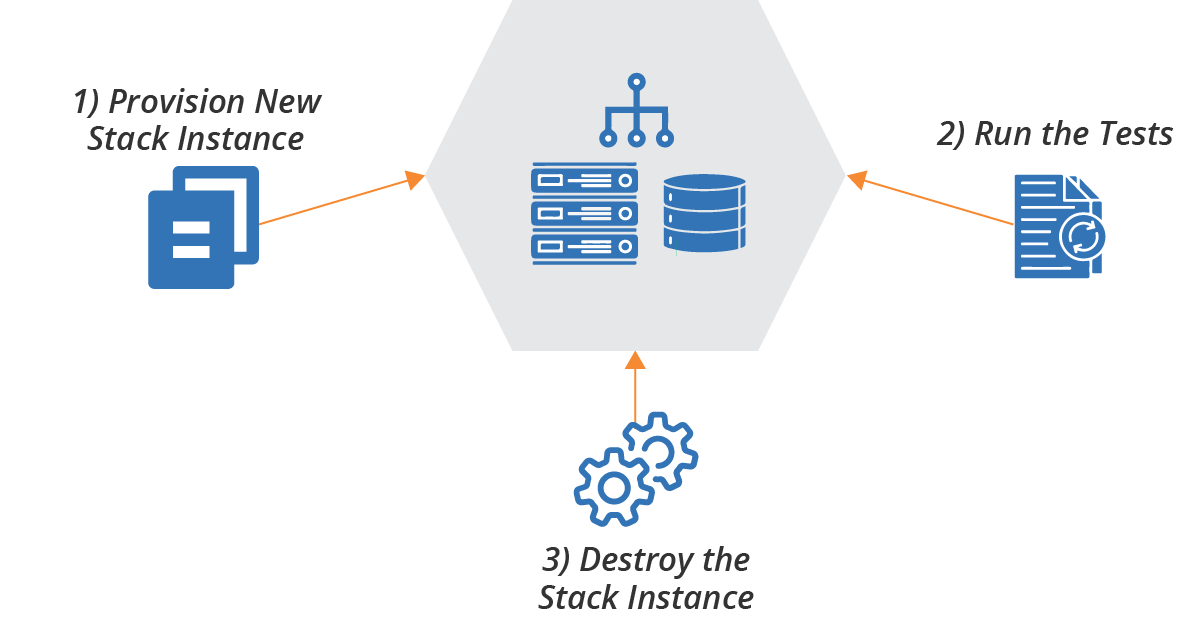

With the ephemeral test stack pattern, the test stage creates and destroys a new instance of the stack every time it runs (see Figure 9-6).

Figure 9-6. Ephemeral test stack instance

Motivation

An ephemeral test stack provides a clean environment for each run of the tests. There is no risk from data, fixtures, or other “cruft” left over from a previous run.

Applicability

You may want to use ephemeral instances for stacks that are quick to provision from scratch. “Quick” is relative to the feedback loop you and your teams need. For more frequent changes, like commits to application code during rapid development phases, the time to build a new environment is probably longer than people can tolerate. But less frequent changes, such as OS patch updates, may be acceptable to test with a complete rebuild.

Consequences

Stacks generally take a long time to provision from scratch. So stages using ephemeral stack instances make feedback loops and delivery cycles slower.

Implementation

To implement an ephemeral test instance, your test stage should run the stack tool command to destroy the stack instance when testing and reporting have completed. You may want to configure the stage to stop before destroying the instance if the tests fail so that people can debug the failure.

Related patterns

The continuous stack reset pattern (“Pattern: Continuous Stack Reset”) is similar, but runs the stack creation and destruction commands out of band from the stage, so the time taken doesn’t affect feedback loops.

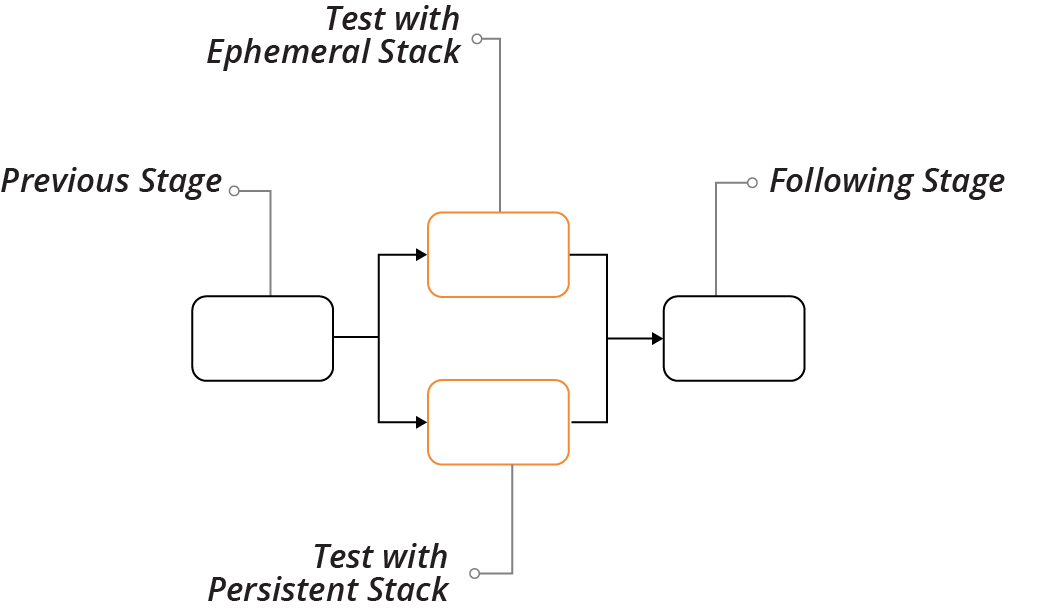

Antipattern: Dual Persistent and Ephemeral Stack Stages

Also known as: nightly rebuild.

With persistent and ephemeral stack stages, the pipeline sends each change to a stack to two different stages, one that uses an ephemeral stack instance, and one that uses a persistent stack instance. This combines the persistent test stack pattern (see “Pattern: Persistent Test Stack”) and the ephemeral test stack pattern (see “Pattern: Ephemeral Test Stack”).

Motivation

Teams usually implement this to work around the disadvantages of each of the two patterns it combines. If all works well, the “quick and dirty” stage (the one using the persistent instance) provides fast feedback. If that stage fails because the environment becomes wedged, you will get feedback eventually from the “slow and clean” stage (the one using the ephemeral instance).

Applicability

It might be worth implementing both types of stages as an interim solution while moving to a more reliable solution.

Consequences

In practice, using both types of stack life cycle combines the disadvantages of both. If updating an existing stack is unreliable, then your team will still spend time manually fixing that stage when it goes wrong. And you probably wait until the slower stage passes before being confident that a change is good.

This antipattern is also expensive, since it uses double the infrastructure resources, at least during the test run.

Implementation

You implement dual stages by creating two pipeline stages, both triggered by the previous stage in the pipeline for the stack project, as shown in Figure 9-7. You may require both stages to pass before promoting the stack version to the following stage, or you may promote it when either of the stages passes.

Figure 9-7. Dual persistent and ephemeral stack stages

Related patterns

This antipattern combines the persistent test stack pattern (see “Pattern: Persistent Test Stack”) and the ephemeral test stack pattern (see “Pattern: Ephemeral Test Stack”).

Pattern: Periodic Stack Rebuild

Periodic stack rebuild uses a persistent test stack instance (see “Pattern: Persistent Test Stack”) for the stack test stage, and then has a process that runs out-of-band to destroy and rebuild the stack instance on a schedule, such as nightly.

Motivation

People often use periodic rebuilds to reduce costs. They destroy the stack at the end of the working day and provision a new one at the start of the next day.

Periodic rebuilds might help with unreliable stack updates, depending on why the updates are unreliable. In some cases, the resource usage of instances builds up over time, such as memory or storage that accumulates across test runs. Regular resets can clear these out.

Applicability

Rebuilding a stack instance to work around resource usage usually masks underlying problems or design issues. In this case, this pattern is, at best, a temporary hack, and at worst, a way to allow problems to build up until they cause a disaster.

Destroying a stack instance when it isn’t in use to save costs is sensible, especially when using metered resources such as with public cloud platforms.

Consequences

If you use this pattern to free up idle resources, you need to consider how you can be sure they aren’t needed. For example, people working outside of office hours, or in other time zones, may be blocked without test environments.

Implementation

Most pipeline orchestration tools make it easy to create jobs that run on a schedule to destroy and build stack instances. A more sophisticated solution would run based on activity levels. For example, you could have a job that destroys an instance if the test stage hasn’t run in the past hour.

There are three options for triggering the build of a fresh instance after destroying the previous instance. One is to rebuild it right away after destroying it. This approach clears resources but doesn’t save costs.

A second option is to build the new environment instance at a scheduled point in time. But it may stop people from working flexible hours.

The third option is for the test stage to provision a new instance if it doesn’t currently exist. Create a separate job that destroys the instance, either on a schedule or after a period of inactivity. Each time the testing stage runs, it first checks whether the instance is already running. If not, it provisions a new instance first. With this approach, people occasionally need to wait longer than usual to get test results. If they are the first person to push a change in the morning, they need to wait for the system to provision the stack.

Related patterns

This pattern can play out like the persistent test stack pattern (see “Pattern: Persistent Test Stack”)—if your stack updates are unreliable, people spend time fixing broken instances.

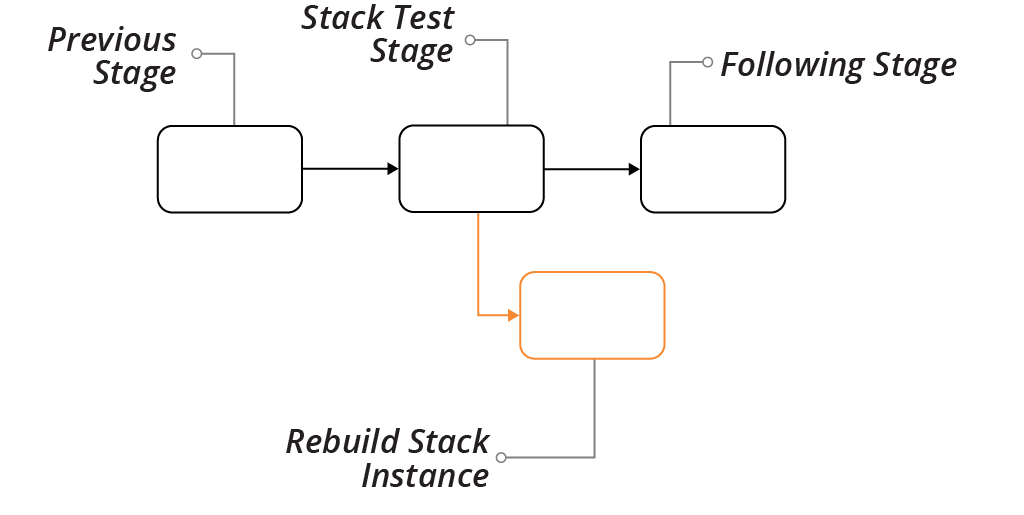

Pattern: Continuous Stack Reset

With the continuous stack reset pattern, every time the stack testing stage completes, an out-of-band job destroys and rebuilds the stack instance (see Figure 9-8).

Figure 9-8. Pipeline flow for continuous stack reset

Motivation

Destroying and rebuilding the stack instance every time provides a clean slate to each testing run. It may automatically remove a broken instance unless it is too broken for the stack tool to destroy. And it removes the time it takes to create and destroy the stack instance from the feedback loop.

Another benefit of this pattern is that it can reliably test the update process that would happen for the given stack code version in production.

Applicability

Destroying the stack instance in the background can work well if the stack project doesn’t tend to break and need manual intervention to fix.

Consequences

Since the stack is destroyed and provisioned outside the delivery flow of the pipeline, problems may not be visible. The pipeline can be green, but the test instance may break behind the scenes. When the next change reaches the test stage, it may take time to realize it failed because of the background job rather than because of the change itself.

Implementation

When the test stage passes, it promotes the stack project code to the next stage. It also triggers a job to destroy and rebuild the stack instance. When someone pushes a new change to the code, the test stage applies it to the instance as an update.

You need to decide which version of the stack code to use when rebuilding the instance. You could use the same version that has just passed the stage. An alternative is to pull the last version of the stack code applied to the production instance. This way, each version of stack code is tested as an update to the current production version. Depending on how your infrastructure code typically flows to production, this may be a more accurate representation of the production upgrade process.

Related patterns

Ideally, this pattern resembles the persistent test stack pattern (see “Pattern: Persistent Test Stack”), providing feedback, while having the reliability of the ephemeral test stack pattern (see “Pattern: Ephemeral Test Stack”).

Test Orchestration

I’ve described each of the moving parts involved in testing stacks: the types of tests and validations you can apply, using test fixtures to handle dependencies, and life cycles for test stack instances. But how should you put these together to set up and run tests?

Most teams use scripts to orchestrate their tests. Often, these are the same scripts they use to orchestrate running their stack tools. In “Using Scripts to Wrap Infrastructure Tools”, I’ll dig into these scripts, which may handle configuration, coordinating actions across multiple stacks, and other activities as well as testing.

Test orchestration may involve:

-

Creating test fixtures

-

Loading test data (more often needed for application testing than infrastructure testing)

-

Managing the life cycle of test stack instances

-

Providing parameters to the test tool

-

Running the test tool

-

Consolidating test results

-

Cleaning up test instances, fixtures, and data

Most of these topics, such as test fixtures and stack instance life cycles, are covered earlier in this chapter. Others, including running the tests and consolidating the results, depend on the particular tool.

Two guidelines to consider for orchestrating tests are supporting local testing and avoiding tight coupling to pipeline tools.

Support Local Testing

People working on infrastructure stack code should be able to run the tests themselves before pushing code into the shared pipeline and environments. “Personal Infrastructure Instances” discusses approaches to help people work with personal stack instances on an infrastructure platform. Doing this allows you to code and run online tests before pushing changes.

As well as being able to work with personal test instances of stacks, people need to have the testing tools and other elements involved in running tests on their local working environment. Many teams use code-driven development environments, which automate installing and configuring tools. You can use containers or virtual machines for packaging development environments that can run on different types of desktop systems.4 Alternatively, your team could use hosted workstations (hopefully configured as code), although these may suffer from latency, especially for distributed teams.

A key to making it easy for people to run tests themselves is using the same test orchestration scripts across local work and pipeline stages. Doing this ensures that tests are set up and run consistently everywhere.

Avoid Tight Coupling with Pipeline Tools

Many CI and pipeline orchestration tools have features or plug-ins for test orchestration, even configuring and running the tests for you. While these features may seem convenient, they make it difficult to set up and run your tests consistently outside the pipeline. Mixing test and pipeline configuration can also make it painful to make changes.

Instead, you should implement your test orchestration in a separate script or tool. The test stage should call this tool, passing a minimum of configuration parameters. This approach keeps the concerns of pipeline orchestration and test orchestration loosely coupled.

Test Orchestration Tools

Many teams write custom scripts to orchestrate tests. These scripts are similar to or may even be the same scripts used to orchestrate stack management (as described in “Using Scripts to Wrap Infrastructure Tools”). People use Bash scripts, batch files, Ruby, Python, Make, Rake, and others I’ve never heard of.

There are a few tools available that are specifically designed to orchestrate infrastructure tests. Two I know of are Test Kitchen and Molecule. Test Kitchen is an open source product from Chef that was originally aimed at testing Chef cookbooks. Molecule is an open source tool designed for testing Ansible playbooks. You can use either tool to test infrastructure stacks, for example, using Kitchen-Terraform.

The challenge with these tools is that they are designed with a particular workflow in mind, and can be difficult to configure to suit the workflow you need. Some people tweak and massage them, while others find it simpler to write their own scripts.

Conclusion

This chapter gave an example of creating a pipeline with multiple stages to implement the core practice of continuously testing and delivering stack-level code. One of the challenges with testing stack code is tooling. Although there are some tools available—many of which I mention in this chapter—TDD, CI, and automated testing are not very well established for infrastructure as of this writing. You have a journey to discover the tools that you can use, and may need to cover gaps in the tooling with custom scripting. Hopefully, this will improve over time.

1 This pipeline is much simpler than what you’d use in reality. You would probably have at least one stage to test the stack and the application together (see “Delivering Infrastructure and Applications”). You might also need a customer acceptance testing stage before each customer production stage. This also doesn’t include governance and approval stages, which many organizations require.

2 The term lint comes from a classic Unix utility that analyzes C source code.

3 Chapter 17 explains how to connect stack dependencies.

4 Vagrant is handy for sharing virtual machine configuration between members of a team.