6

How the Brain Makes Emotions

Have you ever wanted to punch your boss? I would never advocate workplace violence, of course, and many bosses are terrific work partners. But sometimes we are blessed with supervisors who personify the German emotion word Backpfeifengesicht, meaning “a face in need of a fist.”

Suppose you have such a boss, and he’s been handing you extra projects for almost a year. You’ve been expecting a promotion for all your good work, but he has just informed you that the promotion went to someone else. How would you feel?

If you live in a Western culture, you’d likely feel angry. Your brain would issue numerous predictions of “Anger” simultaneously. One prediction might be to pound your fist on the desk and yell at your boss. Another is to stand up and walk slowly across the room toward your boss, leaning in menacingly to whisper, “You will regret this.” Or you could sit quietly in your chair as you scheme to undermine your boss’s career.1

These diverse predictions of “Anger” have similarities, such as the boss, the lost promotion, and the common goal to exact vengeance. They also have plenty of differences, because yelling, whispering, and silence require different sensory and motor predictions. Your action also is different in each case (pounding, leaning, sitting), so your inner-body changes are different, as are the consequences for your body budget, and therefore the interoceptive and affective consequences are different as well. Ultimately, through a process we’ll discuss shortly, your brain selects a winning instance of “Anger” that best fits your goal in this particular situation. The winning instance determines how you behave and what you experience. This process is categorization.

The scenario with your boss could play out differently, however. You could be angry with a different goal, like changing your boss’s mind, or maintaining social relations with the coworker who got the promotion in your place. Or you could construct an instance of a different emotion such as “Regret” or “Fear,” or a non-emotion like “Emancipation,” or a physical symptom like a “Headache,” or a perception that your boss is an “Idiot.” In each case, your brain follows a similar process, categorizing to best fit the entire situation and your internal sensations, based on past experience. Categorization means selecting a winning instance that becomes your perception and guides your action.2

It takes a rich set of concepts to construct emotion, as you read in the preceding chapter. Now you’ll learn how your brain acquires and uses your conceptual system from your earliest moments as an infant. Along the way, you’ll also learn the neural basis for several important topics you’ve seen previously: emotional granularity, population thinking, why emotions feel triggered rather than constructed, and why your body-budgeting regions can affect every decision and action you make.* When taken as a whole, these explanations hint at a unifying framework for how the brain makes meaning: one of the most extraordinary mysteries of the human mind.

…

The infant brain is missing most of the concepts that we have as adults. Babies don’t know what telescopes are, or sea cucumbers, or picnics, let alone purely mental concepts like “Whimsy” or “Schadenfreude.” A newborn is experientially blind to a great extent. Not surprisingly, the infant brain does not predict well. A grown-up brain is dominated by prediction, but an infant brain is awash in prediction error. So babies must learn about the world from sensory input before their brains can model the world. This learning is a primary task of the infant brain.

At first, much of the onslaught of sensory input is new to an infant’s brain, and its significance is undetermined, so little will be ignored. If sensory input is like a skipping stone on an ocean wave of brain activity, for infants the stone is a boulder. Infants absorb the sensory input around them and learn, learn, learn. The developmental psychologist Alison Gopnik describes babies as having a “lantern” of attention that is exquisitely bright but diffuse. In contrast, your adult brain has a network to shut out information that might sidetrack your predictions, allowing you to do things like read this book without distraction. You have a built-in “spotlight” of attention that illuminates some things, such as these words, while leaving other things in the dark. The infant brain’s “lantern” cannot focus in this manner.3

As the months pass, if everything is working properly, the infant brain begins to predict more effectively. Sensations from the outside world have become concepts in the infant’s model of the world; what was outside is now inside. These sensory experiences, over time, create the opportunity for the infant brain to make coordinated predictions that span the senses. A rumbling tummy in a bright room after awakening means that it’s morning, whereas a warm wetness with bright overhead light means that it’s evening bath time. When my daughter, Sophia, was only a few weeks old, we capitalized on such multisensory predictions to help her develop sleep patterns that would not reduce us to sleep-deprived zombies. We exposed her to distinct songs, stories, colored blankets, and other rituals to help her distinguish statistically between naptime and bedtime, so she would sleep for shorter or longer stretches.4

How does the infant brain, equipped with a smattering of concrete concepts and dominated by prediction error, eventually encompass thousands of complex, purely mental concepts like “Awe” and “Despair,” each of which is a population of diverse instances? This is actually a question of engineering, and its solution can be found in the architecture of the human cerebral cortex. It all comes down to some basic problems of efficiency and energy. An infant brain must continually learn and update its concepts in a changing environment. This task requires a mighty powerful, efficient brain. But this brain has practical constraints. Its networks of neurons can grow only so big and still fit inside a skull that can be birthed through a human pelvis. Neurons are also expensive little cells to keep alive (they require a lot of energy), and so a brain has a limit on how many connections it can support metabolically and still run. So the infant brain must transfer information efficiently by passing it to as few neurons as possible.

The solution to this engineering challenge is a cortex that represents concepts so that similarities are separated from differences. This separation, as you will now see, leads to a tremendous optimization.

Whenever you watch a video on YouTube, you’re witnessing efficient information transfer of a similar kind. A video is a sequence of still images or “frames” displayed in rapid succession. There is great redundancy from one frame to the next, however, so when YouTube’s server sends the stream of video information over the Internet to your computer or phone, it needn’t send every single pixel from every frame. It’s more efficient to communicate only what has changed from one frame to the next, because any static areas of the previous frame have already been transmitted. YouTube separates the video’s similarities from its differences to speed up transmission, and software on your computer or phone assembles the pieces into a cohesive video.
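To make the idea concrete, here is a minimal sketch in Python of sending only what has changed between frames. It is a toy illustration, not how YouTube or any real video codec works; real codecs are far more elaborate. The names `encode` and `decode`, and the representation of a frame as a flat list of pixel values, are assumptions made for this example.

```python
from typing import Dict, List, Tuple

Frame = List[int]        # toy model: a frame is a flat list of pixel values
Delta = Dict[int, int]   # pixel index -> new value, only for pixels that changed


def encode(frames: List[Frame]) -> Tuple[Frame, List[Delta]]:
    """Keep the first frame whole, then store only the differences.

    Assumes at least one frame and that all frames have the same length.
    """
    first = list(frames[0])
    deltas: List[Delta] = []
    prev = first
    for frame in frames[1:]:
        # Record a pixel only if it differs from the previous frame.
        deltas.append({i: v for i, (p, v) in enumerate(zip(prev, frame)) if p != v})
        prev = frame
    return first, deltas


def decode(first: Frame, deltas: List[Delta]) -> List[Frame]:
    """Rebuild every frame by applying each set of changes in order."""
    frames = [list(first)]
    for delta in deltas:
        frame = list(frames[-1])   # start from the previous frame
        for i, v in delta.items():
            frame[i] = v           # overwrite only the changed pixels
        frames.append(frame)
    return frames


# Three tiny four-pixel frames in which one pixel changes each time.
frames = [[0, 0, 0, 0], [0, 9, 0, 0], [0, 9, 0, 5]]
first, deltas = encode(frames)
restored = decode(first, deltas)
```

In this example the sender transmits one full frame plus two single-pixel changes instead of three full frames, and the receiver reassembles the original sequence exactly.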

The human brain does much the same thing when it processes prediction error. The sensory information from sight is highly redundant like a video, and the same is true for sound, smell, and the other senses. The brain represents this information as patterns of firing neurons, and it’s advantageous (and efficient) to represent it with as few neurons as possible.

For example, the visual system represents a straight line as a pattern of neurons firing in primary visual cortex. Suppose that a second group of neurons fires to represent a second line at a ninety-degree angle to the first line. A third group of neurons could summarize this statistical relationship between the two lines efficiently as a simple concept of “Angle.” The infant brain might encounter a hundred different pairs of intersecting line segments of varying length, thickness, and color, but conceptually they are all instances of “Angle,” each of which gets efficiently summarized by some smaller group of neurons. These summaries eliminate redundancy. In this manner, the brain separates statistical similarities from sensory differences.

In the same manner, the instances of the concept “Angle” are themselves part of other concepts. For example, an infant receives visual input about her mother’s face from many different vantage points: while nursing, while sitting face to face, in the morning and the evening. Her concept of “Angle” will be part of her concept “Eye” that summarizes the continuously changing lines and contours of her mother’s eyes seen at different angles and in different luminances. Different groups of neurons fire to represent the various instances of the concept “Eye,” allowing the infant to recognize those eyes as her mother’s eyes each time, regardless of the sensory differences.5

As we go from very specific to increasingly general concepts (in this example, from line to angle to eye), the brain creates similarities that are progressively more efficient summaries of the information. For example, “Angle” is an efficient summary with respect to lines but is a sensory detail with respect to eyes. The same logic works for the concepts “Nose” and “Ear” and so on. Together, these concepts are part of the concept “Face,” whose instances are yet more efficient summaries of the sensory regularities in facial features. Eventually, the infant’s brain forms summary representations for enough visual concepts that she can see one stable object, despite incredible variation in low-level sensory details. Think about it: each of your eyes transmits millions of tiny pieces of information to your brain in a moment, and you simply see “a book.”

This principle—finding similarities in the service of efficiency—doesn’t just describe the visual system; it also operates within each sensory system (sounds, smells, interoceptive sensations, and so on), and for patterns of different senses in combination. Consider a purely mental concept like “Mother.” As a baby nurses one morning, groups of neurons fire in her various sensory systems, in statistically related patterns, to represent the mother’s visual image, the sound of her voice, her scent, the tactile sensations of being held, an increase in energy from being fed, the sensations of a full tummy, plus the pleasure of feeding and being cuddled. All of these representations are interrelated, and their summary is represented elsewhere, in the pattern of firing within a smaller group of neurons, as a rudimentary, multisensory instance of “Mother.” During nursing again later in the day, other summaries of the concept “Mother” will be similarly created, using similar, but not identical, groupings of neurons. And as the infant swats at a hanging toy above the crib, watches the toy swing through the air, and feels any associated tactile and interoceptive sensations, all of which are linked with a decrease in energy due to her movement, her brain summarizes these statistically related events as a rudimentary, multisensory instance of the concept “Self.”6

In this manner, an infant’s brain distills widely dispersed firing patterns for individual senses into one multisensory summary. This process reduces redundancy and represents the information in a minimal, efficient form for future use. It’s like dehydrated food that takes up less space but needs to be reconstituted before eating. This efficiency makes it practical for the brain to form rudimentary concepts such as “Mother” and “Self” that result from learning.

As a child gets older, her brain begins to predict more effectively using her concepts—but of course she still makes mistakes. When Sophia was three years old, for instance, we were in a shopping mall when she spotted a man ahead of us, with his hair in dreadlocks. She knew three people with dreadlocks at that time: her beloved Uncle Kevin, who is medium height and dark-skinned; an acquaintance who also has dark skin but is quite tall and broad-shouldered; and one of our neighbors, who is female and short with light skin. In that moment, Sophia’s brain was furiously launching multiple, competing predictions that could potentially become her experience. For the sake of argument, let’s say this included 100 predictions of Uncle Kevin from Sophia’s past experience, from different places and times and angles, along with 14 predictions of her acquaintance, and 60 predictions of her female neighbor. Each prediction was assembled from bits and pieces of patterns in her brain, all mixed and matched. These 174 predictions were also accompanied by many other predictions of people, places, and things from Sophia’s prior experiences—anything at all that was statistically related to the scene in front of her.

In total, Sophia’s population of 174 predictions is what we’ve been calling a “concept” (in this case, the concept “People with Dreadlocks”). When we say these instances are “grouped” as a concept, be aware that there is no “grouping” stored anywhere in Sophia’s brain. Any given concept is not represented in the information flow among one single set of neurons; each concept is itself a population of instances, and these instances are represented in different patterns of neurons on each occasion. (This is degeneracy.) The concept is constructed in the moment, ad hoc. And among these myriad instances, one of them will be the most similar (by pattern matching) to Sophia’s current situation. That’s what we’ve been calling the “winning instance.”7
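For readers who like to see the logic spelled out, selecting a winning instance by pattern matching can be sketched in a few lines of Python. This is a deliberately crude illustration under stated assumptions, not a model of real neurons: each prediction is reduced to a set of features, similarity is measured as simple set overlap (the Jaccard index), and the feature labels, including what Sophia sensed that day, are hypothetical.

```python
from typing import Dict, Set


def similarity(prediction: Set[str], sensed: Set[str]) -> float:
    """Jaccard overlap: shared features divided by all features involved."""
    union = prediction | sensed
    return len(prediction & sensed) / len(union) if union else 0.0


def winning_instance(predictions: Dict[str, Set[str]], sensed: Set[str]) -> str:
    """Return the name of the prediction most similar to the sensory input."""
    return max(predictions, key=lambda name: similarity(predictions[name], sensed))


# One illustrative prediction per person (the text imagines many of each).
predictions = {
    "Uncle Kevin": {"dreadlocks", "dark skin", "medium height", "male"},
    "tall acquaintance": {"dreadlocks", "dark skin", "tall", "broad shoulders", "male"},
    "neighbor": {"dreadlocks", "light skin", "short", "female"},
}

# Hypothetical sensory input from the stranger in the mall.
sensed = {"dreadlocks", "dark skin", "medium height", "male", "unfamiliar jacket"}

winner = winning_instance(predictions, sensed)
```

Notice that the best match wins even when it is wrong. The sketch has no way to conclude "none of the above," which is roughly the position a three-year-old's brain is in when its concepts are still sparse.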

On that particular day, Sophia leaped out of her stroller, ran across the mall, and wrapped her little arms around the man’s leg, shouting, “Uncle KEVIN!” Her delight was short-lived, however, as Uncle Kevin was at home six hundred miles away. She looked up into a total stranger’s face and shrieked.*

The same general process occurs for purely mental concepts such as “Sadness.” A child hears the word “sad” spoken in three different situations. These three instances are represented in the child’s brain in bits and pieces. They are not “grouped together” in any concrete way. On a fourth occasion, the child sees a boy in her classroom crying, and a teacher uses the word “sad.” The child’s brain constructs the three prior instances as predictions, along with other predictions that are statistically similar in any way to the current situation. This collection of predictions is a concept created in the moment, by virtue of some purely mental similarity among the instances of “Sadness.” Once again, the prediction that is most similar to the current situation becomes her experience—an instance of emotion.8

…

It’s time for me to state directly what so far I have only implied. Two of the phenomena I’ve been discussing are actually one and the same. I’m speaking of concepts and predictions.

When your brain “constructs an instance of a concept,” such as an instance of “Happiness,” that is equivalent to saying your brain “issues a prediction” of happiness. When Sophia’s brain issued 100 predictions about Uncle Kevin, each one was an instance of the momentary concept “Uncle Kevin” that she formed before grabbing the stranger’s leg.9

I separated the ideas of predictions and concepts earlier to simplify some explanations. I could have used the word “prediction” throughout the book and never mentioned the word “concept,” or vice versa, but information transmission is easier to understand in terms of predictions flying across the brain, whereas knowledge is more readily understood in terms of concepts. Now that we’re discussing how concepts work in the brain, we must acknowledge that concepts are predictions.

Early in life, you build up concepts from detailed sensory input (as prediction error) from your body and the world. Your brain efficiently compresses the sensory input it receives, just like YouTube compresses video, extracting similarities out of differences, eventually creating an efficient, multisensory summary. Once your brain has learned a concept in this manner, it can run this process in reverse, expanding the similarities into differences to construct an instance of the concept, much as your computer or phone expands the incoming YouTube video for display. This is a prediction. Think of prediction as “applying” a concept, modifying the activity in your primary sensory and motor regions, and correcting or refining as needed.

Imagine that you’re in a shopping mall, as I was with my daughter, strolling from store to store. The mall is filled with sounds, people are bustling about, the shop windows are overflowing with tempting products for sale, and your brain is busy issuing thousands of simultaneous predictions as usual. “There is motion in front of me.” “There is motion to my left.” “My breathing is slowing down.” “My stomach is rumbling.” “I hear laughter.” “I am calm.” “I am lonely.” “I see my neighbor.” “I see that nice guy who works at the post office.” “I see my Uncle Kevin.” Let’s say that those last three predictions about people are instances of a concept for “Happiness,” having to do with feeling connected to friends. Your brain simultaneously constructs many instances of this concept, based on past experiences in similar situations when you have unexpectedly bumped into friends. Each instance has some probability of being correct at that moment.

Let’s give our focus to one of those instances, your prediction that you see your beloved Uncle Kevin unexpectedly in a shopping mall. Your brain issues this prediction because, at some time in the past, you saw Uncle Kevin in a similar situation and experienced sensations that you categorized as happiness. How well will this prediction match your incoming sensory inputs right now? If it matches better than all the other predictions, then you will experience this instance of “Happiness.” If not, then your brain will adjust the prediction, and you might experience an instance of “Disappointment.” Or if need be, your brain will make the prediction match the sensory input, and you will mistakenly perceive someone else to be your Uncle Kevin, as Sophia did in the shopping mall that day.

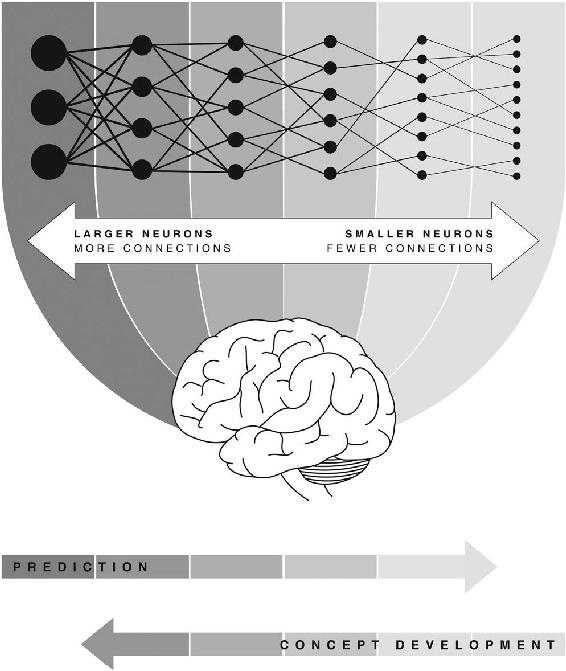

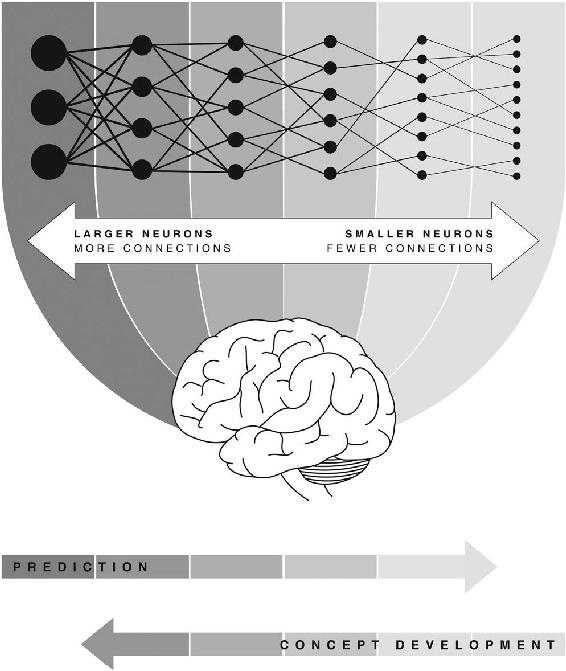

So there you are, standing in the mall, and your brain must determine whether its prediction of Uncle Kevin ultimately becomes your perception and directs your action, or whether a course correction is required. To determine the details, the brain unpacks the summary of all the sensory input into a gigantic cascade of more detailed predictions, like uncompressing a YouTube video for viewing, or adding water to dehydrated food to make it edible. This process, shown in figure 6-1, is the same one that builds up a concept from details, but in reverse.

For example, when the prediction of “Happiness” reaches the upper portions of the visual system, the prediction might unpack into details of Uncle Kevin’s appearance, say, whether he is facing toward you or away from you, and what clothing he is wearing. These details are themselves predictions based on probabilities (e.g., Uncle Kevin never wears plaid), so your brain can compare the simulation to actual sensory input and compute and resolve any prediction error. This resolution does not happen in a single step but in millions of bits and pieces (via the prediction loops discussed in chapter 4). Each visual detail is unpacked into even more detailed predictions in turn, for (say) colors, shirt texture, and so on, each of which involves more prediction loops and cascading and unpacking. The cascade ends in the brain’s primary visual cortex, which represents your lowest-level visual concepts in a tornado of ever-changing lines and edges.

Figure 6-1: The concept cascade. When you develop a concept (right to left), sensory input is compressed into efficient, multisensory summaries. When you construct an instance of a concept by prediction (left to right), those efficient summaries unpack into ever more detailed predictions, which are checked against actual sensory input at each stage.

Cascades begin—of all places—within our old friend the interoceptive network.* That’s where multisensory summaries are constructed in your brain. Cascades end in your primary sensory regions, where the tiniest details of your experience are represented, not just for vision as in our example but also for sound, touch, interoception, and the rest of your senses.

If one cascade of predictions accounts for the incoming sensory input—Uncle Kevin is indeed in front of you, his hair pulled back in a particular way, wearing a particular shirt, his voice sounding a particular way, your body in a particular state, and so on—then you have constructed an instance of “Happiness” having to do with feeling connected to friends. That is, the entire cascade is that instance of the concept “Happiness” as you glimpse your uncle. You are feeling happy.

The concept cascade reveals the neural reasons for several of the claims I’ve made earlier in the book. First, your cascade of predictions explains why an experience like happiness feels triggered rather than constructed. You’re simulating an instance of “Happiness” even before categorization is complete. Your brain is preparing to execute movements in your face and body before you feel any sense of agency for moving, and is predicting your sensory input before it arrives. So emotions seem to be “happening to” you, when in fact your brain is actively constructing the experience, held in check by the state of the world and your body.10

Second, the cascade explains a statement I made in chapter 4, that every thought, memory, emotion, or perception that you construct in your life includes something about the state of your body. Your interoceptive network, which regulates your body budget, is launching these cascades. Every prediction you make, and every categorization your brain completes, is always in relation to the activity of your heart and lungs, your metabolism, your immune function, and the other systems that contribute to your body budget.

Third, the cascade also highlights the neural advantages of high emotional granularity, the phenomenon (described in chapter 1) of constructing more precise emotional experiences. When your brain constructs multiple instances of “Happiness” at seeing Uncle Kevin, it must sort out which one best resembles your current sensory input, to become the winning instance. This is a big job for your brain with some metabolic cost. But imagine if the English language had a more specific word than “happiness” for feeling attachment to a close friend, such as the Korean word jeong (정). Your brain would require less effort to construct with this more precise concept. Even better, if you had a special word for “happiness at feeling close to my Uncle Kevin,” your brain could be even more efficient at determining the winning instance. On the other hand, if you were constructing with the very broad concept “Pleasant Feeling” rather than “Happiness,” your brain’s job would be harder. Preciseness leads to efficiency; this is a biological payoff of higher emotional granularity.11

Finally, we’re seeing population thinking in action in the brain, because multiple predictions make up a concept in the moment. You do not construct just one instance of “Happiness” and experience it. You construct a large population of predictions, each of which has its own cascade. That population is a concept. It doesn’t represent the sum total of everything you know about happiness, just summaries that fit your goal—bumping into a friend—in a similar situation. In a different, happiness-related situation, like receiving a gift or hearing your favorite song, your interoceptive network would launch very different summaries (and cascades) representing “Happiness” in that moment. These dynamic constructions are another example of efficiency in the brain.

Scientists have known for some time that knowledge from the past, wired into brain connections, creates simulated experiences of the future, such as imagination. Other scientists focus on how this knowledge creates experiences of the present moment. The Nobel laureate and neuroscientist Gerald M. Edelman called your experiences “the remembered present.” Today, thanks to advances in neuroscience, we can see that Edelman was correct. An instance of a concept, as an entire brain state, is an anticipatory guess about how you should act in the present moment and what your sensations mean.12

My description of the concept cascade is just a sketch of a much larger parallel process. In real life, your brain never categorizes 100 percent with one concept and 0 percent with others. Predictions are more probabilistic than that. Your brain launches thousands of predictions simultaneously in every moment, in a storm of probabilities, and never lingers on a single winning instance. When you construct one hundred varied, simultaneous predictions of Uncle Kevin in a moment, each one is a cascade. (If you’re interested in more details about the neuroscience, see appendix D.)13

…

Each time you categorize with concepts, your brain creates many competing predictions while being bombarded by sensory input. Which predictions should be the winners? Which sensory input is important, and which is just noise? Your brain has a network to help resolve these uncertainties, known as your control network. This is the same network that transforms an infant’s “lantern” of attention into the adult “spotlight” you have now.14

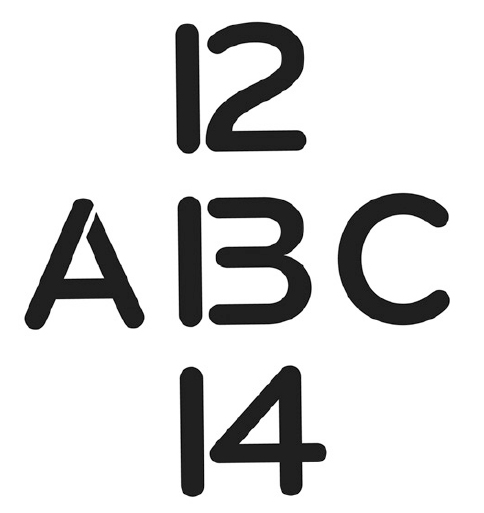

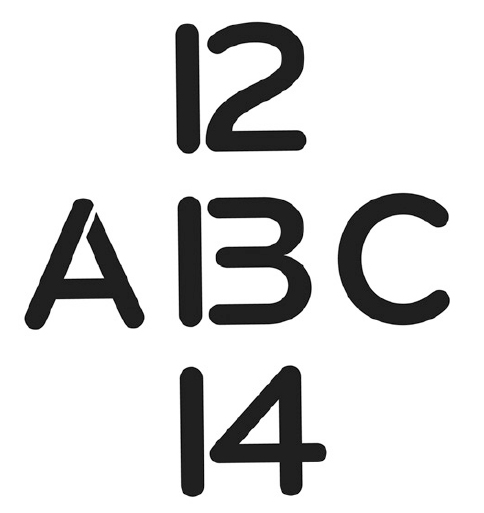

The famous optical illusion in figure 6-2 illustrates your control network in action. Depending on the context, whether you read horizontally or vertically, you’ll perceive the central symbol as a “B” or a “13.” Your control network helps select the winning concept (letter or number?) in each moment.15

Figure 6-2: The control network helps the brain select among potential categorizations: in this case, “B” versus “13.”

Your control network also helps construct instances of emotion. Suppose you’ve recently argued with your significant other, and now you’re having chest pain. Is it a heart attack, indigestion, an experience of anxiety, or a perception that your partner’s being unreasonable? Your interoceptive network will launch hundreds of competing instances of different concepts, each a brain-wide cascade, to resolve this quandary. Your control network assists in efficiently constructing and selecting among the candidate instances so your brain can pick a winner. It helps neurons to participate in certain constructions rather than others, and keeps some concept instances alive while suppressing others. The result is akin to natural selection, in which the instances most suitable to the current environment survive to shape your perception and action.16

The name “control network” is unfortunate because it implies a central position of authority, as if the network were making decisions and conducting the process. This is not the case. Your control network is more of an optimizer. It constantly tinkers with the information flow among neurons, ramping up the firing rate of some neurons and slowing down others, which moves sensory input in and out of your attentional spotlight, making some predictions fit while others become irrelevant. It’s like a car-racing team that constantly optimizes the engine and body to make a car slightly faster and safer. This tinkering ultimately helps your brain simultaneously to regulate your body budget, produce a stable perception, and launch an action.17

Your control network helps select between emotion and non-emotion concepts (is this anxiety or indigestion?), between different emotion concepts (is this excitement or fear?), between different goals for an emotion concept (in fear, should I escape or attack?), and between different instances (when running to escape, should I scream or not?). When you’re watching a movie, your control network might favor your visual and auditory systems, transporting you into the story. At other times it might background the traditional five senses in favor of more intense affect, resulting in an experience of emotion. Much of this tinkering happens outside your awareness.18

Some scientists refer to the control network as an “emotion regulation” network. They assume that emotion regulation is a cognitive process that exists separately from emotion itself, say, when you’re pissed off at your boss but refrain from punching him. From the brain’s perspective, however, regulation is just categorization. When you have an experience that feels like your so-called rational side is tempering your emotional side—a mythical arrangement that you’ve learned is not respected by brain wiring—you are constructing an instance of the concept “Emotion Regulation.”19

Your control network and interoceptive network, as you’ve now seen, are critical for constructing emotion. Moreover, these two core networks together contain most of the major hubs for communication throughout the entire brain. Think about the world’s largest airports that serve multiple airlines. A traveler in JFK International Airport in New York can switch between American Airlines and British Airways because the two airlines overlap there. Likewise, information can pass efficiently between different networks in your brain via the major hubs in the interoceptive and control networks.20

These major hubs help to synchronize so much of your brain’s information flow that they might even be a prerequisite for consciousness. If any of these hubs become damaged, your brain is in big trouble: depression, panic disorder, schizophrenia, autism, dyslexia, chronic pain, dementia, Parkinson’s disease, and attention deficit hyperactivity disorder are all associated with hub damage.21

The major hubs in your interoceptive and control networks make possible what I describe in chapter 4, that your everyday decisions are driven by your body-budgeting regions—your inner, loudmouthed, mostly deaf scientist who views the world through affect-colored glasses. You see, your brain’s body-budgeting regions are major hubs. Through their massive connections, they broadcast predictions that alter what you see, hear, and otherwise perceive and do. That’s why, at the level of brain circuitry, no decision can be free of affect.

…

I’ve said several times that the brain acts like a scientist. It forms hypotheses through prediction and tests them against the “data” of sensory input. It corrects its predictions by way of prediction error, like a scientist adjusts his or her hypotheses in the face of contrary evidence. When the brain’s predictions match the sensory input, this constitutes a model of the world in that instant, just like a scientist judges that a correct hypothesis is the path to scientific certainty.
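The correction step can be sketched as a simple error-driven update, in which a prediction moves toward each new observation by a fraction of the mismatch. This is a textbook-style delta rule offered only as an analogy; the function name `update`, the `learning_rate` parameter, and the numbers are assumptions for illustration, not a claim about how neurons compute.

```python
def update(prediction: float, observation: float, learning_rate: float = 0.5) -> float:
    """Nudge the prediction toward the observation by a fraction of the error."""
    error = observation - prediction   # prediction error: what was sensed minus what was guessed
    return prediction + learning_rate * error


prediction = 0.0   # initial hypothesis
errors = []        # size of the mismatch before each correction
for observation in [10.0, 10.0, 10.0, 10.0]:
    errors.append(abs(observation - prediction))
    prediction = update(prediction, observation)
```

Each pass through the loop halves the remaining error, so the hypothesis drifts toward the "data" without ever being replaced wholesale.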

Several years ago, my family was eating dinner in our kitchen in Boston when suddenly, simultaneously, all of us had a sensation that was entirely new. Our chairs tipped backward for a moment, then righted themselves, but in a curvy sort of way like cresting an ocean wave. This completely novel experience left us in a state of experiential blindness, so we started forming hypotheses. Did we all simply lose our balance momentarily? No, that wasn’t likely to happen to three people at once. Did a car crash outside the house? No, we hadn’t heard anything. Had a building exploded far away, out of audible range, making the ground tremble? Maybe, but the feeling wasn’t so much a tremble as a swoop. What about an earthquake? Maybe, but we’d never been in an earthquake before, and ours had lasted only one second, much shorter than earthquakes we’d seen in disaster movies. However, the rising and falling shape, an almost sinusoidal motion, was consistent with our understanding of earthquakes. An earthquake was the best match to our knowledge, so we settled on that hypothesis. A few hours later, we learned that a magnitude 4.5 earthquake had struck in nearby Maine and rippled throughout New England.

This same process of elimination that my family performed consciously, the brain does naturally, automatically, and extremely rapidly. Your brain has a mental model of the world as it will be in the next moment, developed from past experience. This is the phenomenon of making meaning from the world and the body using concepts. In every waking moment, your brain uses past experience, organized as concepts, to guide your actions and give your sensations meaning.

I’ve been calling this process “categorization,” but it’s known by many other names in science. Experience. Perception. Conceptualization. Pattern completion. Perceptual inference. Memory. Simulation. Attention. Morality. Mental inference. In the folk psychology of daily life, these words mean different things, and scientists often study them as different phenomena, assuming each is produced by a distinct process in the brain. But really, they arise via the same neural processes.

When I feel cheerful as my nephew Jacob exuberantly wraps his little arms around my neck for a big hug, this is conventionally called “an emotional experience.” When I see happiness in the big smile on his face as he is hugging me, I am no longer experiencing but “perceiving.” When I recollect the hug and how warm it made me feel, I am no longer perceiving but “remembering.” When I contemplate whether I was feeling happy or sentimental, I am no longer remembering but “categorizing.” My view is that these terms don’t mark sharp distinctions, and they can all be accounted for with the same brain ingredients for making meaning.

To make meaning is to go beyond the information given. A fast-beating heart has a physical function, such as getting enough oxygen to your limbs so you can run, but categorization allows it to become an emotional experience such as happiness or fear, giving it additional meaning and functions understood within your culture. When you experience affect with unpleasant valence and high arousal, you make meaning from it depending on how you categorize: Is it an emotional instance of fear? A physical instance of too much caffeine? A perception that the guy talking to you is a jerk? Categorization bestows new functions on biological signals, not by virtue of their physical nature but by virtue of your knowledge and the context around you in the world. If you categorize the sensations as fear, you are making meaning that says, “Fear is what caused these physical changes in my body.” When the concepts involved are emotion concepts, your brain constructs instances of emotion.22

When you perceived the blobby picture in chapter 2 as a bee, you made meaning from the visual sensations. Your brain accomplished this feat by predicting a bee and simulating lines to connect the blobs. Prior experience—seeing the real bee photograph—encouraged your brain to leave the prediction uncorrected. As a result, you perceived a bee in the blobs. Your prior experiences shape the meaning of momentary sensations. This same miraculous process makes emotion.

Emotions are meaning. They explain your interoceptive changes and corresponding affective feelings, in relation to the situation. They are a prescription for action. The brain systems that implement concepts, such as the interoceptive network and the control network, are the biology of meaning-making.

So, now you know how emotions are made in the brain. We predict and categorize. We regulate our body budgets, as any animal does, but wrap this regulation in purely mental concepts like “Happiness” and “Fear” that we construct in the moment. We share these purely mental concepts with other adults, and we teach them to our children. We make a new kind of reality and live in it every day, mostly unaware that we are doing so. That’s the topic of the next chapter.