1

Once upon a time, in the 1980s, I thought I would be a clinical psychologist. I headed into a Ph.D. program at the University of Waterloo, expecting to learn the tools of the trade as a psychotherapist and one day treat patients in a stylish yet tasteful office. I was going to be a consumer of science, not a producer. I certainly had no intention of joining a revolution to unseat basic beliefs about the mind that have existed since the days of Plato. But life sometimes tosses little surprises in your direction.

It was in graduate school that I felt my first tug of doubt about the classical view of emotion. At the time, I was researching the roots of low self-esteem and how it leads to anxiety or depression. Numerous experiments showed that people feel depressed when they fail to live up to their own ideals, but when they fall short of a standard set by others, they feel anxious. My first experiment in grad school was simply to replicate this well-known phenomenon before building on it to test my own hypotheses. In the course of this experiment, I asked a large number of volunteers if they felt anxious or depressed using well-established checklists of symptoms.1

I’d done more complicated experiments as an undergraduate student, so this one should have been a piece of cake. Instead, it crashed and burned. My volunteers did not report anxious or depressed feelings in the expected pattern. So I tried to replicate a second published experiment, and it failed too. I tried again, over and over, each experiment taking months. After three years, all I’d achieved was the same failure eight times in a row. In science, experiments often don’t replicate, but eight consecutive failures is an impressive record. My internal critic taunted me: not everyone is cut out to be a scientist.

When I looked closely at all the evidence I had collected, however, I noticed something consistently odd across all eight experiments. Many of my subjects appeared to be unwilling, or unable, to distinguish between feeling anxious and feeling depressed. Instead, they had indicated feeling both or neither; rarely did a subject report feeling just one. This made no sense. Everybody knows that anxiety and depression, when measured as emotions, are decidedly different. When you’re anxious, you feel worked up, jittery, like you’re worried something bad will happen. In depression you feel miserable and sluggish; everything seems horrible and life is a struggle. These emotions should leave your body in completely opposite physical states, and so they should feel different and be trivial for any healthy person to tell apart. Nevertheless, the data declared that my test subjects weren’t doing so. The question was . . . why?

As it turned out, my experiments weren’t failing after all. My first “botched” experiment actually revealed a genuine discovery—that people often did not distinguish between feeling anxious and feeling depressed. My next seven experiments hadn’t failed either; they’d replicated the first one. I also began noticing the same effect lurking in other scientists’ data. After completing my Ph.D. and becoming a university professor, I continued pursuing this mystery. I directed a lab that asked hundreds of test subjects to keep track of their emotional experiences for weeks or months as they went about their lives. My students and I inquired about a wide variety of emotional experiences, not just anxious and depressed feelings, to see if the discovery generalized.

These new experiments revealed something that had never been documented before: everyone we tested used the same emotion words like “angry,” “sad,” and “afraid” to communicate their feelings but not necessarily to mean the same thing. Some test subjects made fine distinctions with their word use: for example, they experienced sadness and fear as qualitatively different. Other subjects, however, lumped together words like “sad” and “afraid” and “anxious” and “depressed” to mean “I feel crappy” (or, more scientifically, “I feel unpleasant”). The effect was the same for pleasant emotions like happiness, calmness, and pride. After testing over seven hundred American subjects, we discovered that people vary tremendously in how they differentiate their emotional experiences.

A skilled interior designer can look at five shades of blue and distinguish azure, cobalt, ultramarine, royal blue, and cyan. My husband, on the other hand, would call them all blue. My students and I had discovered a similar phenomenon for emotions, which I described as emotional granularity.2

Here’s where the classical view of emotion entered the picture. Emotional granularity, in terms of this view, must be about accurately reading your internal emotional states. Someone who distinguished among different feelings using words like “joy,” “sadness,” “fear,” “disgust,” “excitement,” and “awe” must be detecting physical cues or reactions for each emotion and interpreting them correctly. A person exhibiting lower emotional granularity, who uses words like “anxious” and “depressed” interchangeably, must be failing to detect these cues.

I began wondering if I could teach people to improve their emotional granularity by coaching them to recognize their emotional states accurately. The key word here is “accurately.” How can a scientist tell if someone who says “I’m happy” or “I’m anxious” is accurate? Clearly, I needed some way to measure an emotion objectively and then compare it to what the person reports. If a person reports feeling anxious, and the objective criteria indicate that he is in a state of anxiety, then he is accurately detecting his own emotion. On the other hand, if the objective criteria indicate that he is depressed or angry or enthusiastic, then he’s inaccurate. With an objective test in hand, the rest would be simple. I could ask a person how he feels and compare his answer to his “real” emotional state. I could correct any of his apparent mistakes by teaching him to better recognize the cues that distinguish one emotion from another and improve his emotional granularity.

Like most students of psychology, I had read that each emotion is supposed to have a distinct pattern of physical changes, roughly like a fingerprint. Each time you grasp a doorknob, the fingerprints that you leave behind may vary depending on the firmness of your grip, how slippery the surface is, or how warm and pliable your skin is at that moment. Nevertheless, your fingerprints look similar enough each time to identify you uniquely. The “fingerprint” of an emotion is likewise assumed to be similar enough from one instance to the next, and in one person to the next, regardless of age, sex, personality, or culture. In a laboratory, scientists should be able to tell whether someone is sad or happy or anxious just by looking at physical measurements of a person’s face, body, and brain.

I felt confident that these emotion fingerprints could provide the objective criteria I needed to measure emotion. If the scientific literature was correct, then assessing people’s emotional accuracy would be a breeze. But things did not turn out quite as I expected.

According to the classical view of emotion, our faces hold the key to assessing emotions objectively and accurately. A primary inspiration for this idea is Charles Darwin’s book The Expression of the Emotions in Man and Animals, where he claimed that emotions and their expressions were an ancient part of universal human nature. All people, everywhere in the world, are said to exhibit and recognize facial expressions of emotion without any training whatsoever.3

So, I thought that my lab should be able to measure facial movements, assess our test subjects’ true emotional state, compare it to their verbal reports of emotion, and calculate their accuracy. If subjects made a pouting expression in the lab, for instance, but did not report feeling sad, we could train them to recognize the sadness they must be feeling. Case closed.

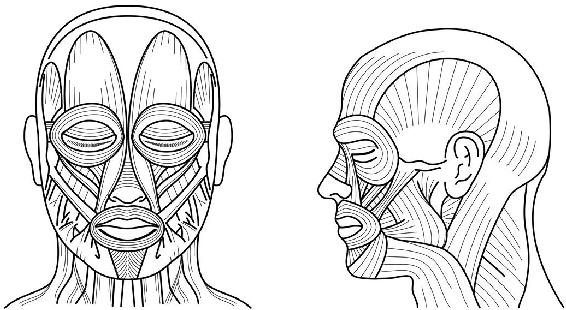

The human face is laced with forty-two small muscles on each side. The facial movements that we see each other make every day—winks and blinks, smirks and grimaces, raised and wrinkled brows—occur when combinations of facial muscles contract and relax, causing connective tissue and skin to move. Even when your face seems completely still to the naked eye, your muscles are still contracting and relaxing.4

According to the classical view, each emotion is displayed on the face as a particular pattern of movements—a “facial expression.” When you’re happy, you’re supposed to smile. When you’re angry, you’re supposed to furrow your brow. These movements are said to be part of the fingerprint of their respective emotions.

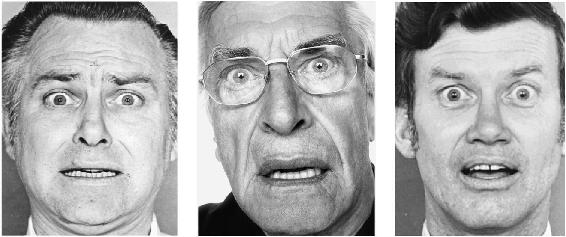

Back in the 1960s, the psychologist Silvan S. Tomkins and his protégés Carroll E. Izard and Paul Ekman decided to test this in the lab. They created sets of meticulously posed photographs, such as those in figure 1-2, to represent six so-called basic emotions they believed had biological fingerprints: anger, fear, disgust, surprise, sadness, and happiness. These photos, which featured actors who were carefully coached, were supposed to be the clearest examples of facial expressions for these emotions. (They might look exaggerated or artificial to you, but they were designed this way on purpose, because Tomkins believed they gave the strongest, clearest signals for emotion.)5

Using posed photos like these, Tomkins and his crew applied an experimental technique to study how well people “recognize” emotional expressions, or, more precisely, how well they perceive facial movements as expressions of emotion. Hundreds of published experiments have used this method, and it’s still considered the gold standard today. A test subject is given a photograph and a set of emotion words, as in figure 1-3.

The subject then chooses the word that best matches the face. In this case, the intended word is “Surprise.” Or, using a slightly different setup, a test subject is given two posed photos and a brief story, as in figure 1-4, and then picks which face best matches the story. In this case, the intended face is on the right.6

This research technique—let’s call it the basic emotion method—revolutionized the scientific study of what Tomkins’s group called “emotion recognition.” Using this method, scientists showed that people from around the world could consistently match the same emotion words (translated into the local language) to posed faces. In one famous study, Ekman and his colleagues traveled to Papua New Guinea and ran experiments with a local population, the Fore people, who had little contact with the Western world. Even this remote tribe could consistently match the faces to the expected emotion words and stories. In later years, scientists ran similar studies in many other countries such as Japan and Korea. In each case, subjects easily matched the posed scowls, pouts, smiles, and so on to the provided emotion words or stories.7

From this evidence, scientists concluded that emotion recognition is universal: no matter where you are born or grow up, you should be able to recognize American-style facial expressions like those in the photos. The only way expressions could be universally recognized, the reasoning went, is if they are universally produced: thus, facial expressions must be reliable, diagnostic fingerprints of emotion.8

Other scientists, however, worried that the basic emotion method was too indirect and subjective to reveal emotion fingerprints because it involves human judgment. A more objective technique, called facial electromyography (EMG), removes human perceivers altogether. Facial EMG places electrodes on the surface of the skin to detect the electrical signals that make facial muscles move. It precisely identifies the parts of the face as they move, how much, and how often. In a typical study, test subjects wear electrodes over their eyebrows, forehead, cheeks, and jaw as they view films or photos, or as they remember or imagine situations, to evoke a variety of emotions. Scientists record the electrical changes in muscle activity and calculate the degree of movement in each muscle during each emotion. If people move the same facial muscles in the same pattern each time they experience a given emotion—scowling in anger, smiling in happiness, pouting in sadness, and so on—and only when they experience that emotion, then the movements might be a fingerprint.9

As it turns out, facial EMG presents a serious challenge to the classical view of emotion. In study after study, the muscle movements do not reliably indicate when someone is angry, sad, or fearful; they don’t form predictable fingerprints for each emotion. At best, facial EMG reveals that these movements distinguish pleasant versus unpleasant feeling. Even more damning, the facial movements recorded in these studies do not reliably match the posed photos created for the basic emotion method.10

Let’s take a moment and consider the implications of these findings. Hundreds of experiments have shown that people worldwide can match emotion words to so-called expressions of emotion, posed by actors who aren’t actually feeling those emotions. However, those expressions can’t be consistently and specifically detected by objective measures of facial muscle movements when people are actually feeling emotion. We all move our facial muscles all the time, of course, and when we look at each other, we effortlessly see emotion in some of these movements. Nevertheless, from a purely objective standpoint, when scientists measure just the muscle movements themselves, those movements do not conform to the photographs.

It’s conceivable that facial EMG is too limited to capture all the meaningful actions in a face during an emotional experience. A scientist can place about six electrodes on each side of the face before a test subject starts to feel uncomfortable, too few to capture all forty-two facial muscles meaningfully. So scientists also employ an alternative technique, facial action coding, based on the Facial Action Coding System (FACS), in which trained observers laboriously classify a subject’s individual facial movements as they occur. It’s less objective than facial EMG, since it relies on human perceivers, but presumably more objective than matching words to posed faces in the basic emotion method. Nevertheless, the movements observed during facial action coding also don’t consistently match the posed photos.11

These same inconsistencies show up in infants. If facial expressions are universal, then babies should be even more likely than adults to express anger with a scowl and sadness with a pout, because they’re too young to learn rules of social appropriateness. And yet when scientists observe infants in situations that should evoke emotion, the infants do not make the expected expressions. For example, the developmental psychologists Linda A. Camras and Harriet Oster and their colleagues videotaped babies from various cultures, employing a growling gorilla toy to startle them (to induce fear) or restraining their arm (to induce anger). Camras and Oster found, using FACS, that the range of babies’ facial movements in the two situations was indistinguishable. Nevertheless, when adults watched these videos, they somehow identified the infants in the gorilla film as afraid and infants in the arm restraint film as angry, even when Camras and Oster blanked out the babies’ faces electronically! The adults were distinguishing fear from anger based on the context, without seeing facial movements at all.12

Don’t get me wrong: newborns and young infants move their faces in meaningful ways. They make many distinctive facial movements when the situation implies that they might be interested or puzzled, or when they feel distress in response to pain or distaste in response to offending smells and tastes. But newborns don’t show differentiated, adult-like expressions like the photographs from the basic emotion method.13

Other scientists also have demonstrated, as Camras and Oster did, that you take tremendous information from the surrounding context. They graft photographs of faces and bodies that don’t belong together, like an angry scowling face attached to a body that’s holding a dirty diaper, and their test subjects nearly always identify the emotion appropriate to the body, not the face—in this case, disgust rather than anger. Faces are constantly moving, and your brain relies on many different factors at once—body posture, voice, the overall situation, your lifetime of experience—to figure out which movements are meaningful and what they mean.14

When it comes to emotion, a face doesn’t speak for itself. In fact, the poses of the basic emotion method were not discovered by observing faces in the real world. Scientists stipulated those facial poses, inspired by Darwin’s book, and asked actors to portray them. And now these faces are simply assumed to be the universal expressions of emotion.15

But they aren’t universal. To further demonstrate this, my lab conducted a study using photos from a group of emotion experts—accomplished actors. The photos came from the book In Character: Actors Acting, in which actors portray emotions by posing their faces to match written scenarios. We divided our U.S. test subjects into three groups. The first group read only the scenarios, for example, “He just witnessed a shooting on his quiet, tree-shaded block in Brooklyn.” A second group saw only the facial configurations, such as Martin Landau’s pose for the shooting scenario (figure 1-6, center). A third group saw the scenarios and the faces. In each case, we handed subjects a short list of emotion words to categorize whatever emotion they saw.16

For the shooting scenario I just mentioned, 66 percent of subjects who read the scenario alone or with Landau’s face rated the scenario as a fearful situation. But for subjects who saw Landau’s face alone, devoid of context, only 38 percent of them rated it as fear and 56 percent rated it as surprise. (Figure 1-6 compares Landau’s facial configuration to basic emotion method photos for “fear” and “surprise.” Does Landau look afraid or surprised? Or both?)

Figure 1-6: Actor Martin Landau (center) flanked by basic emotion method faces for fear (left) and surprise (right)

Other actors’ poses for fear were strikingly different from Landau’s. In one case, the actress Melissa Leo portrayed fear for the scenario: “She is trying to decide if she should tell her husband about a rumor going around that she is gay before he hears it from someone else.” Her mouth is closed and downturned, and her brow is slightly knitted. Nearly three-quarters of our test subjects who saw her face alone rated it as sad, but when presented with the scenario, 70 percent of subjects rated her face as displaying fear.17

This sort of variation held true for every emotion that we studied. An emotion like “Fear” does not have a single expression but a diverse population of facial movements that vary from one situation to the next.* (Think about it: When is the last time an actor won an Academy Award for pouting when sad?)

This may seem obvious once you pause to consider your own emotional experiences. When you experience an emotion such as fear, you might move your face in a variety of ways. While cowering in your seat at a horror movie, you might close your eyes or cover them with your hands. If you’re uncertain whether a person directly in front of you could harm you, you might narrow your eyes to see the person’s face better. If danger is potentially lurking around the next corner, your eyes might widen to improve your peripheral vision. “Fear” takes no single physical form. Variation is the norm. Likewise, happiness, sadness, anger, and every other emotion you know is a diverse category, with widely varying facial movements.18

If facial movements have so much variation within an emotion category like “Fear,” you might wonder why we find it so natural to believe that a wide-eyed face is the universal fear expression. The answer is that it’s a stereotype, a symbol that fits a well-known theme for “Fear” within our culture. Preschools teach these stereotypes to children: “People who scowl are angry. People who pout are sad.” They are cultural shorthands or conventions. You see them in cartoons, in advertisements, in the faces of dolls, in emojis—in an endless array of imagery and iconography. Textbooks teach these stereotypes to psychology students. Therapists teach them to their patients. The media spreads them widely throughout the Western world. “Now, wait just a minute,” you might be thinking. “Is she saying that our culture has created these expressions, and we all have learned them?” Well . . . yes. And the classical view perpetuates these stereotypes as if they are authentic fingerprints of emotion.

To be sure, faces are instruments of social communication. Some facial movements have meaning, but others do not, and right now, we know precious little about how people figure out which is which, other than that context is somehow crucial (body language, social situation, cultural expectation, etc.). When facial movements do convey a psychological message—say, raising an eyebrow—we don’t know if the message is always emotional, or even if its meaning is the same each time. If we put all the scientific evidence together, we cannot claim, with any reasonable certainty, that each emotion has a diagnostic facial expression.19

In my search for unique fingerprints of emotion, I clearly needed a more reliable source than the human face, so next I looked to the human body. Perhaps some telling changes in heart rate, blood pressure, and other body functions would provide the necessary fingerprints to teach people to recognize their emotions more accurately.

Some of the strongest experimental support for bodily fingerprints comes from a famous study by Paul Ekman, the psychologist Robert W. Levenson, and their colleague Wallace V. Friesen, published in the journal Science in 1983. They hooked up test subjects to machines to measure changes in the autonomic nervous system: variations in heart rate, temperature, and skin conductance (a measure of sweat). They also measured variations in arm tension, rooted in the skeletomotor nervous system. They then used an experimental technique to evoke anger, sadness, fear, disgust, surprise, and happiness, and observed the physical changes during each emotion. After analyzing the data, Ekman and his colleagues concluded that they had measured clear and consistent changes in these bodily responses, relating them to particular emotions. This study seemingly established objective, biological fingerprints in the body for each of the studied emotions, and today it remains a classic in the scientific literature.20

The famous 1983 study evoked emotion in a curious way—by having test subjects make and hold a facial pose from the basic emotion method. To evoke sadness, for example, a subject would frown for ten seconds. To evoke anger, a subject would scowl. While face-posing, subjects could use a mirror and were coached by Ekman himself to move particular facial muscles.21

The idea that a posed, so-called facial expression can trigger an emotional state is known as the facial feedback hypothesis. Allegedly, contorting your face into a particular configuration causes the specific physiological changes associated with that emotion in your body. Try it yourself. Knit your brows and pout for ten seconds—do you feel sad? Smile broadly. Do you feel happier? The facial feedback hypothesis is highly controversial—there is wide disagreement on whether a full-blown emotional experience can be evoked this way.22

The 1983 study did, in fact, observe bodily changes as people posed the required facial configurations. This is a remarkable finding: just posing a particular facial configuration changed the test subjects’ peripheral nervous system activity, even while they were comfortably motionless in a chair. Their fingertips were warmer when posing a scowl (anger pose). Their heartbeats were faster when posing scowls, wide-eyed startle (fear pose), and pouts (sad pose) when compared to the poses for happiness, surprise, and disgust. The remaining two measures, skin conductance and arm tension, did not distinguish one facial configuration from another.23

Even so, you must take some additional steps before you can claim that you’ve found a bodily fingerprint for an emotion. For one thing, you must show that the response during one emotion, say, anger, is different from that of other emotions—that is, it’s specific to instances of anger. Here, the 1983 study starts having some difficulty. It showed some specificity for anger but not for the other emotions tested. That means the bodily responses for different emotions were too similar to be distinct fingerprints.

In addition, you must show that no other explanations can account for your results. Then, and only then, can you claim to have found physical fingerprints for anger, sadness, and the rest. The 1983 study falls short here: it is subject to an alternative explanation, because the test subjects were given instructions for how to pose their faces. Western subjects could conceivably identify most of the target emotions from these instructions. This understanding can actually produce the heart rate and other physical changes Ekman and colleagues observed, a fact that was unknown when these studies were conducted. This alternative explanation is borne out by their later experiment with an Indonesian people, the Minangkabau of West Sumatra. These volunteers had less understanding of Western emotions and did not show the same physical changes as Western test subjects; they also reported feeling the expected emotion much less frequently than the Western subjects did.24

Other subsequent research has evoked emotions using a variety of different methods but has not replicated the original physiological differences observed in the 1983 paper. Quite a few studies employ horror movies, tearful chick flicks, and other evocative material to bring on particular emotions, while scientists measure subjects’ heart rate, respiration, and other bodily functions. Many such studies found great variability in physical measurements, meaning no clear pattern of bodily changes that distinguished emotions. In other studies, scientists did find distinguishing patterns, but different studies often found different patterns, even when using exactly the same film clips. In other words, when studies distinguished anger from sadness from fear, they did not always replicate one another, implying that the instances of anger, sadness, and fear cultivated in one study were different from those cultivated in another.25

When faced with a large collection of diverse experiments like this, it’s hard to extract a consistent story. Fortunately, scientists have a technique to analyze all the data together and reach a unified conclusion. It’s called a “meta-analysis.” Scientists comb through large numbers of experiments conducted by different researchers, combining their results statistically. As a simple example, suppose you wanted to check if increased heart rate is part of the bodily fingerprint of happiness. Rather than run your own experiment, you could do a meta-analysis of other experiments that measured heart rate during happiness, even incidentally (e.g., the study could be about the relationship between sex and heart attacks and have nothing centrally to do with emotion). You would search for all the relevant scientific papers, collect the relevant statistics from them, and analyze them en masse to test the hypothesis.
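To make the idea concrete, here is a minimal sketch in Python of one common way to combine results statistically: an inverse-variance weighted average, in which more precise studies count for more. The three studies, their numbers, and the function name `pool_fixed_effect` are invented purely for illustration; they are not data from any real experiment, and the meta-analyses discussed in this chapter may well have used other, more elaborate methods.

```python
import math

# Hypothetical study summaries (made-up numbers): the average change in
# heart rate during happiness, in beats per minute, and its standard error.
studies = [
    ("study_a", 2.0, 1.0),
    ("study_b", -1.0, 0.5),
    ("study_c", 0.5, 2.0),
]

def pool_fixed_effect(studies):
    """Return the inverse-variance weighted mean effect and its standard error."""
    # A study with a smaller standard error gets a larger weight.
    weights = [1.0 / se ** 2 for _, _, se in studies]
    total = sum(weights)
    pooled = sum(w * effect for w, (_, effect, _) in zip(weights, studies)) / total
    pooled_se = math.sqrt(1.0 / total)
    return pooled, pooled_se

pooled, pooled_se = pool_fixed_effect(studies)

# Approximate 95 percent interval around the pooled estimate.
low = pooled - 1.96 * pooled_se
high = pooled + 1.96 * pooled_se
print(f"pooled change: {pooled:.2f} bpm, 95% interval [{low:.2f}, {high:.2f}]")
```

With these invented numbers, the interval around the pooled estimate includes zero, so the combined evidence would not support a consistent rise in heart rate during happiness, even though one individual study pointed that way.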

Where emotions and the autonomic nervous system are concerned, four significant meta-analyses have been conducted in the last two decades, the largest of which covered more than 220 physiology studies and nearly 22,000 test subjects. None of these four meta-analyses found consistent and specific emotion fingerprints in the body. Instead, the body’s orchestra of internal organs can play many different symphonies during happiness, fear, and the rest.26

You can see this variation easily in an experimental procedure used by laboratories around the world, where test subjects perform a difficult task such as counting backward by thirteen as fast as possible, or speaking about a polarizing topic like abortion or religion, while being ridiculed. As they struggle, the experimenter berates them for poor performance, making critical and even insulting remarks. Do all the test subjects get angry? No, they don’t. More importantly, those who do feel angry show different patterns of bodily changes. Some people fume in anger, but some cry. Others become quiet and cunning. Still others just withdraw. Each behavior (fuming, crying, planning, withdrawing) is supported by a different physiological pattern in the body, a detail long known by physiologists who study the body for its own sake. Even small changes in body posture, like lying back versus leaning forward with arms crossed, can completely alter an angry person’s physiological response.27

When I address audiences at conferences and present these meta-analyses, some people become incredulous: “Are you saying that in a frustrating, humiliating situation, not everyone will get angry so that their blood boils and their palms sweat and their cheeks flush?” And my answer is yes, that is exactly what I am saying. As a matter of fact, earlier in my career, when I was giving my first talks about these ideas, you could see variations in anger firsthand in audience members who really didn’t like the evidence. Sometimes they would shift around in their seats. Other times they shook their head in a silent “no.” Once a colleague yelled at me while his face turned red and he stabbed his finger in the air. Another colleague asked me, in a sympathetic tone, if I had ever felt real fear, because if I’d ever been seriously harmed, I would never be suggesting such a preposterous idea. Yet another colleague said he would tell my brother-in-law (a sociologist of his acquaintance) that I was damaging the science of emotion. My favorite example involved a much more senior colleague, built like a linebacker and towering a foot above me, who cocked his fist and offered to punch me in the face to demonstrate what real anger looks like. (I smiled and thanked him for the thoughtful offer.) In these examples, my colleagues demonstrated the variability of anger far more handily than my presentation did.

What does it mean that four meta-analyses, summarizing hundreds of experiments, revealed no consistent, specific fingerprints in the autonomic nervous system for different emotions? It doesn’t mean that emotions are an illusion, or that bodily responses are random. It means that on different occasions, in different contexts, in different studies, within the same individual and across different individuals, the same emotion category involves different bodily responses. Variation, not uniformity, is the norm. These results are consistent with what physiologists have known for over fifty years: different behaviors have different patterns of heart rate, breathing, and so on to support their unique movements.28

Despite tremendous time and investment, research has not revealed a consistent bodily fingerprint for even a single emotion.

My first two attempts to find objective fingerprints of emotion—in the face and body—had led me smack into a closed door. But as they say, when a door closes, sometimes a window opens. My window was the unexpected realization that an emotion is not a thing but a category of instances, and any emotion category has tremendous variety. Anger, for example, varies far more than the classical view of emotion predicts or can explain. When you’re angry at someone, do you shout and swear or do you seethe quietly? Do you tease back in reproach? How about widening your eyes and raising your eyebrows? During these times, your blood pressure might go up or down or stay the same. You might feel your heart beating in your chest, or not. Your hands might become clammy, or they might remain dry . . . whatever best prepares your body for action in that situation.

How does your brain create and keep track of all these diverse angers? How does it know which one fits the situation best? If I asked how you felt in each of these situations, would you give a detailed answer like “aggravated,” “irritated,” “outraged,” or “vengeful” automatically with little effort? Or would you answer “angry” in each case, or simply, “I feel bad”? How do you even know the answer? These are mysteries that the classical view of emotion doesn’t acknowledge.

I didn’t know it at the time, but as I considered emotion categories in all their diversity, I was unwittingly applying a standard way of thinking in biology called population thinking, which was proposed by Darwin. A category, such as a species of animal, is a population of unique members who vary from one another, with no fingerprint at their core. The category can be described at the group level only in abstract, statistical terms. Just as no American family consists of 3.13 people, no instance of anger must include an average anger pattern (should we be able to identify one). Nor will any instance necessarily resemble the elusive fingerprint of anger. What we have been calling a fingerprint might just be a stereotype.29

Once I adopted a mindset of population thinking, my whole landscape shifted, scientifically speaking. I began to see variation not as error but as normal and even desirable. I continued my quest for an objective way to distinguish one emotion from another, but it wasn’t quite the same quest anymore. With growing skepticism, I had only one place left to look for fingerprints. It was time to turn to the brain.*

Scientists have long studied people with brain damage (brain lesions) to try to locate an emotion in a specific area of the brain. If someone with a lesion in a particular area of the brain has difficulty experiencing or perceiving a particular emotion, and only that emotion, then this would be considered evidence that the emotion specifically depends on the neurons in that region. It’s a bit like finding out which circuit breakers in your house control which parts of your electrical system. Initially, all breakers are on and your house runs normally. When you shut off one breaker (giving your electrical system a lesion of sorts) and observe that your kitchen lights no longer function, you’ve discovered a purpose of the breaker.

The search for fear in the brain is an instructive example because for many years, scientists have considered it a textbook case of localizing emotion to a single brain area: the amygdala, a group of nuclei found deep in the brain’s temporal lobe.* The amygdala was first linked to fear in the 1930s when two scientists, Heinrich Klüver and Paul C. Bucy, removed the temporal lobes of rhesus monkeys. Lacking an amygdala, these monkeys approached, without hesitation, objects and animals that would normally frighten them, such as snakes, unfamiliar monkeys, or others that they’d avoided before the surgery. Klüver and Bucy attributed these deficits to an “absence of fear.”30

Not long afterward, other scientists began studying humans with amygdala damage to see if those patients continued to experience and perceive fear. The most intensively studied case is a woman known as “SM,” who has Urbach-Wiethe disease, a genetic disease that gradually obliterates the amygdala during childhood and adolescence. Overall, SM was (and still is) mentally healthy and of normal intelligence, but her relationship to fear seemed quite unusual in laboratory tests. Scientists showed her horror movies like The Shining and The Silence of the Lambs, exposed her to live snakes and spiders, and even took her through a haunted house, but she reported no strong feelings of fear. When SM was shown wide-eyed facial configurations from the basic emotion method’s set of photos, she had difficulty identifying them as fearful. SM experienced and perceived other emotions normally.31

Scientists tried unsuccessfully to teach SM to feel fear, using a procedure commonly called fear learning. They showed her a picture and then immediately blasted a boat horn at one hundred decibels to startle her. This sound was meant to trigger SM’s fear response if she had one. At the same time, they measured SM’s skin conductance, which many scientists believe to be a measure of fear and which is related to amygdala activity. After many repetitions of the picture followed by the horn blast, they showed SM the picture alone and measured her response. People with intact amygdalae would have learned to associate the picture with the startling sound, so if just shown the picture, their brain would predict the horn blast and their skin conductance would jump. But no matter how many times scientists paired the picture and the loud sound, SM’s skin conductance didn’t increase when viewing the picture alone. The experimenters concluded that SM could not learn to fear new objects.32

Overall, SM seemed fearless, and her damaged amygdalae seemed to be the reason. From this and other similar evidence, scientists concluded that a properly functioning amygdala was the brain center for fear.

But then, a funny thing happened. Scientists found that SM could see fear in body postures and hear fear in voices. They even found a way to make SM feel terror, by asking her to breathe air that was loaded with extra carbon dioxide. Lacking the normal degree of oxygen, SM panicked. (Don’t worry, she was not in danger.) So SM could clearly feel and perceive fear under some circumstances, even without her amygdalae.33

As brain lesion research progressed, other people with amygdala damage were discovered and tested, and the clear and specific link between fear and the amygdala dissolved. Perhaps the most important counterevidence came from a pair of identical twins who lost the supposed fear-related parts of their amygdalae to Urbach-Wiethe disease. Both were diagnosed at the age of twelve, have normal intelligence, and have a high school education. Despite their identical DNA, equivalent brain damage, and a common environment both as children and adults, the twins have very different profiles regarding fear. One twin, BG, is much like SM: she has similar fear-related deficits yet experiences fear when breathing carbon dioxide–loaded air. The other twin, AM, has basically normal responses during fear: other brain networks are compensating for her missing amygdalae. So we have identical twins, with identical DNA, suffering from identical brain damage, living in highly similar environments, but one has some fear-related deficits while the other has none.34

These findings undermine the idea that the amygdala contains the circuit for fear. They point instead to the idea that the brain must have multiple ways of creating fear, and therefore the emotion category “Fear” cannot necessarily be localized to a specific region. Scientists have studied other emotion categories in lesion patients besides fear, and the results have been similarly variable. Brain regions like the amygdala are routinely important to emotion, but they are neither necessary nor sufficient for emotion.35

This is one of the most surprising things I learned as I began to study neuroscience: a mental event, such as fear, is not created by only one set of neurons. Instead, combinations of different neurons can create instances of fear. Neuroscientists call this principle degeneracy. Degeneracy means “many to one”: many combinations of neurons can produce the same outcome. In the quest to map emotion fingerprints in the brain, degeneracy is a humbling reality check.36

My lab has observed degeneracy while performing brain scans on volunteers. We showed them evocative photos, with subject matter like skydiving and bloody corpses, and asked them how much bodily arousal they felt. Men and women reported equivalent feelings of arousal, and both had increased activity in two brain areas, the anterior insula and early visual cortex. However, women’s feelings of arousal were more strongly linked to the anterior insula, while men’s were more strongly linked to visual cortex. This is evidence that the same experience—feelings of arousal—was associated with different patterns of neural activity, an example of degeneracy.37

Another surprising thing I learned while training to be a neuroscientist, along with degeneracy, is that many parts of the brain serve more than one purpose. The brain contains core systems that participate in creating a wide variety of mental states. A single core system can play a role in thinking, remembering, decision-making, seeing, hearing, and experiencing and perceiving diverse emotions. A core system is “one to many”: a single brain area or network contributes to many different mental states. The classical view of emotion, in contrast, considers particular brain areas to have dedicated psychological functions, that is, they are “one to one.” Core systems are therefore the antithesis of neural fingerprints.38

To be clear, I’m not saying that every neuron in the brain does exactly the same thing, nor that every neuron can stand in for every other. (That view is called equipotentiality, and it has long been disproved.) I am saying that most neurons are multipurpose, playing more than one part, much as flour and eggs in your kitchen can participate in many recipes.

The reality of core systems has been established through virtually every experimental method in neuroscience, but it’s most easily seen with brain-imaging techniques that observe the brain in action. The most common method is called functional magnetic resonance imaging (fMRI), which can peer harmlessly into the heads of living people who are experiencing emotion or perceiving emotion in others, recording the changes in magnetic signals related to firing neurons.39

Even so, scientists employ fMRI to search for emotion fingerprints throughout the brain. If a particular blob of brain circuitry shows increased activation during a particular emotion, researchers reason, that would be evidence that the blob computes the emotion. Scientists initially focused their scanners on the amygdala and whether it contains the neural fingerprint for fear. One key piece of evidence came from test subjects who looked at photos of so-called fear poses from the basic emotion method while in the scanner. Their amygdalae increased in activity compared to when they viewed faces with neutral expressions.40

As research continued, however, anomalies emerged. Yes, the amygdala was showing an increase in activity, but only in certain situations, like when the eyes of a face were staring directly at the viewer. If the eyes were gazing off to the side, the neurons in the amygdala barely changed their firing rates. Also, if test subjects viewed the same stereotyped fear pose over and over again, their amygdala activation rapidly tapered off. If the amygdala truly housed the circuit for fear, then this habituation should not occur—the circuit should fire in an obligatory way whenever it is presented with a triggering “fear” stimulus. From these contrary results, it became clear to me—and ultimately to many other scientists—that the amygdala is not the home of fear in the brain.41

In 2008, my lab along with neurologist Chris Wright demonstrated why the amygdala increases in activity in response to the basic emotion fear faces. The activity increases in response to any face, whether fearful or neutral, as long as it is novel (i.e., the test subjects have not seen it before). Since the wide-eyed, fearful facial configurations of the basic emotion method occur rarely in everyday life, they are novel when test subjects view them in brain-imaging experiments. These findings, and others like them, provide an alternative explanation for the original experiments, one that doesn’t require the amygdala to be the brain locus of fear.42

Over the past two decades, this back-and-forth trajectory, with evidence followed by counterevidence, has occurred in research on every brain region that has ever been identified as the neural fingerprint of an emotion. So my lab set out to settle the question of whether brain blobs are really emotion fingerprints once and for all. We examined every published neuroimaging study on anger, disgust, happiness, fear, and sadness, and combined those that were usable statistically in a meta-analysis. Altogether, this comprised nearly 100 published studies involving nearly 1,300 test subjects across almost 20 years.43

To make sense of this large amount of data, we divided the human brain virtually into tiny cubes called voxels, the 3-D version of pixels. Then, for every voxel in the brain during every emotion studied in every experiment, we recorded whether or not an increase in activation was reported. Now we could compute the probability that each voxel would show an increase in activation during the experience or perception of each emotion. When the probability was greater than chance, we called it statistically significant.
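For readers who like to see the arithmetic, the voxel-counting idea can be sketched in a few lines of Python. This is only a toy illustration, not the procedure my lab actually ran: the five-voxel "brain," the eight made-up studies, the 25 percent chance rate, and the function names `activation_probability` and `binomial_tail` are all assumptions chosen for the example.

```python
from math import comb

def activation_probability(studies, n_voxels):
    """Fraction of studies that reported increased activation at each voxel."""
    counts = [0] * n_voxels
    for active_voxels in studies:
        for v in active_voxels:
            counts[v] += 1
    return [c / len(studies) for c in counts]

def binomial_tail(k, n, p):
    """Probability of k or more 'hits' in n studies if each hit has chance p."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

# Hypothetical data: each study is the set of voxels where it reported an increase.
studies = [{0, 1}, {0}, {0, 3}, {0, 2}, {1}, {0}, {0, 4}, {3}]
n_voxels = 5
chance = 0.25  # assumed base rate of a voxel being reported active

probs = activation_probability(studies, n_voxels)

# Voxel 0 is reported in 6 of 8 studies; voxel 1 in only 2 of 8.
p_voxel0 = binomial_tail(6, len(studies), chance)
p_voxel1 = binomial_tail(2, len(studies), chance)

print(probs)
print(p_voxel0, p_voxel1)
```

In this toy example, voxel 0 shows up far more often than the assumed chance rate would predict, while voxel 1 does not. Note that even the "significant" voxel is missing from a quarter of the studies, so consistency above chance is not the same thing as a fingerprint.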

Our comprehensive meta-analysis found little to support the classical view of emotion. The amygdala, for example, did show a consistent increase in activity for studies of fear, more than what you’d expect by chance, but only in a quarter of fear experience studies and about 40 percent of fear perception studies. These numbers fall short of what you’d expect for a neural fingerprint. Not only that, but the amygdala also showed a consistent increase during studies of anger, disgust, sadness, and happiness, indicating that whatever functions the amygdala was performing in some instances of fear, it was also performing those functions during some instances of those other emotions.

Interestingly, amygdala activity likewise increases during events usually considered non-emotional, such as when you feel pain, learn something new, meet new people, or make decisions. It’s probably increasing now as you read these words. In fact, every supposed emotional brain region has also been implicated in creating non-emotional events, such as thoughts and perceptions.

Overall, we found that no brain region contained the fingerprint for any single emotion. Fingerprints are also absent if you consider multiple connected regions at once (a brain network), or stimulate individual neurons with electricity. The same results hold in experiments with other animals that allegedly have emotion circuits, such as monkeys and rats. Emotions arise from firing neurons, but no neurons are exclusively dedicated to emotion. For me, these findings have been the final, definitive nail in the coffin for localizing emotions to individual parts of the brain.44

By now, I hope you see that for a very long time, people have held a mistaken view of emotions. Many research studies claim to have identified physical fingerprints that distinguish one emotion from another. Nevertheless, these supportive studies are found within a much larger scientific context that doesn’t support the classical view.*

Some scientists might say that the contrary studies are simply wrong; after all, experiments on emotion can be pretty tricky to pull off. Some areas of the brain are really difficult to see. Heart rate is influenced by all kinds of factors that have nothing to do with emotion, like how much sleep test subjects had the night before, whether they had any caffeine in the last hour, and whether they are sitting, standing, or lying down. It’s also challenging to make test subjects experience emotion on cue. Trying to evoke blood-curdling fear or brain-boiling anger is against the rules: all universities have Institutional Review Boards that prevent people like me from inflicting too much emotional agony on innocent volunteers.45

But even considering all these caveats, far more experiments call the classical view into doubt than we would expect by chance, or even due to inadequate experimental methods. Facial EMG studies demonstrate that people move their facial muscles in many different ways, not one consistent way, when feeling an instance of the same emotion category. Large meta-analyses conclude that a single emotion category involves different bodily responses, not a single, consistent response. Brain circuitry operates by the many-to-one principle of degeneracy: instances of a single emotion category, such as fear, are handled by different brain patterns at different times and in different people. Conversely, the same neurons can participate in creating different mental states (one-to-many).

I hope you’ve caught the pattern emerging here: variation is the norm. Emotion fingerprints are a myth.

If we want to truly understand emotions, we must start taking that variation seriously. We must consider that an emotion word, like “anger,” does not refer to a specific response with a unique physical fingerprint but to a group of highly variable instances that are tied to specific situations. What we colloquially call emotions, such as anger, fear, and happiness, are better thought of as emotion categories, because each is a collection of diverse instances. Just as instances of the category “Cocker Spaniel” vary in their physical attributes (tail length, nose length, coat thickness, running speed, and so on) more than genes alone can account for, so might instances of “Anger” vary in their physical manifestations (facial movements, heart rate, hormones, vocal acoustics, neural activity, and so on), and this variation might be related to the environment or context.46

When you adopt a mindset of variation and population thinking, so-called emotion fingerprints give way to better explanations. Here’s an example of what I mean. Some scientists, using techniques from artificial intelligence, can train a software program to recognize many, many brain scans of people experiencing different emotions (say, anger and fear). The program computes a statistical pattern that summarizes each emotion category and then—here’s the cool part—can actually analyze new scans and determine if they are closer to the summary pattern for anger or fear. This technique, called pattern classification, works so well that it’s sometimes called “neural mind-reading.”

Some of these scientists claim that the statistical summaries depict neural fingerprints for anger and fear. But that’s a gigantic logical error. The statistical pattern for fear is not an actual brain state, just an abstract summary of many instances of fear. These scientists are mistaking a mathematical average for the norm.47

My collaborators and I applied pattern classification to our meta-analysis of brain-imaging studies of emotion. Our computer program learned to classify scans from about 150 different studies. We found patterns across the brain that predict better than chance whether the test subjects in a specific study were experiencing anger, disgust, fear, happiness, or sadness. These patterns are not emotion fingerprints, however. The pattern for anger, for example, consists of a set of voxels across the brain, but that pattern need not appear in any individual brain scan for anger. The pattern is an abstract summary. In fact, no individual voxel appeared in all the scans of anger.48
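The distinction between a summary pattern and an actual brain state is easy to demonstrate with a toy classifier. The sketch below uses a simple nearest-average rule as a stand-in for pattern classification; it is not the algorithm used in our analysis, and the six-voxel "scans," the labels, and the function names are invented for illustration.

```python
def centroid(scans):
    """Average activation at each voxel across a set of scans (the summary pattern)."""
    n = len(scans)
    return [sum(column) / n for column in zip(*scans)]

def sq_dist(a, b):
    """Squared Euclidean distance between two patterns."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def classify(scan, centroids):
    """Label a scan by whichever summary pattern it is closest to."""
    return min(centroids, key=lambda label: sq_dist(scan, centroids[label]))

# Hypothetical scans: 1 means the voxel showed increased activation.
anger_scans = [
    [1, 1, 0, 0, 0, 0],
    [0, 1, 1, 0, 0, 0],
    [1, 0, 1, 0, 0, 0],
]
fear_scans = [
    [0, 0, 0, 1, 1, 0],
    [0, 0, 0, 0, 1, 1],
    [0, 0, 0, 1, 0, 1],
]

centroids = {"anger": centroid(anger_scans), "fear": centroid(fear_scans)}

# New scans are classified correctly by their distance to each summary...
label_a = classify([1, 0, 0, 0, 0, 0], centroids)
label_f = classify([0, 0, 0, 0, 0, 1], centroids)

# ...yet the anger summary matches no actual anger scan,
summary_is_a_real_scan = centroids["anger"] in anger_scans

# and no single voxel is active in every anger scan.
always_active = [v for v in range(6) if all(scan[v] == 1 for scan in anger_scans)]

print(label_a, label_f, summary_is_a_real_scan, always_active)
```

The classifier tells the two categories apart, but the thing doing the work is an average that never occurs in any one scan. That is the sense in which a summary pattern is a statistical abstraction rather than a fingerprint.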

When properly applied, pattern classification is an example of population thinking. A species, you may recall, is a collection of diverse individuals, so it can be summarized only in statistical terms. The summary is an abstraction that does not exist in nature—it does not describe any individual member of the species. Where emotion is concerned, on different occasions and in different people, different combinations of neurons can create instances of an emotion category like anger. Even when two experiences of anger feel the same to you, they can have different brain patterns via degeneracy. But we can still summarize many varying instances of anger to describe how, in abstract terms, they might be distinguishable from all the varying instances of fear. (Analogy: no two Labrador Retrievers are identical, but they’re all distinguishable from Golden Retrievers.)

My long search for fingerprints in the face, body, and brain brought me to a realization that I had not expected—that we need a new theory of what emotions are and where they come from. In the chapters that follow, I introduce you to this new theory, which accounts for all the findings of the classical view as well as all the inconsistencies you’ve just seen. By moving beyond fingerprints and following the evidence, we will seek a better and more scientifically justified understanding, not only of emotion but also of ourselves.