Appendix D

Evidence for the Concept Cascade

I’ve described the brain in two ways that look like hierarchies. (They are metaphors to help understand brain activity; neurons are not wired in a strict hierarchy.) The first hierarchy in chapter 6 illustrates how the brain uses sensory input to form concepts, as a hierarchy of similarities and differences. This hierarchy is bottom-up and should be familiar to neuroscientists. Your primary sensory regions are at the bottom; their neurons fire to represent the different sensory details of bodily sensations, changing wavelengths of light, changes in air pressure, and so on, that make up a particular instance. The neurons at the top of the hierarchy represent the highest-level, efficient, multisensory summary of the instance.

The second hierarchy in chapter 4 illustrates how concepts unpack as predictions, based on the structure of the cortex. This hierarchy is top-down and incorporates some of my own discoveries. Body-budgeting circuitry (more commonly called visceromotor limbic circuitry), the loudmouth of the brain, is at the top, and it issues but does not receive predictions. Primary sensory regions are at the bottom, as they receive predictions but don’t issue them to other cortical regions. In this manner, body-budgeting regions drive predictions throughout the brain and down to the primary sensory regions, in progressively finer detail.

The two hierarchies represent the same circuitry but operate in reverse. The former hierarchy is for learning concepts and the latter—which I call the concept cascade—is for applying those concepts to construct your perceptions and actions. In this manner, categorization is a whole-brain activity, with predictions flowing from simulated similarities to simulated differences, and prediction errors flowing in the other direction.

The concept cascade involves some reasoned speculation but is consistent with evidence from neuroscience. At present, we have scientific evidence that all the external sensory systems (vision, hearing, etc.) operate by prediction. Along with my colleague, the neuroscientist W. Kyle Simmons, I discovered that the interoceptive network is also structured to function this way.1

Right now, scientists have specific details of the concept cascade within the visual system. The broader concept cascade that I’ve outlined in this book is based on three very solid pieces of evidence: (1) the anatomical evidence in chapter 4 about how predictions and prediction errors flow across the structure of cortex, (2) the anatomical evidence in chapter 6 showing that the cortex is structured to compress sensory differences into multisensory summaries, and (3) scientific evidence on the functions of several brain networks, which we’ll discuss now.2

A prediction originates as a multisensory summary, representing the goal of the concept, in a portion of the interoceptive network known as the default mode network. Notice I did not say that concepts are “stored” in the default mode network. I specifically use the word “originate.” Concepts do not live wholesale in the default mode network, or anywhere else, as if they were entities. This network simulates only part of the concept, namely, the efficient, multisensory summaries of the concept instances with none of their sensory details. When your brain constructs a concept of “Happiness” on the fly, for use in a specific situation, degeneracy is in play. Each instance is created with its own pattern of neurons. The more conceptually similar the instances are, the closer their neural patterns will be to one another in the default mode network, and some will even overlap, using some of the same neurons. Different representations need not be separate in the brain, just separable.3

The default mode network is an intrinsic network. In fact, it was the first intrinsic network to be discovered. Scientists noticed a set of brain regions that increased their activity when subjects were lying at rest. They named these regions the “default mode” because they are spontaneously active while the brain isn’t being probed or stimulated by an experimental procedure. When I first learned about this network, I thought the choice of name was unfortunate, because numerous other intrinsic networks have since been discovered. But the name turned out to be ironically apt. Scientists originally believed the brain’s “default” activity was aimless mind-wandering between tasks, when in fact this network is at the core of every prediction in the brain. The brain’s true “default mode,” the way it interprets and navigates the world, is prediction using concepts, so the name fits this network nicely.4

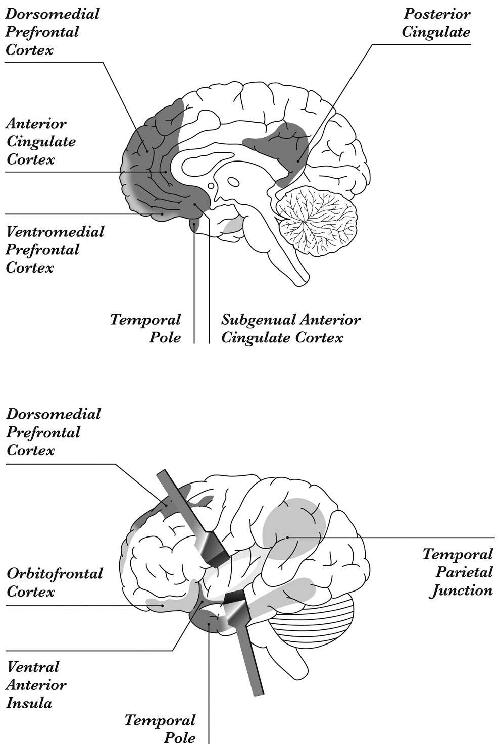

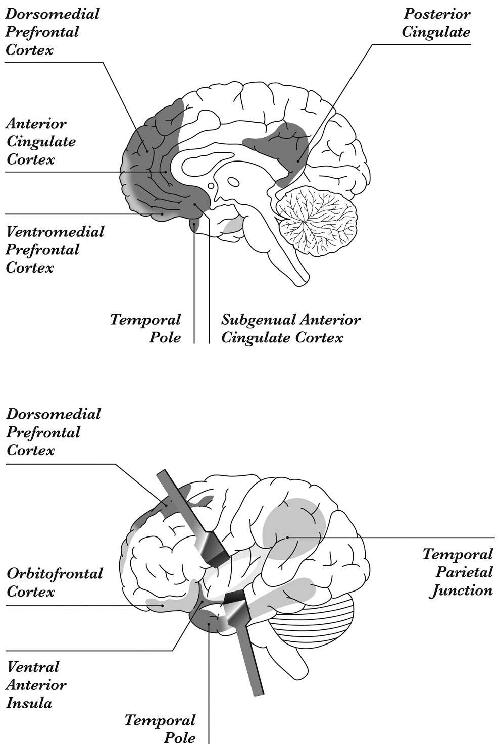

Figure AD-1: The default mode network, which lies within the interoceptive network. Body-budgeting regions, which launch predictions, are in dark gray. They send commands to the subcortical nuclei that control the body’s tissues and organs, metabolism, and immune function. Top is medial view, bottom is lateral.

Neuroscientists have demonstrated pretty definitively that the default mode network represents key portions of concepts. This discovery required clever scientific experiments. You can’t simply ask a test subject to simulate a concept and then look for increased activation in the default mode network. That single concept would barely perturb the brain’s maelstrom of intrinsic activity, like spitting into an ocean wave. Fortunately, the cognitive neuroscientist Jeffrey R. Binder and his colleagues designed an ingenious brain-scanning experiment to work around this issue. They created two experimental tasks, one that used more conceptual knowledge than the other, and “subtracted” the results to yield the difference.

In Binder’s first experimental task, test subjects in a brain scanner listened to names of animals like “fox,” “elephant,” and “cow,” and were asked a question whose answer required rich conceptual knowledge of purely mental similarities (e.g., “Is the animal found in the United States and used by people?”). In the second task, subjects were scanned while making a decision that required more limited conceptual knowledge based on perceptual similarity (e.g., they listened to syllables like “pa-da-su” and responded when they heard the consonants “b” and “d”). Both tasks should produce an increase in activation in sensory and motor networks, but only the former task should produce an increase in the default mode network. By “subtracting” one brain scan from the other, Binder and his colleagues removed the brain activity related to sensory and motor details and observed an increase in activity within the default mode network, as predicted. Binder’s findings have been corroborated by a meta-analysis of 120 similar brain-imaging experiments.5
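To make the logic of this subtraction concrete, here is a toy calculation. It is a minimal sketch under invented assumptions: the three region labels and every activation number are made up for illustration and are not Binder’s data, and a real analysis compares many thousands of voxels using statistical tests rather than a few averaged numbers.

```python
# Toy illustration of a "subtraction" contrast. All region labels and
# activation values are hypothetical, invented only to show the arithmetic.

def mean_map(scans):
    """Average several scans region by region."""
    regions = scans[0].keys()
    return {r: sum(scan[r] for scan in scans) / len(scans) for r in regions}

def subtract(task_a, task_b):
    """Contrast: activation in task A minus activation in task B, per region."""
    return {r: task_a[r] - task_b[r] for r in task_a}

# Both hypothetical tasks engage auditory and motor regions equally;
# only the meaning-rich task adds activity in default mode regions.
semantic_task = [
    {"auditory": 1.0, "motor": 0.8, "default_mode": 0.9},
    {"auditory": 1.1, "motor": 0.7, "default_mode": 1.1},
]
phoneme_task = [
    {"auditory": 1.0, "motor": 0.8, "default_mode": 0.2},
    {"auditory": 1.1, "motor": 0.7, "default_mode": 0.0},
]

contrast = subtract(mean_map(semantic_task), mean_map(phoneme_task))
for region, diff in contrast.items():
    print(f"{region:>12}: {diff:+.2f}")
```

Activity that both tasks share cancels out, leaving roughly zero for the auditory and motor regions, while the default mode regions keep a positive difference: the activity unique to the meaning-rich task.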

The default mode network supports mental inference, that is, categorizing another person’s thoughts and feelings with mental concepts. In one study, participants were presented with written descriptions of actions such as drinking coffee, brushing teeth, and eating ice cream. On some trials, participants were asked how people performed these actions: drinking coffee from a mug, brushing teeth with a toothbrush, eating ice cream with a spoon. Participants appeared to simulate these actions in motor regions of the brain. On other trials, participants were asked why people performed such actions: drinking coffee to stay awake, brushing teeth to avoid cavities, eating ice cream because it tastes good. These judgments require purely mental concepts, and they were associated with greater activity in the default mode network.6

A growing number of cognitive neuroscientists, social psychologists, and neurologists speculate that the default mode network has a general function: it allows you to simulate how the world might be different from the way it is right now. This includes remembering the past and imagining the future from different points of view. This remarkable ability gives you a leg up when negotiating the two big challenges of human life: getting along with others and getting ahead to benefit yourself. The social psychologist Daniel T. Gilbert, author of Stumbling on Happiness, who is famous for his humorous eloquence, calls the default mode network an “experience simulator,” akin to the flight simulators used to train pilots. By simulating a future world, you are better equipped to reach your future goals.7

The default mode network unites past, present, and future. Information from the past, constructed as concepts, forms predictions about the present, which make you better equipped to reach your future goals.

I find it useful to think about the default mode network as playing a key role in categorization. The network initiates predictions to create simulations, thereby allowing the brain to work its magic of modeling the world. The “world” in this case includes the outside world, the minds of other people, and the body that holds the brain. Sometimes these simulations are corrected by the outside world, like when you construct emotions, and other times they aren’t, like when you imagine or dream.8

Of course, the default mode network is not working alone. It contains only part of the pattern required for making a concept, namely, the mental, goal-based, multisensory knowledge that initiates a cascade. Anytime you imagine things, or your mind wanders, or your brain performs other intrinsic activity, you also simulate sights, sounds, changes in your body budget, and other sensations that are the domain of sensory and motor networks. Thus, it stands to reason that the default mode network should be interacting with these other networks to construct instances of concepts. (And it does, as you’ll see shortly.)9

Newborns don’t have a fully formed default mode network, hence their inability to predict and their diffuse “lantern” of attention; newborn brains spend a lot of time learning from prediction error. It may very well be that experience with the multisensory world, anchored in body budgeting, provides the needed inputs that help the default mode network form. This occurs sometime during the first few years of life as the brain is bootstrapping concepts into its wiring. What begins as “outside” becomes “inside” as you become wired by your environment.10

My lab has been investigating the biology of concepts and categorization for some time, and we’ve uncovered considerable evidence about the roles of the default mode network, the rest of the interoceptive network, and the control network. When we peer into the brains of people who are experiencing emotion, or perceiving emotion in blinks, furrowed brows, muscle twitches, and the lilting voices of others, we see pretty clearly that key parts of these networks are hard at work. For starters, you might remember the meta-analysis from chapter 1, in which my lab examined every published neuroimaging study of emotion. We divided the entire brain into tiny cubes called “voxels” (akin to “pixels” of the brain), and then identified voxels that consistently showed a significant increase in activity for any of the emotion categories we studied. We could not localize any single emotion category to a particular brain region. This same meta-analysis also provided evidence for the theory of constructed emotion. We identified groups of voxels that activated together with high probability, like a network would. These groups of voxels consistently fell within the interoceptive and control networks.11

When you consider that our meta-analysis, at the time it was conducted, covered over 150 diverse, independent studies by hundreds of scientists, in which subjects viewed faces, smelled scents, listened to music, watched movies, remembered past events, and performed many other emotion-evoking tasks, the emergence of these networks is particularly compelling. These findings are even more remarkable to me because the studies covered by the meta-analysis weren’t designed to test the theory of constructed emotion. Most were inspired by classical-view theories and designed to localize each emotion to a different region of the brain. And most of them studied only the most stereotyped examples of emotion categories and did not examine each emotion in all its real-life variations.

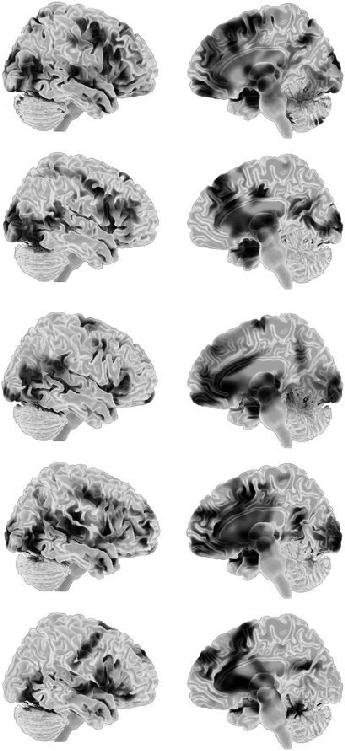

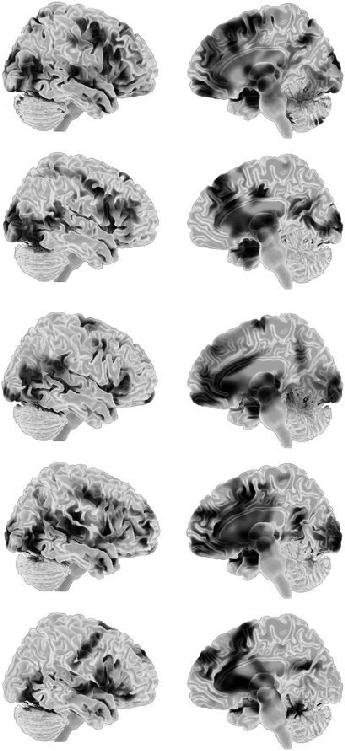

Our meta-analysis project is ongoing, and we have collected almost four hundred brain-imaging studies to date. From these data, my colleagues and I used pattern classification analysis (chapter 1) to produce five summaries of emotion categories, shown in figure AD-2. In all five, the interoceptive network played a significant role. The control network was also present for all five, but less clearly for happiness and sadness. Remember that you’re not looking at neural fingerprints here, just abstract summaries. No single instance of anger, disgust, fear, happiness, or sadness looks exactly like its associated summary. Each instance can use diverse combinations of neurons, as we know from the principle of degeneracy. For each study of (say) anger in the meta-analysis, the brain activity was closer to the anger summary than to the other summaries, so it was identified as anger. So we can diagnose an instance of anger, but we cannot specify which neurons will be active. In other words, we have applied Darwin’s principle of population thinking to the construction of anger. The same holds for the other four emotion categories we studied.12

Figure AD-2: Statistical summaries of the concepts (top to bottom) “Anger,” “Disgust,” “Fear,” “Happiness,” and “Sadness.” These are not neural fingerprints (see chapter 1). Left is lateral view, right is medial view.
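The identification logic described above, labeling each instance by whichever summary it lies closest to, can be illustrated with a nearest-centroid classifier. This is a minimal sketch and a deliberate simplification: the nearest-centroid rule, the Euclidean distance, the four hypothetical “regions,” and all the numbers are assumptions chosen for illustration, not the statistical model or the data behind figure AD-2.

```python
import math

# Hypothetical sketch of "closest summary wins" classification.
# Vectors stand for activity in four made-up brain regions; values are invented.

def centroid(patterns):
    """Average a list of equal-length activation vectors into one summary vector."""
    n = len(patterns)
    return [sum(values) / n for values in zip(*patterns)]

def distance(a, b):
    """Euclidean distance between two activation vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def classify(pattern, summaries):
    """Label a pattern with the category whose summary lies closest to it."""
    return min(summaries, key=lambda label: distance(pattern, summaries[label]))

# No two hypothetical instances of a category are identical (degeneracy).
training = {
    "anger": [[0.9, 0.1, 0.7, 0.2], [0.6, 0.3, 0.9, 0.1], [0.8, 0.2, 0.5, 0.4]],
    "fear":  [[0.2, 0.9, 0.3, 0.6], [0.4, 0.7, 0.1, 0.8], [0.1, 0.8, 0.4, 0.5]],
}
summaries = {label: centroid(instances) for label, instances in training.items()}

new_instance = [0.7, 0.2, 0.8, 0.3]
print(classify(new_instance, summaries))  # prints: anger
```

The new instance matches none of the stored instances exactly, and no individual instance matches its summary exactly either. It is identified as anger only because it lies closer to the anger summary than to the fear summary, which is population thinking in miniature.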

When we specifically design experiments to test the theory of constructed emotion, we find similar results. In one study, my collaborators Christine D. Wilson-Mendenhall and Lawrence W. Barsalou and I asked subjects to immerse themselves in imagined scenarios while we performed brain scans. We saw evidence of the resulting simulations in the form of increased activity in sensory and motor regions. We could also see that their body budgets were perturbed, reflected in changes in the interoceptive network. In a second phase after each immersion, test subjects were shown a word and asked to categorize their interoceptive sensations as instances of either “Anger” or “Fear.” As our subjects simulated these concepts, activity in the interoceptive network increased even further. We also saw activations representing the low-level sensory and motor details, as well as increased activity in a key node in the control network.13

In a later study, we had subjects construct atypical, infrequent simulations, such as the pleasant fear of riding a roller coaster and the unpleasant happiness of injuring yourself while winning a competition. We hypothesized that less typical simulations would require the interoceptive network to work harder to issue predictions, compared to simulating more typical instances like pleasant happiness and unpleasant fear, which are like mental habits. This is exactly what we observed.14

In a more recent set of experiments, our test subjects watched evocative movie scenes, and we saw the interoceptive network construct ongoing emotional experiences. Talma Hendler’s lab at Tel Aviv University in Israel chose film clips that would create a variety of different experiences of sadness, fear, and anger. For example, some test subjects watched a scene from Sophie’s Choice where the title character, played by Meryl Streep, must choose one of her children to be taken from her at Auschwitz. Other test subjects watched a clip from the film Stepmom, where Susan Sarandon’s character reveals to her children that she is dying of cancer. In all cases, we observed that the default mode network and the remainder of the interoceptive network were firing more in synchrony in the moments when subjects reported more intense emotional experiences, and less so when subjects reported less intense experiences.15

Other studies make a similar case for emotion perception. In one study, subjects watched movies and explicitly categorized the characters’ physical movements as emotional expressions. In other words, they made mental inferences about what the movements meant, a task that requires concepts. Their brains showed increased activity in the interoceptive network, in nodes of the control network, and in visual cortex where objects are represented.16

…

When discussing concepts, we must be mindful not to essentialize, because it’s super easy to imagine concepts as “stored” in your brain. For example, you could think concepts live in the default mode network alone (as if the summaries exist apart from their sensory and motor details). There is abundant evidence (and very little doubt), however, that any instance of any concept is represented by the entire brain. As you look at the hammer in figure AD-3, neurons in your motor cortex that control your hand movements have increased their firing. (And if you are like me, the neurons that simulate pain in your thumb are also firing madly.) This increase occurs even when you merely read the name of the object (“hammer”). Viewing the hammer also makes it easier for you to make a gripping motion with your hand.17

Figure AD-3: Tweaking your motor cortex

Likewise, as you read these words:

Apple, Tomato, Strawberry, Heart, Lobster

neurons that process color sensations in early visual cortex also increase their firing rate, because all of the objects are typically red. So concepts have no mental core in the default mode network; they are represented throughout the entire brain.18

A second essentialist misconception is that your default mode network has a single set of neurons for each goal, like little essences, even if the rest of the concept, such as sensory and motor features, is distributed throughout the brain. This cannot be the case, however. If it were, then in brain scans we’d see this “essence” activate first, under all conditions, because it’s at the top of the concept cascade, followed by the more variable sensory and motor differences depending on the situation. We see nothing of the kind.19

Here again, essentialism yields to degeneracy. Each time you construct an instance of an emotion concept like “Happiness” with a particular goal, such as being with a close friend, the pattern of neural firing can be different. Even the highest-level, multisensory summary of “Happiness,” represented by sets of neurons in the default mode network, can be different each time. None of these instances need be physically alike, and yet they are all instances of “Happiness.” What is binding them together? Nothing. They are not “bound” together in any permanent way. But they are very likely initiated concurrently, as predictions. When you read the word “happy” or hear it spoken, or when you find yourself surrounded by your favorite people, your brain launches a variety of predictions, each with some prior probability of fitting the specific situation. Words are powerful. This is reasoned speculation on my part, grounded in three observations: the brain operates by degeneracy, words are key to concept learning, and the default mode network and the language network share many brain regions.20

A third mistake of essentialism is thinking of concepts as “things.” When I was an undergraduate student, I took a course in astronomy where I learned the universe was expanding. At first, I was baffled: expanding into what? I was confused because I harbored an incorrect intuition that the universe was expanding into space. After some reflection, I realized that I conceived of “space” in rather literal, physical terms, as a big, dark, empty bucket. Instead, “space” is a theoretical idea—a concept—not a concrete, fixed entity; space is always computed in relation to something else. (“Space and time are in the eye of the beholder.”)21

Something similar happens when people think about concepts. A concept is not a “thing” that exists in the brain, any more than “space” is a physical thing that the universe expands into. “Concept” and “space” are ideas. It is a verbal convenience to talk about “a” concept. Really you have a conceptual system. When I write “you have a concept for awe,” this translates as “you have many instances that you have categorized, or that have been categorized for you, as awe, and each can be reconstituted as a pattern in your brain.” The “concept” refers to all the knowledge you construct about awe in your conceptual system in a given moment. Your brain is not a vessel that “contains” concepts. It enacts them as a computational moment over some period of time. When you “use a concept,” you are really constructing an instance of that concept on the spot. You don’t have little packets of knowledge called “concepts” stored in your brain, any more than you have little packets called “memories” stored in your brain. Concepts have no existence separate from the process that creates them.22