Chapter 17. Infrastructure Code Testing Strategy

Continually testing and delivering changes to infrastructure code is the second of the three core practices of Infrastructure as Code described in “Core Practices for Infrastructure as Code”, along with defining everything as code and building infrastructure from small, simple pieces.

If a strong focus on testing creates good results when writing application code, it’s reasonable to expect it to be useful for infrastructure code as well. This chapter explores challenges and approaches with testing infrastructure code. It draws heavily on Agile engineering approaches to quality, such as Extreme Programming (XP) and test-driven development (TDD), that integrate testing with development.

I’ll build on these ideas in the following chapters to describe how to implement infrastructure testing into change delivery workflows by using automated pipelines. In Chapter 18, I’ll provide some patterns to implement automated testing in automated delivery workflows.

Why Continually Test Infrastructure Code?

Testing changes to your infrastructure is clearly a good idea. But the need to build and maintain a suite of test automation code may not be as clear. We often think of building infrastructure as a one-off activity: build it, test it, then use it. Why spend the effort to create an automated test suite for infrastructure if you’re going to build it only once?

Creating an automated test suite is hard work, especially when you consider the work needed to implement the delivery and testing tools and services—CI servers, pipelines, test runners, test scaffolding, and various types of scanning and validation tools. When getting started with Infrastructure as Code, building all these parts may seem like more work than building the workloads you’ll run on them.

In Chapter 1, I explained the rationale for implementing systems for delivering changes to infrastructure. To recap, you’ll make more changes to your infrastructure after you build it than you might expect. Once any nontrivial system is live, you need to patch, upgrade, fix, and improve it for as long as it’s in use.

A key benefit of CD is removing the classic, Iron Age distinction between the build and run phases of a system’s lifecycle. Any nontrivial system is continually updated, improved, and patched until it’s decommissioned, which brings build activities into the run phase. Modern approaches to designing and building systems encourage delivering a fully working subset of the system as early as possible so you can build production readiness into it. Activities and concerns from the run phase are included in the build phase.

You should design and implement your change delivery system, including automated testing and deployment, along with the system itself. Use this system to support prerelease implementation, and continue using the same automation after the first release. Integrating build and run in this way massively de-risks your “go-live,” since deploying version 1 uses the same processes and tooling that you’ve been using throughout development. And you get the capability to quickly and reliably roll out fixes and improvements to your production system for “free.”

What Continual Testing Means

One of the cornerstones of Agile engineering is testing as you work—build quality in. The earlier you validate whether each line of code you write is ready for production, the faster you can fix anything that needs it, and the sooner you can deliver value. Finding problems more quickly also means spending less time going back to investigate problems and less time fixing and rewriting code. Fixing problems continually avoids accumulating technical debt.

Most people get the importance of fast feedback. But what differentiates genuinely high-performing teams is how aggressively they pursue truly continual feedback.

Legacy delivery methodologies test after the system’s complete functionality has been implemented (often referred to as “feature complete”). Time-boxed Agile methodologies take this further. The team tests periodically during development, such as at the end of a sprint. Teams following Lean or Kanban test each story as they complete it.1

Truly continual testing involves testing even more frequently than this. People write and run tests as they code, even before finishing a story. They frequently push their code into a centralized, automated build system—ideally, at least once a day.2

People need to get feedback as soon as possible when they push their code so that they can respond to it with as little interruption to their flow of work as possible. Tight feedback loops are the essence of continual testing.

Immediate Testing and Eventual Testing

Another way to think of this is to classify each of your testing activities as either immediate or eventual. Immediate testing happens as soon as you push your code. Eventual testing happens after a delay, perhaps after a manual review, or maybe on a schedule, such as an automated nightly test run.

Ideally, testing is truly immediate, happening as you write code. Validation activities run in your editor, such as syntax highlighting or running unit tests. The Language Server Protocol (LSP) defines a standard for integrating syntax checking into IDEs, supported by implementations for various languages.

People who prefer the command line as a development environment can use a utility like inotifywait or entr to run checks in a terminal when your code changes.

Another example of immediate validation is pair programming, which is essentially a code review that happens as you work. Pairing provides much faster feedback than code reviews that happen after you’ve finished working on a story or feature and someone else finds time to review what you’ve done.3

The CI build and the CD pipeline should run immediately every time someone pushes a change to the codebase. Running immediately on each change not only gives them feedback faster but also ensures a small scope of change for each run. If the pipeline runs only periodically, it may include multiple changes from multiple people. If any of the tests fail, working out which change caused the issue is harder, meaning more people need to get involved and spend time to find and fix it.

What Should We Test with Infrastructure?

Quality assurance is about managing the risks of applying code to your system. Will the code break when applied? Does it create the right infrastructure? Does the infrastructure work the way it should? Does it meet operational criteria for performance, reliability, and security? Does it comply with regulatory and governance rules?

Teams should identify the risks that come from making changes to their infrastructure code, and create a repeatable process for testing any given change against those risks. This process takes the form of automated test suites and manual tests. A test suite is a collection of automated tests that are run as a group.

When people think about automated testing, they generally think about functional tests like unit tests and UI-driven journey tests. But the scope of risks is broader than functional defects, so the scope of validation needs to be broader as well. Constraints and requirements beyond the purely functional are often called non-functional requirements (NFRs) or cross-functional requirements (CFRs).4 Examples of aspects that you may want to validate, whether automatically or manually, include the following:

- Code quality

-

Is the code readable and maintainable? Does it follow the team’s standards for formatting and structuring code? Depending on the tools and languages you’re using, some tools can scan code for syntax errors and compliance with formatting rules, and run a complexity analysis. Depending on how long they’ve been around and how popular they are, infrastructure languages may not have many (or any!) of these tools. Manual review methods include gated code review processes, code showcase sessions, and pair programming.

- Functionality

-

Does the code do what it should? Ultimately, functionality is tested by deploying the applications onto the infrastructure and checking that they run correctly. Doing this indirectly tests that the infrastructure is correct, but you can often catch issues before deploying applications. An example of this for infrastructure is network routing. Can an HTTPS connection be made from the public internet to the web servers? You may be able to test this by using a subset of the entire infrastructure.

- Security

-

You can test security at a variety of levels, from code scanning to unit testing to integration testing and production monitoring. Some tools are specific to security testing, such as scanners for vulnerabilities and secrets. Writing security tests into standard test suites may also be useful. For example, unit tests can make assertions about open ports, user account handling, or access permissions.

- Compliance

-

Systems may need to comply with laws, regulations, industry standards, contractual obligations, or organizational policies. Ensuring and proving compliance can be time-consuming for infrastructure and operations teams. Automated testing can be enormously useful, both to catch violations quickly and to provide evidence for auditors. As with security, you can automate compliance checks at multiple levels of validation, from the code level to production testing. See “Controls by Workflow” for a broader look at this topic.

- Provenance

-

Code often makes use of libraries, providers, and other externally sourced code, whether open source or commercial. Tools are available that analyze this, sometimes referring to it as a software supply chain. This analysis can involve checking library versions with public databases to see if there are known vulnerabilities. These tools can also check that licensing is compatible with your organization’s policies. And they can make a record of what’s included for inventories and auditing, perhaps as a software bill of materials (SBOM).

- Performance

-

Automated tools can test how quickly specific actions complete. Testing the speed of a network connection from point A to point B can surface issues with the network configuration or the cloud platform if run before you even deploy an application. Finding performance issues on a subset of your system is another example of how you can get faster feedback.

- Scalability

-

Automated tests can prove that scaling works correctly—for example, checking that an auto-scaled cluster adds nodes when it should. Tests can also check whether scaling gives you the outcomes that you expect. For example, perhaps adding nodes to the cluster doesn’t improve capacity, because of a bottleneck somewhere else in the system. Having these tests run frequently means you’ll discover quickly if a change to your infrastructure breaks your scaling.

- Availability

-

Similarly, automated testing can prove that your system would be available in the face of potential outages. Your tests can destroy resources, such as nodes of a cluster, and verify that the cluster automatically replaces them. You can also test that scenarios that aren’t automatically resolved are handled gracefully—for example, showing an error page and avoiding data corruption.

- Operability

-

You can automatically test any other system requirements needed for operations. Teams can test monitoring (by injecting errors and proving that monitoring detects and reports them), logging, and automated maintenance activities.

Each of these types of validations can be applied at more than one level of scope, from server configuration to stack code to the fully integrated system. I discuss this in “Progressive Testing”. But first I’d like to address the things that make infrastructure especially difficult to test.

Challenges with Testing Infrastructure Code

Most of the teams I encounter that work with Infrastructure as Code struggle to implement the same level of automated testing and delivery for their infrastructure code as they would for application code. And many teams without a background in Agile software engineering find it even more difficult.

The premise of Infrastructure as Code is that we can apply software engineering practices, including those for testing, to infrastructure. But infrastructure code and application code differ significantly. We need to adapt some of the techniques and mindsets from application testing to make them practical for infrastructure.

This section presents a few challenges that arise from the differences between infrastructure code and application code.

Tests for Declarative Code Often Have Low Value

As mentioned in “Imperative and Declarative Languages and Tools”, many infrastructure tools use declarative languages rather than imperative languages. Declarative code typically declares the desired state for some infrastructure, such as this code that defines a networking subnet:

subnet:name:private_Aaddress_range:192.168.0.0/16

A test for this would simply restate the code:

assert:subnet("private_A").existsassert:subnet("private_A").address_range is("192.168.0.0/16")

A suite of low-level tests of declarative code can become a bookkeeping exercise. Every time you change the infrastructure code, you change the test to match. What value do these tests provide? Well, testing is about managing risks, so let’s consider what risks the preceding test can uncover:

-

The infrastructure code was never applied.

-

The infrastructure code was applied, but the tool failed to apply it correctly, without returning an error.

-

Someone changed the infrastructure code but forgot to change the test to match.

The first risk may be a real one, but it doesn’t require a test for every single declaration. Assuming you have code that does multiple things on a server, a single test would be enough to reveal that, for whatever reason, the code wasn’t applied.

The second risk boils down to protecting yourself against a bug in the tool you’re using. The tool developers should fix that bug, or your team should switch to a more reliable tool. I’ve seen teams use tests like this when they found a specific bug and wanted to protect themselves against it. Testing for this is OK to cover a known issue, but it is wasteful to blanket your code with detailed tests just in case your tool has a bug.

The last risk is circular logic. Removing the test would remove the risk it addresses and would remove work for the team.

Declarative Tests

The Given, When, Then format is useful for writing tests.5 A declarative test omits the When part, having a format more like “Given a thing, then it has these characteristics.” Tests written like this suggest that the code you’re testing doesn’t create variable outcomes. Declarative tests, like declarative code, have a place in many infrastructure codebases, but be aware that many tools and practices for testing dynamic code may not be appropriate.

In some situations, it’s useful to test declarative code. Two that come to mind are when the declarative code can create different outcomes, and when you combine multiple declarations.

Testing declarative code that varies outcomes

The previous example of declarative code is simple—the values are hardcoded, so the result of applying the code is clear. Variables introduce the possibility of creating different results, which may create risks that make testing more useful. Variables don’t always create variation that needs testing. What if we add simple variables to the earlier example:

subnet:name:${MY_APP}-${MY_ENVIRONMENT}address_range:${SUBNET_IP_RANGE}

This code doesn’t have much risk that isn’t already managed by the tool that applies it. If someone sets the variables to invalid values, the tool should fail with an error.

The code becomes riskier when more outcomes are possible. Let’s add some conditional code to the example:

subnet:name:${MY_APP}-${MY_ENVIRONMENT}address_range:get_networking_subrange(get_vpc(${MY_ENVIRONMENT}),data_centers.howmany,data_centers.howmany++)

This code has logic that might be worth testing. It calls two functions, get_networking_subrange() and get_vpc(), either of which might fail or return a result that interacts in unexpected ways with the other function.

The outcome of applying this code varies based on inputs and context, which makes writing tests worthwhile.

Testing Declarative Code Mixed with Procedural Code

Imagine that instead of calling these functions, you wrote the code to select a subset of the address range as a part of this declaration for your subnet. This is an example of mixing declarative and imperative code (as discussed in “Imperative and Declarative Languages and Tools”). The tests for the subnet code would need to include various edge cases of the imperative code—for example, what happens if the parent range is smaller than the range needed?

If your declarative code is complex enough that it needs complex testing, it is a sign that you should pull some of the logic out of your declarations and into a library written in a procedural language. You can then write clean, separate tests for that function and simplify the testing for the subnet declaration.

Testing combinations of declarative code

Testing declarative code is also valuable when you have multiple declarations for infrastructure that are combined into more-complicated structures. For example, you may have code that defines multiple networking structures—subnets, a load balancer, routing rules, and a gateway, for instance. Each piece of code would probably be simple enough that tests would be unnecessary. But the combination of these produces an outcome that is worth testing—that someone can make a network connection from point A to point B.

Testing that the tool created the elements declared in code is usually less valuable than testing that they enable the outcomes you want.

Unit Testing Code Generation

Many tools that support writing procedural or object-oriented code for infrastructure act as code generators. For example, AWS CDK uses the code you write in JavaScript or another language to generate CloudFormation templates, which are declarative JSON code. One of the benefits of writing infrastructure code in these languages is great support for writing unit tests.

A pitfall with this, however, is the illusion that your unit tests validate the infrastructure built using the code. In reality, they can validate only how the code generates the intermediate code, a few steps removed from the outcomes enabled by the infrastructure (e.g., “can a user connect from the public internet to the application running on the infrastructure?”). This is another example of the gap between infrastructure code and reality that was described in “Infrastructure Code Processing”.

This isn’t to say that unit testing infrastructure code written in programming languages isn’t valuable—only that it’s important to be conscious of what your tests are actually proving. Some validations may require applying the code and checking the resulting infrastructure resources and their behavior. This is part of the progressive testing strategies discussed later in this chapter.

Testing Infrastructure Code Is Slow

To test the results of infrastructure code, you need to apply it to relevant infrastructure instances. Applying the code on your IaaS platform is often slow and can be costly. The length of time needed to get feedback from these tests is a common reason that teams struggle to implement automated infrastructure testing.

The solution is usually a combination of strategies:

- Divide infrastructure into more tractable pieces

-

Including testability as a factor in designing a system’s structure is useful, as it’s one of the key ways to make the system easy to maintain, extend, and evolve. Making pieces smaller is one tactic, as smaller pieces are usually faster to provision and test. It’s easier to write and maintain tests for smaller, more loosely coupled pieces since they are simpler and have less surface area of risk. Chapter 5 discusses this topic in more depth.

- Clarify, minimize, and isolate dependencies

-

Each element of your system may have dependencies on other parts of your system, on platform services, and on services and systems that are external to your team, department, or organization. These impact testing, especially if you need to rely on someone else to provide instances to support your test. External dependencies may be slow, expensive, unreliable, or have inconsistent test data, especially if other users share them. Test doubles are a useful way to isolate a component so that you can test it quickly. You may use test doubles as part of a progressive testing strategy—first testing your component with test doubles, and later testing it integrated with other components and services. See “Use local emulators and test doubles” in this list for more about test doubles.

- Progressive testing

-

You’ll usually have multiple test suites to test different aspects of the system. You can run faster tests first, to get quicker feedback if they fail, and run slower, broader-scoped tests only after the faster tests have passed. For example, run static code analysis and syntax checkers on code before you apply it to provision infrastructure resources for other types of tests. I’ll delve into progressive testing strategies later in this chapter.

- Use local emulators and test doubles

-

You may use a local IaaS emulator as a test double to run some tests. As we’ll discuss in Chapter 18, testing with an emulator has limitations over testing with the real IaaS platform, but it can give faster feedback for some types of tests.

- Test offline where feasible and useful

-

Some types of tests run online, requiring you to provision infrastructure on the IaaS platform. Others can run offline, on your laptop or a build agent. Consider the nature of your various tests and consider which ones can run where. Offline tests usually run much faster. You can use test doubles to support offline testing.

- Use persistent IaaS instances

-

For online tests, you may create and destroy an instance of the infrastructure each time you test it (an ephemeral instance), or you may leave an instance running in between runs (persistent instances). Using ephemeral instances can make tests significantly slower, but it’s cleaner and gives more consistent results. Keeping persistent instances cuts the time needed to run tests, but may leave changes and accumulate inconsistencies over time, as well as run up hosting costs.6 Choose the appropriate strategy for a given set of tests and revisit the decision based on how well it’s working. I’ll provide more-concrete examples of implementing ephemeral and persistent instances in Chapter 18.

With any of these strategies, you should regularly assess how well they are working. If tests are unreliable, either failing to run correctly or returning inconsistent results, you should drill into the reasons and either fix them or replace them with something else. If tests rarely fail, or if the same tests almost always fail together, you may be able to strip them out to simplify your test suite. If you spend more time finding and fixing problems that originate in your tests rather than in the code you’re testing, look for ways to simplify and improve the tests.

Dependencies Complicate Testing Infrastructure

The time needed to set up other infrastructure that your code depends on makes testing even slower. A useful technique for addressing this is to replace dependencies with test doubles. A test double replaces a dependency needed by a component so you can test it in isolation.

Mocks, fakes, and stubs are types of test doubles. These terms tend to be used in different ways by different people, but I’ve found the definitions used by Gerard Meszaros in xUnit Test Patterns (Addison-Wesley) to be useful.7

In the context of infrastructure, a growing number of tools allow you to mock IaaS platform APIs or even emulate their behavior. I’ll share examples of emulators in Chapter 18.

It’s often more useful to use test doubles for other infrastructure components than for the infrastructure platform itself. Chapter 18 gives examples of using test doubles and other test fixtures for testing infrastructure stacks (see “Use Test Fixtures to Handle Dependencies”). Chapter 5 describes breaking infrastructure into smaller pieces and integrating them. Test fixtures are a key tool for keeping components loosely coupled.

Progressive Testing

Most nontrivial systems need multiple suites of tests to validate changes. Different suites may test different aspects, as described earlier in this chapter.

One suite may test a concern offline, such as checking for security vulnerabilities by scanning code syntax. Another suite may run online checks for the same concern (for example, by probing a running instance of an infrastructure stack for security vulnerabilities).

Progressive testing involves running test suites in a sequence. The sequence builds up, starting with simpler tests that run more quickly over a smaller scope of code, and then running more comprehensive tests over a broader set of integrated components and services. Models like the test pyramid and Swiss cheese testing (described next) help you think about how to structure validation activities across your test suites in different testing stages. The progressive sequence of testing becomes the basis for designing delivery pipelines.

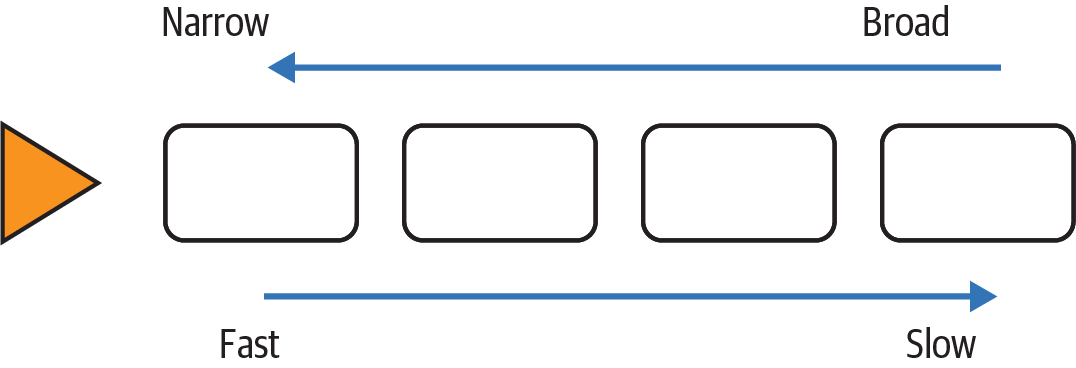

The guiding principle for a progressive feedback strategy is to optimize for fast, accurate feedback. As a rule, this means running faster tests with a narrower scope and fewer dependencies first and then running tests that progressively add more components and integration points (Figure 17-1). This way, small errors are quickly made visible so they can be quickly fixed and retested.

Figure 17-1. Scope versus speed of progressive testing

When a broadly scoped test fails, you have a large surface area of components and dependencies to investigate. You should try to find any potential area at the earliest point, with the smallest scope that you can.

Another goal of a test strategy is to keep the overall test suite manageable. Avoid duplicating tests in different stages. For example, you may test that your application server configuration code sets the correct directory permissions on the log folder. This test would run in an earlier stage that explicitly tests the server configuration, possibly an offline test stage. You should not test directory permissions in a later stage that focuses on the fully provisioned infrastructure stack.

Test Pyramid

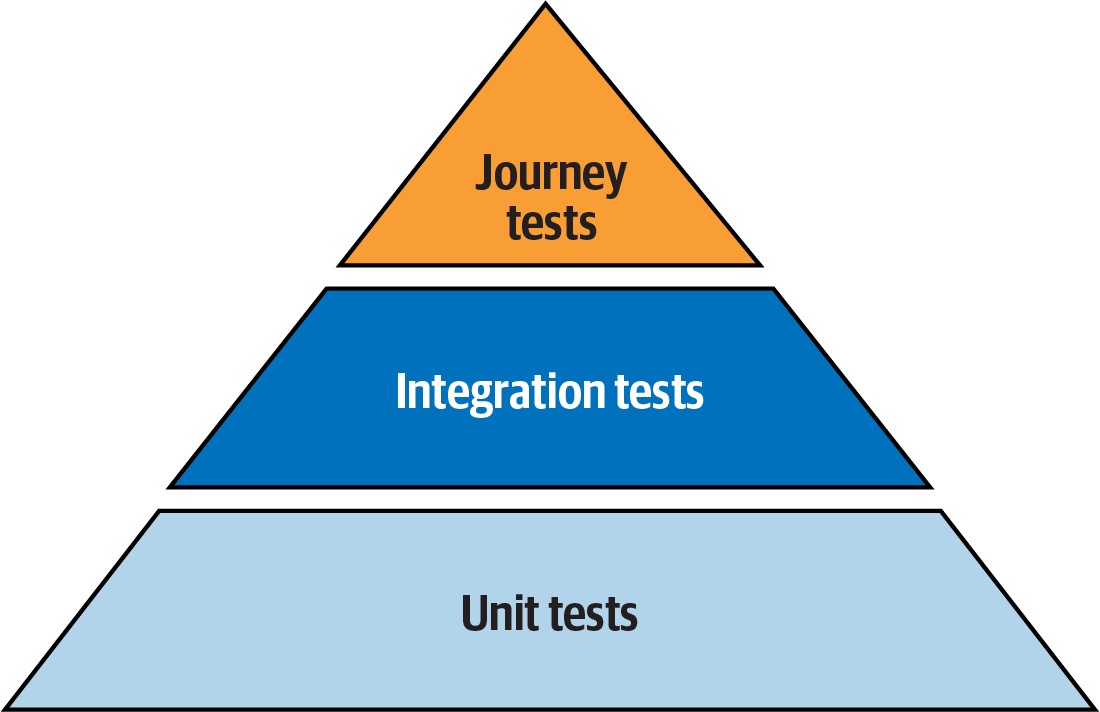

The test pyramid is a well-known model for software testing.8 Its key idea is that you should have more tests at the lower layers, which are the earlier stages in your progression, and fewer tests in the later stages represented higher in the pyramid. See Figure 17-2.

Figure 17-2. The classic test pyramid

The pyramid was devised for application software development. The lower level of the pyramid is composed of unit tests, each of which tests a small piece of code and runs very quickly.9 The middle layer is integration tests, each of which covers a collection of components. The higher stages are journey tests, driven through the UI, which test the application as a whole.

The scope of tests in higher levels of the pyramid includes everything covered in lower levels. This means they can be less comprehensive—they need to test only functionality that emerges from the integration of components, rather than proving the detailed behavior of each lower-level component.

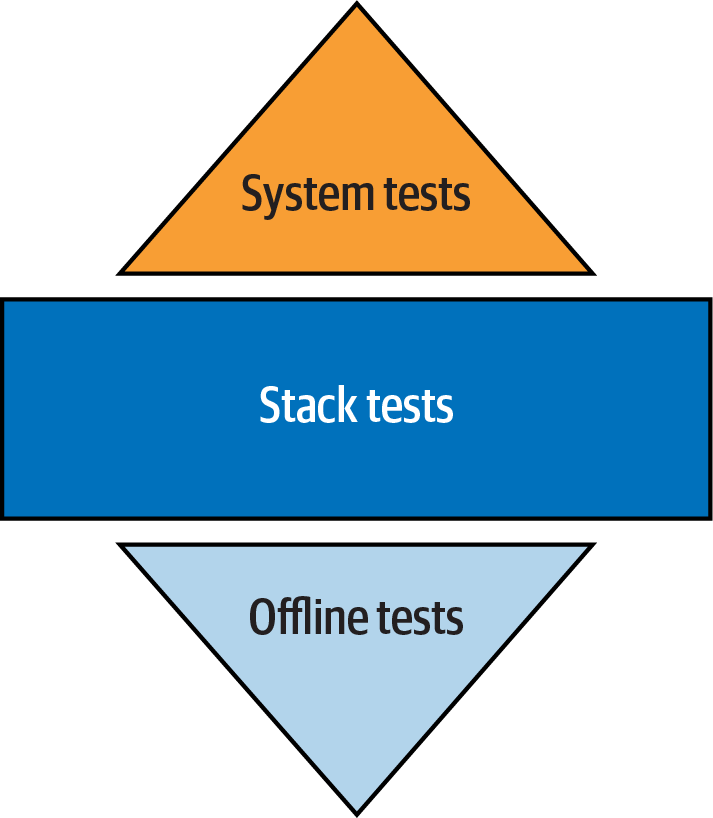

The test pyramid can be less valuable with declarative infrastructure codebases. Most low-level declarative stack code written for tools like Terraform and CloudFormation depends on the IaaS platform. Declarative code modules, such as Terraform modules, are difficult to test in a useful way, both because of the lower value of testing declarative code and because usually not much can be usefully tested without the IaaS platform.

Therefore, although you’ll almost certainly have low-level infrastructure tests, there may not be as many as the pyramid model suggests. An infrastructure test suite for declarative infrastructure may end up looking more like a diamond, as shown in Figure 17-3.

Figure 17-3. The infrastructure test diamond

The pyramid may be more relevant with an infrastructure codebase that makes heavier use of dynamic libraries written in imperative languages. These codebases have more components that produce variable results, so there is more to test at lower levels.

Swiss Cheese Testing Model

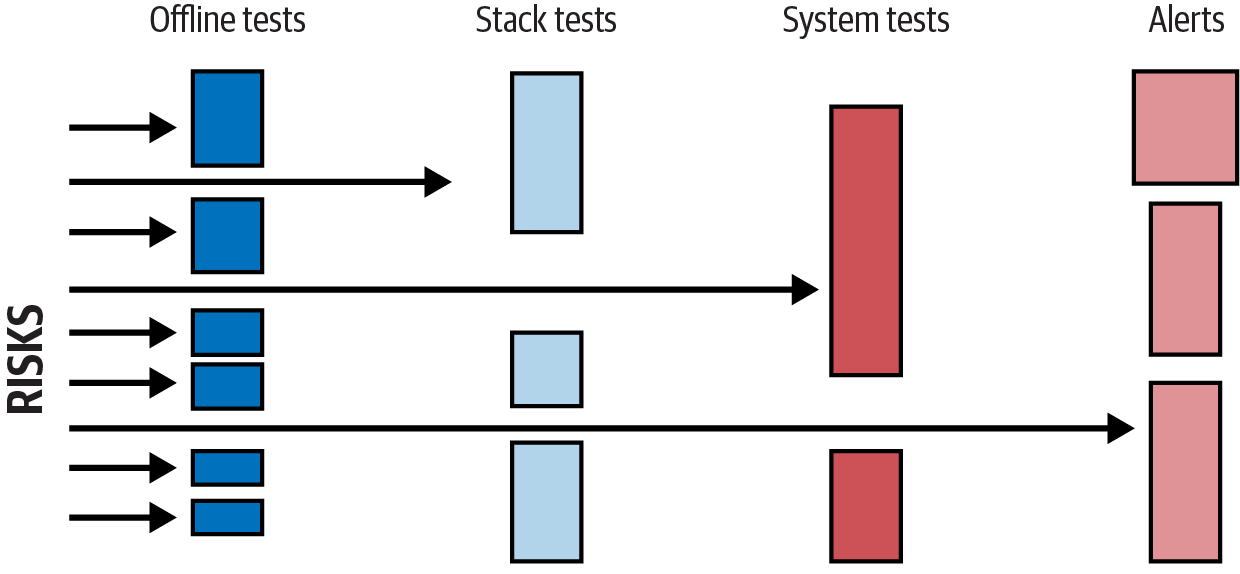

Another way to think about how to organize progressive tests is the Swiss cheese model. This concept comes from risk management outside the software industry. The idea is that a given layer of testing may have gaps, like the holes in a single slice of Swiss cheese, that can miss a defect or risk. But when you combine multiple layers, it looks more like a block of Swiss cheese, where no hole goes all the way through.

The point of using the Swiss cheese model for infrastructure testing is that you focus on where to catch any given risk (see Figure 17-4). You still want to catch issues in the earliest layer where it is feasible to do so, but the important point is that it is tested somewhere in the overall model.

Figure 17-4. Swiss cheese testing model

The key takeaway is to design your test suite based on managing risks rather than fitting a formula.

Testing in Production

Testing releases and changes before applying them to production is a big focus in our industry. At one client, I counted eight groups that needed to review and approve releases, even apart from the various technical teams that had to carry out tasks to install and configure various parts of the system.10

As systems increase in complexity and scale, the scope of risks that you can practically check for outside of production shrinks. This isn’t to say that testing changes before applying them to production has no value. But believing that prerelease testing can comprehensively cover your risks leads to the following:

-

Over-investing in prerelease testing, well past the point of diminishing returns

-

Under-investing in testing in your production environment

Going Deeper on Testing in Production

For more on testing in production, I recommend watching “Yes, I Test in Production (And So Do You)” by Charity Majors, which is a key source of my thinking on this topic.

What You Can’t Replicate Outside Production

Production environments have several characteristics you can’t realistically replicate outside of production:

- Data

-

Your production system may have larger data sets than you can replicate, and will undoubtedly have unexpected data values and combinations, thanks to your users.

- Users

-

Because of their sheer numbers, your users are far more creative at doing strange things than your testing staff.11

- Traffic

-

If your system has a nontrivial level of traffic, you can’t replicate the number and types of activities it will regularly experience. A week-long soak test is trivial compared to a year of running in production. Unexpected side effects of traffic and longevity include logs and other files filling storage capacity.

- Concurrency

-

Testing tools can emulate multiple users using the system at the same time, but they can’t replicate the unusual combinations of things that your users do concurrently.

The two challenges that come from these characteristics are that they create risks that you can’t predict, and they create conditions that you can’t replicate well enough to test anywhere other than production.

By running tests in production, you take advantage of the conditions that exist with large natural data sets and unpredictable concurrent activity.

Why Test Anywhere Other Than Production?

Obviously, testing in production is not a substitute for testing changes before you apply them to production. It helps to be clear on what you realistically can (and should!) test beforehand:

-

Does it work?

-

Does my code run?

-

Does it fail in ways I can predict?

-

Does it fail in the ways it has failed before?

Testing changes before production addresses the known unknowns, the things that you know might go wrong. Testing changes in production addresses the unknown unknowns, the risks that are more difficult to predict.

Managing the Risks of Testing in Production

Testing in production creates new risks. There are a few ways to manage these risks:

- Monitoring

-

Effective monitoring gives confidence that you can detect problems caused by your tests so you can stop them quickly.

- Observability

-

Observability gives you visibility into what’s happening within the system at a level of detail that helps you investigate and fix problems quickly, as well as improving the quality of what you can test.12

- Zero-downtime deployment

-

Being able to deploy and roll back changes quickly and seamlessly helps mitigate the risk of errors.

- Progressive deployment

-

If you can run different versions of components concurrently, or have different configurations for different sets of users, you can test changes in production conditions before rolling out the change to the full user population.

- Data management

-

Your production tests shouldn’t make inappropriate changes to data or expose sensitive data. You can maintain test data records, such as users and credit card numbers, that won’t trigger real-world actions.

- Chaos engineering

-

Lower risk in production environments by deliberately injecting known types of failures to prove that your mitigation systems work correctly (see “Principles of Chaos Engineering” by the chaos engineering community).

Monitoring as Testing

Monitoring can be seen as passive testing in production. It’s not true testing, in that you aren’t taking an action and checking the result. Instead, you’re observing the natural activity of your users and watching for undesirable outcomes. Monitoring should form a part of the testing strategy because it is a part of the mix of actions you take to manage risks to your system.

Conclusion

This chapter has discussed general challenges and approaches for testing infrastructure. I’ve avoided going deeply into the subjects of testing, quality, and risk management. If these aren’t areas you have much experience with, this chapter may give you enough to get started. I encourage you to read more, as testing and QA are fundamental to Infrastructure as Code.

The next chapter goes into deeper detail on testing infrastructure code in automated delivery workflows.

1 See the Mountain Goat Software site for an explanation of Agile stories.

2 The Accelerate research published in the annual State of DevOps Report finds that teams that merge their code at least daily tend to be more effective than those that do so less often. In the most effective teams I’ve seen, developers push their code multiple times a day, sometimes as often as every hour or so.

3 Mob programming, or mobbing, takes pairing to the extreme by having the team work on a code change together, in real time. Few teams will mob program all the time. But it’s an effective way to build and reinforce norms for coding styles and ways of working. I find it useful to have a team mob when it’s first forming and then on a regular basis.

4 My colleague Sarah Taraporewalla coined the term CFR to emphasize that people should not consider these requirements to be separate from development work, but applicable to all the work.

5 See Perryn Fowler’s post for an explanation of writing Given, When, Then tests.

6 On the other hand, keeping an instance running over a long period may expose issues like memory leaks that will otherwise appear only in production. However, if long-term resource management is a concern, it’s probably better to test this with intentional soak testing.

7 Martin Fowler’s bliki entry “Mocks Aren’t Stubs” is another useful reference for test doubles.

8 “The Practical Test Pyramid” by Ham Vocke is a thorough reference.

9 See the ExtremeProgramming.org definition of unit tests. Martin Fowler’s bliki definition of unit test discusses a few ways of thinking of unit tests.

10 These groups were change management, information security, risk management, service management, transition management, system integration testing, user acceptance, and the technical governance board.

11 A popular joke goes, “A software tester walks into a bar. Orders a beer. Orders 0 beers. Orders 999999999 beers. Orders a bear. Orders -1 beers. Orders hdtseatfibkd. The first real customer walks into a bar and asks where the bathroom is. The bar bursts into flames, killing everyone inside.”

12 Although it’s often conflated with monitoring, observability is about giving people ways to understand what’s going on inside your system. See Honeycomb’s “Introduction to Observability”.