Chapter 2. Principles of Cloud Infrastructure

The preceding chapter gave context for the use of Infrastructure as Code, including how it can support organizational strategy, and the importance of creating systems that are easy to improve and evolve. This chapter builds on this context, outlining high-level principles for using cloud Infrastructure as Code effectively.

Computing resources in the Iron Age of IT were tightly coupled to physical hardware. We assembled CPUs, memory, and hard drives in a server, mounted the server into a rack, and cabled it to switches and routers. We installed and configured an operating system (OS) and application software. We could describe an application server’s location in the data center: which floor, which row, which rack, which slot.

The cloud decouples computing resources from the physical hardware they run on. The hardware still exists, of course. But servers, hard drives, and routers have transformed into virtual constructs that we create, duplicate, change, move, and destroy at will.

The cloud native approach takes this decoupling further, moving away from modeling resources based on hardware concepts like servers, hard drives, and firewalls. Instead, infrastructure is defined around concepts driven by application architecture. Containers strip down the concept of a virtual server to only those things that are specific to an application process. Serverless removes even that, providing the bare minimum that an application needs from its environment to run. A service mesh can abstract various aspects of interaction and integration among application processes, including routing, authentication, and service discovery.

We can no longer rely on the physical attributes of our infrastructure to be constant. We must be able to add and remove instances of our system and its components without ceremony, and we need to be able to easily maintain the consistency and quality of our systems even as we rapidly expand and contract their scale.

The principles and pitfalls in this chapter address how to design and deliver Infrastructure as Code in the Cloud Age, and guide the more specific practices and techniques explained in this book.

Assume Systems Are Unreliable

In the Iron Age, we assumed our systems were running on reliable hardware. In the Cloud Age, we need to assume our system runs on unreliable hardware.1

Cloud-scale infrastructure involves hundreds of thousands of devices, if not more. At this scale, failures happen even when using reliable hardware—and some cloud vendors use cheap, less reliable hardware, detecting and replacing it when it breaks.

We need to patch and upgrade system software, and to resize, reallocate, and redistribute resources. The more systems we have and the more resources we use, the more often we do this work. With static infrastructure, this means taking systems offline. But in many modern organizations, taking systems offline means taking the business offline.

We can’t treat the infrastructure our system runs on as a stable foundation. Instead, we must design for uninterrupted service when underlying resources change.2

Make Everything Reproducible

One way to make a system resilient is to make sure we can rebuild its parts effortlessly and reliably. Effortlessly means that there is no need to make any decisions about how to rebuild parts of the system. Define elements such as configuration settings, software versions, and dependencies as code. Rebuilding is then a simple yes/no decision.

It should be possible not only to rebuild any part of the system but also to rebuild the system as it was at a previous point in time. The most common example is rolling back a change that turns out to be problematic.
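As a rough illustration of this idea, the sketch below treats a server definition as plain data and rebuilding as a function of that data alone. The `ServerSpec` fields, the `build` function, and the `history` list are hypothetical stand-ins (for a real definition format, a provisioning tool, and version control history); none of them come from a specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerSpec:
    """Everything needed to rebuild a server, captured as data (hypothetical fields)."""
    os_image: str
    packages: tuple[tuple[str, str], ...]  # (name, pinned version)
    settings: tuple[tuple[str, str], ...]  # (key, value)


def build(spec: ServerSpec) -> dict:
    """Stand-in for provisioning: the result depends only on the spec."""
    return {
        "os_image": spec.os_image,
        "packages": dict(spec.packages),
        "settings": dict(spec.settings),
    }


# Each entry is one version of the definition, as it might appear in
# version control history (oldest first). The values are made up.
history = [
    ServerSpec("linux-base-1", (("webserver", "1.0"),), (("workers", "4"),)),
    ServerSpec("linux-base-1", (("webserver", "1.1"),), (("workers", "8"),)),
]

current = build(history[-1])     # build from the latest definition
rebuilt = build(history[-1])     # rebuilding needs no new decisions
rolled_back = build(history[0])  # rebuild the system as it was earlier
```

Because `build` takes nothing but the spec, rebuilding and rolling back are the same operation applied to different versions of the definition.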

Reproducibility not only makes it easy to recover a failed system but also helps to do the following:

- Make test environments consistent with production
- Replicate systems across regions for availability
- Add instances on demand to cope with high load
- Replicate systems to give each customer a dedicated instance

Of course, a running system generates data, content, and logs, which can’t be defined ahead of time. We need to identify these and find ways to keep them as a part of our replication strategy. Doing this might be as simple as automatically copying or streaming data to a backup and then restoring it when rebuilding. Chapter 20 describes options for doing this.

The ability to effortlessly build, rebuild, and roll back any part of the infrastructure is powerful. It reduces the risk and fear of making changes, so we can handle failures with confidence and can rapidly provision new services and environments.

Avoid Snowflake Systems

A snowflake is an instance of a system or part of a system that is difficult to rebuild. It may also be an environment that should be similar to other environments—such as a staging environment—but is different in ways that its team doesn’t fully understand.

People don’t set out to build snowflake systems; they are a natural occurrence. The first time we build something with a new tool, we learn lessons along the way, which involves making mistakes. But if people are relying on the thing we’ve built, we may not have time to go back and rebuild or improve it using what we’ve learned. Improving what we’ve built is especially hard if we don’t have the mechanisms and practices that make it easy and safe to change.

Another cause of snowflakes is people making changes to one instance of a system that they don’t make to others. They may be under pressure to fix a problem that appears in only one system, or they may start a major upgrade in a test environment but run out of time to roll it out to others.

We know a system is a snowflake when we’re not confident we can safely change or upgrade it. Worse, if the system does break, fixing it is hard. People then avoid making changes to the system, leaving it out of date, unpatched, and maybe even partly broken.

Snowflake systems create risk and waste the time of the teams that manage them. Replacing them with reproducible systems is almost always worth the effort. If a snowflake system isn’t worth improving, it may not be worth keeping at all.

The best way to replace a snowflake system is to write code that can replicate the system, running the new system in parallel until it’s ready. Use automated tests and pipelines to prove that the new implementation is correct and reproducible and that you can change it easily.

Note that it’s possible to create snowflake systems using infrastructure code, as explained in “Snowflakes as Code”.

Create Disposable Things

Building a system that can cope with dynamic infrastructure is one level. The next level is building a system that is itself dynamic. We should be able to gracefully add, remove, start, stop, change, and move the parts of our system. Doing this creates operational flexibility, availability, and scalability. It also simplifies and de-risks changes.

“Treat your servers like cattle, not pets,” is a popular expression about disposability.3 I miss giving fun names to each new server I create. But I don’t miss having to tweak and coddle every server in our estate by hand.

Tools and services that work with a dynamic system need to be able to cope gracefully with parts of the system appearing and disappearing. For example, some older monitoring tools raise an alert every time a virtual server is replaced. At the same time, it’s important to be alerted when something gets into a loop rebuilding itself.
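One way to reconcile these two needs is to treat a single replacement as normal and alert only when replacements pile up in a short period. The following is a minimal sketch under that assumption; the `RebuildLoopDetector` class, its thresholds, and the component name are illustrative and not taken from any monitoring product.

```python
from collections import deque


class RebuildLoopDetector:
    """Flags a component that is replaced too often within a time window."""

    def __init__(self, max_rebuilds: int, window_seconds: float) -> None:
        self.max_rebuilds = max_rebuilds
        self.window_seconds = window_seconds
        self._events: dict[str, deque] = {}

    def record_rebuild(self, component: str, timestamp: float) -> bool:
        """Record one replacement; return True if it looks like a rebuild loop."""
        events = self._events.setdefault(component, deque())
        events.append(timestamp)
        # Drop replacements that fall outside the sliding window.
        while events and timestamp - events[0] > self.window_seconds:
            events.popleft()
        return len(events) > self.max_rebuilds


# Four replacements within ten minutes: only the fourth raises an alert.
detector = RebuildLoopDetector(max_rebuilds=3, window_seconds=600)
burst_alerts = [detector.record_rebuild("web-server", t) for t in (0, 100, 200, 300)]

# One replacement per hour is routine and never raises an alert.
calm = RebuildLoopDetector(max_rebuilds=3, window_seconds=600)
calm_alerts = [calm.record_rebuild("web-server", t) for t in (0, 3600, 7200, 10800)]
```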

Minimize Variation

As a system grows, it becomes harder to understand, harder to change, and harder to fix. The work involved grows with the number of pieces as well as with the number of different types of pieces. So a useful way to keep a system manageable is to have fewer types of pieces—to keep variation low. It’s easier to manage one hundred identical servers than five completely different servers.

Any change made to one element of the system must be applied to all other instances of the same element to avoid configuration drift.

Here are some variations that may exist in a system:

- Multiple operating systems, Kubernetes distributions, databases, and other technologies. Each one of these needs people on the team to keep up skills and knowledge.
- Multiple versions of software such as a container cluster or database. Different versions may need different configurations and tooling, or have slightly different behavior.
- Different versions of a package. When some systems have a newer version of a package, utility, or library than others, risk is created. Commands may not run consistently across them, or older versions may have vulnerabilities or bugs.
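Version variation of the kind listed above can be made visible with a simple inventory check. The sketch below assumes an inventory that maps each server to its installed package versions; the server names, package names, and version numbers are invented for illustration.

```python
from collections import defaultdict


def find_version_variation(
    inventory: dict[str, dict[str, str]],
) -> dict[str, dict[str, list[str]]]:
    """Return packages that run at more than one version, grouped by version."""
    by_package = defaultdict(lambda: defaultdict(list))
    for server, packages in inventory.items():
        for package, version in packages.items():
            by_package[package][version].append(server)
    return {
        package: {version: sorted(servers) for version, servers in versions.items()}
        for package, versions in by_package.items()
        if len(versions) > 1
    }


# Hypothetical inventory: one server lags behind on one package.
inventory = {
    "app-1": {"tls-library": "3.2", "web-server": "1.4"},
    "app-2": {"tls-library": "3.2", "web-server": "1.4"},
    "app-3": {"tls-library": "3.1", "web-server": "1.4"},
}
variation = find_version_variation(inventory)
```

Here only `tls-library` is reported, along with which servers run each version, so the team can see where an upgrade was not rolled out.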

Tensions arise as organizations weigh allowing each team to choose technologies and solutions appropriate to their needs against keeping the amount of variation in the organization to a manageable level.

It’s important to be aware of the difference between necessary variation and unintended variation. For example, the FoodSpin team has several services that use databases for storage. The requirements for several of these services are similar, storing customer and product data for use during customer visits. FoodSpin also has a data analytics service that is used to run reports and conduct research.

Using different database products for the customer and product services is unnecessary variation, because a single product should be perfectly fine for both services. However, the data analytics service has different requirements that are better met with a specialist database product.

A specific and common type of unintended variation is configuration drift. This variation happens over time across systems that are meant to be consistent.4 Making changes manually is a common cause of inconsistencies. They can also happen if you use automation tools to make ad hoc changes to only some instances, or if you create separate branches or copies of the infrastructure code for different instances. Configuration drift makes it harder to maintain consistent automation.

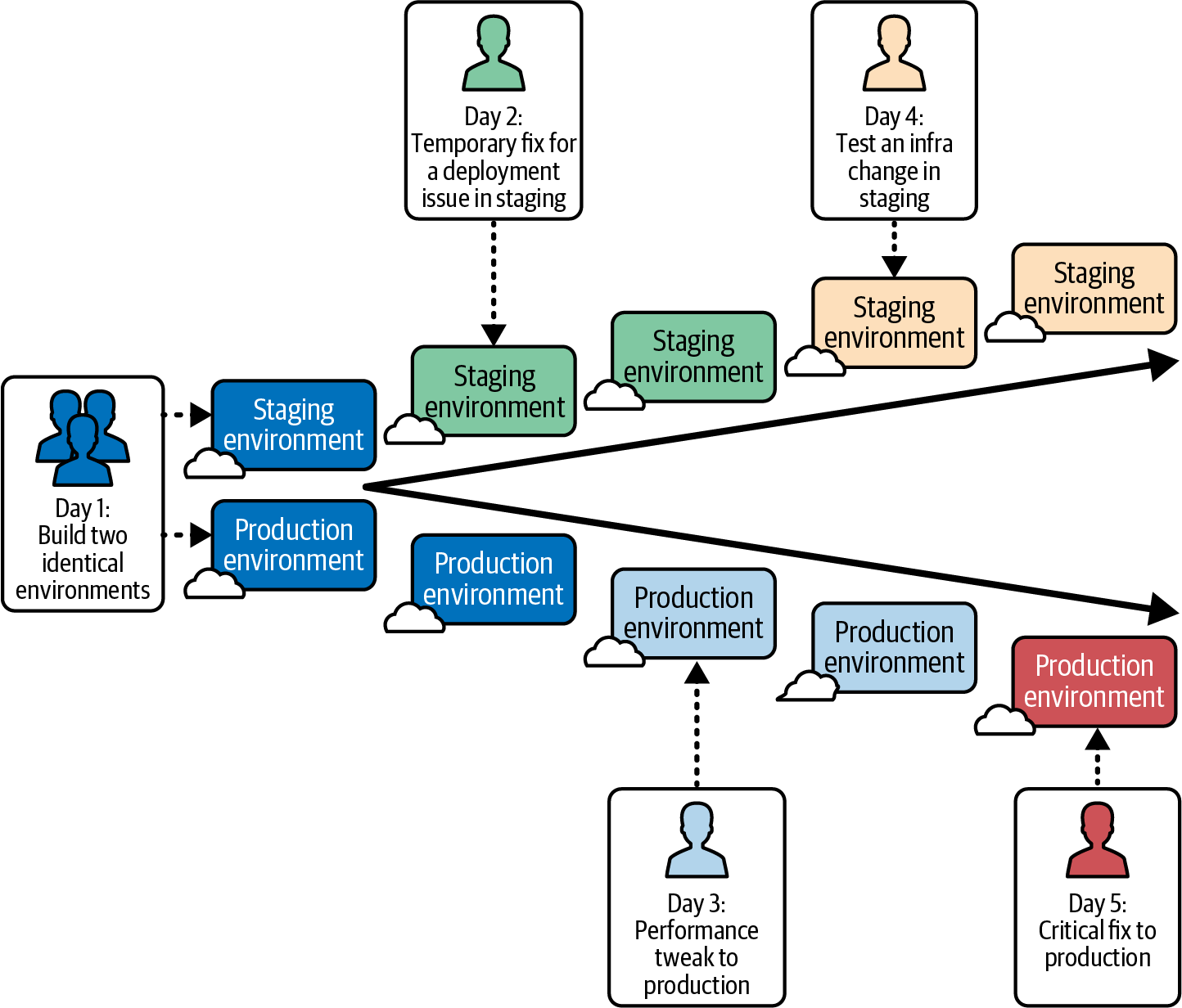

Figure 2-1 shows an example of configuration drift between the staging and production environments for the example company FoodSpin (as introduced in “Introduction to FoodSpin and Its Strategy”).

Figure 2-1. Configuration drift occurs when instances of the same thing become different over time

On day 1, the FoodSpin team provisions identical staging and production environments. On day 2, the team has an issue deploying a new software release to the staging environment, so engineers make several changes to the infrastructure as a workaround. The developers fix the issue with the application. The team is too busy to revert the workaround in staging, which in any case doesn’t seem to be a problem.

On day 3, users report that the system is sluggish, which the infrastructure team addresses with some tuning. On day 4, one of the engineers starts testing out better ways to tune the infrastructure in the staging environment, but other work becomes a priority, so they leave the changes incomplete.

On day 5, a production outage occurs, which the team swarms to fix right away. The outage is critical, so there is no time to test the infrastructure changes in staging.

Production and staging gradually become very different from each other. Both work fine, so nobody thinks the differences to the infrastructure matter. However, the differences show up in ways that the FoodSpin team becomes used to and takes for granted. For example, deploying a new build of the FoodSpin software takes manual work to configure it to match the environment, so the deployment process is only partly automated.
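Differences like these are easier to act on when they are listed explicitly instead of being discovered during a deployment. The sketch below compares the settings of two environments that are meant to match. The setting names and values are hypothetical; the story above does not say what the FoodSpin team actually changed.

```python
ABSENT = "<absent>"  # marker for a setting that exists in only one environment


def find_drift(first: dict, second: dict) -> dict:
    """Return {setting: (value_in_first, value_in_second)} for every difference."""
    drift = {}
    for key in sorted(first.keys() | second.keys()):
        left = first.get(key, ABSENT)
        right = second.get(key, ABSENT)
        if left != right:
            drift[key] = (left, right)
    return drift


# Hypothetical state of the two environments after several days of ad hoc changes.
staging = {"instance_size": "medium", "connection_pool": 20, "deploy_workaround": True}
production = {"instance_size": "large", "connection_pool": 20}

drift = find_drift(staging, production)
```

In this example the report contains `instance_size` and `deploy_workaround`, while the unchanged `connection_pool` setting is omitted.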

Drift detection and resolution (see Chapter 19) involves automatically and continually applying configuration to systems. In itself, this doesn’t prevent configuration drift among multiple instances of a system. However, the “hands-off” mechanism of keeping the system and code synchronized helps avoid a type of automation fear that comes from people making changes to a system outside of code.
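The core of continually applying configuration can be sketched as a reconcile step that is safe to run over and over. This is a simplified illustration using in-memory dictionaries; the `reconcile` function and the setting names are assumptions, and a real tool would call the platform's API where this sketch mutates a dictionary.

```python
def reconcile(desired: dict, actual: dict) -> list[str]:
    """Make `actual` match `desired` in place and return a log of the changes."""
    changes = []
    for key, value in desired.items():
        if key not in actual or actual[key] != value:
            changes.append(f"set {key}={value!r}")
            actual[key] = value
    # Remove anything that was added outside of the code.
    for key in sorted(set(actual) - set(desired)):
        changes.append(f"remove {key}")
        del actual[key]
    return changes


# Hypothetical definition from code, and a system someone changed by hand.
desired = {"instance_size": "large", "connection_pool": 20}
actual = {"instance_size": "medium", "connection_pool": 20, "manual_tweak": "on"}

first_run = reconcile(desired, actual)   # corrects the manual changes
second_run = reconcile(desired, actual)  # nothing left to do
```

The second run returns an empty list, which is the property that makes it safe to schedule the step continually: applying it to a system that already matches the code changes nothing.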

Ensure That Any Procedure Can Be Repeated

Building on the reproducibility principle, you should be able to easily and reliably repeat anything you do to your infrastructure. Scripts and configuration management tools are more effective at this than manual steps. But automating a task can take a lot of work, especially if you’re not used to it.

For example, let’s say I have to partition a hard drive as a one-off task. Writing and testing a script is much more work than just logging in and running the fdisk command. So I do it by hand.

The problem comes later, when someone else on my team, Priya, needs to partition another disk. She comes to the same conclusion I did and does the work by hand rather than writing a script. However, she makes slightly different decisions about how to partition the disk. I made an 80 GB /var ext3 partition on my server, but Priya made /var a 100 GB XFS partition on hers. We’re creating configuration drift, which will erode our ability to automate with confidence.

Effective infrastructure teams have a strong scripting culture. If you can script a task, then script it.5 If scripting it is hard, dig deeper. Maybe a technique or tool can help, or maybe you can simplify the task or handle it differently. Breaking work into scriptable tasks usually makes it simpler, cleaner, and more reliable.
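For the disk partitioning example, even a small script moves the decision about size and filesystem out of each person's head and into one shared place. The sketch below only produces a plan as text and does not touch a disk; the `Partition` type, the `plan_partition` function, and the device path are hypothetical, and the choice of 80 GB ext3 as the agreed layout is an assumption made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Partition:
    mount_point: str
    size_gb: int
    filesystem: str


# The agreed layout, recorded once instead of being decided again on each server.
VAR_PARTITION = Partition(mount_point="/var", size_gb=80, filesystem="ext3")


def plan_partition(device: str, partition: Partition) -> list[str]:
    """Return the steps as text; a real script would run the partitioning tool here."""
    return [
        f"create {partition.size_gb} GB partition on {device}",
        f"format as {partition.filesystem}",
        f"mount at {partition.mount_point}",
    ]


# Two people running the script get the same plan for the same kind of disk.
my_plan = plan_partition("/dev/sdb", VAR_PARTITION)
priya_plan = plan_partition("/dev/sdb", VAR_PARTITION)
```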

Apply Software Design Principles to Infrastructure Code

Chapter 1 defined Infrastructure as Code as “applying the principles, practices, and tools of software engineering to infrastructure.” There are tricky differences between infrastructure and software code, touched on in Chapter 4, so this principle needs to be treated with care. However, many software design and engineering concepts are useful for infrastructure.

Conclusion

The principles described in this chapter embody differences from the way we traditionally worked with static infrastructure. These principles are important for making the most of the dynamic nature of cloud platforms. It isn’t enough to move workloads onto a cloud platform, even using Infrastructure as Code tools. Organizations need to adopt the mindset of treating infrastructure as dynamic, disposable, easily recreatable elements if they want to see the benefits of modern hosting technologies.

The next two chapters present the context for using the principles from this chapter. Chapter 3 introduces a dynamic infrastructure platform that can be managed using automation, and Chapter 4 discusses Infrastructure as Code tools.

1 I first encountered this idea in Sam Johnston’s article, “Simplifying Cloud: Reliability”. Werner Vogels, the CTO of Amazon, says, “Everything fails all the time.”

2 The principle of assuming that systems are unreliable drives chaos engineering, which injects failures in controlled circumstances to test and improve the reliability of our services.

3 I first heard this expression in Gavin McCance’s presentation “CERN Data Centre Evolution”. In his presentation “Architectures for Open and Scalable Clouds”, Randy Bias credits Bill Baker with the expression. Both presentations are an excellent introduction to these principles.

4 Two definitions have emerged for the term configuration drift with infrastructure and environments. The original definition is the one used in this chapter: unnecessary differences between environments. A newer definition, discussed in Chapter 19, describes differences between infrastructure code and a specific infrastructure instance (see “Drift Detection and Correction”).

5 My colleague Florian Sellmayr says, “If it’s worth documenting, it’s worth automating.”