13 Partitioning

Now it’s time to change gears and dive into another tool you have at your disposal to scale out application — partitioning or sharding.

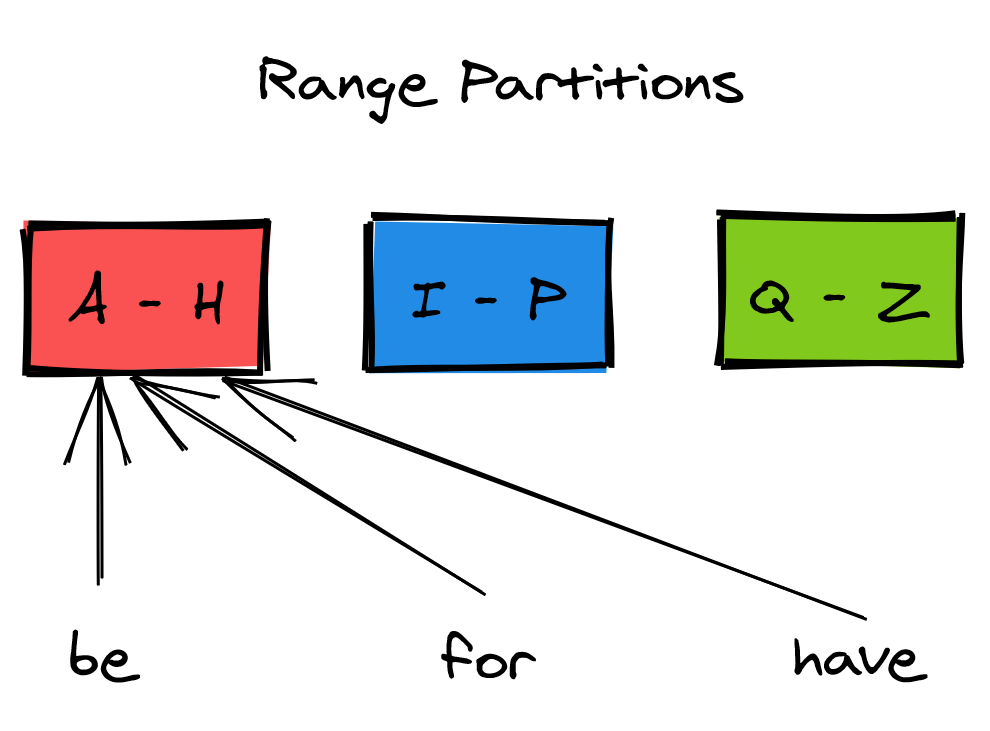

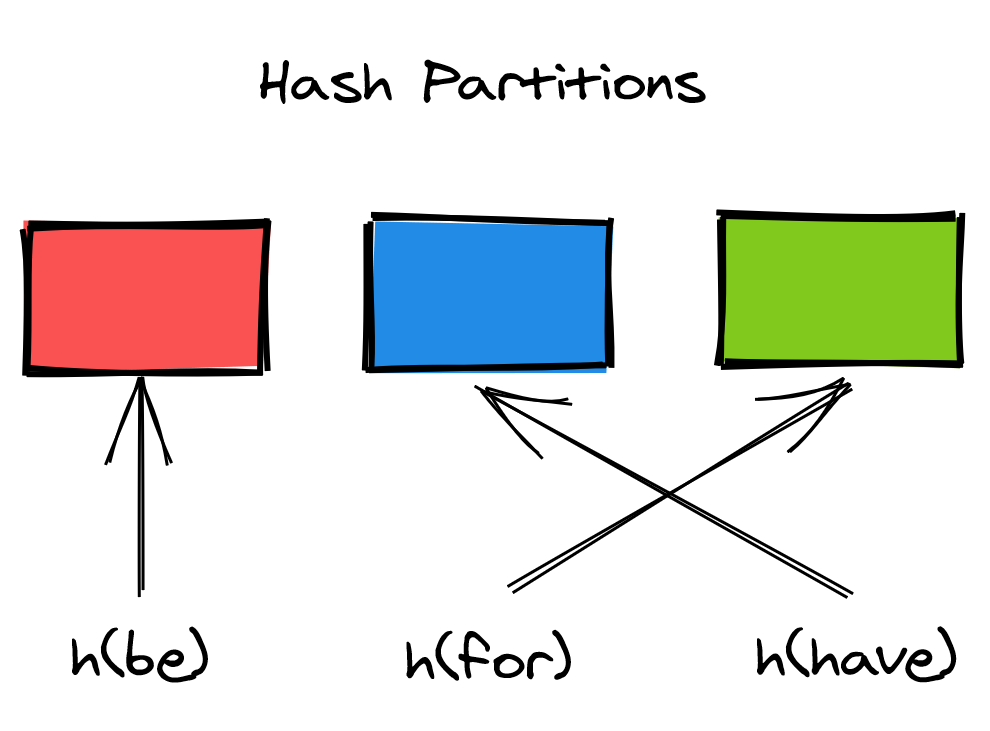

When a dataset no longer fits on a single node, it needs to be partitioned across multiple nodes. Partitioning is a general technique that can be used in a variety of circumstances, like sharding TCP connections across backends in a load balancer. To ground the discussion in this chapter, we will anchor it to the implementation of a sharded key-value store.

13.2 Rebalancing

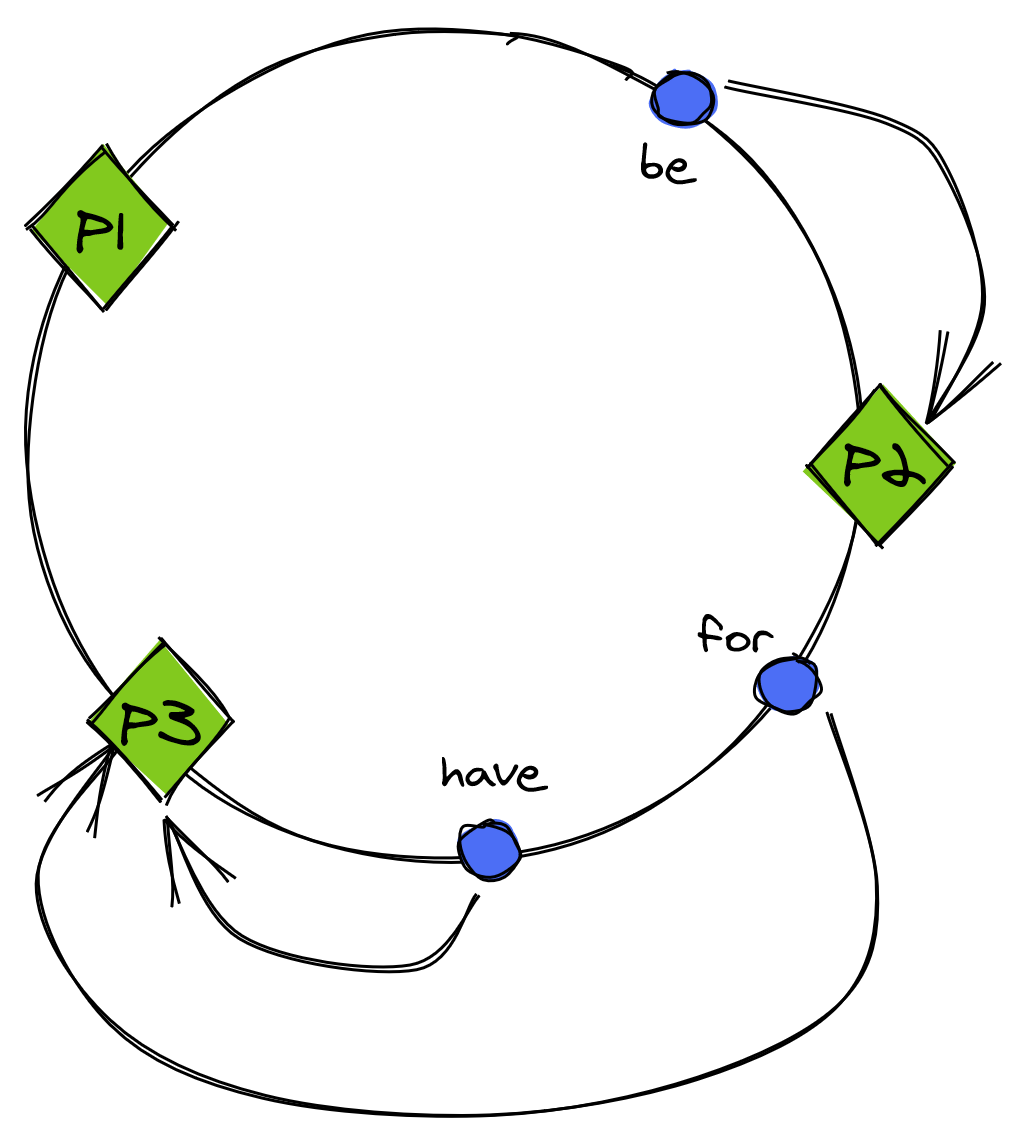

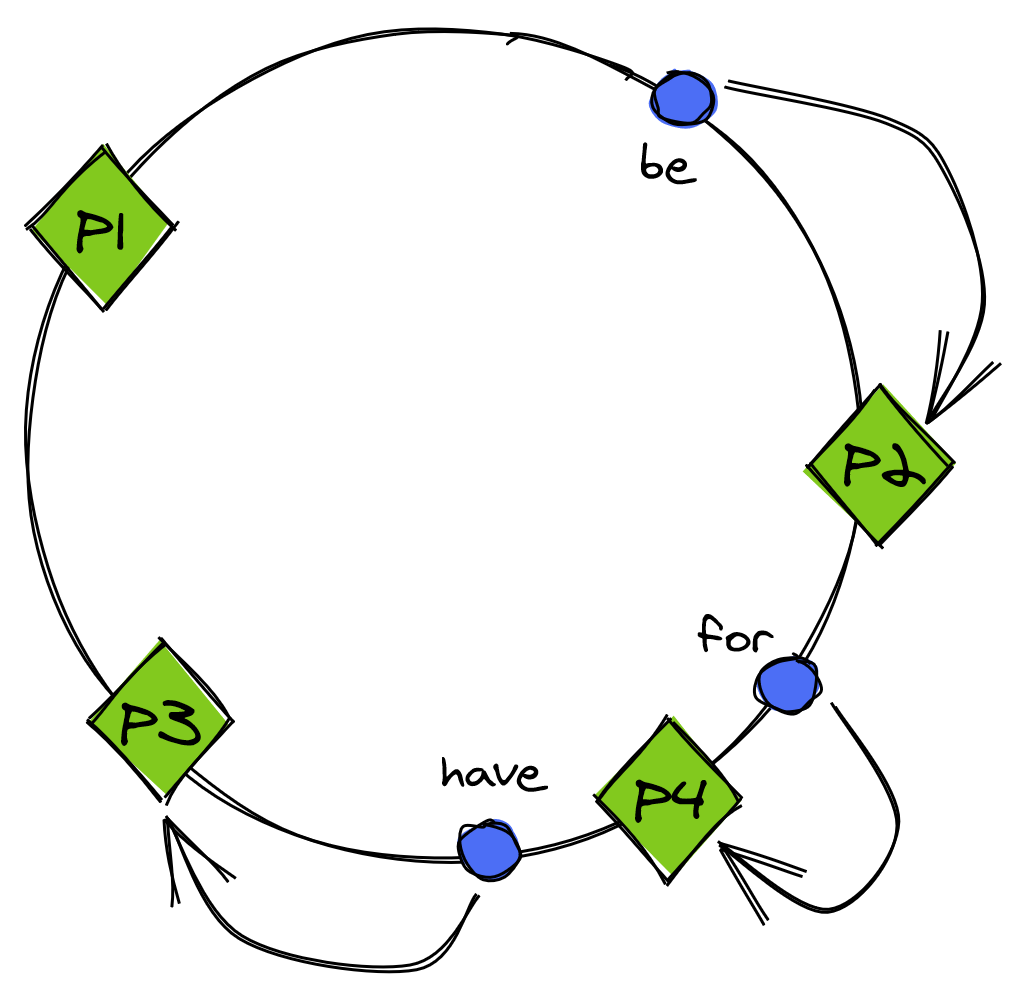

When the number of requests to the data store becomes too large, or the dataset’s size becomes too large, the number of nodes serving partitions needs to be increased. Similarly, if the dataset’s size keeps shrinking, the number of nodes can be decreased to reduce costs. The process of adding and removing nodes to balance the system’s load is called rebalancing.

Rebalancing needs to be implemented in such a way to minimize disruption to the data store, which needs to continue to serve requests. Hence, the amount of data transferred during the rebalancing act needs to be minimized.

13.2.1 Static partitioning

Here, the idea is to create way more partitions than necessary when the data store is first initialized and assign multiple partitions per node. When a new node joins, some partitions move from the existing nodes to the new one so that the store is always in a balanced state.

The drawback of this approach is that the number of partitions is set when the data store is first initialized and can’t be easily changed after that. Getting the number of partitions wrong can be problematic — too many partitions add overhead and decrease the data store’s performance, while too few partitions limit the data store’s scalability.

13.2.2 Dynamic partitioning

An alternative to creating partitions upfront is to create them on-demand. One way to implement dynamic partitioning is to start with a single partition. When it grows above a certain size or becomes too hot, it’s split into two sub-partitions, each containing approximately half of the data. Then, one sub-partition is transferred to a new node. Similarly, if two adjacent partitions become small enough, they can be merged into a single one.

13.2.3 Practical considerations

Introducing partitions in the system adds a fair amount of complexity, even if it appears deceptively simple. Partition imbalance can easily become a headache as a single hot partition can bottleneck the system and limit its ability to scale. And as each partition is independent of the others, transactions are required to update multiple partitions atomically.

We have merely scratched the surface on the topic; if you are interested to learn more about it, I recommend reading Designing Data-Intensive Applications by Martin Kleppmann.