CHAPTER 14

Nutrition 3.0

You Say Potato, I Say “Nutritional Biochemistry”

Religion is a culture of faith; science is a culture of doubt.

—Richard Feynman

I dread going to parties, because when people find out what I really do for a living (not buying my usual lies about being a shepherd or a race car driver), they always want to talk about the topics I dread most: “diet” and “nutrition.”

I will do whatever it takes to get out of that conversation—go and get a drink, even if I’m already holding one, or pretend to answer my phone, or, if all else fails, feign a grand mal seizure. Like politics or religion, it’s just not a fit topic of conversation, in my view. (And if I seemed like kind of a jerk to you at a party once, my apologies.)

Diet and nutrition are so poorly understood by science, so emotionally loaded, and so muddled by lousy information and lazy thinking that it is impossible to speak about them in nuanced terms at a party or, say, on social media. Yet most people these days are conditioned to want bullet-point “listicles,” bumper-sticker slogans, and other forms of superficial analysis. It reminds me of a story about the great physicist (and one of my heroes) Richard Feynman being asked at a party to explain, briefly and simply, why he was awarded his Nobel Prize. He responded that if he could explain his work briefly and simply, it probably would not have merited a Nobel Prize.

Feynman’s rule also applies to nutrition, with one caveat: we actually know far less about this subject than we do about subatomic particles. On the one hand, we have made-for-clickbait epidemiological “studies” that make absurd claims, such as that eating an ounce of tree nuts each day will lower your cancer risk by exactly 18 percent (not making this up). On the other, we have clinical trials that tend almost without exception to be flawed. Thanks to the poor quality of the science, we actually don’t know that much about how what we eat affects our health. That creates a tremendous opportunity for a multitude of would-be nutrition gurus and self-proclaimed experts to insist, loudly, that only they know the true and righteous diet. There are forty thousand diet books on Amazon; they can’t all be right.

Which brings us to my final quibble about the world of nutrition and diets, which is the extreme tribalism that seems to prevail there. Low-fat, vegan, carnivore, Paleo, low-carb, or Atkins—every diet has its zealous warriors who will proclaim the superiority of their way of eating over all others until their dying breath, despite a total lack of conclusive evidence.

Once upon a time, I too was one of those passionate advocates. I spent three years on a ketogenic diet and have written and blogged and spoken extensively about that journey. For better and for worse, I’m indelibly associated with low-carb and ketogenic diets. Giving up added sugar—literally, putting down the Coke that I held in my hand, on September 8, 2009, moments after my lovely wife suggested I “work on being a little less not thin”—was the first step on a long, life-changing, but also frustrating journey through the world of diet and nutrition science. The good news is that it reversed my incipient metabolic syndrome and may have saved my life. It also led to me writing this book. The bad news is that it exhausted my patience for the “diet debate.”

Consider this chapter my penance.

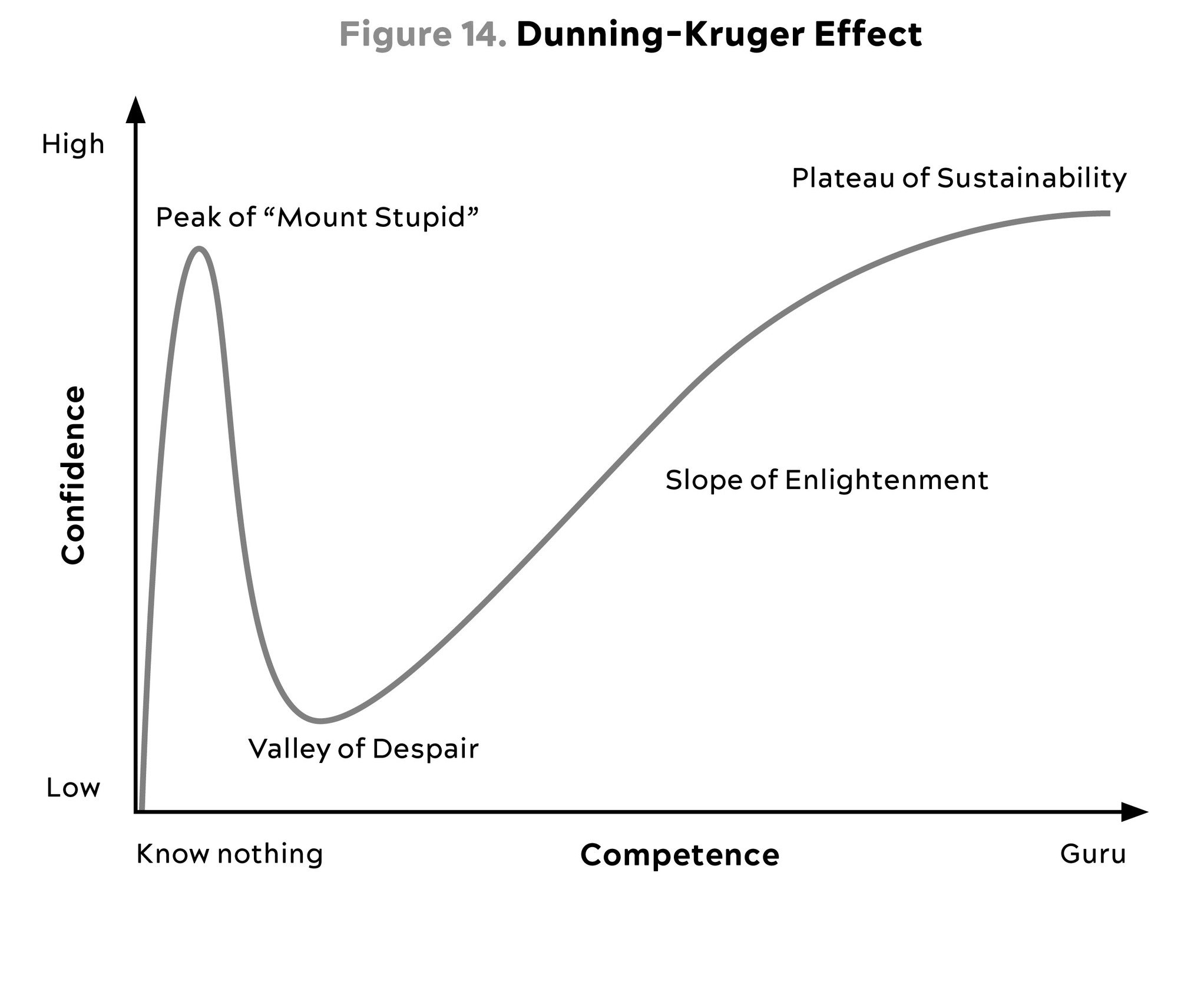

Overall, I think most people spend either too little or too much time thinking about this topic. Probably more on the “too little” side, as evidenced by the epidemic of obesity and metabolic syndrome. But those on the “too much” side are loud and insistent (check out nutrition Twitter). I was completely guilty of this myself, in the past. Looking back, I now realize that I was too far on the left on the Dunning-Kruger curve, caricatured below in figure 14—my maximal confidence and relatively minimal knowledge having propelled me quite close to the summit of “Mount Stupid.”

Source: Wikimedia Commons (2020).

Now I might be halfway up the Slope of Enlightenment on a good day, but one key change I have made is that I am no longer a dogmatic advocate of any particular way of eating, such as a ketogenic diet or any form of fasting. It took me a long time to figure this out, but the fundamental assumption underlying the diet wars, and most nutrition research—that there is one perfect diet that works best for every single person—is absolutely incorrect. More than anything I owe this lesson to my patients, whose struggles have taught me a humility about nutrition that I never could have learned from reading scientific papers alone.

I encourage my patients to avoid using the term diet at all, and if I were a dictator, I might ban it entirely. When you eat a slice of prosciutto or a Rice Krispies square, you are ingesting a multitude of different chemical compounds. Just as their chemical makeup differentiates them in terms of taste, the molecules in the foods that we consume affect multiple enzymes and pathways and mechanisms in our bodies, many of which we have discussed in previous chapters. These food molecules—which are basically nothing more than different arrangements of carbon, nitrogen, oxygen, phosphorus, and hydrogen atoms—also interact with our genes, our metabolism, our microbiome, and our physiologic state. Moreover, each of us will react to these food molecules in different ways.

Instead of diet, we should be talking about nutritional biochemistry. That takes it out of the realm of ideology and religion—and above all, emotion—and places it firmly back into the realm of science. We can think of this new approach as Nutrition 3.0: scientifically rigorous, highly personalized, and (as we’ll see) driven by feedback and data rather than ideology and labels. It’s not about telling you what to eat; it’s about figuring out what works for your body and your goals—and, just as important, what you can stick to.

What problem are we trying to solve here? What is our goal with Nutrition 3.0?

I think it boils down to the simple questions that we posited in chapter 10:

- Are you undernourished, or overnourished?
- Are you undermuscled, or adequately muscled?
- Are you metabolically healthy or not?

The correlation between poor metabolic health and being overnourished and undermuscled is very high. Hence, for a majority of patients the goal is to reduce energy intake while adding lean mass. This means we need to find ways to get them to consume fewer calories while also increasing their protein intake, and to pair this with proper exercise. This is the most common problem we are trying to solve around nutrition.

When my patients are undernourished, it’s typically because they are not taking in enough protein to sustain muscle mass, which as we saw in the previous chapters is a crucial determinant of both lifespan and healthspan. So any dietary intervention that compromises muscle, or lean body mass, is a nonstarter—for both the under- and overnourished groups.

I used to think that diet and nutrition were the one path to perfect health. Years of experience, with myself and my patients, have led me to temper my expectations a bit. Nutritional interventions can be powerful tools with which to restore someone’s metabolic equilibrium and reduce risk of chronic disease. But can they extend and improve lifespan and healthspan, almost magically, the way exercise does? I’m no longer convinced that they can.

I still believe that most people need to address their eating pattern in order to get control of their metabolic health, or at least not make things worse. But I also believe that we need to differentiate between behavior that maintains good health and tactics that correct poor health and disease. Wearing a cast on a broken bone will allow it to heal. Wearing a cast on a perfectly normal arm will cause it to atrophy. While this example is obvious, it’s amazing how many people fail to translate it to nutrition. It seems quite clear that a nutritional intervention aimed at correcting a serious problem (e.g., highly restricted diets, even fasting, to treat obesity, NAFLD, and type 2 diabetes) might be different from a nutritional plan calibrated to maintain good health (e.g., balanced diets in metabolically healthy people).

Nutrition is relatively simple, actually. It boils down to a few basic rules: don’t eat too many calories, or too few; consume sufficient protein and essential fats; obtain the vitamins and minerals you need; and avoid pathogens like E. coli and toxins like mercury or lead. Beyond that, we know relatively little with complete certainty. Read that sentence again, please.

Directionally, a lot of the old cliché expressions are probably right: If your great-grandmother would not recognize it, you’re probably better off not eating it. If you bought it on the perimeter of the grocery store, it’s probably better than if you bought it in the middle of the store. Plants are very good to eat. Animal protein is “safe” to eat. We evolved as omnivores; ergo, most of us can probably find excellent health as omnivores.

Don’t get me wrong, I still have a lot to say—that’s why these chapters on nutrition are not short. There is so much ideological bickering and utter bullshit out there that I hope to inject at least a little bit of clarity into the discussion. But most of this chapter and the next will be aimed at changing the way you think about diet and nutrition, rather than telling you to eat this, not that. My goal here is to give you the tools to help you find the right eating pattern for yourself, one that will make your life better by protecting and preserving your health.

What We Sort of Know About Nutritional Biochemistry (and How We Sort of Know It)

One of my biggest frustrations in the area of nutrition—sorry, nutritional biochemistry—has to do with how little we actually know about it for certain. The problem is rooted in the poor quality of much nutrition research, which leads to bad reporting in the media, lots of arguing on social media, and rampant confusion among the public. What are we supposed to eat (and not eat)? What is the right diet for you?

If all we have to go on is media reporting about the latest big study from Harvard, or the wisdom of some self-appointed diet guru, then we will never escape this state of hopeless confusion. So before we delve into the specifics, it’s worth taking a step back to try to understand what we do and don’t know about nutrition—what kinds of studies might be worth heeding and which ones we can safely ignore. Understanding how to discern signal from noise is an important first step in coming up with our own plan.

Our knowledge of nutrition comes primarily from two types of studies: epidemiology and clinical trials. In epidemiology, researchers gather data on the habits of large groups of people, looking for meaningful associations or correlations with outcomes such as a cancer diagnosis, cardiovascular disease, or mortality. These epidemiological studies generate much of the diet “news” that pops up in our daily internet feed, about whether coffee is good for you and bacon is bad, or vice versa.

Epidemiology has been a useful tool for sleuthing out the causes of epidemics, including (famously) stopping a cholera outbreak in nineteenth-century London, and (less famously) saving boy chimney sweeps from an epidemic of scrotal cancer that turned out to be linked to their employment.[*1] It has propelled some real public health triumphs, such as the advent of smoking bans and widespread treatment of drinking water. But in nutrition it has proved less insightful. Even at face value, the “associations” that nutritional epidemiologists come up with are often absurd: Will eating twelve hazelnuts every day really add two years to my lifespan, as one study suggested?[*2] I wish.

The problem is that epidemiology is incapable of distinguishing between correlation and causation. This, aided and abetted by bad journalism, creates confusion. For example, multiple studies have found a strong association between drinking diet sodas and abdominal fat, hyperinsulinemia, and cardiovascular risk. Sounds like diet soda is bad stuff that causes obesity, right? But that is not what those studies actually demonstrate, because they fail to ask an important question: Who drinks diet soda?

People who are concerned about their weight or their diabetes risk, that’s who. They may drink diet soda because they are heavy, or worried about becoming heavy. The problem is that epidemiology is not equipped to determine the direction of causality between a given behavior (e.g., drinking diet soda) and a particular outcome (e.g., obesity) any more than one of my chickens is able to scramble the egg she has just laid for me.

To understand why, we must consult (again) Sir Austin Bradford Hill, a British scientist whom we met in chapter 11. Hill had helped sleuth out the link between smoking and lung cancer in the early 1950s, and he came up with nine criteria for evaluating the strength of epidemiological findings and determining the likely direction of causality, which we also referenced in regard to exercise.[*3] The most important of these, and the one that can best separate correlation from causation, is the trickiest to implement in nutrition: experiment. Try proposing a study where you would test the effects of a lifetime of eating fast food by randomizing young boys and girls either to Big Macs or a non–fast-food diet. Even if you did somehow receive institutional review board approval for this terrible idea, there are a bunch of different ways in which even a simple experiment can go wrong. Some of the Big Mac kids might secretly go vegetarian, while the controls might decide to frequent the Golden Arches. The point is that humans are terrible study subjects for nutrition (or just about anything else) because we are unruly, disobedient, messy, forgetful, confounding, hungry, and complicated creatures.

This is why we rely on epidemiology, which derives data from observation and often from the subjects themselves. As we saw earlier, the epidemiology around exercise passes the Bradford Hill criteria with flying colors—but using epidemiology to study nutrition often flunks those tests miserably, beginning with the effect size, the power of the association, often expressed as a percentage. While the epidemiology of smoking (like exercise) easily passes the Bradford Hill tests because the effect size is so overwhelming, in nutrition the effect sizes are typically so small that they could easily be the product of other, confounding factors.

Case in point: The claim that eating red meats and processed meats “causes” colorectal cancer. According to a very well-publicized 2017 study from the Harvard School of Public Health and the World Health Organization, eating those kinds of meats raises one’s risk of colon cancer by 17 percent (HR = 1.17). That does sound scary—but does it pass the Bradford Hill tests? I don’t think so, because the association is so weak. For comparison’s sake, someone who smokes cigarettes is at more like 1,000 to 2,500 percent (ten to twenty-five times) increased risk of lung cancer, depending on the population being studied. This suggests that there might actually be some sort of causation at work. Yet very few published epidemiological studies show a risk increase of even 50 percent (HR = 1.50) for any given type of food.
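To put those effect sizes side by side, here is a minimal arithmetic sketch. The 1.17 and 1.50 figures come from the text above; treating "ten to twenty-five times" as ratios of 10 and 25 is my own reading, and the helper names are purely illustrative.

```python
def excess_risk(ratio: float) -> float:
    """How far a hazard or risk ratio sits above 1.0 (1.0 means no effect)."""
    return ratio - 1.0


def as_percent_increase(ratio: float) -> float:
    """Express a ratio as a percent increase in risk, e.g. 1.50 -> 50.0."""
    return excess_risk(ratio) * 100.0


red_meat_hr = 1.17              # hazard ratio quoted for red/processed meat
smoking_ratios = (10.0, 25.0)   # assumption: "ten to twenty-five times" read as ratios

red_meat_pct = round(as_percent_increase(red_meat_hr))

# How many times larger is the smoking excess risk than the red-meat excess risk?
smoking_vs_meat = [excess_risk(r) / excess_risk(red_meat_hr) for r in smoking_ratios]

print(f"Red meat: about {red_meat_pct} percent increase")
for ratio, multiple in zip(smoking_ratios, smoking_vs_meat):
    print(f"Smoking at {ratio:.0f}x: excess risk about {multiple:.0f} times the red-meat excess")
```

Under that reading, the excess risk tied to smoking is on the order of 50 to 140 times the excess risk attributed to red meat, which is the gap the Bradford Hill effect-size test is meant to expose.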

Second, and far more damning, is that the raw data on which these conclusions are typically based are shaky at best. Many nutritional epidemiological studies collect information on subjects via something called a “food frequency questionnaire,” a lengthy checklist that asks users to recall everything they ate over the last month, or even the last year, in minute detail. I’ve tried filling these out and it’s almost impossible to recall exactly what I ate two days ago, let alone three weeks.[*4] So how reliable can studies based on such data possibly be? How much confidence do we have in, say, the red meat study?

So do red and processed meats actually cause cancer or not? We don’t know, and we will probably never get a more definitive answer, because a clinical trial testing this proposition is unlikely ever to be done. Confusion reigns. Nevertheless, I’m going to stick my neck out and assert that a hazard ratio of 1.17 is so minimal that it might not matter that much whether you eat red/processed meats versus some other protein source, like chicken. Clearly, this particular study is very far from providing a definitive answer to the question of whether red meat is “safe” to eat. Yet people have been fighting about it for years.

This is another problem in the world of nutrition: too many people are majoring in the minor and minoring in the major, focusing too much attention on small questions while all but ignoring the bigger issues. Small variations in what we eat probably matter a lot less than most people assume. But bad epidemiology, aided and abetted by bad journalism, is happy to blow these things way out of proportion.

Bad epidemiology so dominates our public discussion of nutrition that it has inspired a backlash by skeptics such as John Ioannidis of the Stanford Prevention Research Center, a crusader against bad science in all its forms. His basic argument is that food is so complex, made up of thousands of chemical compounds in millions of possible combinations that interact with human physiology in so many ways—in other words, nutritional biochemistry—that epidemiology is simply not up to the task of disentangling the effect of any individual nutrient or food. In an interview with the CBC, the normally soft-spoken Ioannidis was brutally direct: “Nutritional epidemiology is a scandal,” he said. “It should just go into the waste bin.”

The true weakness of epidemiology, at least as a tool to extract reliable, causal information about human nutrition, is that such studies are almost always hopelessly confounded. The factors that determine our food choices and eating habits are unfathomably complex. They include genetics, social influences, economic factors, education, metabolic health, marketing, religion, and everything in between—and they are almost impossible to disentangle from the biochemical effects of the foods themselves.

A few years ago, a scientist and statistician named David Allison ran an elegant experiment that illustrates how epidemiological methods can lead us astray, even in the most tightly controlled research model possible: laboratory mice, which are genetically identical and housed in identical conditions. Allison created a randomized experiment using these mice, similar to the caloric restriction experiments we discussed in chapter 5. He split them into three groups, differing only in the quantity of food they were given: a low-calorie group, a medium-calorie group, and a high-calorie, ad libitum group of animals who were allowed to eat as much as they wanted. The low-calorie mice were found to live the longest, followed by medium-calorie mice, and the high-calorie mice lived the shortest, on average. This was the expected result that had been well established in many previous studies.

But then Allison did something very clever. He looked more closely at the high-calorie group, the mice with no maximum limit on food intake, and analyzed this group separately, as its own nonrandomized epidemiological cohort. Within this group, Allison found that some mice chose to eat more than others—and that these hungrier mice actually lived longer than the high-calorie mice who chose to eat less. This was exactly the opposite of the result found in the larger, more reliable, and more widely repeated randomized trial.

There was a simple explanation for this: the mice that were strongest and healthiest had the largest appetites, and thus they ate more. Because they were healthiest to begin with, they also lived the longest. But if all we had to go on was Allison’s epidemiological analysis of this particular subgroup, and not the larger and better-designed clinical trial, we might conclude that eating more calories causes all mice to live longer, which we are pretty certain is not the case.
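The logic of this reversal can be reproduced in a toy simulation. What follows is a rough sketch, not Allison's actual data or method: every number in it (the baseline lifespan, the size of the calorie penalty, the link between "vigor" and appetite) is a made-up assumption chosen only to illustrate the mechanism. In the model, extra calories always shorten life, yet within the eat-as-much-as-you-want group the bigger eaters still come out ahead, because vigor drives both appetite and longevity.

```python
import random
import statistics


def simulate(n_per_group: int = 2000, seed: int = 0):
    rng = random.Random(seed)

    def lifespan(vigor: float, intake: float) -> float:
        # Hypothetical model: vigor adds months of life, calories subtract them.
        return 30 + 3 * vigor - 10 * intake + rng.gauss(0, 1)

    arms = {"low": [], "medium": [], "ad_libitum": []}
    ad_lib_records = []  # (intake, lifespan) pairs for the free-feeding mice

    for _ in range(n_per_group):
        # Randomized arms: intake is assigned, so it is unrelated to vigor.
        arms["low"].append(lifespan(rng.gauss(0, 1), 0.6))
        arms["medium"].append(lifespan(rng.gauss(0, 1), 0.8))

        # Ad libitum arm: healthier (more vigorous) mice choose to eat more.
        vigor = rng.gauss(0, 1)
        intake = 1.0 + 0.1 * vigor + rng.gauss(0, 0.02)
        life = lifespan(vigor, intake)
        arms["ad_libitum"].append(life)
        ad_lib_records.append((intake, life))

    arm_means = {name: statistics.mean(values) for name, values in arms.items()}

    # "Epidemiological" view: split the free-feeding mice at the median intake.
    ad_lib_records.sort()
    half = len(ad_lib_records) // 2
    light_eaters = statistics.mean([life for _, life in ad_lib_records[:half]])
    heavy_eaters = statistics.mean([life for _, life in ad_lib_records[half:]])
    return arm_means, light_eaters, heavy_eaters


arm_means, light_eaters, heavy_eaters = simulate()
print("Randomized arms (mean lifespan):", arm_means)
print("Ad libitum, lighter eaters:", light_eaters)
print("Ad libitum, heavier eaters:", heavy_eaters)
```

The randomized comparison recovers the built-in effect (fewer calories, longer life), while the observational split inside the free-feeding group points the other way, even though nothing in the model makes calories beneficial.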

This experiment demonstrates how easy it is to be misled by epidemiology. One reason is that general health is a massive confounder in these kinds of studies. This is also known as healthy user bias, meaning that study results sometimes reflect the baseline health of the subjects more than the influence of whatever input is being studied, as was the case with the “hungry” mice in this study.[*5]

One classic example of this, I believe, lies in the vast, well-publicized literature correlating “moderate” drinking with improved health outcomes. This notion has become almost an article of faith in the popular media, but these studies are also almost universally tainted by healthy user bias—that is, the people who are still drinking in older age tend to do so because they are healthy, and not the other way around. Similarly, people who drink zero alcohol often have some health-related reason, or addiction-related reason, for avoiding it. And such studies also obviously exclude those who have already died of the consequences of alcoholism.

Epidemiology sees only a bunch of seemingly healthy older people who all drink alcohol and concludes that alcohol is the cause of their good health. But a recent study in JAMA, using the tool of Mendelian randomization we discussed back in chapter 3, suggests that this might not be true. This study found that once you remove the effects of other factors that may accompany moderate drinking—such as lower BMI, affluence, and not smoking—any observed benefit of alcohol consumption completely disappears. The authors concluded that there is no dose of alcohol that is “healthy.”

Clinical trials would seem like a much better way to evaluate one diet against another: One group of subjects eats diet X, the other group is on diet Y, and you compare the results. (Or, to continue the alcohol example, one group drinks moderately, one group drinks heavily, and the control group abstains altogether.)

These are more rigorous than epidemiology, and they offer some ability to infer causality thanks to the process of randomization, but they too are often flawed. There’s a trade-off between sample size, study duration, and control. To do a long study in a large group of subjects, you essentially have to trust that they are following the prescribed diet, whether the Big Mac diet in our hypothetical example from above, or a simple low-fat diet. If you want to ensure that your subjects are actually eating the diet, you need to feed each subject, observe them eating, and keep them locked in the metabolic ward of a hospital (to be sure they are not eating anything else). All of this is doable, but only for a handful of subjects for a few weeks at a time, which is not nearly a large enough sample or long enough duration to infer anything beyond mechanistic insights about nutrients and health.

These studies make pharmaceutical studies seem simple. Determining whether pill X lowers blood pressure enough to prevent heart attacks requires only that study subjects remember to take their pill every day for however many months or years, and even that simple compliance poses a challenge. Now imagine trying to ensure that study subjects lower their dietary fat content to no more than 20 percent of total calories and consume at least five servings of fruits and vegetables daily for a year. In fact, I’m convinced that compliance is the key issue in nutrition research, and with diets in general: Can you stick to it? The answer is different for almost everyone. This is why it’s so difficult for experiments to answer the central questions about the relationship between diet and disease, no matter how big and ambitious they are.

One classic example of a well-intended nutrition study that created more confusion than clarity is the Women’s Health Initiative (WHI), an enormous randomized controlled trial that was meant to test a low-fat, high-fiber diet in nearly fifty thousand women. Begun in 1993, it lasted eight years and cost nearly $750 million (and if it sounds familiar, that is because of the study’s highly publicized other arm, discussed earlier, which looked at the effects of hormone replacement therapy on older women). In the end, despite all this effort, the WHI found no statistically significant difference between the low-fat and control diet groups in terms of incidence of breast cancer, colorectal cancer, cardiovascular disease, or overall mortality.[*6]

Many people, myself included, argued that the results of this study demonstrated the lack of efficacy of low-fat diets. But in reality, it probably told us nothing about a low-fat diet because the “low-fat” intervention group consumed around 28 percent of their calories from fat, while the control group got about 37 percent of their calories from fat. (And that’s even assuming the investigators were able to be remotely accurate in their assessment of what the subjects actually ate over the years, a big assumption.) So this study compared two diets that were pretty similar, and found that they had pretty similar outcomes. Big surprise. Nevertheless, flawed as it was, the WHI study has been fought over for years by partisans of different ways of eating.

Just as an aside, the WHI study does provide a clear example of why it is so important to evaluate any intervention, nutritional or otherwise, through the lens of efficacy versus effectiveness. Efficacy tests how well the intervention works under perfect conditions and adherence (i.e., if one does everything exactly as prescribed). Effectiveness tests how well the intervention works under real-world conditions, in real people. Most people confuse these and therefore fail to appreciate this nuance of clinical trials. The WHI was not a test of the efficacy of a low-fat diet for the simple reasons that (a) it failed to test an actual low-fat diet, and (b) study subjects did not adhere to the diet perfectly. So it can’t be argued from the WHI that low-fat diets do not improve health, only that the prescription of a low-fat diet, in this population of patients, did not improve health. See the difference?

That said, some clinical trials have provided some useful bits of knowledge. One of the best, or least bad, clinical trials ever executed seemed to show a clear advantage for the Mediterranean diet—or at least, for nuts and olive oil. This study also focused on the role of dietary fats.

The large Spanish study known as PREDIMED (PREvención con DIeta MEDiterránea) was elegant in its design: rather than telling the nearly 7,500 subjects exactly what they were supposed to eat, the researchers simply gave one group a weekly “gift” of a liter of olive oil, which was meant to nudge them toward other desired dietary changes (i.e., to eat the sorts of things that one typically prepares with olive oil). A second group was given a quantity of nuts each week and told to eat an ounce per day, while the control group was simply instructed to eat a lower-fat diet, with no nuts, no excess fat on the meat they did eat, no sofrito (a garlicky Spanish tomato sauce with onions and peppers that sounds delicious), and weirdly, no fish.

The study was meant to last six years, but in 2013 the investigators announced that they had halted it prematurely, after just four and a half years, because the results were so dramatic. The group receiving the olive oil had about a one-third lower incidence (31 percent) of stroke, heart attack, and death than the low-fat group, and the mixed-nuts group showed a similar reduced risk (28 percent). It was therefore deemed unethical to continue the low-fat arm of the trial. By the numbers, the nuts-or-olive-oil “Mediterranean” diet appeared to be as powerful as statins, in terms of number needed to treat (NNT), for primary prevention of heart disease—meaning in a population that had not yet experienced an “event” or a clinical diagnosis.[*7]

It looked like a slam dunk; it’s rare when investigators can report hard outcomes like death or heart attack, as opposed to simple weight loss, in a mere dietary study. It did help that the subjects already had at least three serious risk factors, such as type 2 diabetes, smoking, hypertension, elevated LDL-C, low HDL-C, overweight or obesity, or a family history of premature coronary heart disease. Yet despite their elevated risk, the olive oil (or nuts) diet had clearly helped them delay disease and death. A post hoc analysis of PREDIMED data also found cognitive improvement in those allocated the Mediterranean-style diet(s), versus cognitive decline in those allocated the low-fat diet.

But does that mean a Mediterranean diet is right for everyone, or that extra-virgin olive oil is the healthiest type of fat? Possibly—but not necessarily.

To me, perhaps the most vexing issue with diet and nutrition studies is the degree of variation between individuals that is found but often obscured. This is especially true in studies looking mostly or entirely at weight loss as an end point. The published studies report average results that are almost always underwhelming, with subjects losing only a few pounds on average. In reality, some individuals may have lost quite a bit of weight on the diet, while others lost none or even gained weight.

There are two issues at play here. The first is compliance: how well can you stick to the diet? That differs for everyone; we all have different behaviors and thought patterns around food. The second issue is how a given diet affects you, with your individual metabolism and other risk factors. Yet these are too often ignored, and we end up with generalizations about how diets “don’t work.” What that really means is that diet X or diet Y doesn’t work for everyone.
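A small, entirely hypothetical example shows how an average can hide this spread. The ten weight changes below are invented for illustration and do not come from any study.

```python
import statistics

# Hypothetical weight changes (in pounds) for ten people on the same diet.
# Negative numbers are pounds lost; positive numbers are pounds gained.
weight_changes = [-22, -15, -9, -4, -1, 0, 2, 3, 6, 10]

average_change = statistics.mean(weight_changes)
biggest_loss = min(weight_changes)
biggest_gain = max(weight_changes)
lost_ten_or_more = sum(1 for change in weight_changes if change <= -10)
gained_weight = sum(1 for change in weight_changes if change > 0)

print(f"Average change: {average_change} lb")
print(f"Range: {biggest_loss} lb to +{biggest_gain} lb")
print(f"Lost 10 lb or more: {lost_ten_or_more} of {len(weight_changes)}")
print(f"Gained weight: {gained_weight} of {len(weight_changes)}")
```

A published summary of this invented group would report an average loss of three pounds, which describes almost none of the ten individuals in it.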

Our goal in the next chapter is to help you figure out the best eating plan for you, as an individual. To do that, we must move beyond labels and dive into nutritional biochemistry.