10.8 Android

Android is a relatively new operating system designed to run on mobile devices. It is based on the Linux kernel—Android introduces only a few new concepts to the Linux kernel itself, using most of the Linux facilities you are already familiar with (processes, user IDs, virtual memory, file systems, scheduling, etc.) in sometimes very different ways than they were originally intended.

Since its introduction in 2008, Android has grown to be the most widely used operating systems in the world with, as of this writing, over 3 billion monthly active users of just the Google flavor of Android alone. Its popularity has ridden the explosion of smartphones, and it is freely available for manufacturers of mobile devices to use in their products. It is also an open-source platform, making it customizable to a diverse variety of devices. It is popular not only for consumer-centric devices where its third-party application ecosystem is advantageous (such as tablets, televisions, game systems, and media players), but is increasingly used as the embedded OS for dedicated devices that need a graphical user interface such as smart watches, automotive dashboards, airplane seatbacks, medical devices, and home appliances.

A large amount of the Android operating system is written in a high-level language, the Java programming language. The kernel and a large number of lowlevel libraries are written in C and C++. However, a large amount of the system is written in Java and, but for some small exceptions, the entire application API is written and published in Java as well. The parts of Android written in Java tend to follow a very object-oriented design as encouraged by that language.

10.8.1 Android and Google

Android is an unusual operating system in the way it combines open-source code with closed-source third-party applications. The open-source part of Android is called the AOSP (Android Open Source Project) and is completely open and free to be used and modified by anyone.

An important goal of Android is to support a rich third-party application environment, which requires having a stable implementation and API for applications to run against. However, in an open-source world where every device manufacturer can customize the platform however it wants, compatibility issues quickly arise. There needs to be some way to control this conflict.

Part of the solution to this for Android is the CDD (Compatibility Definition Document), which describes the ways Android must behave to be compatible with third party applications. This document describes what is required to be a compatible Android device. Without some way to enforce such compatibility, however, it will often be ignored; there needs to be some additional mechanism to do this.

Android solves this by allowing additional proprietary services to be created on top of the open-source platform, providing (typically cloud-based) services that the platform cannot itself implement. Since these services are proprietary, they can restrict which devices are allowed to include them, thus requiring CDD compatibility of those devices.

Google implemented Android to be able to support a wide variety of proprietary cloud services, with Google’s extensive set of services being representative cases: Gmail, calendar and contacts sync, cloud-to-device messaging, and many other services, some visible to the user, some not. When it comes to offering compatible apps, the most important service is Google Play.

Google Play is Google’s online store for Android apps. Generally when developers create Android applications, they will publish with Google Play. Since Google Play (or any other application store) is a significant channel through which applications are delivered to an Android device, that proprietary service is responsible for ensuring that applications will work on the devices it delivers them to.

Google Play uses two main mechanisms to ensure compatibility. The first and most important is requiring that any device shipping with it must be a compatible Android device as per the CDD. This ensures a baseline of behavior across all devices. In addition, Google Play must know about any features of a device that an application requires (such as having a touch screen, camera hardware, or telephony support) so the application is not made available on devices that lack them.

10.8.2 History of Android

Google developed Android in the mid-2000s, after acquiring Android as a startup company early in its development. Nearly all the development of the Android platform that exists today was done under Google’s management.

Early Development

Android, Inc. was a software company founded to build software to create smarter mobile devices. Originally looking at cameras, the vision soon switched to smartphones due to their larger potential market. That initial goal grew to addressing the then-current difficulty in developing for mobile devices, by bringing to them an open platform built on top of Linux that could be widely used.

During this time, prototypes for the platform’s user interface were implemented to demonstrate the ideas behind it. The platform itself was targeting three key languages, JavaScript, Java, and C++, in order to support a rich application-development environment.

Google acquired Android in July 2005, providing the necessary resources and cloud-service support to continue Android development as a complete product. A fairly small group of engineers worked closely together during this time, starting to develop the core infrastructure for the platform and foundations for higher-level application development.

In early 2006, a significant shift in plan was made: instead of supporting multiple programming languages, the platform would focus entirely on the Java programming language for its application development. This was a difficult change, as the original multilanguage approach superficially kept everyone happy with ‘‘the best of all worlds’’; focusing on one language felt like a step backward to engineers who preferred other languages.

Trying to make everyone happy, however, can easily make nobody happy. Building out three different sets of language APIs would have required much more effort than focusing on a single language, greatly reducing the quality of each one. The decision to focus on the Java language was critical for the ultimate quality of the platform and the development team’s ability to meet important deadlines.

As development progressed, the Android platform was developed closely with the applications that would ultimately ship on top of it. Google already had a wide variety of services—including Gmail, Maps, Calendar, YouTube, and of course Search—that would be delivered on top of Android. Knowledge gained from implementing these applications on top of the early platform was fed back into its design. This iterative process with the applications allowed many design flaws in the platform to be addressed early in its development.

Most of the early application development was done with little of the underlying platform actually available to the developers. The platform was usually running all inside one process, through a ‘‘simulator’’ that ran all of the system and applications as a single process on a host computer. In fact there are still some remnants of this old implementation around today, with things like the Application.onTerminate method still in the SDK (Software Development Kit), which Android programmers use to write applications.

In June 2006, two hardware devices were selected as software-development targets for planned products. The first, code-named ‘‘Sooner,’’ was based on an existing smartphone with a QWERTY keyboard and screen without touch input. The goal of this device was to get an initial product out as soon as possible, by leveraging existing hardware. The second target device, code-named ‘‘Dream,’’ was designed specifically for Android, to run it as fully envisioned. It included a large (for that time) touch screen, slide-out QWERTY keyboard, 3G radio (for faster Web browsing), accelerometer, GPS and compass (to support Google Maps), etc.

As the software schedule came better into focus, it became clear that the two hardware schedules did not make sense. By the time it was possible to release Sooner, that hardware would be well out of date, and the effort put on Sooner was pushing out the more important Dream device. To address this, it was decided to drop Sooner as a target device (though development on that hardware continued for some time until the newer hardware was ready) and focus entirely on Dream.

Android 1.0

The first public availability of the Android platform was a preview SDK released in November 2007. This consisted of a hardware device emulator running a full Android device system image and core applications, API documentation, and a development environment. At this point, the core design and implementation were in place, and in most ways closely resembled the modern Android system architecture we will be discussing. The announcement included video demos of the platform running on top of both the Sooner and Dream hardware.

Early development of Android had been done under a series of quarterly demo milestones to drive and show continued process. The SDK release was the first more formal release for the platform. It required taking all the pieces that had been put together so far for application development, cleaning them up, documenting them, and creating a cohesive development environment for third-party developers. Development now proceeded along two tracks: taking in feedback about the SDK to further refine and finalize APIs, and finishing and stabilizing the implementation needed to ship the Dream device. A number of public updates to the SDK occurred during this time, culminating in a 0.9 release in August 2008 that contained the nearly final APIs.

The platform itself had been going through rapid development, and in the spring of 2008 the focus was shifting to stabilization so that Dream could ship. Android at this point contained a large amount of code that had never been shipped as a commercial product, all the way from parts of the C library, through the Dalvik (and later ART) interpreter (which runs the apps), system services, and applications.

Android also contained quite a few novel design ideas that had never been done before, and it was not clear how they would pan out. This all needed to come together as a stable product, and the team spent a few nail-biting months wondering if all of this stuff would actually come together and work as intended.

Finally, in August 2008, the software was stable and ready to ship. Builds went to the factory and started being flashed onto devices. In September, Android was launched on the Dream device, now called the T-Mobile G1.

Continued Development

After Android’s 1.0 release, development continued at a rapid pace. There were about 15 major updates to the platform over the following 5 years, adding a large variety of new features and improvements from the initial 1.0 release.

The original Compatibility Definition Document basically allowed only for compatible devices that were very much like the T-Mobile G1. Over the following years, the range of compatible devices would greatly expand. Key points of this process were as follows:

During 2009, Android versions 1.5 through 2.0 introduced a soft keyboard to remove a requirement for a physical keyboard, much more extensive screen support (both size and pixel density) for lowerend QVGA devices and new larger and higher density devices like the WVGA Motorola Droid, and a new ‘‘system feature’’ facility for devices to report what hardware features they support and applications to indicate which hardware features they require. The latter is the key mechanism Google Play uses to determine application compatibility with a specific device.

During 2011, Android versions 3.0 through 4.0 introduced new core support in the platform for 10-inch and larger tablets; the core platform now fully supported device screen sizes everywhere from small QVGA phones, through smartphones and larger ‘‘phablets,’’ 7-inch tablets and larger tablets to beyond 10 inches.

As the platform provided built-in support for more diverse hardware, not only larger screens but also nontouch devices with or without a mouse, many more types of Android devices appeared. This included TV devices such as Google TV, gaming devices, notebooks, cameras, etc.

Significant development work also went into something not as visible: a cleaner separation of Google’s proprietary services from the Android open-source platform.

For Android 1.0, a significant amount of work had been put into having a clean third-party application API and an open-source platform with no dependencies on proprietary Google code. However, the implementation of Google’s proprietary code was often not yet cleaned up, having dependencies on internal parts of the platform. Often the platform did not even have facilities that Google’s proprietary code needed in order to integrate well with it. A series of projects were soon undertaken to address these issues:

In 2009, Android version 2.0 introduced an architecture for third parties to plug their own sync adapters into platform APIs like the contacts database. Google’s code for syncing various data moved to this well-defined SDK API.

In 2010, Android version 2.2 included work on the internal design and implementation of Google’s proprietary code. This ‘‘great unbundling’’ cleanly implemented many core Google services, from delivering cloud-based system software updates to ‘‘cloud-to-device messaging’’ and other background services, so that they could be delivered and updated separately from the platform.

In 2012, a new Google Play services application was delivered to devices, containing updated and new features for Google’s proprietary nonapplication services. This was the outgrowth of the unbundling work in 2010, allowing proprietary APIs such as cloud-to-device messaging and maps to be fully delivered and updated by Google.

Since then, there have been a regular series of Android releases. Below are the major releases, with select highlights of the changes in each release related to the core operating system. A number of these will be covered in more detail later.

Android 4.2 (2012): Added support for multi-user separation (allowing different people to share a device in isolated users). SELinux introduced in non-enforcing mode.

Android 4.3 (2013): Extended multi-user to enable ‘‘restricted users,’’ can create restricted environments for children, kiosk modes, point of sale systems, etc.

Android 4.4 (2013): SELinux now enforced across operating systems. Android Runtime (ART) is introduced as a developer preview and will later replace the original Dalvik virtual machine. ART features ahead-of-time compilation and a new concurrent garbage collector to avoid GC stalls that cause missed UI frames.

Android 5.0 (2014): Introduced the JobScheduler, which would be the future foundation for applications to schedule almost all of their background work with the system. Extended multi-user to support ‘‘profiles’’ where two users run concurrently under different identities (typically providing a concurrent personal and work profile that are isolated from each other). Introduced document-centric-recents model, where recent tasks can include documents or other sub-sections of an overall application. Added support for 64-bit apps.

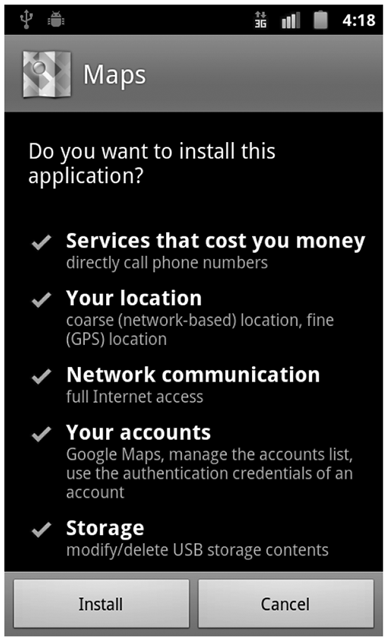

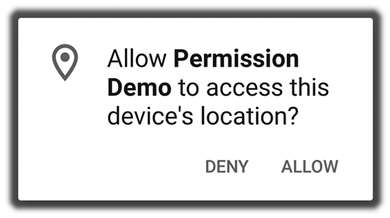

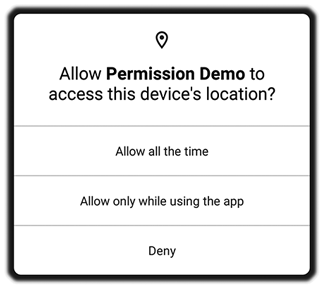

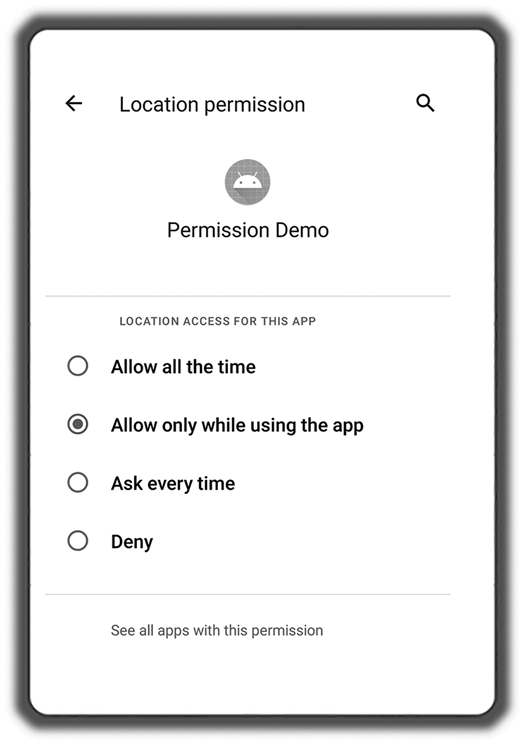

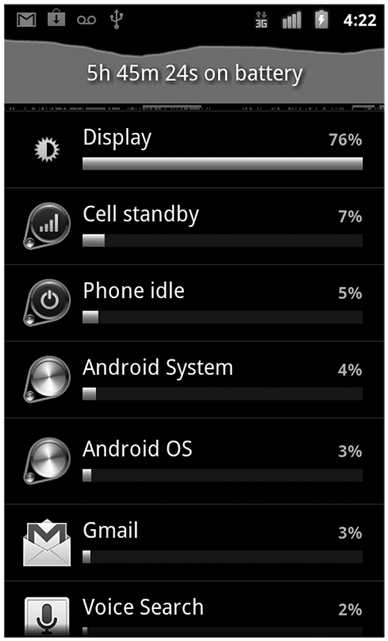

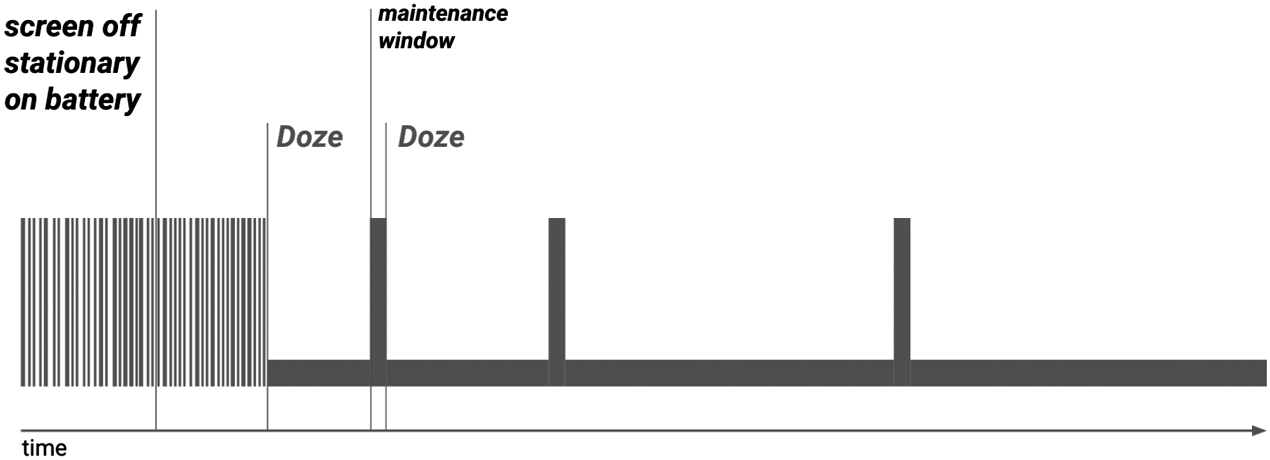

Android 6.0 (2015): Permission model changed from install-time to runtime, reflecting a shift in focus from security to privacy and the increasing complexity of mobile applications with a growing number of secondary features. Introduced the original ‘‘doze mode’’ to take a stronger hand in what apps can do in the background. Security is about protected the device and the user from harm caused by outsiders whereas privacy is focused on protecting the user’s information from snooping. They are quite different and need different approaches.

Android 7.0 (2016): Extended ‘‘doze mode’’ to cover most situations when the screen is off. On all battery-powered devices, managed energy usage to avoid draining the battery too fast is crucial to the user experience, so in Android 7.0 there was more attention to it.

Android 8.0 (2017): A new abstraction, called Treble, was introduced between the Android system and lower-level hardware touched by the kernel and drivers. Similar to the HAL (Hardware Abstraction Layer) in the Windows kernel, Treble provides a stable interface between the bulk of Android and hardware-specific kernel and drivers. It is structured like a microkernel with Treble drivers running in separate userspace processes and Binder IPC (covered later) used to communicate with them. It also placed strong limits on how applications could run in the background, as well as differentiation between background vs. foreground for location access.

Android 9 (2018): Limited the ability of applications to launch into their foreground interface while running in the background. Introduced ‘‘adaptive battery,’’ where a machine-learning system helps guide the system in deciding the importance of background work across applications.

Android 10 (2019): Provided user control over an app’s ability to access location information while in the background. Introduced ‘‘scoped storage’’ to better control data access across applications that are putting data on external storage (such as SD cards).

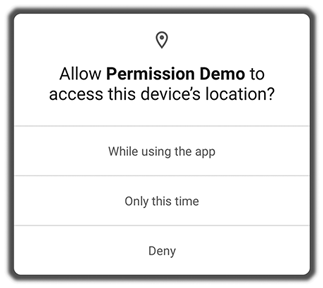

Android 11 (2020): Allowed the user to select ‘‘only this once’’ for permissions that provide access to continuous personal data: location, camera, and microphone.

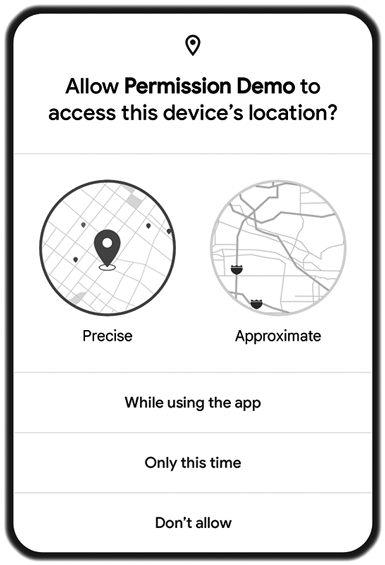

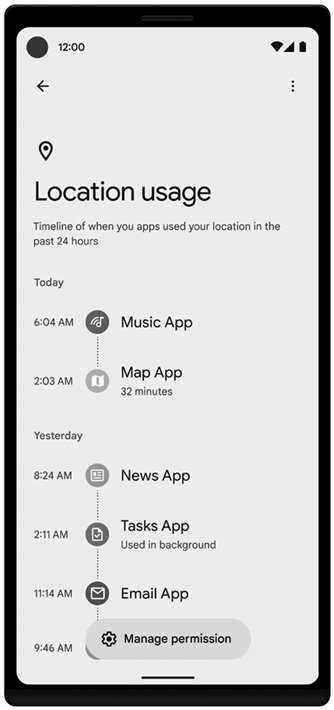

Android 12 (2021): Gave the user control over coarse vs. fine location access. Introduced a ‘‘permissions hub’’ allowing users to see how applications have been accessing their personal data. Limited other cases (using foreground services) where applications could go into a foreground state from the background.

10.8.3 Design Goals

A number of key design goals for the Android platform evolved during its development:

Provide a complete open-source platform for mobile devices. The open-source part of Android is a bottom-to-top operating system stack, including a variety of applications, that can ship as a complete product.

Strongly support proprietary third-party applications with a robust and stable API. As previously discussed, it is challenging to maintain a platform that is both truly open source and also stable enough for proprietary third-party applications. Android uses a mix of technical solutions (specifying a very well-defined SDK and division between public APIs and internal implementation) and policy requirements (through the CDD) to address this.

Allow all third-party applications, including those from Google, to compete on a level playing field. The Android open source code is designed to be neutral as much as possible to the higher-level system features built on top of it, from access to cloud services (such as data sync or cloud-to-device messaging APIs), to libraries (such as Google’s mapping library) and rich services like application stores.

Provide an application security model in which users do not have to deeply trust third-party applications and do not need to rely on a gatekeeper (like a carrier) to control which applications can be installed on the device in order to protect them. The operating system itself must protect the user from misbehavior of applications, not only buggy applications that can cause it to crash, but more subtle misuse of the device and the user’s data on it. The less users need to trust applications or the sources of those applications, the more freedom they have to try out and install them.

Support typical mobile user interaction, where the user often spends short amounts of time in many apps. The mobile experience tends to involve brief interactions with applications: glancing at new received email, receiving and sending an SMS message or IM, going to contacts to place a call, etc. The system needs to optimize for these cases with fast app launch and switch times; the goal for Android has generally been 200 msec to cold start a basic application up to the point of showing a full interactive UI.

Manage application processes for users, simplifying the user experience around applications so that users do not have to worry about closing applications when done with them. Mobile devices also tend to run without the swap space that allows operating systems to fail more gracefully when the current set of running applications requires more RAM than is physically available. To address both of these requirements, the system needs to take a more proactive stance about managing application processes and deciding when they should be started and stopped.

Encourage applications to interoperate and collaborate in rich and secure ways. Mobile applications are in some ways a return back to shell commands: rather than the increasingly large monolithic design of desktop applications, they are often targeted and more focused for specific needs. To help support this, the operating system should provide new types of facilities for these applications to collaborate together to create a larger whole.

Create a full general-purpose operating system. Mobile devices are a new expression of general purpose computing, not something simpler than our traditional desktop operating systems. Android’s design should be rich enough that it can grow to be at least as capable as a traditional operating system.

10.8.4 Android Architecture

Android is built on top of the standard Linux kernel, with only a few significant extensions to the kernel itself that will be discussed later. Once in user space, however, its implementation is quite different from a traditional Linux distribution and uses many of the Linux features you already understand, but in very different ways.

As in a traditional Linux system, Android’s first user-space process is init, which is the root of all other processes. The daemons Android’s init process starts are different, however, focused more on low-level details (managing file systems and hardware access) rather than higher-level user facilities like scheduling cron jobs. Android also has an additional layer of processes, those running ART (for Android Runtime which implements the Java language environment); these are responsible for executing all parts of the system implemented in Java.

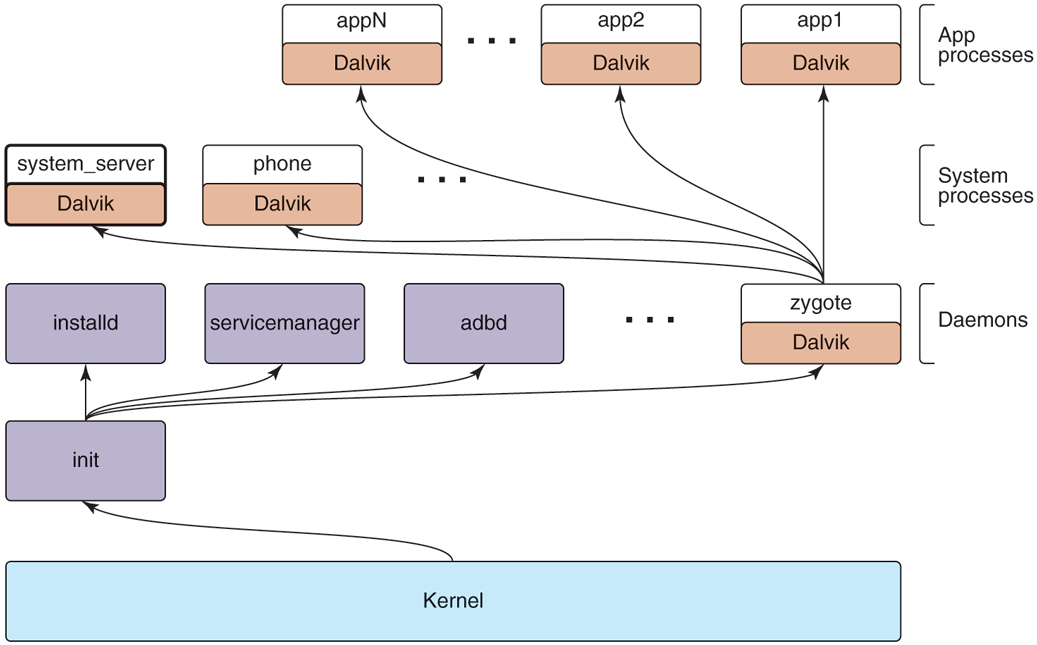

Figure 10-39 illustrates the basic process structure of Android. First is the init process, which spawns a number of low-level daemon processes. One of these is zygote, which is the root of the higher-level Java language processes.

Figure 10-39

Android process hierarchy.

Android’s init does not run a shell in the traditional way, since a typical Android device does not have a local console for shell access. Instead, the daemon process adbd listens for remote connections (such as over USB) that request shell access, forking shell processes for them as needed. These parts are always there, no matter which platform is being used or what features it has.

Since most of Android is written in the Java language, the zygote daemon and processes it starts are central to the system. The first process zygote always starts is called system_server, which contains all of the core operating system services. Key parts of this are the power manager, package manager, window manager, and activity manager.

Other processes will be created from zygote as needed. Some of these are ‘‘persistent’’ processes that are part of the basic operating system, such as the telephony stack in the phone process, which must remain always running. Additional application processes will be created and stopped as needed while the system is running.

Applications interact with the operating system through calls to libraries provided by it, which together compose the Android framework. Some of these libraries can perform their work within that process, but many will need to perform interprocess communication with other processes, often services in the system_server process.

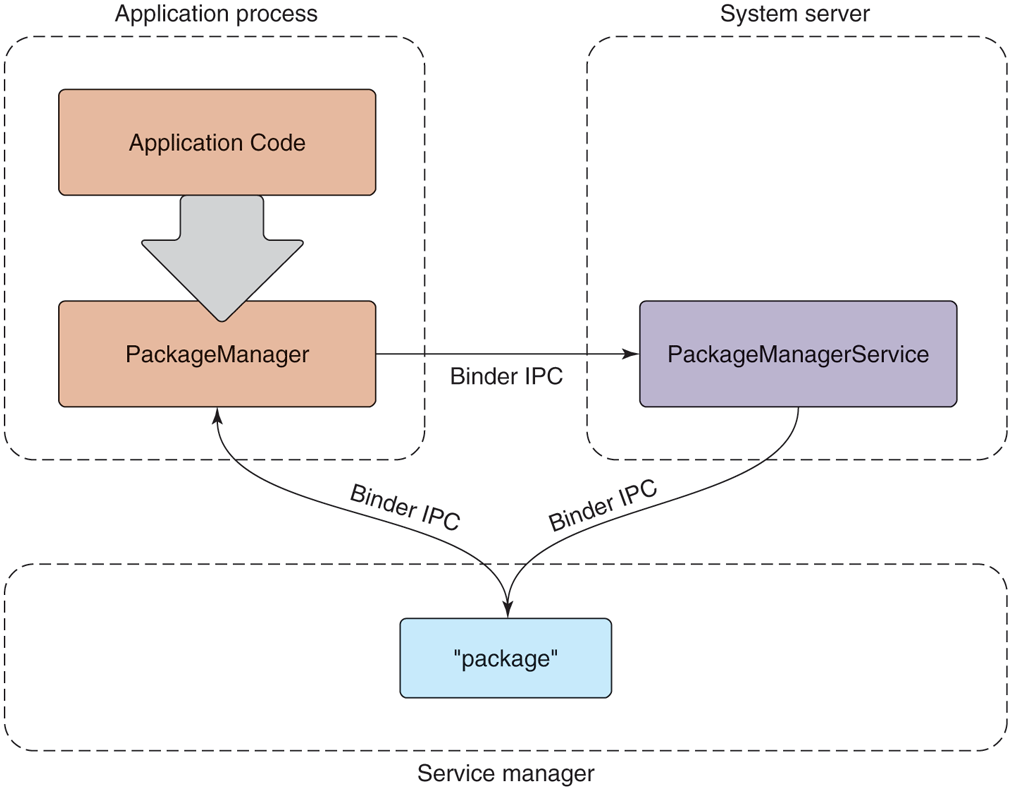

Figure 10-40 shows the typical design for Android framework APIs that interact with system services, in this case the package manager. The package manager provides a framework API for applications to call in their local process, here the PackageManager class. Internally, this class needs to get a connection to the coresponding service in the system_server. To accomplish this, at boot time the system_server publishes each service under a well-defined name in the service manager, a daemon started by init. The PackageManager in the application process retrieves a connection from the service manager to its system service using that same name.

Figure 10-40

Publishing and interacting with system services.

Once the PackageManager has connected with its system service, it can make calls on it. Most application calls to PackageManager are implemented as interprocess communication using Android’s Binder IPC mechanism, in this case making calls to the PackageManagerService implementation in the system_server. The implementation of PackageManagerService arbitrates interactions across all client applications and maintains state that will be needed by multiple applications.

10.8.5 Linux Extensions

For the most part, Android includes a stock Linux kernel providing standard Linux features. Most of the interesting aspects of Android as an operating system are in how those existing Linux features are used. There are also, however, several significant extensions to Linux that the Android system relies on.

Wake Locks

Power management on mobile devices is different than on traditional computing systems, so Android adds a new feature to Linux called wake locks (also called suspend blockers) for managing how the system goes to sleep. This is important in order to save energy and maximize the time before the battery is drained.

On a traditional computing system, the system can be in one of two power states: running and ready for user input, or deeply asleep and unable to continue executing without an external interrupt such as pressing a power key. While running, secondary pieces of hardware may be turned on or off as needed, but the CPU itself and core parts of the hardware must remain in a powered state to handle incoming network traffic and other such events. Going into the lower-power sleep state is something that happens relatively rarely: either through the user explicitly putting the system to sleep, or its going to sleep itself due to a relatively long interval of user inactivity. Coming out of this sleep state requires a hardware interrupt from an external source, such as pressing a key on a keyboard, at which point the device will wake up and turn on its screen.

Mobile device users have different expectations. Although the user can turn off the screen in a way that looks like putting the device to sleep, the traditional sleep state is not actually desired. While a device’s screen is off, the device still needs to be able to do work: it needs to be able to receive phone calls, receive and process data for incoming chat messages, and many other things.

The expectations around turning a mobile device’s screen on and off are also much more demanding than on a traditional computer. Mobile interaction tends to be in many short bursts throughout the day: you receive a message and turn on the device to see it and perhaps send a one-sentence reply or you run into friends walking their new dog and turn on the device to take a picture of her. In this kind of typical mobile usage, any delay from pulling the device out until it is ready for use has a significant negative impact on the user experience.

Given these requirements, one solution would be to just not have the CPU go to sleep when a device’s screen is turned off, so that it is always ready to turn back on again. The kernel does, after all, know when there is no work scheduled for any threads, and Linux (as well as most operating systems) will automatically make the CPU idle and use less power in this situation.

An idle CPU, however, is not the same thing as true sleep. For example:

On many chipsets, the idle state uses significantly more power than a true sleep state.

An idle CPU can wake up at any moment if some work happens to become available, even if that work is not important.

Just having the CPU idle does not tell you that you can turn off other hardware that would not be needed in a true sleep.

Wake locks on Android allow the system to go in to a deeper sleep mode, without being tied to an explicit user action like turning the screen off. The default state of the system with wake locks is that the device is asleep. When the device is running, to keep it from going back to sleep something needs to be holding a wake lock.

While the screen is on, the system always holds a wake lock that prevents the device from going to sleep, so it will stay running, as we expect.

When the screen is off, however, the system itself does not generally hold a wake lock, so it will stay out of sleep only as long as something else is holding one. When no more wake locks are held, the system goes to sleep, and it can come out of sleep only due to a hardware interrupt.

Once the system has gone to sleep, a hardware interrupt will wake it up again, as in a traditional operating system. Some sources of such an interrupt are timebased alarms, events from the cellular radio (such as for an incoming call), incoming network traffic, and presses on certain hardware buttons (such as the power button). Interrupt handlers for these events require one change from standard Linux: they need to acquire an initial wake lock to keep the system running after it handles the interrupt.

The wake lock acquired by an interrupt handler must be held long enough to transfer control up the stack to the driver in the kernel that will continue processing the event. That kernel driver is then responsible for acquiring its own wake lock, after which the interrupt wake lock can be safely released without risk of the system going back to sleep.

If the driver is then going to deliver this event up to user space, a similar handshake is needed. The driver must ensure that it continues to hold the wake lock until it has delivered the event to a waiting user process and ensured there has been an opportunity there to acquire its own wake lock. This flow may continue across subsystems in user space as well; as long as something is holding a wake lock, we continue performing the desired processing to respond to the event. Once no more wake locks are held, however, the entire system falls back to sleep and all processing stops.

After Android shipped, there was significant discussion with the Linux community about how to merge Android’s wake lock facility back into the mainline kernel. This was especially important because wake locks require that drivers use them to keep the system running when needed, causing a fork of not just the kernel but also any drivers that need to do this.

Ultimately Linux added a ‘‘wakeup event’’ facility, allowing drivers and other entities in the kernel to note when they are the source of a wakeup and/or need to ensure the device continues to stay way. The decision for whether to go into suspend, however, was moved to user space, keeping the policy for when to suspend out of the kernel. Android provides a user space implementation that makes the decision to suspend based on the wakeup event state in the kernel as well as wake lock requests coming to it from elsewhere in user space.

Out-of-Memory Killer

Linux includes an ‘‘out-of-memory killer’’ that attempts to recover when memory is extremely low. Out-of-memory situations on modern operating systems are nebulous affairs. With paging and swap, it is rare for applications themselves to see out-of-memory failures. However, the kernel can still get in to a situation where it is unable to find available RAM pages when needed, not just for a new allocation, but when swapping in or paging in some address range that is now being used.

In such a low-memory situation, the standard Linux out-of-memory killer is a last resort to try to find RAM so that the kernel can continue with whatever it is doing. This is done by assigning each process a ‘‘badness’’ level, and simply killing the process that is considered the most bad. A process’s badness is based on the amount of RAM being used by the process, how long it has been running, and other factors; the goal is to kill large processes that are hopefully not critical.

Android puts special pressure on the out-of-memory killer. It does not have a swap space, so it is much more common to be in out-of-memory situations: there is no way to relieve memory pressure except by dropping clean RAM pages mapped from storage that has been recently used. Even so, Android uses the standard Linux configuration to over-commit memory—that is, allow address space to be allocated in RAM without a guarantee that there is available RAM to back it. Overcommit is an extremely important tool for optimizing memory use, since it is common to mmap large files (such as executables) where you will only be needing to load into RAM small parts of the overall data in that file.

Given this situation, the stock Linux out-of-memory killer does not work well, as it is intended more as a last resort and has a hard time correctly identifying good processes to kill. In fact, as we will discuss later, Android relies extensively on the out-of-memory killer running regularly to reap processes and make good choices about which to select.

To address this, Android introduced its own out-of-memory killer to the kernel, with different semantics and design goals. The Android out-of-memory killer runs much more aggressively: whenever RAM is getting ‘‘low.’’ Low RAM is identified by a tunable parameter indicating how much available free and cached RAM in the kernel is acceptable. When the system goes below that limit, the out-of-memory killer runs to release RAM from elsewhere. The goal is to ensure that the system never gets into bad paging states, which can negatively impact the user experience when foreground applications are competing for RAM, since their execution becomes much slower due to continual paging in and out.

Instead of trying to guess which processes are least useful and therefore should be killed, the Android out-of-memory killer relies very strictly on information provided to it by user space. The traditional Linux out-of-memory killer has a per-process oom_adj parameter that can be used to guide it toward the best process to kill by modifying the process’ overall badness score. Android’s original outof-memory killer used this same parameter, but as a strict ordering: processes with a higher oom_adj will always be killed before those with lower ones. We will discuss later how the Android system decides to assign these scores.

In later versions of Android, a new user-space lmkd process was added to take care of killing processes, replacing the original Android implementation in the kernel. This was made possible by newer Linux features such as ‘‘pressure-stall information’’ provided to user space. Switching to lmkd not only allows Android to use a closer to stock Linux kernel, but also gives it more flexibility in how the higher-level system interacts with the low-memory-killer.

For example, the oom_adj parameter in the kernel has a limit range of values, from to 15. This greatly limits the granularity of process selection that can be provided to it. The new lmkd implementation allows a full integer for ordering processes.

10.8.6 Art

ART (Android RunTime) implements the Java language environment on Android that is responsible for running applications as well as most of its system code. Almost everything in the system_service process—from the package manager, through the window manager, to the activity manager—is implemented with Java language code executed by ART.

Android is not, however, a Java-language platform in the traditional sense. Java code in an Android application is provided in ART’s bytecode format, called DEX (Dalvik Executable), based around a register machine rather than Java’s traditional stack-based bytecode.

DEX allows for faster interpretation, while still supporting JIT (Just-in-Time) compilation. DEX is also more space efficient, both on disk and in RAM, through the use of string pooling and other techniques.

When writing Android applications, source code is written in Java and then compiled into standard Java bytecode using traditional Java tools. Android then introduces a new step: converting that Java bytecode into DEX. It is the DEX version of an application that is packaged up as the final application binary and ultimately installed on the device.

Android’s system architecture leans heavily on Linux for system primitives, including memory management, security, and communication across security boundaries. It does not use the Java language for core operating system concepts—there is little attempt to abstract away these important aspects of the underlying Linux operating system.

Of particular note is Android’s use of processes. Android’s design does not rely on the Java language to protect application from each other and the system, but rather takes the traditional operating system approach of process isolation. This means that each application is running in its own Linux process with its own ART environment, as are the system_server and other core parts of the platform that are written in Java.

Using processes for this isolation allows Android to leverage all of Linux’s features for managing processes, from memory isolation to cleaning up all of the resources associated with a process when it goes away. In addition to processes, instead of using Java’s SecurityManager architecture, Android relies exclusively on Linux’s security features.

The use of Linux processes and security greatly simplifies the ART environment, since it is no longer responsible for these critical aspects of system stability and robustness. Not incidentally, it also allows applications to freely use native code in their implementation, which is especially important for games which are usually built with C++-based engines.

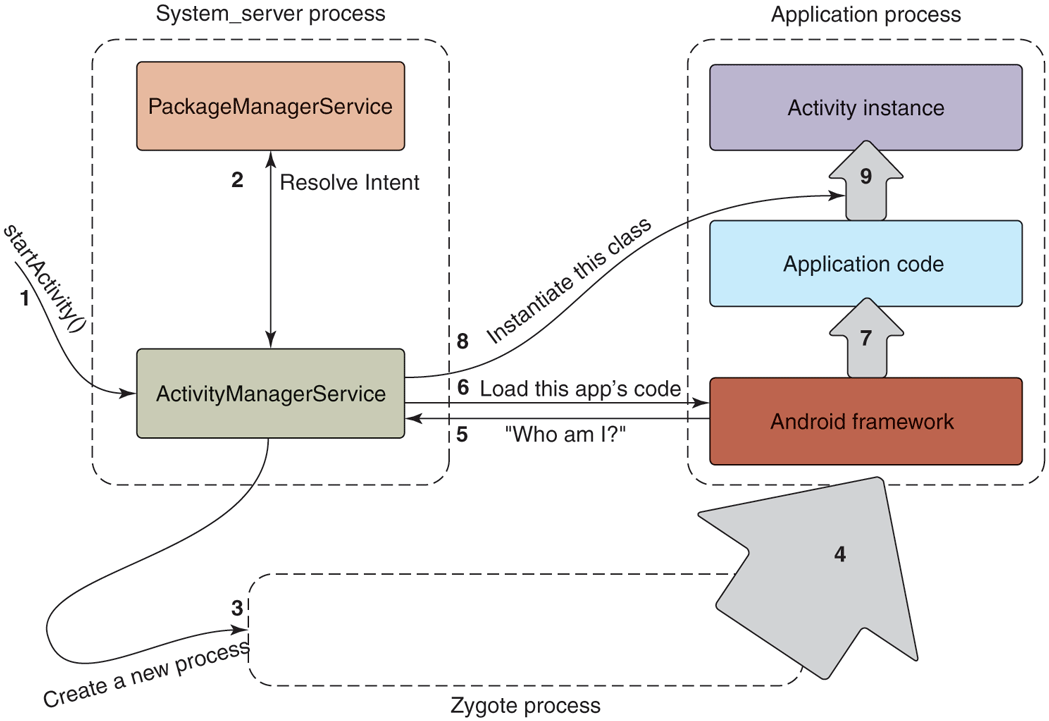

Mixing processes and the Java language like this does introduce some challenges. Bringing up a fresh Java-language environment can take more than a second, even on modern mobile hardware. Recall one of the design goals of Android, to be able to quickly launch applications, with a target of 200 msec. Requiring that a fresh ART process be brought up for this new application would be well beyond that budget. A 200-msec launch is hard to achieve on mobile hardware, even without needing to initialize a new Java-language environment.

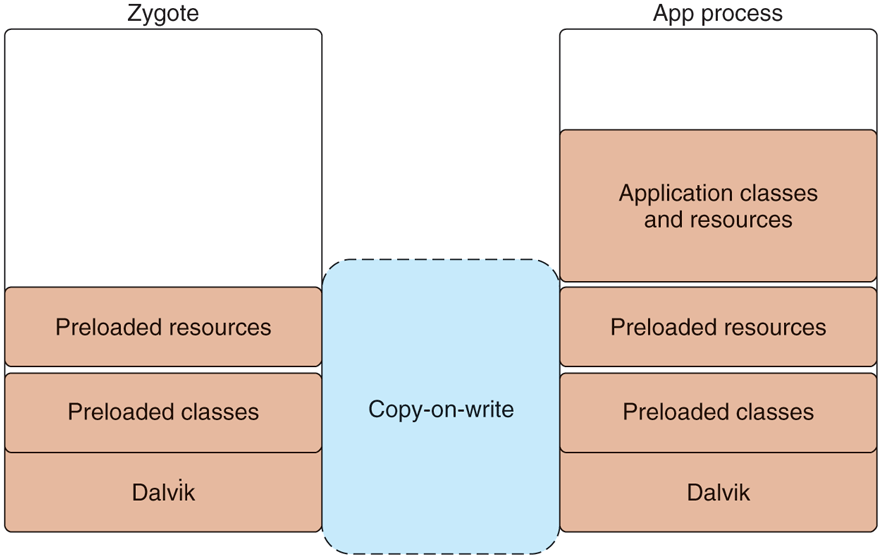

The solution to this problem is the zygote native daemon that we briefly mentioned earlier in the chapter. Zygote is responsible for bringing up and initializing ART, to the point where it is ready to start running system or application code written in Java. All new ART-based processes (system or application) are forked from zygote, allowing them to start execution with the environment already ready to go. This greatly speeds up launching apps.

It is not just ART that zygote brings up. Zygote also preloads many parts of the Android framework that are commonly used in the system and application, as well as loading resources and other things that are often needed.

Note that creating a new process from zygote involves a Linux fork system call but there is no exec system call. The new process is a replica of the original zygote process, with all of its preinitialized state already set up and ready to go. Figure 10-41 illustrates how a new Java application process is related to the original zygote process. After the fork, the new process has its own separate ART environment, though it is sharing all of the preloaded and initialed data with zygote through copy-on-write pages. All that now needs to be done to have the new running process ready to go is to give it the correct identity (UID, etc.), finish any initialization of ART that requires starting threads, and loading the application or system code to be run.

Figure 10-41

Creating a new ART process from zygote.

In addition to launch speed, there is another benefit that zygote brings. Because only a fork is used to create processes from it, the large number of dirty RAM pages needed to initialize ART and preload classes and resources can be shared between zygote and all of its child processes. This sharing is especially important for Android’s environment, where swap is not available; demand paging of clean pages (such as executable code) from ‘‘disk’’ (flash memory) is available. However, any dirty pages must stay locked in RAM; they cannot be paged out to ‘‘disk.’’

10.8.7 Binder IPC

Android’s system design revolves significantly around process isolation, between applications as well as between different parts of the system itself. This requires a large amount of interprocess communication to coordinate between the different processes, which can take a large amount of work to implement and get right. Android’s Binder interprocess communication mechanism is a rich general-purpose IPC facility that most of the Android system is built on top of.

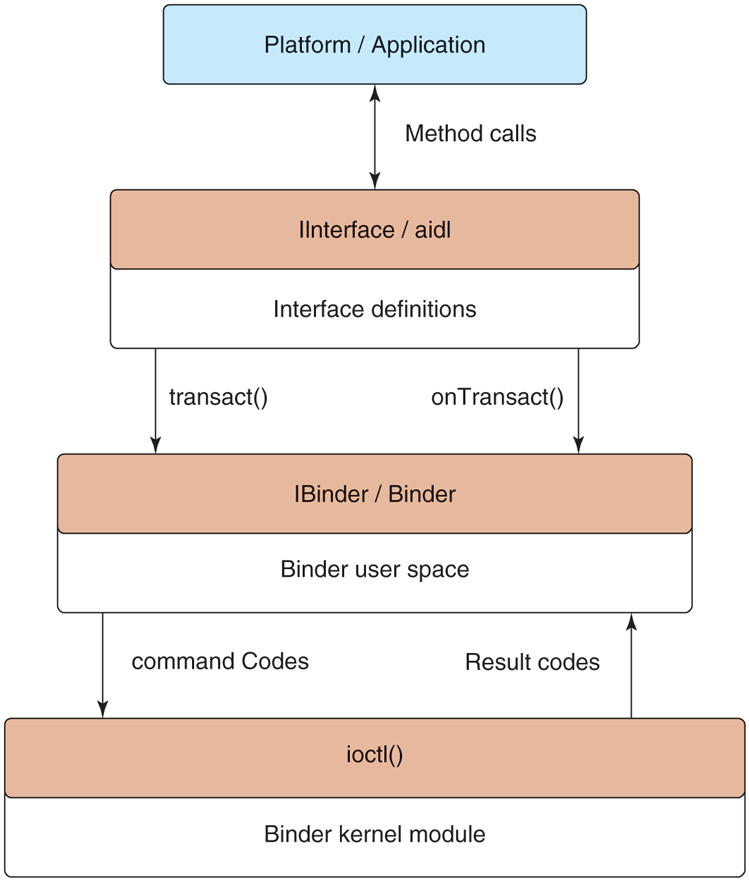

The Binder architecture is divided into three layers, shown in Fig. 10-42. At the bottom of the stack is a kernel module that implements the actual cross-process interaction and exposes it through the kernel’s ioctl function. (ioctl is a general-purpose kernel call for sending custom commands to kernel drivers and modules.) On top of the kernel module is a basic object-oriented user-space API, allowing applications to create and interact with IPC endpoints through the IBinder and Binder classes. At the top is an interface-based programming model where applications declare their IPC interfaces and do not otherwise need to worry about the details of how IPC happens in the lower layers.

Figure 10-42

Binder IPC architecture.

Binder Kernel Module

Rather than use existing Linux IPC facilities such as pipes, Binder includes a special kernel module that implements its own IPC mechanism. The Binder IPC model is different enough from traditional Linux mechanisms that it cannot be efficiently implemented on top of them purely in user space. In addition, Android does not support most of the System V primitives for cross-process interaction (semaphores, shared memory segments, message queues) because they do not provide robust semantics for cleaning up their resources from buggy or malicious applications.

The basic IPC model Binder uses is the RPC (Remote Procedure Call). That is, the sending process is submitting a complete IPC operation to the kernel, which is executed in the receiving process; the sender may block while the receiver executes, allowing a result to be returned back from the call. (Senders optionally may specify they should not block, continuing their execution in parallel with the receiver.) Binder IPC is thus message based, like System V message queues, rather than stream based as in Linux pipes. A message in Binder is referred to as a transaction, and at a higher level can be viewed as a function call across processes.

Each transaction that user space submits to the kernel is a complete operation: it identifies the target of the operation and identity of the sender as well as the complete data being delivered. The kernel determines the appropriate process to receive that transaction, delivering it to a waiting thread in the process.

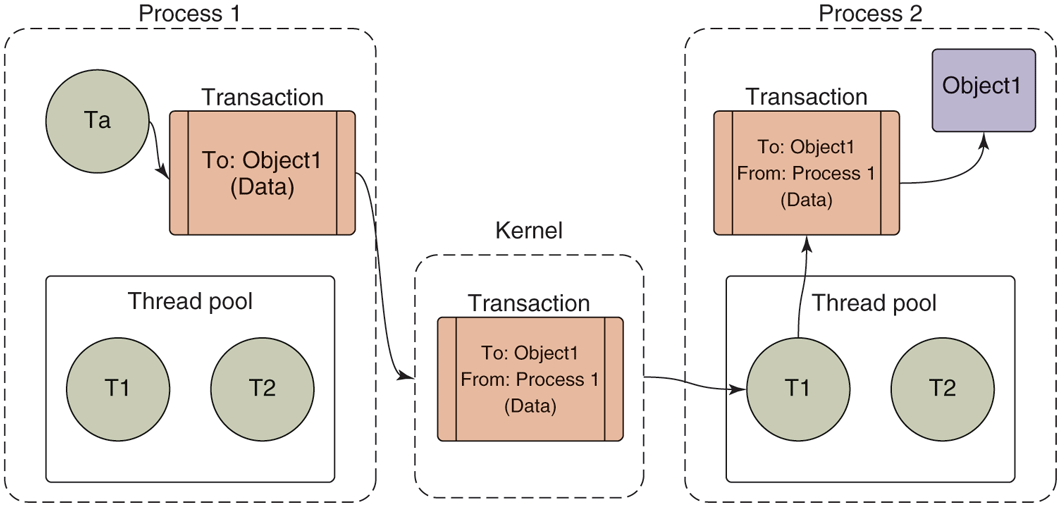

Figure 10-43 illustrates the basic flow of a transaction. Any thread in the originating process may create a transaction identifying its target, and submit this to the kernel. The kernel makes a copy of the transaction, adding to it the identity of the sender. It determines which process is responsible for the target of the transaction and wakes up a thread in the process to receive it. Once the receiving process is executing, it determines the appropriate target of the transaction and delivers it.

Figure 10-43

Basic Binder IPC transaction.

(For the discussion here, we are simplifying the way transaction data moves through the system as two copies, one to the kernel and one to the receiving process’s address space. The actual implementation does this in one copy. For each process that can receive transactions, the kernel creates a shared memory area with it. When it is handling a transaction, it first determines the process that will be receiving that transaction and copies the data directly into that shared address space.)

Note that each process in Fig. 10-43 has a ‘‘thread pool.’’ This is one or more threads created by user space to handle incoming transactions. The kernel will dispatch each incoming transaction to a thread currently waiting for work in that process’s thread pool. Calls into the kernel from a sending process, however, do not need to come from the thread pool—any thread in the process is free to initiate a transaction, such as Ta in Fig. 10-43.

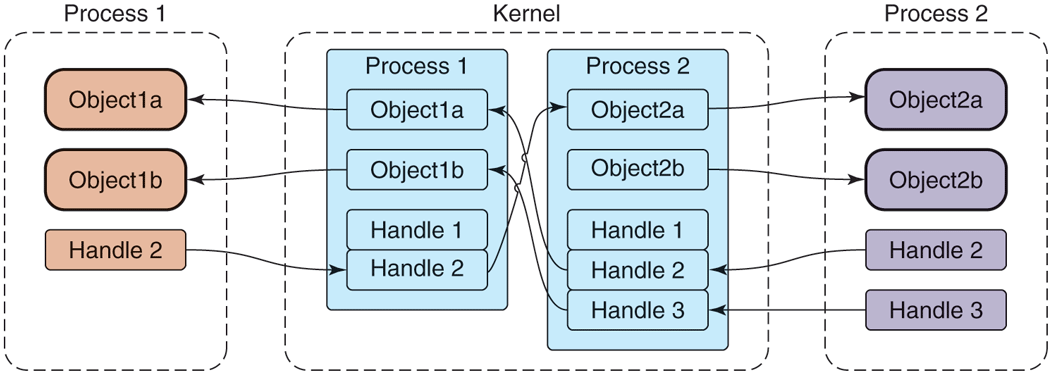

We have already seen that transactions given to the kernel identify a target object; however, the kernel must determine the receiving process. To accomplish this, the kernel keeps track of the available objects in each process and maps them to other processes, as shown in Fig. 10-44. The objects we are looking at here are simply locations in the address space of that process. The kernel only keeps track of these object addresses, with no meaning attached to them; they may be the location of a C data structure, C++ object, or anything else located in that process’s address space.

Figure 10-44

Binder cross-process object mapping.

References to objects in remote processes are identified by an integer handle, which is much like a Linux file descriptor. For example, consider Object2a in Process 2—this is known by the kernel to be associated with Process 2, and further the kernel has assigned Handle 2 for it in Process 1. Process 1 can thus submit a transaction to the kernel targeted to its Handle 2, and from that the kernel can determine this is being sent to Process 2 and specifically Object2a in that process.

Also like file descriptors, the value of a handle in one process does not mean the same thing as that value in another process. For example, in Fig. 10-44, we can see that in Process 1, a handle value of 2 identifies Object2a; however, in Process 2, that same handle value of 2 identifies Object1a. Further, it is impossible for one process to access an object in another process if the kernel has not assigned a handle to it for that process. Again in Fig. 10-44, we can see that Process 2’s Object2b is known by the kernel, but no handle has been assigned to it for Process 1. There is thus no path for Process 1 to access that object, even if the kernel has assigned handles to it for other processes.

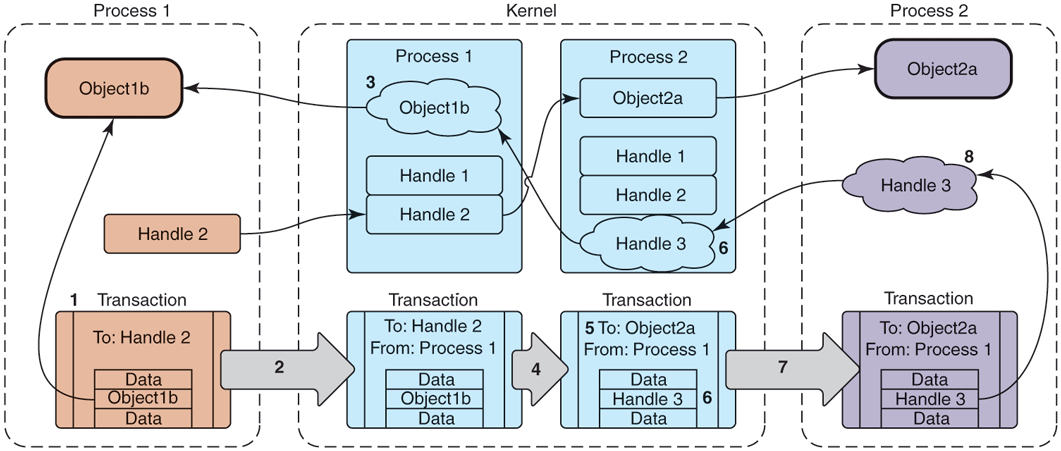

How do these handle-to-object associations get set up in the first place? Unlike Linux file descriptors, user processes do not directly ask for handles. Instead, the kernel assigns handles to processes as needed. This process is illustrated in Fig. 10-45. Here we are looking at how the reference to Object1b from Process 2 to Process 1 in the previous figure may have come about. The key to this is how a transaction flows through the system, from left to right at the bottom of the figure.

Figure 10-45

Transferring Binder objects between processes.

The key steps shown in Fig. 10-45 are as follows:

Process 1 creates the initial transaction structure, which contains the local address Object1b.

Process 1 submits the transaction to the kernel.

The kernel looks at the data in the transaction, finds the address Object1b, and creates a new entry for it since it did not previously know about this address.

The kernel uses the target of the transaction, Handle 2, to determine that this is intended for Object2a which is in Process 2.

The kernel now rewrites the transaction header to be appropriate for Process 2, changing its target to address Object2a.

The kernel likewise rewrites the transaction data for the target process; here it finds that Object1b is not yet known by Process 2, so a new Handle 3 is created for it.

The rewritten transaction is delivered to Process 2 for execution.

Upon receiving the transaction, the process discovers there is a new Handle 3 and adds this to its table of available handles.

If an object within a transaction is already known to the receiving process, the flow is similar, except that now the kernel only needs to rewrite the transaction so that it contains the previously assigned handle or the receiving process’s local object pointer. This means that sending the same object to a process multiple times will always result in the same identity, unlike Linux file descriptors where opening the same file multiple times will allocate a different descriptor each time. The Binder IPC system maintains unique object identities as those objects move between processes.

The Binder architecture essentially introduces a capability-based security model to Linux. Each Binder object is a capability. Sending an object to another process grants that capability to the process. The receiving process may then make use of whatever features the object provides. A process can send an object out to another process, later receive an object from any process, and identify whether that received object is exactly the same object it originally sent out.

Binder User-Space API

Most user-space code does not directly interact with the Binder kernel module. Instead, there is a user-space object-oriented library that provides a simpler API. The first level of these user-space APIs maps fairly directly to the kernel concepts we have covered so far, in the form of three classes:

IBinder is an abstract interface for a Binder object. Its key method is transact, which submits a transaction to the object. The implementation receiving the transaction may be an object either in the local process or in another process; if it is in another process, this will be delivered to it through the Binder kernel module as previously discussed.

Binder is a concrete Binder object. Implementing a Binder subclass gives you a class that can be called by other processes. Its key method is onTransact, which receives a transaction that was sent to it. The main responsibility of a Binder subclass is to look at the transaction data it receives here and perform the appropriate operation.

Parcel is a container for reading and writing data that are in a Binder transaction. It has methods for reading and writing typed data—integers, strings, arrays—but most importantly it can read and write references to any IBinder object, using the appropriate data structure for the kernel to understand and transport that reference across processes.

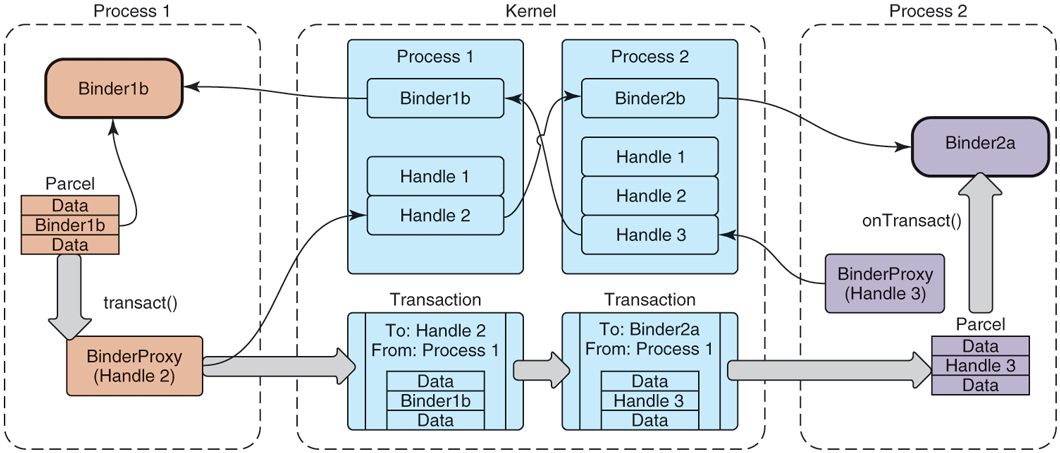

Figure 10-46 depicts how these classes work together, modifying Fig. 10-44 that we previously looked at with the user-space classes that are used. Here we see that Binder1b and Binder2a are instances of concrete Binder subclasses. To perform an IPC, a process now creates a Parcel containing the desired data, and sends it through another class we have not yet seen, BinderProxy. This class is created whenever a new handle appears in a process, thus providing an implementation of IBinder whose transact method creates the appropriate transaction for the call and submits it to the kernel.

Figure 10-46

Binder user-space API.

The kernel transaction structure we had previously looked at is thus split apart in the user-space APIs: the target is represented by a BinderProxy and its data are held in a Parcel. The transaction flows through the kernel as we previously saw and, upon appearing in user space in the receiving process, its target is used to determine the appropriate receiving Binder object while a Parcel is constructed from its data and delivered to that object’s onTransact method.

These three classes now make it fairly easy to write IPC code:

Subclass from Binder.

Implement onTransact to decode and execute incoming calls.

Implement corresponding code to create a Parcel that can be passed to that object’s transact method.

The bulk of this work is in the last two steps. This is the unmarshalling and marshalling code that is needed to turn how we’d prefer to program—using simple method calls—into the operations that are needed to execute an IPC. This is boring and error-prone code to write, so we’d like to let the computer take care of that for us.

Binder Interfaces and AIDL

The final piece of Binder IPC is the one that is most often used, a high-level interface-based programming model. Instead of dealing with Binder objects and Parcel data, here we get to think in terms of interfaces and methods.

The main piece of this layer is a command-line tool called AIDL (for Android Interface Definition Language). This tool is an interface compiler, taking an abstract description of an interface and generating from it the source code that is necessary to define that interface and implement the appropriate marshalling and unmarshalling code needed to make remote calls with it.

Figure 10-47 shows a simple example of an interface defined in AIDL. This interface is called IExample and contains a single method, print, which takes a single String argument.

Figure 10-47

Simple interface described in AIDL.

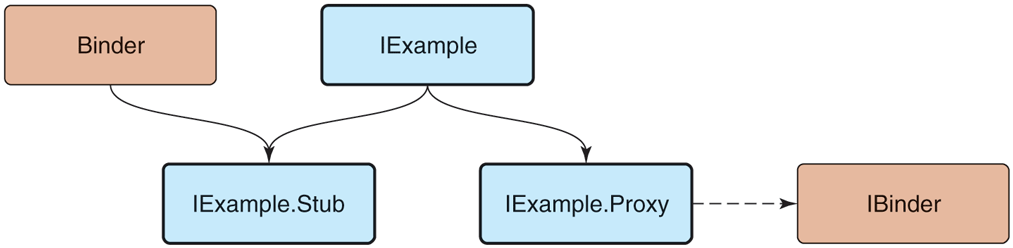

An interface description like that in Fig. 10-47 is compiled by AIDL to generate three Java-language classes illustrated in Fig. 10-48:

-

IExample supplies the Java-language interface definition.

IExample.Stub is the base class for implementations of this interface. It inherits from Binder, meaning it can be the recipient of IPC calls; it inherits from IExample, since this is the interface being implemented. The purpose of this class is to perform unmarshalling: turn incoming onTransact calls in to the appropriate method call of IExample. A subclass of it is then responsible only for implementing the IExample methods.

IExample.Proxy is the other side of an IPC call, responsible for performing marshalling of the call. It is a concrete implementation of IExample, implementing each method of it to transform the call into the appropriate Parcel contents and send it off through a transact call on an IBinder it is communicating with.

Figure 10-48

Binder interface inheritance hierarchy.

With these classes in place, there is no longer any need to worry about the mechanics of an IPC. Implementors of the IExample interface simply derive from IExample.Stub and implement the interface methods as they normally would. Callers will receive an IExample interface that is implemented by IExample.Proxy, allowing them to make regular calls on the interface.

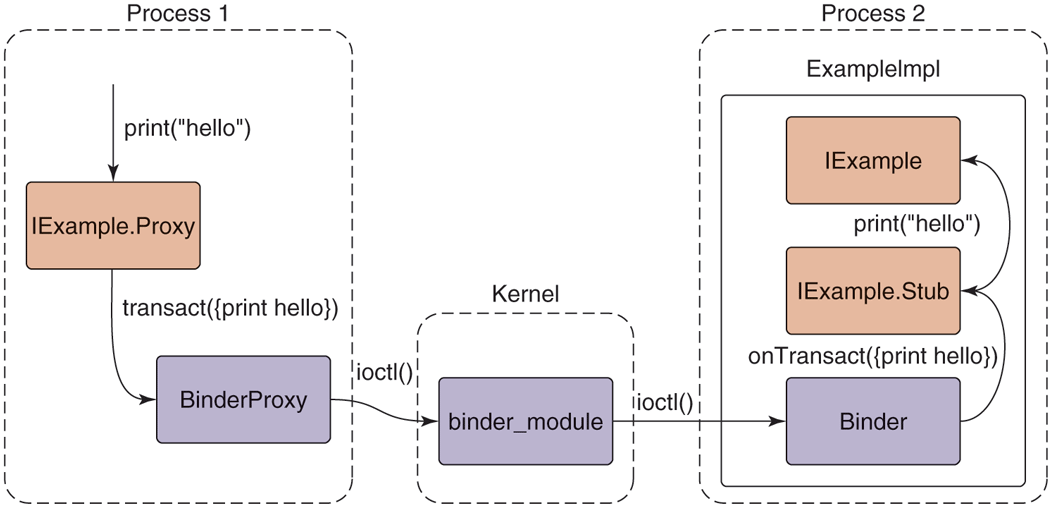

The way these pieces work together to perform a complete IPC operation is shown in Fig. 10-49. A simple print call on an IExample interface turns into:

Figure 10-49

Full path of an AIDL-based Binder IPC.

-

IExample.Proxy marshals the method call into a Parcel, calling transact on the IBinder it is connected to, which is typically a BinderProxy for an object in another process.

BinderProxy constructs a kernel transaction and delivers it to the kernel through an ioctl call.

The kernel transfers the transaction to the intended process, delivering it to a thread that is waiting in its own ioctl call.

The transaction is decoded back into a Parcel and onTransact called on the appropriate local object, here ExampleImpl (which is a subclass of IExample.Stub).

IExample.Stub decodes the Parcel into the appropriate method and arguments to call, here calling print.

The concrete implementation of print in ExampleImpl finally executes.

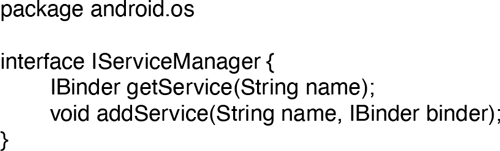

The bulk of Android’s IPC is written using this mechanism. Most services in Android are defined through AIDL and implemented as shown here. Recall the previous Fig. 10-40 showing how the implementation of the package manager in the system server process uses IPC to publish itself with the service manager for other processes to make calls to it. Two AIDL interfaces are involved here: one for the service manager and one for the package manager. For example, Fig. 10-50 shows the basic AIDL description for the service manager; it contains the getService method, which other processes use to retrieve the IBinder of system service interfaces like the package manager.

Figure 10-50

Basic service manager AIDL interface.

10.8.8 Android Applications

Android provides an application model that is very different from a typical command-line environment in the Linux shell or even applications launched from a graphical user interface such as Gnome or KDE. An application is not an executable file with a main entry point; it is a container of everything that makes up that app: its code, graphical resources, declarations about what it is to the system, and other data.

An Android application by convention is a file with the apk extension, for Android Package. This file is actually a normal zip archive, containing everything about the application. The important contents of an apk are as follows:

A manifest describing what the application is, what it does, and how to run it. The manifest must provide a

package namefor the application, a Java-style scoped string (such ascom.android.app.calculator), which uniquely identifies it.Resources needed by the application, including strings it displays to the user, XML data for layouts and other descriptions, graphical bitmaps, etc.

The code itself, which may be ART bytecode as well as native library code.

Signing information, securely identifying the author.

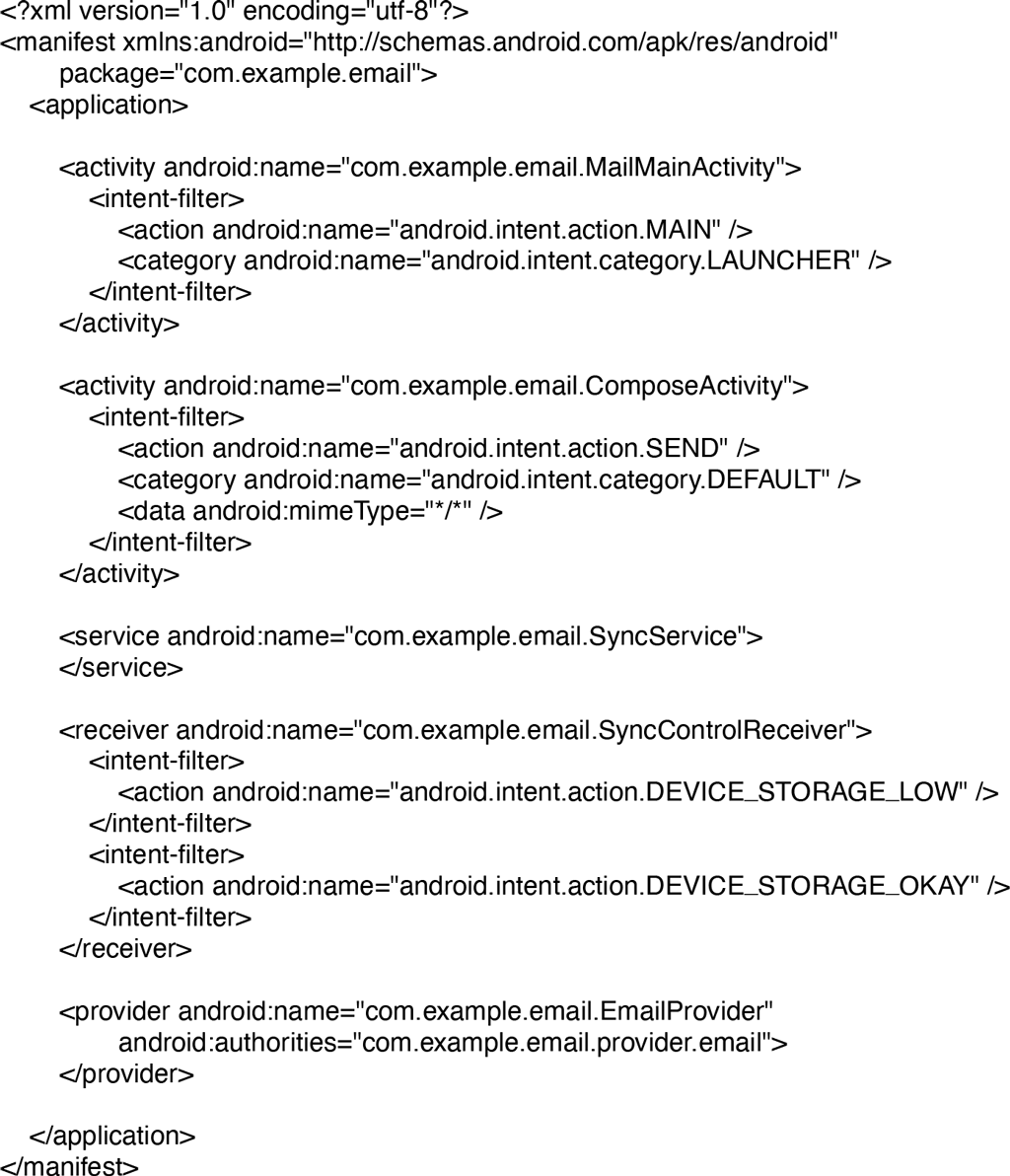

The key part of the application for our purposes here is its manifest, which appears as a precompiled XML file named AndroidManifest.xml in the root of the apk’s zip namespace. A complete example manifest declaration for a hypothetical email application is shown in Fig. 10-51: it allows you to view and compose emails and also includes components needed for synchronizing its local email storage with a server even when the user is not currently in the application.

Figure 10-51

Basic structure of AndroidManifest.xml.

Keep in mind that while what is described here is a real application you could write for Android, in order to focus on illustrating key operating system concepts the example has been simplified and modified from how an actual application like this is typically designed. If you have written an Android application and seeing this example makes you feel like something is off, you are not wrong!

Android applications do not have a simple main entry point that is executed when the user launches them. Instead, they publish under the manifest’s <application> tag a variety of entry points describing the various things the application can do. These entry points are expressed as four distinct types, defining the core types of behavior that applications can provide: activity, receiver, service, and content provider. The example we have presented shows a few activities and one declaration of the other component types, but an application may declare zero or more of any of these.

Each of the different four component types an application can contain has different semantics and uses within the system. In all cases, the android:name attribute supplies the Java class name of the application code implementing that component, which will be instantiated by the system when needed.

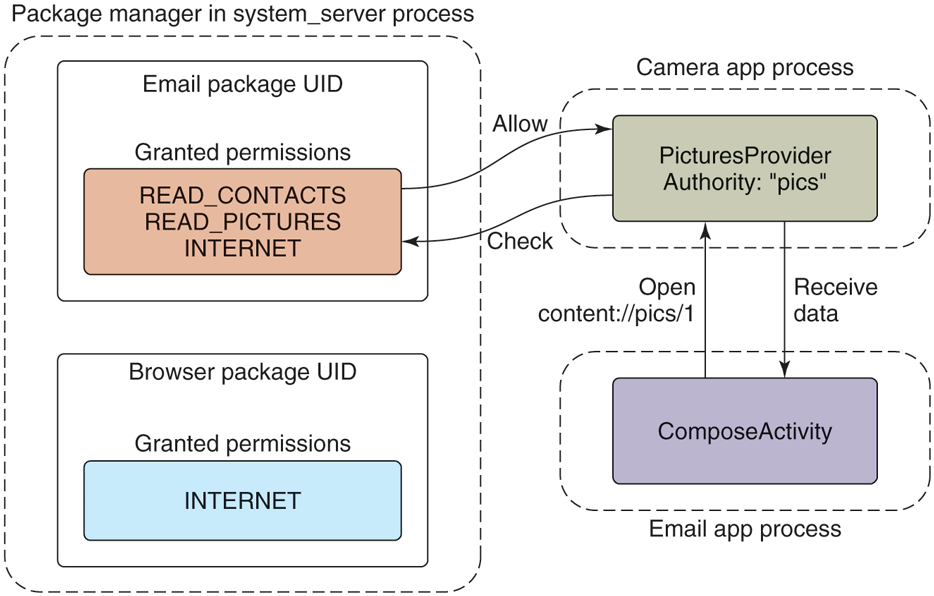

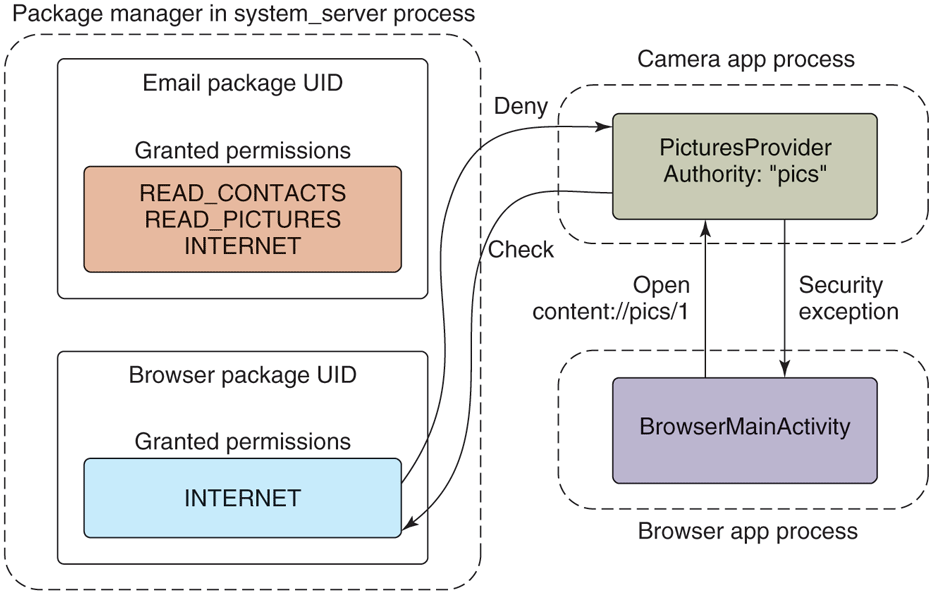

The package manager is the part of Android that keeps track of all application packages. When a user downloads an app, it comes in a package containing everything the app needs. It parses every application’s manifest, collecting and indexing the information it finds in them. With that information, it then provides facilities for clients to query it about the app information those clients are allowed to access, such as whether an app is currently installed and the kinds of things an app can do. It is also responsible for installing applications (creating storage space for the application and ensuring the integrity of the apk) as well as everything needed to uninstall an app, which includes cleaning up everything associated with a previously installed version of the app.

Applications statically declare their entry points in their manifest so they do not need to execute code at install time that registers them with the system. This design makes the system more robust in many ways: since installing an application does not run any application code and the top-level capabilities of the application can always be determined by looking at its manifest, there is no need to keep a separate database of this information about the application which can get out of sync (such as across updates) with the application’s actual capabilities, and it guarantees no information about an application can be left around after it is uninstalled. This decentralized approach was taken to avoid many of these types of problems caused by Windows’ centralized Registry.

Breaking an application into finer-grained components also serves our goal of supporting interoperation and collaboration between applications. Applications can publish pieces of themselves that provide specific functionality, which other applications can make use of either directly or indirectly. This will be illustrated as we look in more detail at the four kinds of components that can be published.

Above the package manager sits another important system service, the activity manager. While the package manager is responsible for maintaining static information about all installed applications, the activity manager determines when, where, and how those applications should run. Despite its name, it is actually responsible for running all four types of application components and implementing the appropriate behavior for each of them.

Activities

An activity is a part of the application that interacts directly with the user through a user interface. When the user launches an application on their device, this is actually an activity inside the application that has been designated as such a main entry point. The application implements code in its activity that is responsible for interacting with the user.

The example email manifest shown in Fig. 10-51 contains two activities. The first is the main mail user interface, allowing users to view their messages; the second is a separate interface for composing a new message. The first mail activity is declared as the main entry point for the application; that is, the activity that will be started when the user launches it from the home screen.

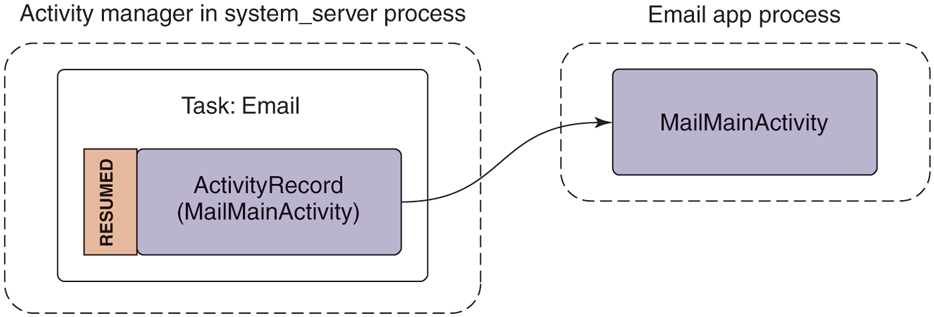

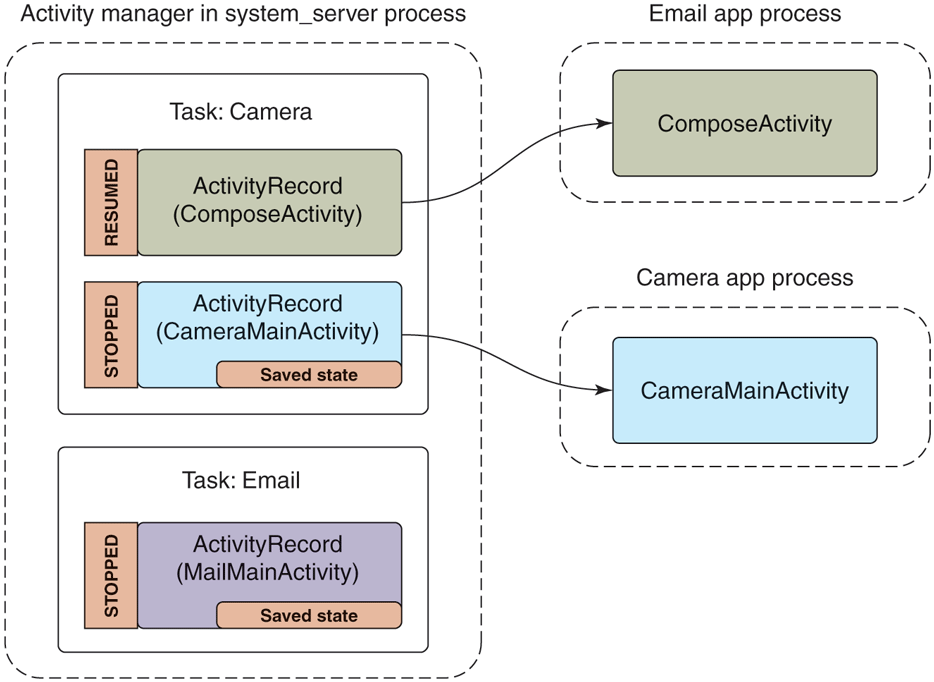

Since the first activity is the main activity, it will be shown to users as an application they can launch from the main application launcher. If they do so, the system will be in the state shown in Fig. 10-52. Here the activity manager, on the left side, has made an internal ActivityRecord instance in its process to keep track of the activity. One or more of these activities are organized into containers called tasks, which roughly correspond to what the user experiences as an application. At this point the activity manager has started the email application’s process and an instance of its MainMailActivity for displaying its main UI, which is associated with the appropriate ActivityRecord. This activity is in a state called resumed since it is now in the foreground of the user interface.

Figure 10-52

Starting an email application’s main activity.

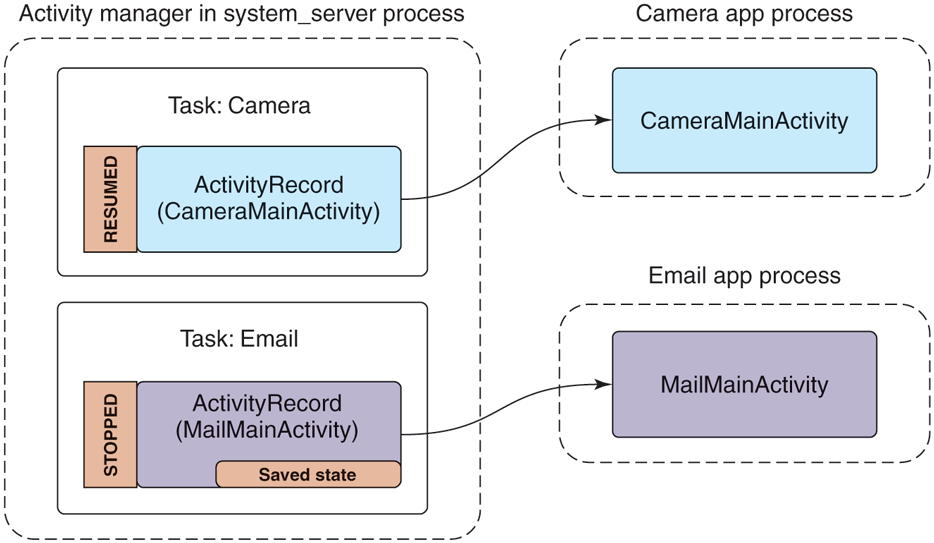

If the user were now to switch away from the email application (not exiting it) and launch a camera application to take a picture, we would be in the state shown in Fig. 10-53. Note that we now have a new camera process running the camera’s main activity, an associated ActivityRecord for it in the activity manager, and it is now the resumed activity. Something interesting also happens to the previous email activity: instead of being resumed, it is now stopped and the ActivityRecord holds this activity’s saved state.

Figure 10-53

Starting the camera application after email.

When an activity is no longer in the foreground, the system automatically asks it to ‘‘save its state.’’ This involves the application creating a minimal amount of state information representing what the user currently sees that it returns to the activity manager; the activity manager, running in the system server process, retains that state in its ActivityRecord for that activity. The saved state for an activity is generally small, for example containing where you are scrolled in an email message; it would not contain data like the message itself, which the app would instead keep somewhere in its own persistent storage (so it remains around even if the user completely removes an activity).

Recall that although Android does demand paging (it can page in and out clean RAM that has been mapped from files on disk, such as code), it does not rely on swap space. This means all dirty RAM pages in an application’s process must stay in RAM. Having the email’s main activity state safely stored away in the activity manager gives the system back some of the flexibility in dealing with memory that swap provides.

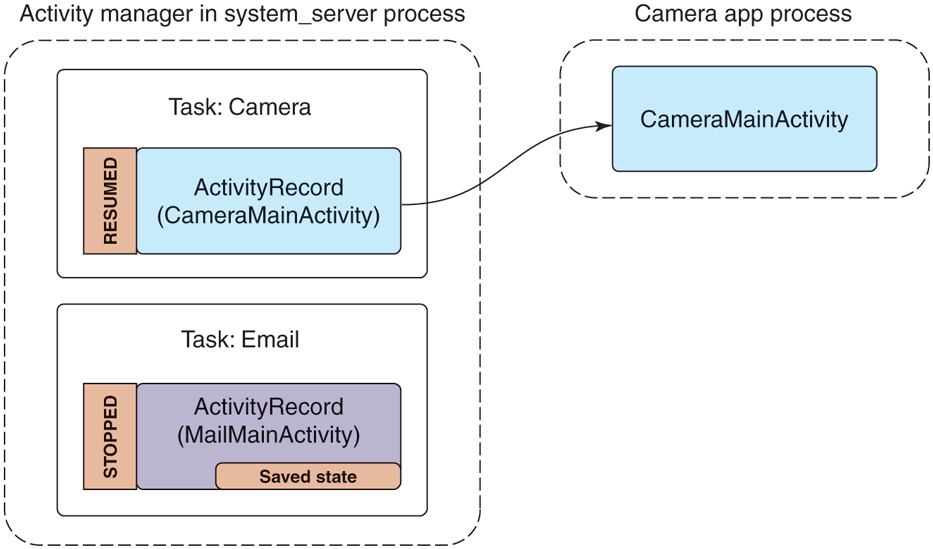

For example, if the camera application starts to require a lot of RAM, the system can simply get rid of the email process, as shown in Fig. 10-54. The ActivityRecord, with its precious saved state, remains safely tucked away by the activity manager in the system server process. Since the system server process hosts all of Android’s core system services, it must always remain running, so the state saved here will remain around for as long as we might need it.

Figure 10-54

Removing the email process to reclaim RAM for the camera.

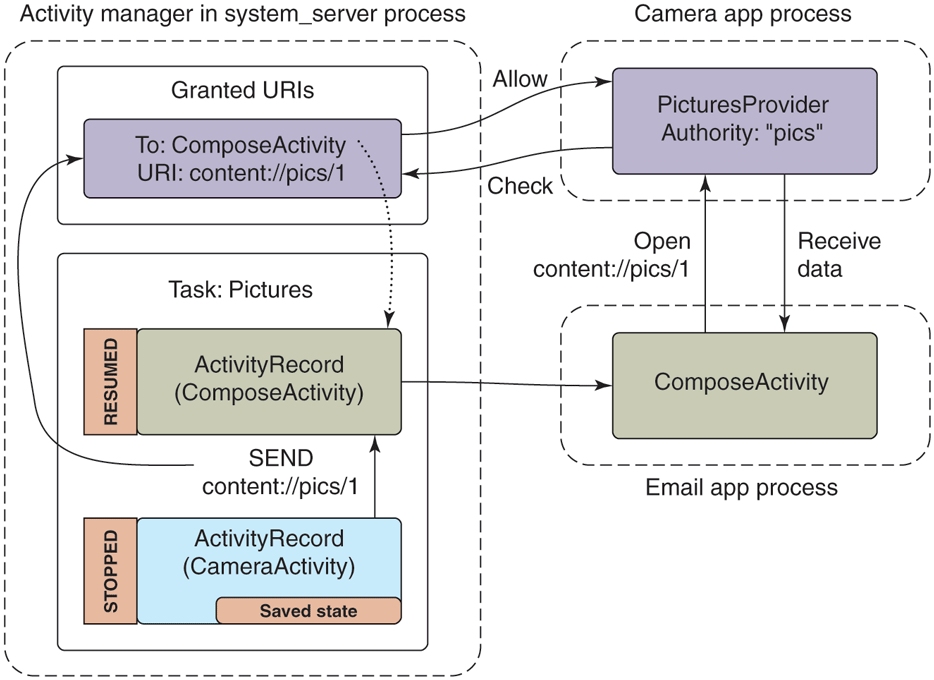

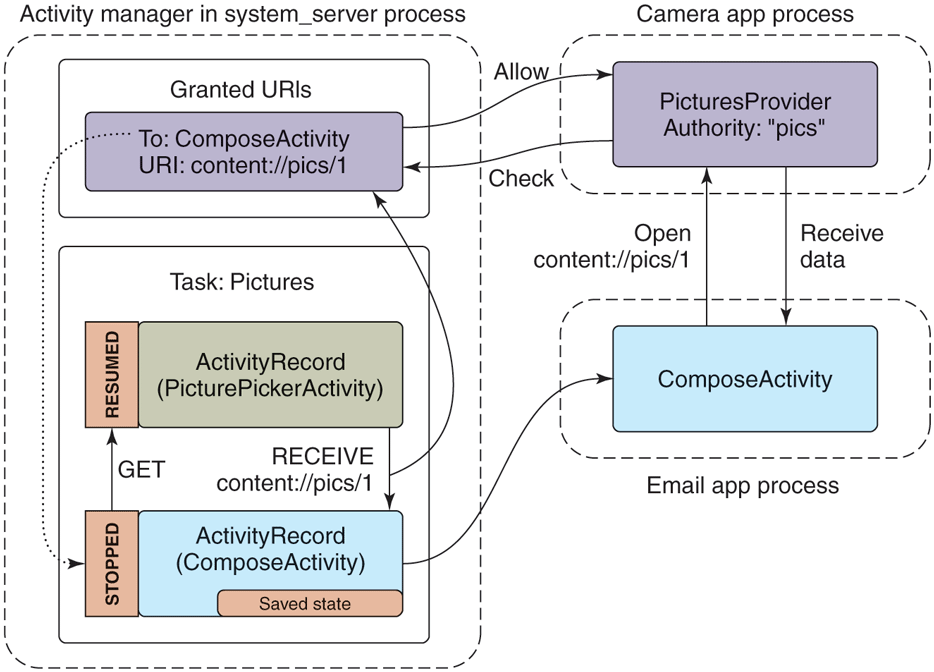

Our example email application not only has an activity for its main UI, but includes another ComposeActivity. Applications can declare any number of activities they want. This can help organize the implementation of an application, but more importantly it can be used to implement cross-application interactions. For example, this is the basis of Android’s cross-application sharing system, which the ComposeActivity here is participating in. If the user, while in the camera application, decides she wants to share a picture she took, our email application’s ComposeActivity is one of the sharing options she has. If it is selected, that activity will be started and given the picture to be shared. (Later we will see how the camera application is able to find the email application’s ComposeActivity.)

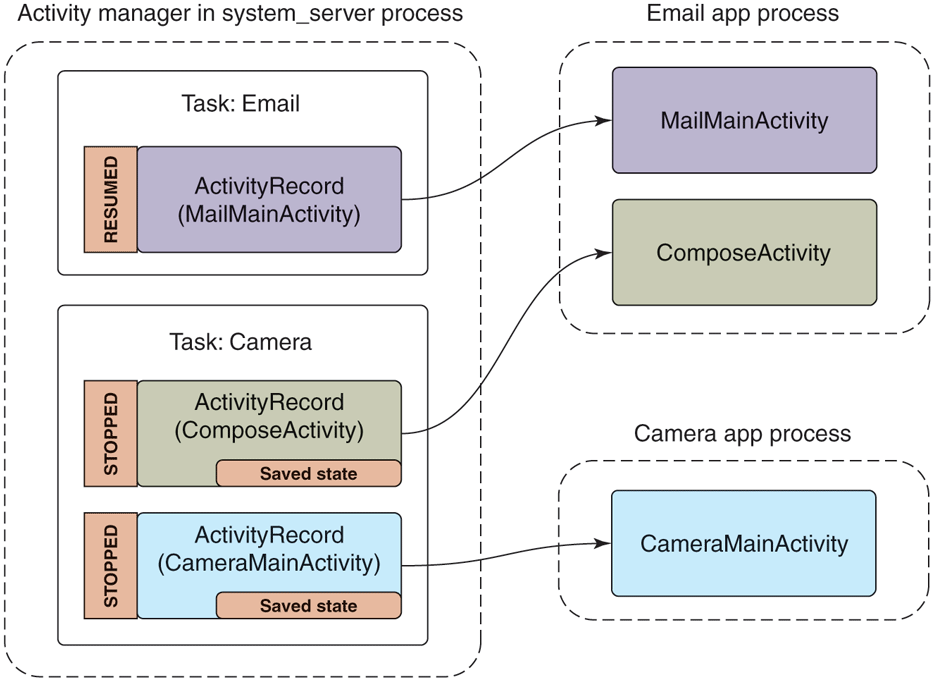

Performing that share option while in the activity state seen in Fig. 10-54 will lead to the new state in Fig. 10-55. There are a number of important things to note:

-

The email app’s process must be started again, to run its ComposeActivity.

However, the old MailMainActivity is not started at this point, since it is not needed. This reduces RAM use.

The camera’s task now has two records: the original CameraMainActivity we had just been in, and the new ComposeActivity that is now displayed. To the user, these are still one cohesive task: it is the camera currently interacting with them to email a picture.

The new ComposeActivity is at the top, so it is resumed; the previous CameraMainActivity is no longer at the top, so its state has been saved. We can at this point safely quit its process if its RAM is needed elsewhere.

Figure 10-55

Sharing a camera picture through the email application.

If you want to experiment yourself with this on Android, it should be noted that starting in Android 5.0 a real share flow would result in the ComposeActivity appearing in its own third task, separate from CameraMainActivity. This was part of a switch to a ‘‘document-centric recents’’ model, described in

https:/

where the tasks we have here that are shown to users could be contextual parts of apps as well as the apps themselves. The activity abstraction between apps and the operating system allowed implementing this kind of significant user experience with little to no modification of the apps themselves.

Finally, let us look at what would happen if the user left the camera task while in this last state (that is, composing an email to share a picture) and returned to the email application. Figure 10-56 shows the new state the system will be in. Note that we have brought the email task with its main activity back to the foreground. This makes MailMainActivity the foreground activity, but there is currently no instance of it running in the application’s process.

Figure 10-56

Returning to the email application.

To return to the previous activity, the system makes a new instance, handing it back the previously saved state the old instance had provided. This action of restoring an activity from its saved state must be able to bring the activity back to the same visual state as the user last left it. To accomplish this, the application will look in its saved state for the message the user was in, load that message’s data from its persistent storage, and then apply any scroll position or other user-interface state that had been saved.

Services

A service has two distinct identities:

It can be a self-contained long-running background operation. Common examples of using services in this way are performing background music playback, maintaining an active network connection (such as with an IRC server) while the user is in other applications, downloading or uploading data in the background, etc.

It can serve as a connection point for other applications or the system to perform rich interaction with the application. This can be used by applications to provide secure APIs for other applications, such as to perform image or audio processing, provide a text to speech, etc.

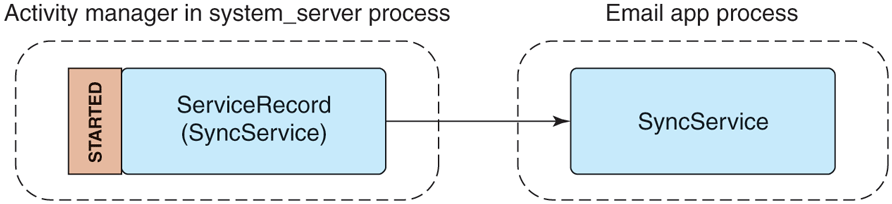

The example email manifest shown in Fig. 10-51 contains a service that is used to perform synchronization of the user’s mailbox. A common implementation would schedule the service to run at a regular interval, such as every 15 minutes, starting the service when it is time to run, and stopping itself when done.

This is a typical use of the first style of service, a long-running background operation. Figure 10-57 shows the state of the system in this case, which is quite simple. The activity manager has created a ServiceRecord to keep track of the service, noting that it has been started, and thus created its SyncService instance in the application’s process. While in this state the service is fully active (barring the entire system going to sleep if not holding a wake lock) and free to do what it wants. It is possible for the application’s process to go away while in this state, such as if the process crashes, but the activity manager will continue to maintain its ServiceRecord and can at that point decide to restart the service if desired.

Figure 10-57

Starting an application service.

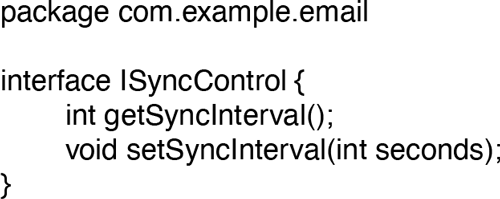

To see how one can use a service as a connection point for interaction with other applications, let us say that we want to extend our existing SyncService to have an API that allows other applications to control its sync interval. We will need to define an AIDL interface for this API, like the one shown in Fig. 10-58.

Figure 10-58

Interface for controlling a sync service’s sync interval.

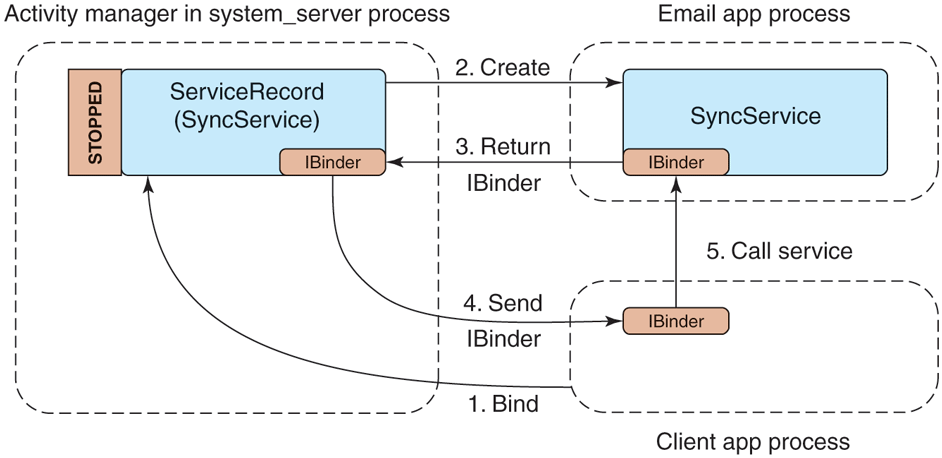

To use this, another process can bind to our application service, getting access to its interface. This creates a connection between the two applications, shown in Fig. 10-59. The steps of this process are as follows:

-

The client application tells the activity manager that it would like to bind to the service.

If the service is not already created, the activity manager creates it in the service application’s process.

The service returns the IBinder for its interface back to the activity manager, which now holds that IBinder in its ServiceRecord.

Now that the activity manager has the service IBinder, it can be sent back to the original client application.

The client application now having the service’s IBinder may proceed to make any direct calls it would like on its interface.

Figure 10-59

Binding to an application service.

Receivers

A receiver is the recipient of (typically external) event s that happen, most of the time in the background and outside of normal user interaction with an app. Receivers conceptually are the same as an application explicitly registering for a callback when something interesting happens (an alarm goes off, data connectivity changes, etc.), but do not require that the application be running in order to receive the event.

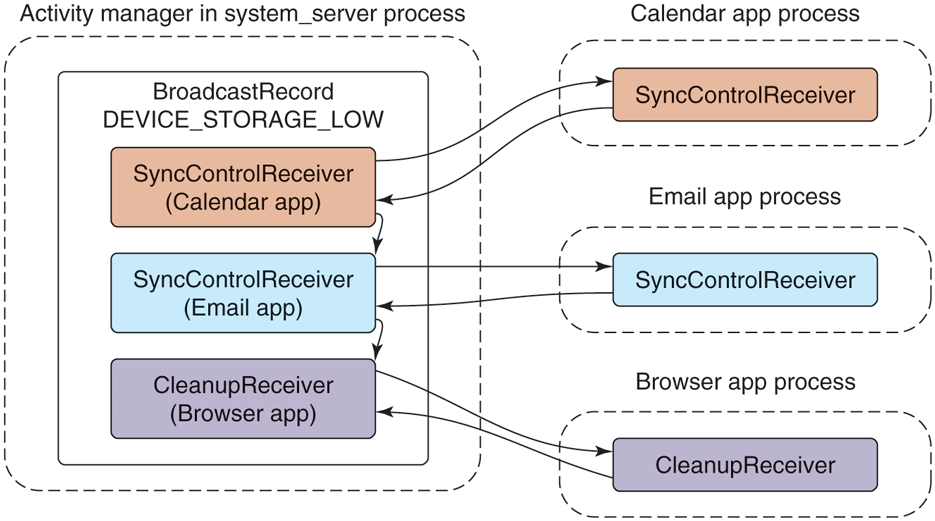

The example email manifest shown in Fig. 10-51 contains a receiver for the application to find out when the device’s storage becomes low in order for it to stop synchronizing email (which may consume more storage). When the device’s storage becomes low, the system will send a broadcast with the low storage code, to be delivered to all receivers interested in the event.

Figure 10-60 illustrates how such a broadcast is processed by the activity manager in order to deliver it to interested receivers. It first asks the package manager for a list of all receivers interested in the event, which is placed in a BroadcastRecord representing that broadcast. The activity manager will then proceed to step through each entry in the list, having each associated application’s process create and execute the appropriate receiver class.

Figure 10-60

Sending a broadcast to application receivers.

Receivers only run as one-shot operations. They are activated only one time. When an event happens, the system finds any receivers interested in it, delivers that event to them, and once they have consumed the event they are done. There is no ReceiverRecord like those we have seen for other application components, because a particular receiver is only a transient entity for the duration of a single broadcast. Each time a new broadcast is sent to a receiver component, a new instance of that receiver’s class is created.

Content Providers

Our last application component, the content provider, is the primary mechanism that applications use to exchange data with each other. All interactions with a content provider are through URIs using a content: scheme; the authority of the URI is used to find the correct content-provider implementation to interact with.

For example, in our email application from Fig. 10-51, the content provider specifies that its authority is com.example.email.provider.email. Thus, URIs operating on this content provider would start with

content://com.example.email.provider.email/The suffix to that URI is interpreted by the provider itself to determine what data within it is being accessed. In the example here, a common convention would be that the URI

content://com.example.email.provider.email/messages

means the list of all email messages, while

content://com.example.email.provider.email/messages/1provides access to a single message at key number 1.

To interact with a content provider, applications always go through a system API called ContentResolver, where most methods have an initial URI argument indicating the data to operate on. One of the most often used ContentResolver methods is query, which performs a database query on a given URI and returns a Cursor for retrieving the structured results. For example, retrieving a summary of all of the available email messages would look something like:

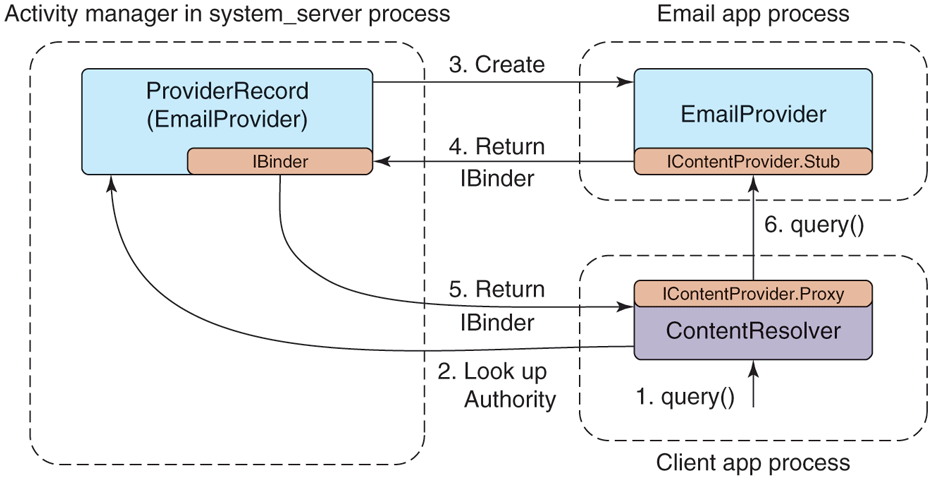

query("content://com.example.email.provider.email/messages")Though this does not look like it to applications, what is actually going on when they use content providers has many similarities to binding to services. Figure 10-61 illustrates how the system handles our query example:

-

The application calls ContentResolver.query to initiate the operation.

The URI’s authority is handed to the activity manager for it to find (via the package manager) the appropriate content provider.

If the content provider is not already running, it is created.

Once created, the content provider returns to the activity manager its IBinder implementing the system’s IContentProvider interface.

The content provider’s Binder is returned to the ContentResolver.

The content resolver can now complete the initial query operation by calling the appropriate method on the AIDL interface, returning the Cursor result.

Figure 10-61

Interacting with a content provider.

Content providers are one of the key mechanisms for performing interactions across applications. For example, if we return to the cross-application sharing system previously described in Fig. 10-55, content providers are the way data are actually transferred. The full flow for this operation is:

A share request that includes the URI of the data to be shared is created and is submitted to the system.

The system asks the ContentResolver for the MIME type of the data behind that URI; this works much like the query method we just discussed, but asks the content provider to return a MIME-type string for the URI.

The system finds all activities that can receive data of the identified MIME type.

A user interface is shown for the user to select one of the possible recipients.

When one of these activities is selected, the system launches it.

The share-handling activity receives the URI of the data to be shared, retrieves its data through ContentResolver, and performs its appropriate operation: creates an email, stores it, etc.

10.8.9 Intents

A detail that we have not yet discussed in the application manifest shown in Fig. 10-51 is the <intent-filter> tags included with the activity and receiver declarations. This is part of the intent feature in Android, which is the cornerstone for how different applications identify each other in order to be able to interact and work together.

An intent is the mechanism Android uses to discover and identify activities, receivers, and services. It is similar in some ways to the Linux shell’s search path, which the shell uses to look through multiple possible directories in order to find an executable matching command names given to it.