12 Harm

Several promises in the oath are related to harm.

First, Do No Harm

Promise 1. I will not produce harmful code.

The first promise of the software professional is DO NO HARM! That means that your code must not harm your users, your employers, your managers, or your fellow programmers.

You must know what your code does. You must know that it works. And you must know that it is clean.

Some time back, it was discovered that some programmers at Volkswagen wrote some code that purposely thwarted Environmental Protection Agency (EPA) emissions tests. Those programmers wrote harmful code. It was harmful because it was deceitful. That code fooled the EPA into allowing cars to be sold that emitted twenty times the amount of harmful nitrogen oxides that the EPA deemed safe. Therefore, that code potentially harmed the health of everyone living where those cars were driven.

What should happen to those programmers? Did they know the purpose of that code? Should they have known?

I’d fire them and prosecute them because, whether they knew or not, they should have known. Hiding behind requirements written by others is no excuse. It’s your fingers on the keyboard, it’s your code. You must know what it does!

That’s a tough one, isn’t it? We write the code that makes our machines work, and those machines are often in positions to do tremendous harm. Because we will be held responsible for any harm our code does, we must be responsible for knowing what our code will do.

Each programmer should be held accountable based on their level of experience and responsibility. As you advance in experience and position, your responsibility for your actions and the actions of those under your charge increases.

Clearly, we can’t hold junior programmers as responsible as team leads. We can’t hold team leads as responsible as senior developers. But those senior people ought to be held to a very high standard and be ultimately responsible for those whom they direct.

That doesn’t mean that all the blame goes to the senior developers or the managers. Every programmer is responsible for knowing what the code does to the level of their maturity and understanding. Every programmer is responsible for the harm their code does.

No Harm to Society

First, you will do no harm to the society in which you live.

This is the rule that the VW programmers broke. Their software might have benefited their employer—Volkswagen. However, it harmed society in general. And we, programmers, must never do that.

But how do you know whether or not you are harming society? For example, is building software that controls weapon systems harmful to society? What about gambling software? What about violent or sexist video games? What about pornography?

If your software is within the law, might it still be harmful to society?

Frankly, that’s a matter for your own judgment. You’ll just have to make the best call you can. Your conscience is going to have to be your guide.

Another example of harm to society was the failed launch of HealthCare.gov, although in this case the harm was unintentional. The Affordable Care Act was passed by the US Congress and signed into law by the president in 2010. Among its many directives was the demand that a Web site be created and activated on October 1, 2013.

Never mind the insanity of specifying, by law, a date certain by which a whole new massive software system must be activated. The real problem was that on October 1, 2013, they actually turned it on.

Do you think, maybe, there were some programmers hiding under their desks that day?

Oh man, I think they turned it on.

Yeah, yeah, they really shouldn’t be doing that.

Oh, my poor mother. Whatever will she do?

This is a case where a technical screwup put a huge new public policy at risk. The law was very nearly overturned because of these failures. And no matter what you think about the politics of the situation, that was harmful to society.

Who was responsible for that harm? Every programmer, team lead, manager, and director who knew that system wasn’t ready and who nevertheless remained silent.

That harm to society was perpetrated by every software developer who maintained a passive-aggressive attitude toward their management, everyone who said, “I’m just doing my job—it’s their problem.” Every software developer who knew something was wrong and yet did nothing to stop the deployment of that system shares part of the blame.

Because here’s the thing. One of the prime reasons you were hired as a programmer was because you know when things are going wrong. You have the knowledge to identify trouble before it happens. Therefore, you have the responsibility to speak up before something terrible happens.

Harm to Function

You must KNOW that your code works. You must KNOW that the functioning of your code will not do harm to your company, your users, or your fellow programmers.

On August 1, 2012, some technicians at Knight Capital Group loaded their servers with new software. Unfortunately, they loaded only seven of the eight servers, leaving the eighth running with the older version.

Why they made this mistake is anybody’s guess. Somebody got sloppy.

Knight Capital ran a trading system. It traded stocks on the New York Stock Exchange. Part of its operation was to take large parent trades and break them up into many smaller child trades in order to prevent other traders from seeing the size of the initial parent trade and adjusting prices accordingly.

Eight years earlier, a simple version of this parent–child algorithm, named Power Peg, was disabled and replaced with something much better called Smart Market Access Routing System (SMARS). Oddly, however, the old Power Peg code was not removed from the system. It was simply disabled with a flag.

That flag had been used to regulate the parent–child process. When the flag was on, child trades were made. When enough child trades had been made to satisfy the parent trade, the flag was turned off.
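The parent–child idea described above can be sketched in a few lines. This is only an illustration, not Knight Capital's code: the function name `split_parent_order` and the fixed `child_size` are assumptions made for the example, and a remaining-quantity counter stands in for the flag. The point to notice is that the loop is bounded by the parent order, so it stops once the parent has been satisfied.

```python
def split_parent_order(parent_quantity, child_size):
    """Break one large parent order into smaller child orders.

    Hypothetical helper: the child quantities always sum to the parent.
    """
    if parent_quantity < 0 or child_size <= 0:
        raise ValueError("parent_quantity must be >= 0 and child_size > 0")

    children = []
    remaining = parent_quantity
    # Keep making child trades only while the parent is unfilled.
    while remaining > 0:
        quantity = min(child_size, remaining)
        children.append(quantity)
        remaining -= quantity
    return children
```

For instance, a parent order of 1,000 shares with a child size of 300 yields three children of 300 and one of 100.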

They disabled the Power Peg code by simply leaving the flag off.

Unfortunately, the new software update that made it on to only seven of the eight servers repurposed that flag. The update turned the flag on, and that eighth server started making child trades in a high-speed infinite loop.
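A minimal sketch of how a repurposed flag can wake up dormant code on a server that missed an update. Everything here is hypothetical: the names `old_build`, `new_build`, and `deploy` are invented, the meaning given to the flag in the new build is an assumption (the account above says only that it was repurposed), and `MAX_STEPS` exists solely so the example terminates, where the real loop ran unchecked.

```python
MAX_STEPS = 5  # cap for the sketch only; nothing capped the real loop


def old_build(order_qty, flag):
    """Old software: the flag still gates the dormant legacy path."""
    trades = []
    if flag:
        # Legacy path: it expects something else to turn the flag off
        # once the parent order is filled. Nothing does that any more.
        for _ in range(MAX_STEPS):
            trades.append(order_qty)
    return trades


def new_build(order_qty, flag):
    """New software: the same flag now means 'route this order once'."""
    return [order_qty] if flag else []


def deploy(servers, order_qty, flag):
    """Send the same order and flag to every server, whatever it runs."""
    return {name: build(order_qty, flag) for name, build in servers.items()}


# Seven servers received the update; the eighth did not.
servers = {f"server{i}": new_build for i in range(1, 8)}
servers["server8"] = old_build
results = deploy(servers, 100, True)
```

In this sketch, every updated server produces one trade, while `server8` produces `MAX_STEPS` of them. Deleting the legacy branch from `old_build` would have made the stale server harmless, which is the structural point taken up later in the chapter.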

The programmers knew something had gone wrong, but they didn’t know exactly what. It took them 45 minutes to shut down that errant server—45 minutes during which it was making bad trades in an infinite loop.

The bottom line is that, in that first 45 minutes of trading, Knight Capital had unintentionally purchased more than $7 billion worth of stock that it did not want and had to sell it off at a $460 million loss. Worse, the company only had $360 million in cash. Knight Capital was bankrupt.

Forty-five minutes. One dumb mistake. Four hundred sixty million dollars.

And what was that mistake? The mistake was that the programmers did not KNOW what their system was going to do.

At this point, you may be worried that I’m demanding that programmers have perfect knowledge about the behavior of their code. Of course, perfect knowledge is not achievable; there will always be a knowledge deficit of some kind.

The issue isn’t the perfection of knowledge. Rather, the issue is to KNOW that there will be no harm.

Those poor guys at Knight Capital had a knowledge deficit that was horribly harmful—and given what was at stake, it was one they should not have allowed to exist.

Another example is the case of Toyota and the software system that made cars accelerate uncontrollably.

As many as 89 people were killed by that software, and more were injured.

Imagine that you are driving in a crowded downtown business district. Imagine that your car suddenly begins to accelerate, and your brakes stop working. Within seconds, you are rocketing through stoplights and crosswalks with no way to stop.

That’s what investigators found that the Toyota software could do—and quite possibly had done.

The software was killing people.

The programmers who wrote that code did not KNOW that their code would not kill—notice the double negative. They did not KNOW that their code would NOT kill. And they should have known that. They should have known that their code would NOT kill.

Now again, this is all about risk. When the stakes are high, you want to drive your knowledge as near to perfection as you can get it. If lives are at stake, you have to KNOW that your code won’t kill anyone. If fortunes are at stake, you have to KNOW that your code won’t lose them.

On the other hand, if you are writing a chat application or a simple shopping cart Web site, neither fortunes nor lives are at stake …

… or are they?

What if someone using your chat application has a medical emergency and types “HELP ME. CALL 911” on your app? What if your app malfunctions and drops that message?

What if your Web site leaks personal information to hackers who use it to steal identities?

And what if the poor functioning of your code drives customers away from your employer and to a competitor?

The point is that it’s easy to underestimate the harm that can be done by software. It’s comforting to think that your software can’t harm anyone because it’s not important enough. But you forget that software is very expensive to write, and at the very least, the money spent on its development is at stake, not to mention whatever the users put at stake by depending on it.

The bottom line is, there’s almost always more at stake than you think.

No Harm to Structure

You must not harm the structure of the code. You must keep the code clean and well organized.

Ask yourself why the programmers at Knight Capital did not KNOW that their code could be harmful.

I think the answer is pretty obvious. They had forgotten that the Power Peg software was still in the system. They had forgotten that the flag they repurposed would activate it. And they assumed that all servers would always have the same software running.

They did not know their system could exhibit harmful behavior because of the harm they had done to the structure of the system by leaving that dead code in place. And that’s one of the big reasons why code structure and cleanliness are so important. The more tangled the structure, the more difficulty in knowing what the code will do. The more the mess, the more the uncertainty.

Take the Toyota case, for example. Why didn’t the programmers know their software could kill people? Do you think the fact that they had more than ten thousand global variables might have been a factor?

Making a mess in the software undermines your ability to know what the software does and therefore your ability to prevent harm.

Messy software is harmful software.

Some of you might object by saying that sometimes a quick and dirty patch is necessary to fix a nasty production bug.

Sure. Of course. If you can fix a production crisis with a quick and dirty patch, then you should do it. No question.

A stupid idea that works is not a stupid idea.

However, you can’t leave that quick and dirty patch in place without causing harm. The longer that patch remains in the code, the more harm it can do.

Remember that the Knight Capital debacle would not have happened if the old Power Peg code had been removed from the code base. It was that old defunct code that actually made the bad trades.

What do we mean by “harm to structure”? Clearly, having thousands of global variables is a structural flaw. So is dead code left in the code base.

Structural harm is harm to the organization and content of the source code. It is anything that makes that source code hard to read, hard to understand, hard to change, or hard to reuse.

It is the responsibility of every professional software developer to know the disciplines and standards of good software structure. They should know how to refactor, how to write tests, how to recognize bad code, how to decouple designs and create appropriate architectural boundaries. They should know and apply the principles of low- and high-level design. And it is the responsibility of every senior developer to make sure that younger developers learn these things and satisfy them in the code that they write.

Soft

The first word in software is soft. Software is supposed to be SOFT. It’s supposed to be easy to change. If we didn’t want it to be easy to change, we’d have called it hardware.

It’s important to remember why software exists at all. We invented it as a means to make the behavior of machines easy to change. To the extent that our software is hard to change, we have thwarted the very reason that software exists.

Remember that software has two values. There’s the value of its behavior, and then there’s the value of its “softness.” Our customers and users expect us to be able to change that behavior easily and without high cost.

Which of these two values is the greater? Which value should we prioritize above the other? We can answer that question with a simple thought experiment.

Imagine two programs. One works perfectly but is impossible to change. The other does nothing correctly but is easy to change. Which is the more valuable?

I hate to be the one to tell you this but, in case you hadn’t noticed, software requirements tend to change, and when they change, that first software will become useless—forever.

On the other hand, that second software can be made to work, because it’s easy to change. It may take some time and money to get it to work initially, but after that, it’ll continue to work forever with minimal effort.

Therefore, it is the second of the two values that should be given priority in all but the most urgent of situations.

What do I mean by urgent? I mean a production disaster that’s losing the company $10 million per minute. That’s urgent.

I do not mean a software startup. A startup is not an urgent situation requiring you to create inflexible software. Indeed, the opposite is true. The one thing that is absolutely certain in a startup is that you are creating the wrong product.

No product survives contact with the user. As soon as you start to put the product into users’ hands, you will discover that the product you’ve built is wrong in a hundred different ways. And if you can’t change it without making a mess, you’re doomed.

This is one of the biggest problems with software startups. Startup entrepreneurs believe they are in an urgent situation requiring them to throw out all the rules and dash to the finish line, leaving a huge mess in their wake. Most of the time, that huge mess starts to slow them down long before they do their first deployment. They’ll go faster and better and will survive a lot longer if they keep the structure of the software from harm.

When it comes to software, it never pays to rush.

—Brian Marick

Tests

Tests come first. You write them first, and you clean them first. You will know that every line of code works because you will have written tests that prove it works.

How can you prevent harm to the behavior of your code if you don’t have the tests that prove that it works?

How can you prevent harm to the structure of your code if you don’t have the tests that allow you to clean it?

And how can you guarantee that your test suite is complete if you don’t follow the three laws of test-driven development (TDD)?

Is TDD really a prerequisite to professionalism? Am I really suggesting that you can’t be a professional software developer unless you practice TDD?

Yes, I think that’s true. Or rather, it is becoming true. It is true for some of us, and with time, it is becoming true for more and more of us. I think the time will come, and relatively soon, when the majority of programmers will agree that practicing TDD is part of the minimum set of disciplines and behaviors that mark a professional developer.

Why do I believe that?

Because, as I said earlier, we rule the world! We write the rules that make the whole world work.

In our society, nothing gets bought or sold without software. Nearly all correspondence is through software. Nearly all documents are written with software. Laws don’t get passed or enforced without it. There is virtually no activity of daily life that does not involve software.

Without software, our society does not function. Software has become the most important component in the infrastructure of our civilization.

Society does not understand this yet. We programmers don’t really understand it yet either. But the realization is dawning that the software we write is critical. The realization is dawning that many lives and fortunes depend on our software. And the realization is dawning that software is being written by people who do not profess a minimum level of discipline.

So, yes, I think TDD, or some discipline very much like it, will eventually be considered a minimum standard behavior for professional software developers. I think our customers and our users will insist on it.

Best Work

Promise 2. The code that I produce will always be my best work. I will not knowingly allow code that is defective in either behavior or structure to accumulate.

Kent Beck once said, “First make it work. Then make it right.”

Getting the program to work is just the first—and easiest—step. The second—and harder—step is to clean the code.

Unfortunately, too many programmers think they are done once they get a program to work. Once it works, they move on to the next program, and then the next, and the next.

In their wake, they leave behind a history of tangled, unreadable code that slows down the whole development team. They do this because they think their value is in speed. They know they are paid a lot, and so they feel that they must deliver a lot of functionality in a short amount of time.

But software is hard and takes a lot of time, and so they feel like they are going too slowly. They feel like they are failing, which creates a pressure that causes them to try to go faster. It causes them to rush. They rush to get the program working, and then they declare themselves to be done—because, in their minds, it’s already taken them too long. The constant tapping of the project manager’s foot doesn’t help, but that’s not the real driver.

I teach lots of classes. In many of them, I give the programmers small projects to code. My goal is to give them a coding experience in which to try out new techniques and new disciplines. I don’t care if they actually finish the project. Indeed, all the code is going to be thrown away.

Yet still I see people rushing. Some stay past the end of the class just hammering away at getting something utterly meaningless to work.

So, although the boss’s pressure doesn’t help, the real pressure comes from inside us. We consider speed of development to be a matter of our own self-worth.

Making It Right

As we saw earlier in this chapter, there are two values to software. There is the value of its behavior and the value of its structure. I also made the point that the value of structure is more important than the value of behavior. This is because to have any long-term value, software systems must be able to react to changes in requirements.

Software that is hard to change also is hard to keep up to date with the requirements. Software with a bad structure can quickly get out of date.

In order for you to keep up with requirements, the structure of the software has to be clean enough to allow, and even encourage, change. Software that is easy to change can be kept up to date with changing requirements, allowing it to remain valuable with the least amount of effort. But if you’ve got a software system that’s hard to change, then you’re going to have a devil of a time keeping that system working when the requirements change.

When are requirements most likely to change? Requirements are most volatile at the start of a project, just after the users have seen the first few features work. That’s because they’re getting their first look at what the system actually does as opposed to what they thought it was going to do.

Consequently, the structure of the system needs to be clean at the very start if early development is to proceed quickly. If you make a mess at the start, then even the very first release will be slowed down by that mess.

Good structure enables good behavior and bad structure impedes good behavior. The better the structure, the better the behavior. The worse the structure, the worse the behavior. The value of the behavior depends critically on the structure. Therefore, the value of the structure is the more critical of the two values, which means that professional developers put a higher priority on the structure of the code than on the behavior.

Yes, first you make it work; but then you make very sure that you continue to make it right. You keep the system structure as clean as possible throughout the lifetime of the project. From its very beginning to its very end, it must be clean.

What Is Good Structure?

Good structure makes the system easy to test, easy to change, and easy to reuse. Changes to one part of the code do not break other parts of the code. Changes to one module do not force massive recompiles and redeployments. High-level policies are kept separate and independent from low-level details.
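As one illustration of keeping high-level policy independent of low-level detail, here is a small sketch. The domain (invoice totals and tax rates) and every name in it (`RateSource`, `InvoicePolicy`, `InMemoryRates`) are invented for the example. The policy depends only on an abstraction it owns; the detail depends on that abstraction, not the other way around.

```python
from abc import ABC, abstractmethod


class RateSource(ABC):
    """Abstraction owned by the high-level policy (hypothetical)."""

    @abstractmethod
    def rate_for(self, region: str) -> float:
        """Return the tax rate for a region as a fraction, e.g. 0.25."""


class InvoicePolicy:
    """High-level policy: knows nothing about where rates come from."""

    def __init__(self, rates: RateSource):
        self._rates = rates

    def total(self, net: float, region: str) -> float:
        return round(net * (1 + self._rates.rate_for(region)), 2)


class InMemoryRates(RateSource):
    """Low-level detail: could be replaced by a database or a service."""

    def __init__(self, table):
        self._table = dict(table)

    def rate_for(self, region: str) -> float:
        return self._table[region]


policy = InvoicePolicy(InMemoryRates({"X": 0.25}))
invoice_total = policy.total(100.0, "X")
```

Because `InvoicePolicy` never names `InMemoryRates`, the storage detail can change without touching the policy, and the policy can be tested with whatever `RateSource` is convenient.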

Poor structure makes a system rigid, fragile, and immobile. These are the traditional design smells.

Rigidity is when relatively minor changes to the system cause large portions of the system to be recompiled, rebuilt, and redeployed. A system is rigid when the effort to integrate a change is much greater than the change itself.

Fragility is when minor changes to the behavior of a system force many corresponding changes in a large number of modules. This creates a high risk that a small change in behavior will break some other behavior in the system. When this happens, your managers and customers will come to believe that you have lost control over the software and don’t know what you are doing.

Immobility is when a module in an existing system contains a behavior you want to use in a new system but is so tangled up within the existing system that you can’t extract it for use in the new system.

These problems are all problems of structure, not behavior. The system may pass all its tests and meet all its functional requirements, yet such a system may be close to worthless because it is too difficult to manipulate.

There’s a certain irony in the fact that so many systems that correctly implement valuable behaviors end up with a structure that is so poor that it negates that value and makes the system worthless.

And worthless is not too strong a word. Have you ever participated in the Grand Redesign in the Sky? This is when developers tell management that the only way to make progress is to redesign the whole system from scratch; those developers have assessed the current system to be worthless.

When managers agree to let the developers redesign the system, it simply means that they have agreed with the developers’ assessment that the current system is worthless.

What is it that causes these design smells that lead to worthless systems? Source code dependencies! How do we fix those dependencies? Dependency management!

How do we manage dependencies? We use the SOLID principles of object-oriented design [5] to keep the structure of our systems free of the design smells that would make it worthless.

5. These principles are described in Robert C. Martin, Clean Code (Addison-Wesley, 2009), and Agile Software Development: Principles, Patterns, and Practices (Pearson, 2003).

Because the value of structure is greater than the value of behavior and depends on good dependency management, and because good dependency management derives from the SOLID principles, it follows that the overall value of the system depends on proper application of the SOLID principles.

That’s quite a claim, isn’t it? Perhaps it’s a bit hard to believe. The value of the system depends on design principles. But we’ve gone through the logic, and many of you have had the experiences to back it up. So, that conclusion bears some serious consideration.

Eisenhower’s Matrix

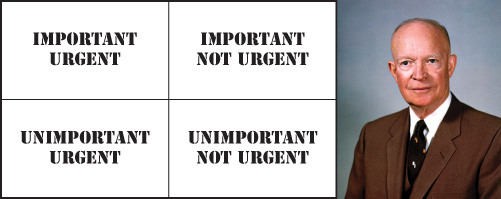

General Dwight D. Eisenhower once said, “I have two kinds of problems, the urgent and the important. The urgent are not important, and the important are never urgent.”

There is a deep truth to this statement—a deep truth about engineering. We might even call this the engineer’s motto:

The greater the urgency, the less the relevance.

Figure 12.1 presents Eisenhower’s decision matrix: urgency on the vertical axis, importance on the horizontal. The four possibilities are urgent and important, urgent and unimportant, important but not urgent, and neither important nor urgent.

Figure 12.1 Eisenhower’s decision matrix

Now let’s arrange these in order of priority. The two obvious cases are important and urgent at the top and neither important nor urgent at the bottom.

The question is, how do you sort the two in the middle: urgent but unimportant and important but not urgent? Which should you address first?

Clearly, things that are important should be prioritized over things that are unimportant. I would argue, furthermore, that if something is unimportant, it shouldn’t be done at all. Doing unimportant things is a waste.

If we eliminate all the unimportant things, we have two left. We do the important and urgent things first. Then we do the important but not urgent things second.

My point is that urgency is about time. Importance is not. Things that are important are long-term. Things that are urgent are short-term. Structure is long-term. Therefore, it is important. Behavior is short-term. Therefore, it is merely urgent.

So, structure, the important stuff, comes first. Behavior is secondary.

Your boss might not agree with that priority, but that’s because it’s not your boss’s job to worry about structure. It’s yours. Your boss simply expects that you will keep the structure clean while you implement the urgent behaviors.

Earlier in this chapter, I quoted Kent Beck: “First make it work, then make it right.” Now I’m saying that structure is a higher priority than behavior. It’s a chicken-and-egg dilemma, isn’t it?

The reason we make it work first is that structure has to support behavior; consequently, we implement behavior first, then we give it the right structure. But structure is more important than behavior. We give it a higher priority. We deal with structural issues before we deal with behavioral issues.

We can square this circle by breaking the problem down into tiny units. Let’s start with user stories. You get a story to work, and then you get its structure right. You don’t work on the next story until that structure is right. The structure of the current story is a higher priority than the behavior of the next story.

Except, stories are too big. We need to go smaller. Not stories, then—tests. Tests are the perfect size.

First you get a test to pass, then you fix the structure of the code that passes that test before you get the next test to pass.

This entire discussion has been the moral foundation for the red → green → refactor cycle of TDD.

It is that cycle that helps us to prevent harm to behavior and harm to structure. It is that cycle that allows us to prioritize structure over behavior. And that’s why we consider TDD to be a design technique as opposed to a testing technique.
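A tiny sketch of one pass through that cycle, shown in its end state. The leap-year example and all the names in it are assumptions chosen for brevity. Each test was red before the line of production code that satisfied it existed, then green, and the function body shown is what remains after refactoring a clumsier chain of `if` statements, with the tests rerun to confirm that behavior did not change.

```python
def is_leap_year(year):
    """Refactored form, reached only after every test below was passing."""

    def divisible_by(n):
        return year % n == 0

    return divisible_by(4) and (not divisible_by(100) or divisible_by(400))


# Each test was written first (red), made to pass (green), and then
# kept as the safety net for the cleanup (refactor).
def test_common_year_is_not_leap():
    assert not is_leap_year(2023)


def test_year_divisible_by_four_is_leap():
    assert is_leap_year(2024)


def test_century_is_not_leap():
    assert not is_leap_year(1900)


def test_fourth_century_is_leap():
    assert is_leap_year(2000)
```

The structure of the code that passes the current test gets fixed before the next test is written, which is the priority ordering argued for above, applied at the smallest practical scale.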

Programmers Are Stakeholders

Remember this. We have a stake in the success of the software. We, programmers, are stakeholders too.

Did you ever think about it that way? Did you ever view yourself as one of the stakeholders of the project?

But, of course, you are. The success of the project has a direct impact on your career and reputation. So, yes, you are a stakeholder.

And as a stakeholder, you have a say in the way the system is developed and structured. I mean, it’s your butt on the line too.

But you are more than just a stakeholder. You are an engineer. You were hired because you know how to build software systems and how to structure those systems so that they last. Along with that knowledge comes the responsibility to produce the best product you can.

Not only do you have the right as a stakeholder, but you have the duty as an engineer, to make sure that the systems you produce do no harm either through bad behavior or bad structure.

A lot of programmers don’t want that kind of responsibility. They’d rather just be told what to do. And that’s a travesty and a shame. It’s wholly unprofessional. Programmers who feel that way should be paid minimum wage because that’s what their work output is worth.

If you don’t take responsibility for the structure of the system, who else will? Your boss?

Does your boss know the SOLID principles? How about design patterns? How about object-oriented design and the practice of dependency inversion? Does your boss know the discipline of TDD? What a self-shunt is, or a test-specific subclass, or a Humble Object? Does your boss understand that things that change together should be grouped together and that things that change for different reasons should be separated?

Does your boss understand structure? Or is your boss’s understanding limited to behavior?

Structure matters. If you aren’t going to care for it, who will?

What if your boss specifically tells you to ignore structure and focus entirely on behavior? You refuse. You are a stakeholder. You have rights too. You are also an engineer with responsibilities that your boss cannot override.

Perhaps you think that refusing will get you fired. It probably will not. Most managers expect to have to fight for things they need and believe in, and they respect those who are willing to do the same.

Oh, there will be a struggle, even a confrontation, and it won’t be comfortable. But you are a stakeholder and an engineer. You can’t just back down and acquiesce. That’s not professional.

Most programmers do not enjoy confrontation. But dealing with confrontational managers is a skill we have to learn. We have to learn how to fight for what we know is right because taking responsibility for the things that matter, and fighting for those things, is how a professional behaves.

Your Best

This promise of the Programmer’s Oath is about doing your best.

Clearly, this is a perfectly reasonable promise for a programmer to make. Of course you are going to do your best, and of course you will not knowingly release code that is harmful.

And, of course, this promise is not always black and white. There are times when structure must bend to schedule. For example, if in order to make a trade show, you have to put in some quick and dirty fix, then so be it.

The promise doesn’t even prevent you from shipping to customers code with less-than-perfect structure. If the structure is close but not quite right, and customers are expecting the release tomorrow, then so be it.

On the other hand, the promise does mean that you will address those issues of behavior and structure before you add more behavior. You will not pile more and more behavior on top of known bad structure. You will not allow those defects to accumulate.

What if your boss tells you to do it anyway? Here’s how that conversation should go.

Boss: I want this new feature added by tonight.

Programmer: I’m sorry, but I can’t do that. I’ve got some structural cleanup to do before I can add that new feature.

Boss: Do the cleanup tomorrow. Get the feature done by tonight.

Programmer: That’s what I did for the last feature, and now I have an even bigger mess to clean up. I really have to finish that cleanup before I start on anything new.

Boss: I don’t think you understand. This is business. Either we have a business or we don’t have a business. And if we can’t get features done, we don’t have a business. Now get the feature done.

Programmer: I understand. Really, I do. And I agree. We have to be able to get features done. But if I don’t clean up the structural problems that have accumulated over the last few days, we’re going to slow down and get even fewer features done.

Boss: Ya know, I used to like you. I used to say, that Danny, he’s pretty nice. But now I don’t think so. You’re not being nice at all. Maybe you shouldn’t be working with me. Maybe I should fire you.

Programmer: Well, that’s your right. But I’m pretty sure you want features done quickly and done right. And I’m telling you that if I don’t do this cleanup tonight, then we’re going to start slowing down. And we’ll deliver fewer and fewer features.

Look, I want to go fast, just like you do. You hired me because I know how to do that. You have to let me do my job. You have to let me do what I know is best.

Boss: You really think everything will slow down if you don’t do this cleanup tonight?

Programmer: I know it will. I’ve seen it before. And so have you.

Boss: And it has to be tonight?

Programmer: I don’t feel safe letting the mess get any worse.

Boss: You can give me the feature tomorrow?

Programmer: Yes, and it’ll be a lot easier to do once the structure is cleaned up.

Boss: Okay. Tomorrow. No later. Now get to it.

Programmer: Okay. I’ll get right on it.

Boss: [aside] I like that kid. He’s got guts. He’s got gumption. He didn’t back down even when I threatened to fire him. He’s gonna go far, trust me—but don’t tell him I said so.

Repeatable Proof

Promise 3. I will produce, with each release, a quick, sure, and repeatable proof that every element of the code works as it should.

Does that sound unreasonable to you? Does it sound unreasonable to be expected to prove that the code you’ve written actually works?

Allow me to introduce you to Edsger Wybe Dijkstra.

Dijkstra

Edsger Wybe Dijkstra was born in Rotterdam in 1930. He survived the bombing of Rotterdam and the German occupation of the Netherlands, and in 1948, he graduated high school with the highest possible marks in math, physics, chemistry, and biology.

In March 1952, at the age of 21, and just 9 months before I would be born, he took a job with the Mathematical Center in Amsterdam as the Netherlands’ very first programmer.

In 1957, he married Maria Debets. In the Netherlands, at the time, you had to state your profession as part of the marriage rites. The authorities were unwilling to accept “programmer” as his profession. They’d never heard of such a profession. Dijkstra settled for “theoretical physicist.”

In 1955, having been a programmer for 3 years, and while still a student, he concluded that the intellectual challenge of programming was greater than the intellectual challenge of theoretical physics, and as a result, he chose programming as his long-term career.

In making this decision, he conferred with his boss, Adriaan van Wijngaarden. Dijkstra was concerned that no one had identified a discipline or science of programming and that he would therefore not be taken seriously. His boss replied that Dijkstra might very well be one of the people who would make it a science.

In pursuit of that goal, Dijkstra was compelled by the idea that software was a formal system, a kind of mathematics. He reasoned that software could become a mathematical structure rather like Euclid’s Elements—a system of postulates, proofs, theorems, and lemmas. He therefore set about creating the language and discipline of software proofs.

Proving Correctness

Dijkstra realized that there were only three techniques we could use to prove the correctness of an algorithm: enumeration, induction, and abstraction. Enumeration is used to prove that two statements in sequence or two statements selected by a Boolean expression are correct. Induction is used to prove that a loop is correct. Abstraction is used to break groups of statements into smaller provable chunks.

If this sounds hard, it is.

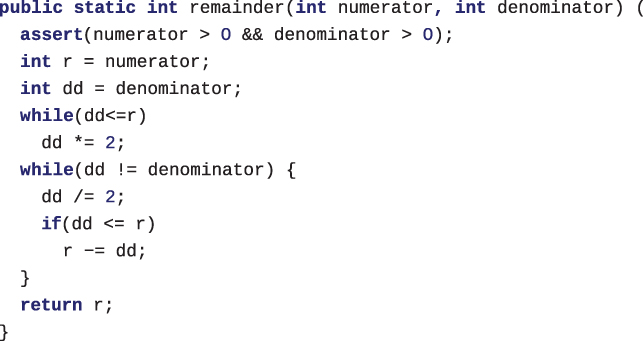

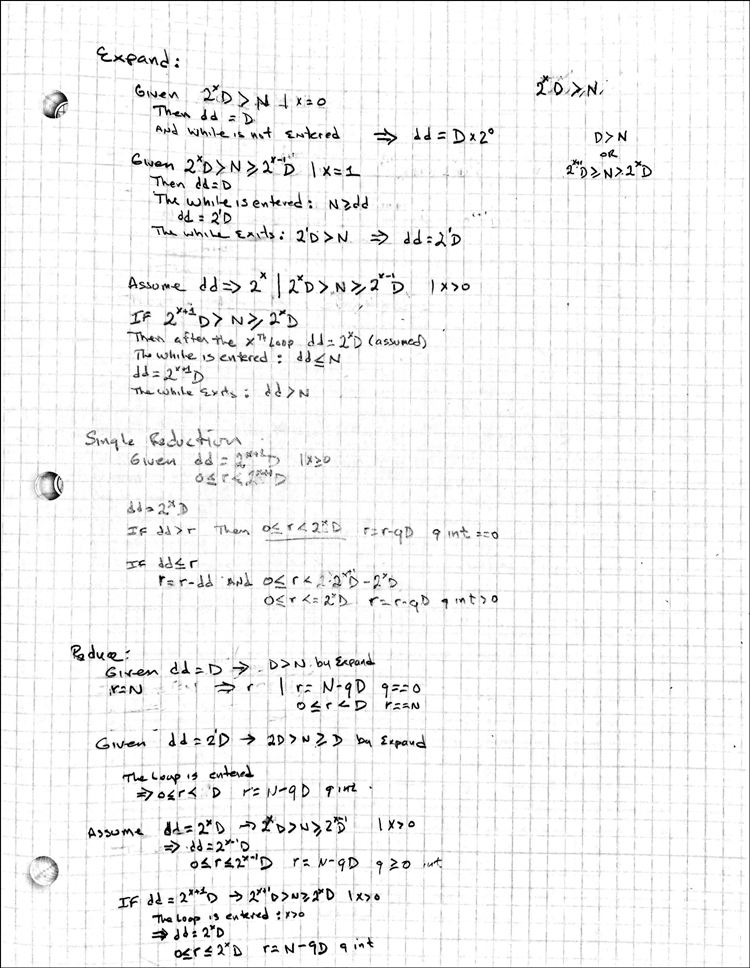

As an example of just how hard this is, I have included a simple Java program for calculating the remainder of an integer (Figure 12.2), and the handwritten proof of that algorithm (Figure 12.3).6

6. This is a translation into Java of a demonstration from Dijkstra’s work.

Figure 12.2 A simple Java program

Figure 12.3 Handwritten proof of the algorithm

I think you can see the problem with this approach. Indeed, this is something that Dijkstra complained bitterly about:

Of course I would not dare to suggest (at least at present!) that it is the programmer’s duty to supply such a proof whenever he writes a simple loop in his program. If so, he could never write a program of any size at all.

Dijkstra’s hope was that such proofs would become more practical through the creation of a library of theorems, again similar to Euclid’s Elements.

But Dijkstra did not understand just how prevalent and pervasive software would become. He did not foresee, in those early days, that computers would outnumber people and that vast quantities of software would be running in the walls of our homes, in our pockets, and on our wrists. Had he known, he would have realized that the library of theorems he envisioned would be far too vast for any mere human to grasp.

So, Dijkstra’s dream of explicit mathematical proofs for programs has faded into near oblivion. Oh, there are some holdouts who hope against hope for a resurgence of formal proofs, but their vision has not penetrated very far into the software industry at large.

Although the dream may have passed, it drew something deeply profound in its wake. Something that we use today, almost without thinking about it.

Structured Programming

In the early days of programming, the 1950s and 1960s, we used languages such as Fortran. Have you ever seen Fortran? Here, let me show you what it was like.

          WRITE(4,99)
    99    FORMAT(" NUMERATOR:")
          READ(4,100)NN
          WRITE(4,98)
    98    FORMAT(" DENOMINATOR:")
          READ(4,100)ND
    100   FORMAT(I6)
          NR=NN
          NDD=ND
    1     IF(NDD-NR)2,2,3
    2     NDD=NDD*2
          GOTO 1
    3     IF(NDD-ND)4,10,4
    4     NDD=NDD/2
          IF(NDD-NR)5,5,6
    5     NR=NR-NDD
    6     GOTO 3
    10    WRITE(4,20)NR
    20    FORMAT(" REMAINDER:",I6)
          END

This little Fortran program implements the same remainder algorithm as the earlier Java program.

Now I’d like to draw your attention to those GOTO statements. You probably haven’t seen statements like that very often, because nowadays we look on them with disfavor. In fact, most modern languages don’t even have GOTO statements like that anymore.

Why don’t we favor GOTO statements? Why don’t our languages support them anymore? Because in 1968, Dijkstra wrote a letter to the editor of Communications of the ACM, titled “Go To Statement Considered Harmful.”7

7. Edsger W. Dijkstra, “Go To Statement Considered Harmful,” Communications of the ACM 11, no. 3 (1968), 147–148.

Why did Dijkstra consider the GOTO statement to be harmful? It all comes back to the three strategies for proving a function correct: enumeration, induction, and abstraction.

Enumeration depends on the fact that each statement in sequence can be analyzed independently and that the result of one statement feeds into the next. It should be clear to you that in order for enumeration to be an effective technique for proving the correctness of a function, every statement that is enumerated must have a single entry point and a single exit point. Otherwise, we could not be sure of either the inputs or the outputs of a statement.

What’s more, induction is simply a special form of enumeration, where we assume the enumerated statement is true for some x and then prove by enumeration that it is true for x + 1.

Thus, the body of a loop must be enumerable. It must have a single entry and a single exit.

GOTO is considered harmful because a GOTO statement can jump into or out of the middle of an enumerated sequence. GOTOs make enumeration intractable, making it impossible to prove an algorithm correct by enumeration or induction.
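To make the inductive argument concrete, here is a minimal Java sketch of a loop whose body has a single entry and a single exit. The class name `InvariantDemo`, the method `sumTo`, and the runtime invariant check are illustrative assumptions, not anything taken from Dijkstra's own notation. The check stands in for the inductive step: if the invariant holds before a pass through the body, it still holds after.

```java
final class InvariantDemo {
    // Sums the integers 1..n with a plain while loop. Assumes n >= 0
    // and n small enough that the arithmetic does not overflow.
    static int sumTo(int n) {
        int sum = 0;
        int i = 0;
        // Invariant: sum == i * (i + 1) / 2.
        // Base case: it holds on entry, because 0 == 0 * 1 / 2.
        while (i < n) {
            i = i + 1;
            sum = sum + i;
            // Inductive step: assuming the invariant held before this pass,
            // it must hold again here, at the single exit of the body.
            if (sum != i * (i + 1) / 2) {
                throw new IllegalStateException("invariant broken at i=" + i);
            }
        }
        // Single exit of the loop: i == n, so sum == n * (n + 1) / 2.
        return sum;
    }
}
```

Because nothing jumps into or out of the middle of the body, the reasoning about one pass applies unchanged to every pass. A GOTO into the middle of the loop would bypass the point where the invariant is known to hold.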

Dijkstra recommended that, in order to keep code provable, it be constructed of three standard building blocks.

Sequence, which we depict as two or more statements ordered in time. This simply represents nonbranching lines of code.

Selection, depicted as two or more statements selected by a predicate. This simply represents if/else and switch/case statements.

Iteration, depicted as a statement repeated under the control of a predicate. This represents a while or for loop.

Böhm and Jacopini had proved in 1966 that any program, no matter how complicated, can be composed of nothing more than these three structures. Dijkstra argued that programs structured in that manner are provable.

He called the technique structured programming.
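As a rough illustration, the Fortran remainder listing shown earlier can be rewritten using only sequence, selection, and iteration. The Java sketch below is a direct translation of that listing. It is not necessarily identical to the program in Figure 12.2, and the class name `Remainder`, the method name, and the variable names are assumptions made for this example. It also assumes a nonnegative numerator, a positive denominator, and values small enough that the doubling does not overflow.

```java
final class Remainder {
    // Remainder of numerator / denominator by repeated doubling and halving.
    // Assumes numerator >= 0, denominator > 0, and no integer overflow.
    static int remainder(int numerator, int denominator) {
        // Sequence: two assignments, one after the other.
        int r = numerator;
        int dd = denominator;

        // Iteration: double dd until it exceeds r (labels 1 and 2 in the Fortran).
        while (dd <= r) {
            dd = dd * 2;
        }

        // Iteration: halve dd back down to the denominator (labels 3 through 6).
        while (dd != denominator) {
            dd = dd / 2;
            // Selection: subtract only when dd still fits into r.
            if (dd <= r) {
                r = r - dd;
            }
        }
        return r;
    }
}
```

Every block here has one way in and one way out, so each can be reasoned about on its own. For example, `Remainder.remainder(17, 5)` doubles `dd` to 20, then halves it to 10 (leaving `r` at 7) and to 5 (leaving `r` at 2), and returns 2.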

Why is this important if we aren’t going to write those proofs? If something is provable, it means you can reason about it. If something is unprovable, it means you cannot reason about it. And if you can’t reason about it, you can’t properly test it.

Functional Decomposition

In 1968, Dijkstra’s ideas were not immediately popular. Most of us were using languages that depended on GOTO, so the idea of abandoning GOTO or imposing discipline on GOTO was abhorrent.

The debate over Dijkstra’s ideas raged for several years. We didn’t have an Internet in those days, so we didn’t use Facebook memes or flame wars. But we did write letters to the editors of the major software journals of the day. And those letters raged. Some claimed Dijkstra to be a god. Others claimed him to be a fool. Just like social media today, except slower.

But in time, the debate slowed, and Dijkstra’s position gained increasing support until, nowadays, most of the languages we use simply don’t have a GOTO.

Nowadays, we are all structured programmers because our languages don’t give us a choice. We all build our programs out of sequence, selection, and iteration. And very few of us make regular use of unconstrained GOTO statements.

An unintended side effect of composing programs from those three structures was a technique called functional decomposition. Functional decomposition is the process whereby you start at the top level of your program and recursively break it down into smaller and smaller provable units. It is the reasoning process behind structured programming. Structured programmers reason from the top down through this recursive decomposition into smaller and smaller provable functions.
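A small sketch of what that decomposition can look like in Java follows. The problem (averaging the valid scores in an array), the class name `ScoreReport`, and the rule that a valid score lies between 0 and 100 are all hypothetical, chosen only to show the shape of the technique.

```java
final class ScoreReport {
    // Top level: reads as a short sequence of smaller steps.
    static double averageValidScore(int[] scores) {
        int count = countValid(scores);
        double average = 0.0; // assumed convention when there are no valid scores
        if (count > 0) {
            average = (double) sumValid(scores) / count;
        }
        return average;
    }

    // Second level: one loop, one entry, one exit.
    static int countValid(int[] scores) {
        int count = 0;
        for (int score : scores) {
            if (isValid(score)) {
                count = count + 1;
            }
        }
        return count;
    }

    // Second level: same structure, different accumulation.
    static int sumValid(int[] scores) {
        int sum = 0;
        for (int score : scores) {
            if (isValid(score)) {
                sum = sum + score;
            }
        }
        return sum;
    }

    // Leaf: small enough to verify by inspection.
    static boolean isValid(int score) {
        return score >= 0 && score <= 100;
    }
}
```

Each function is built only from sequence, selection, and iteration, and each can be reasoned about, or tested, without looking inside the others.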

This connection between structured programming and functional decomposition was the basis for the structured revolution that took place in the 1970s and 1980s. People like Ed Yourdon, Larry Constantine, Tom DeMarco, and Meilir Page-Jones popularized the techniques of structured analysis and structured design during those decades.

Test-Driven Development

TDD, the red → green → refactor cycle, is functional decomposition. After all, you have to write tests against small bits of the problem. That means that you must functionally decompose the problem into testable elements.

The result is that every system built with TDD is built from functionally decomposed elements that conform to structured programming. And that means the system they compose is provable.

And the tests are the proof.

Or, rather, the tests are the theory.

The tests created by TDD are not a formal, mathematical proof, as Dijkstra wanted. In fact, Dijkstra is famous for saying that tests can only prove a program wrong; they can never prove a program right.

This is where Dijkstra missed it, in my opinion. Dijkstra thought of software as a kind of mathematics. He wanted us to build a superstructure of postulates, theorems, corollaries, and lemmas.

Instead, what we have realized is that software is a kind of science. We validate that science with experiments. We build a superstructure of theories based on passing tests, just as all other sciences do.

Have we proven the theory of evolution, or the theory of relativity, or the Big Bang theory, or any of the major theories of science? No. We can’t prove them in any mathematical sense.

But we believe them, within limits, nonetheless. Indeed, every time you get into a car or an airplane, you are betting your life that Newton’s laws of motion are correct. Every time you use GPS, you are betting that Einstein’s theory of relativity is correct.

The fact that we have not mathematically proven these theories correct does not mean that we don’t have sufficient proof to depend on them, even with our lives.

That’s the kind of proof that TDD gives us. Not formal mathematical proof but experimental empirical proof. The kind of proof we depend on every day.

And that brings us back to the third promise in the Programmer’s Oath:

I will produce, with each release, a quick, sure, and repeatable proof that every element of the code works as it should.

Quick, sure, and repeatable. Quick means that the test suite should run in a very short amount of time. Minutes instead of hours.

Sure means that when the test suite passes, you know you can ship.

Repeatable means that those tests can be run by anybody at any time to ensure that the system is working properly. Indeed, we want the tests run many times per day.
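Here is a minimal sketch of what such a suite might look like, written in plain Java with no test framework so that it stands alone. The leap-year function under test and the class name `LeapYearSuite` are hypothetical, and a real project would more likely use a framework such as JUnit. Each check is an experiment that could falsify the claim that the code works. The suite is quick because it does only in-memory work, and repeatable because it uses no clock, network, or random input.

```java
final class LeapYearSuite {
    // Hypothetical code under test.
    static boolean isLeapYear(int year) {
        return (year % 4 == 0 && year % 100 != 0) || year % 400 == 0;
    }

    // Runs every experiment and returns the claims that failed,
    // one per line. An empty string means the whole suite passed.
    static String run() {
        StringBuilder failures = new StringBuilder();
        check(failures, "2024 is a leap year", isLeapYear(2024));
        check(failures, "2023 is not a leap year", !isLeapYear(2023));
        check(failures, "1900 is not a leap year", !isLeapYear(1900));
        check(failures, "2000 is a leap year", isLeapYear(2000));
        return failures.toString();
    }

    // Records the claim only when the experiment contradicts it.
    private static void check(StringBuilder failures, String claim, boolean holds) {
        if (!holds) {
            failures.append(claim).append('\n');
        }
    }

    // Gives a clear pass/fail signal that a build script can act on.
    public static void main(String[] args) {
        String failures = run();
        if (failures.isEmpty()) {
            System.out.println("PASS");
        } else {
            System.out.println("FAIL");
            System.out.print(failures);
            System.exit(1);
        }
    }
}
```

How sure the suite is depends on how much of the required behavior the checks cover. Four checks are enough for this tiny function, but they are only a sketch of the idea.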

Some may think that it is too much to ask that programmers supply this level of proof. Some may think that programmers should not be held to this high a standard. I, on the other hand, can imagine no other standard that makes any sense.

When a customer pays us to develop software for them, aren’t we honor bound to prove, to the best of our ability, that the software we’ve created does what that customer has paid us for?

Of course we are. We owe this promise to our customers, and our employers, and our teammates. We owe it to our business analysts, our testers, and our project managers. But mostly we owe this promise to ourselves. For how can we consider ourselves professionals if we cannot prove that the work we have done is the work we have been paid to do?

What you owe, when you make that promise, is not the formal mathematical proof that Dijkstra dreamed of; rather, it is the scientific suite of tests that covers all the required behavior, runs in seconds or minutes, and produces the same clear pass/fail result every time it is run.