16

Crime and Punishment

Growing up in Florida, Terrance Graham was athletic and intelligent, but he had a troubled background. His parents were addicted to crack cocaine, and in grammar school he was diagnosed with attention deficit hyperactivity disorder. By the time he was nine years old, he was smoking cigarettes and drinking alcohol, and by age thirteen he was using marijuana. In July 2003, when he was sixteen, Graham and three friends attempted to rob a barbecue restaurant where one of the friends worked. It was this accomplice who purposely left the back door unlocked at closing time, allowing Graham and one of the other boys to slip in. When they surprised the restaurant’s manager, Graham’s accomplice hit the man with a metal pipe, cutting his head. When the manager continued to yell for help, the two boys ran out the back of the restaurant, where another of their friends was waiting in a car. No money was taken, the manager required stitches to his head, and within a couple of days all four were arrested. Prosecutors decided to charge the boys, none of whom was yet eighteen, as adults. Graham, who had never been arrested before, faced two felony counts. The most serious, a first-degree felony, carried a maximum penalty of life in prison without the possibility of parole.

Graham was offered a plea agreement and admitted his part in the crime. He was sentenced to a year in the county jail, less the six months already served. In June 2004 he was released on probation, intending, as he told the court at sentencing, to turn his life around. “This is my first and last time getting in trouble,” he told the judge. His promise lasted six months. In December 2004, Graham, then seventeen, and two twenty-year-old accomplices forced their way into a man’s home and held him hostage at gunpoint while they ransacked the house, looking for money. Later in the evening the three attempted another home invasion and robbery, during which one of Graham’s friends was shot. Driving his father’s car, Graham dropped the man off at the hospital and then sped past a policeman. A short time later he crashed into a telephone pole, tried to run, but was caught and arrested. It was December 13, 2004, and Graham was thirty-four days shy of his eighteenth birthday. In what was essentially a one-day trial in front of a judge, evidence was presented that Graham had violated his probation. He was facing a wide range of possible sentences under Florida law, running from a minimum of five years to life in prison. The Florida Department of Corrections recommended four years; prosecutors recommended thirty. At sentencing, Circuit Judge Lance M. Day of Duval County told Graham, “I don’t know why it is that you threw your life away. . . . The only thing that I can rationalize is that you decided that this is how you were going to lead your life and that there is nothing that we can do for you. . . . I have reviewed the statute. I don’t see where any further juvenile sanctions would be appropriate. . . . Given your escalating pattern of criminal conduct, it is apparent to the Court that you have decided that this is the way you are going to live your life and that the only thing I can do now is to try and protect the community from your actions.”

Indeed, in 2004 the US Supreme Court debated the extent to which adolescents should be held accountable. During that case, the question was raised whether a juvenile was akin to a mentally retarded adult, lacking the full faculties to know right from wrong.

FIGURE 29: How Accountable Should Society Hold Adolescents? Opinions on the treatment and rehabilitation potential of juveniles who commit capital crimes vary widely.

With that, and on the basis of the earlier charges of armed burglary and attempted armed robbery, the judge sentenced Terrance Jamar Graham, age nineteen, to life in prison. Because Florida no longer has a parole system, a life sentence means exactly that: no possibility of release, except by executive clemency. For a serious but nonhomicidal crime committed when he was a juvenile, Graham would likely spend the next sixty or seventy years in prison and die there. His attorneys appealed the sentence, citing the Eighth Amendment: “Excessive bail shall not be required, nor excessive fines imposed, nor cruel and unusual punishments inflicted.”

In the spring of 2008 I was contacted by the Washington, DC, branch of the law firm of Clifford Chance. The firm was preparing an amicus brief for Graham’s attorneys, who had appealed their client’s life sentence all the way to the US Supreme Court. Now the firm needed experts to explain why adolescents should be held to a different standard. Of the seventeen who joined in the amicus brief, I was the only neurologist. Thus began my adventure in the intersecting worlds of juvenile justice and neuroscience.

The lawyers for Graham were arguing the same principle that led the Supreme Court to rule the death penalty for juveniles unconstitutional in 2005. Central to that decision was the idea that there is something unique about the intellectual, emotional, and psychological makeup of adolescents. The lawyers who put together the amicus brief summed it up in the first paragraph:

Although adolescents must be held responsible for their actions, they generally lack mature decision-making capability, have an inflated appetite for risk, are prone to influence by peers, and do not accurately assess future consequences.

For the better part of this book I’ve provided scientific evidence supporting the notion that teen brains are different from adult brains. The question the high court was considering was how to weigh those differences in sentencing people for crimes committed when they were still adolescents.

Every year about 200,000 youths between the ages of twelve and seventeen are arrested for violent crimes. In 2008 juvenile offenders were involved in more than nine hundred murders and accounted for 48 percent of all arson in the nation. In 2005 the Supreme Court ruled it was unconstitutional to sentence to death anyone who was under the age of eighteen at the time the crime was committed. Seventeen years earlier it ruled the death penalty unconstitutional for anyone under the age of sixteen. But sentences of life without the possibility of parole (as well as the minimum age for criminal prosecution) have largely been left up to the states. Only three states do not allow charging youths under the age of seventeen in adult courts, and of the forty-seven that do allow it, twenty-nine actually mandate it for certain offenses.

Of all the countries in the world, the only two not to have ratified the United Nations Convention on the Rights of the Child, a binding international treaty outlawing the sentencing of juveniles to life in prison without parole, are Somalia and the United States. It’s a dubious distinction, to be sure. In America, of the approximately 40,000 prisoners currently serving sentences of life without parole, some 2,500 are offenders who were below the age of eighteen at the time they committed their crimes. Seventy of those prisoners were only thirteen or fourteen.

The overwhelming majority of the crimes for which juveniles are sentenced to life without parole are homicides, although nationally there are more than a hundred juveniles like Terrance Graham serving life without parole for nonmurders, including six who were thirteen or fourteen when they committed their nonhomicide crimes.

For most of human history, children have been regarded simply as pint-size adults and, more often than not, treated as indentured servants. In the Code of Hammurabi, one of the oldest known written legal documents, which dates to approximately 1780 BC, children were subject to the same rules as adults and yet at the same time were under the complete control of their fathers. If a son struck his father, according to the Code, the father could cut off the son’s hands. Ancient Roman culture continued the tradition of a father’s total control over his children, and during the Middle Ages the state often acted in place of the parent. Children who were tried for crimes as adults were punished as adults. Documents from the Middle Ages make references to boys and girls as young as six years old being hanged or burned at the stake. By the middle of the sixteenth century, however, more thought was given to the idea that criminals, and especially criminal children, could be taught to change their delinquent ways. In April 1553, Bridewell, an old manor house of the British monarchy, was given over to the city of London by King Edward VI at the urging of Bishop Ridley, who proclaimed in a sermon the need for a workhouse for the poor and a house of correction “for the strumpet and idle person, for the rioter that consumeth all, and for the vagabond that will abide in no place.” Bridewell Prison subsequently became a human warehouse not only for the indigent, homeless, and lame but also for vast numbers of children accused of committing petty offenses.

The first record of a juvenile being put to death in America dates to September 7, 1642, and concerns poor sixteen-year-old Thomas Granger, who was found guilty of the high moral crime of sodomy with farm animals. According to William Bradford, the governor of the Plymouth colony, Granger, “late servant to Love Brewster of Duxborrow, was [in] this Court indicted for buggery wth a mare, a cowe, two goats, divers sheepe, two calves, and a turkey, and was found guilty, and received sentence of death by hanging untill he was dead.”

The Church of Rome was one of the first institutions to set a legal demarcation between adults and juveniles when it proclaimed children under the age of seven unable to form intent in the commission of a crime. Pope Clement XI acted on that principle in 1704 when he founded a center for “profligate youth” in Rome, perhaps the Western world’s first reformatory school. But it took England’s most acclaimed legal scholar, William Blackstone, to lay the groundwork for juvenile justice systems in both Great Britain and America. After giving a series of lectures about the law at Oxford University in 1753, Blackstone codified the principles he had espoused into a four-volume legal work called Commentaries on the Laws of England, which was published between 1765 and 1769. In the Commentaries he described the two criteria necessary to hold someone accountable for a crime. The first was obvious: A person had to have committed an illegal act. The second was much more difficult to ascertain: A person had to have a “vicious will,” by which Blackstone meant the intent to commit a crime. He then went on to describe different categories of people in whom a “will,” by definition, is lacking. The first group he singled out was “infants,” and by “infants” Blackstone simply meant children below the age at which they could fully understand the meaning and consequences of their actions. And exactly what age was that? Actually there were two: children ages six and younger, as a rule, could not be found guilty of a serious crime, while children fifteen and older could be tried, convicted, and sentenced as adults. And if you were ages seven to fourteen, then what? Blackstone fudged. He said that if an “infant” could understand the difference between right and wrong, then the child should be held accountable in the same manner as an adult.

The capacity of doing ill, or contracting guilt, is not so much measured by years and days, as by the strength of the delinquent’s understanding and judgment. For one lad of eleven years old may have as much cunning as another of fourteen; and in these cases our maxim is, that “malitia supplet aetatem” [“malice supplies the age”].

That the courts, no less than the public, remained confused about the age at which children should be deemed capable of understanding right and wrong and the consequences of their actions is evident in the fact that the executions of children younger than fourteen continued in the United States of America well into the eighteenth century. In New London, Connecticut, on December 20, 1786, Hannah Ocuish, a twelve-year-old girl, part Pequot Indian, part African American, was hanged for beating and strangling to death a six-year-old white girl from a wealthy family because, according to Hannah’s tearful confession, the six-year-old “complained of her . . . for taking away her strawberries.” Before Hannah’s execution, local Unitarian minister Henry Channing, who counseled the young girl during her imprisonment, preached to the throng that had assembled to witness Hannah’s hanging. In a sermon titled “God, Admonishing His People of Their Duty as Parents and Masters,” Channing spoke of the “natural consequences of too great parental indulgence” and warned parents that “appetites and passions unrestrained in childhood become furious in youth; and ensure dishonour, disease and an untimely death.” As Hannah awaited death, Channing thundered, “Sparing you on account of your age, would, as the law says, be of dangerous consequence to the publick, by holding up an idea, that children might commit such atrocious crimes with impunity.” It didn’t help Hannah that she was part Indian and part African American and that she had killed a white child. Shortly after noon, Hannah was hanged from the gallows by the sheriff. So popular was Channing’s sermon that within days he was named pastor of New London’s First Church of Christ.

Some, however, were horrified by Hannah’s execution, not only because of her age—she is considered to be the youngest female ever executed in the United States—but also because she was thought by some at the time to be mentally handicapped. She was also the product of a broken home and an alcoholic mother who had given her away to become a servant to a wealthy white family. It’s not clear how well Hannah understood the sentence she received after her one-day trial, but when she confessed to the crime—after being taken to view the six-year-old’s dead body—she cried and swore she was sorry and would never do it again.

The push to find some alternative means of dealing with children who commit criminal acts reached a turning point in 1825 when New York City’s “House of Refuge” for children was established. It was the first official institution in the United States dedicated solely to protecting and rehabilitating juvenile offenders and therefore the first tacit recognition by society that there might be special circumstances contributing to some juvenile crimes. Prior to that time in New York, youthful criminals had been housed in the state penitentiary, which opened in 1797. The House of Refuge took charge not only of juvenile criminals but also of orphans, poor children, and any youth considered to be “wayward.”

Any child who for any reason is destitute or homeless or abandoned; or dependent on the public for support; or has not proper parental care or guardianship; or who habitually begs or receives alms; or who is living in any house of ill fame or with any vicious or disreputable person; or whose home, by reason of neglect, cruelty or depravity on the part of its parents, guardian or other person in whose care it may be, is an unfit place for such a child; and any child under the age of eight who is found peddling or selling any article or singing or playing a musical instrument upon the street or giving any public entertainment.

The children of the House of Refuge spent eight hours a day working and learning trades such as tailoring and brass nail manufacturing, with another four hours given to more traditional schooling. Within fifteen years there were dozens of Houses of Refuge across the United States; these houses eventually spawned reform and training schools, beginning in 1886 with the Lyman School for Boys in Westborough, Massachusetts. The first true juvenile court, however, wasn’t established until 1899, in Cook County, Illinois, after the state legislature passed the Juvenile Court Act. One of its first judges, Julian Mack, described how a separate justice system for juveniles was different from adult criminal courts:

The ordinary trappings of the courtroom are out of place in such hearings. The judge on a bench, looking down upon the boy standing at the bar, can never evoke a proper sympathetic spirit. Seated at a desk, with the child at his side, where he can on occasion put his arm around his shoulder and draw the lad to him, the judge, while losing none of his judicial dignity, will gain immensely in the effectiveness of his work.

Responsible for youths charged with major crimes, juvenile courts also meted out punishment for such things as vagrancy and truancy. And because these were special courts, without juries or even due process, children could be sent away simply on the orders of a single judge and on the basis of evidence that did not need to be beyond a reasonable doubt. In the early part of the twentieth century children could be taken into custody just for throwing snowballs and summarily sent away to a detention facility.

Overcrowding and abuse in reform and training schools brought juvenile “rehabilitation” into the discussions of civil rights activists in the 1960s and ultimately, in two landmark cases, to the US Supreme Court. The first case, in 1966, was Kent v. United States. Morris Kent entered the juvenile justice system in Washington, DC, at the age of fourteen for minor offenses, including attempted purse snatching. But when he was sixteen, his fingerprints were found in the apartment of a woman who had been robbed and raped. It was in the juvenile court judge’s discretion to send the case to adult criminal court, and he did so without a hearing, refusing to hear arguments from Kent’s lawyers. When the high court heard the case, it ruled that Kent had been denied “meaningful representation” and due process. Justice Abe Fortas wrote in the majority opinion “that there may be grounds for concern that the child receives the worst of both worlds: that he gets neither the protections accorded to adults nor the solicitous care and regenerative treatment postulated for children.”

By the time the US Supreme Court made its ruling, Kent was twenty-one and could not be retried in juvenile court. Instead, his case was remanded to DC’s district court with instructions to consider the Supreme Court’s ruling and assign punishment “consistent with the purposes of the Juvenile Court Act.” Kent was found guilty of six counts of housebreaking and robbery, but not guilty by reason of insanity on the two counts of rape. He was treated at Saint Elizabeth’s, a psychiatric facility in DC, from 1963 to 1968, at which time he was declared sane. Eventually Kent married and had children, and apparently he has lived a crime-free life since.

In 1967, the year after the Kent ruling, Fortas wrote the opinion for another landmark case in juvenile justice, In re Gault, which involved Arizona fifteen-year-old Gerald Gault. On June 8, 1964, the sheriff of Gila County took Gerald and a friend into custody. At the time, the teenager was on probation for being in the company of a boy who had stolen a woman’s wallet from her purse. When he was picked up, Gerald was accused of making lewd phone calls to a female neighbor, calls that Fortas later described this way in the Court’s majority opinion: “The remarks or questions put to her were of the irritatingly offensive, adolescent, sex variety.” After being arrested, Gerald was taken without a hearing to the Children’s Detention Home, where he remained for several days. When he was released, the sheriff presented Gerald’s mother with a written note saying the judge would be hearing Gerald’s case the following week, at which time the young boy, who was not represented by a lawyer, was sentenced to a juvenile correctional facility for an undetermined time not to exceed his twenty-first birthday. Under Arizona law, juvenile sentences could not be appealed. When the case finally reached it, the Supreme Court ruled that Gault’s arrest, detention, and sentencing were all in violation of the US Constitution. Fortas wrote, “Under our Constitution, the condition of being a boy does not justify a kangaroo court.” Gault was eventually released and worked at a series of odd jobs for a few years. He served in the US Army from 1969 to 1991 and several years ago was working toward his teaching credentials.

Adolescence, at this time, was still widely understood as a psychological and physiological stage of life, not as a “brain stage.” A discussion about the relationship between biology and legal accountability was forced on the criminal justice system following a horrifying mass murder on August 1, 1966. Twenty-five-year-old Charles Whitman was a former altar boy, an Eagle Scout, a US Marine, and a college student when he killed his wife and his mother in their beds and then, from the observation deck of the twenty-eight-story University of Texas Tower, began indiscriminately firing a high-powered rifle at the people below. Among those he killed on that sultry August afternoon were a pregnant woman, an eighteen-year-old student and his fiancée, an electrician, a professor, and an ambulance driver who had arrived to help the wounded. Before he was shot and killed by police, Whitman murdered thirteen and wounded thirty-two. Among his four suicide notes, written over a period of hours, the one dated 6:45 p.m., Sunday, July 31, 1966, the day before the massacre, read:

I don’t quite understand what it is that compels me to type this letter. Perhaps it is to leave some vague reason for the actions I have recently performed. I don’t really understand myself these days. I am supposed to be an average reasonable and intelligent young man. However, lately (I can’t recall when it started) I have been a victim of many unusual and irrational thoughts.

It is noteworthy that Whitman referred to himself as a “victim”; he evidently felt he was not in control of his violent urges. Shortly before his death he visited the university doctor about his depression and headaches and was prescribed Valium. The doctor suggested that Whitman also make an appointment with the university psychiatrist, which he did. Whitman, however, was convinced that something must be physically wrong with him, so he made this request in his suicide note:

After my death I wish that an autopsy would be performed on me to see if there is any visible physical disorder. I have had some tremendous headaches in the past and have consumed two large bottles of Excedrin in the past three months.

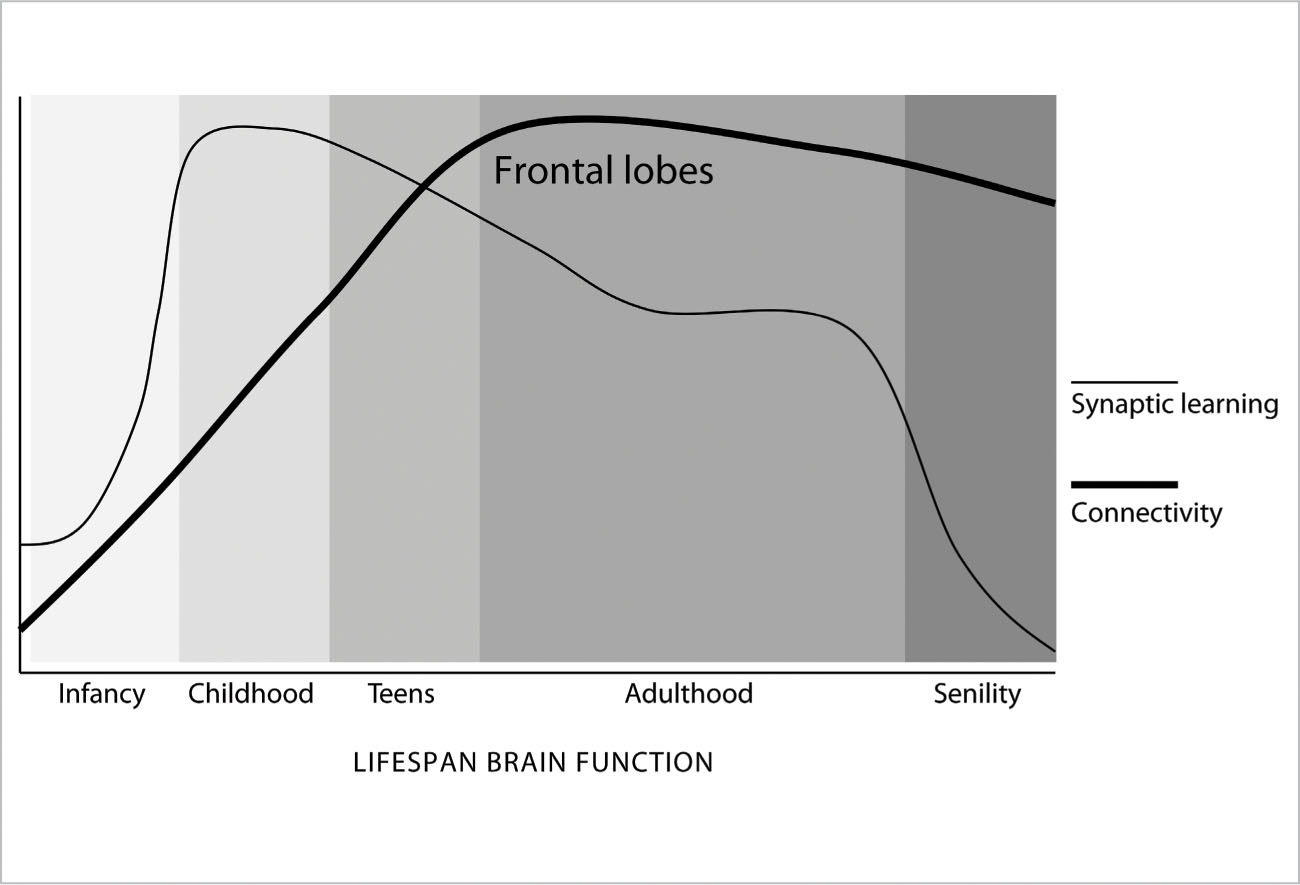

An autopsy was performed, and an explanation was found: a grade 4 glioblastoma multiforme tumor about the size of a walnut protruded from under the thalamus and impinged on both the hypothalamus and the amygdala. Was Whitman unable to harness his aggression or inhibit his destructive impulses because of the tumor’s effects on his brain? While it’s not possible to say for certain, the tumor could well have interfered with the normal balance of activity between his frontal lobes and limbic system. Limbic overdrive can result in rage episodes, and if the connections that normally inhibit or dampen these impulses were damaged by the tumor, he may have been driven to commit these crimes while lacking the ability to control those dangerous impulses. This is an extreme example of a case in which brain connectivity may have played a role, but the idea that reduced frontal lobe connectivity, now seen as part of the maturing process in adolescents, could or should inform juvenile justice was still years away. The case of Roper v. Simmons helped bring it closer.

Christopher Simmons grew up in Jefferson County, Missouri, where he suffered continual physical and emotional abuse at the hands of his stepfather. When Simmons was only four years old, his stepfather took him to a bar and fed him alcohol to entertain the other patrons. He was once hit so hard in the head by this man that his eardrum burst. By the time he reached his teens, Simmons was regularly drinking alcohol and smoking marijuana and occasionally indulging in harder drugs. To escape the abuse, the teenager hung out at the trailer home of a neighbor, a twenty-eight-year-old man who gave him more drugs and encouraged him to steal and split the profits.

On September 9, 1993, Simmons, then seventeen, and a younger friend decided to commit a heinous crime. Picking Shirley Crook’s home at random, they bound and gagged the forty-six-year-old woman, robbed her, then drove to a state park and threw her off a bridge into the Meramec River, where she drowned. Simmons was arrested and confessed; he was tried, convicted of first-degree murder, and sentenced to death. The constitutionality of the sentence was questioned on appeal, and eventually the case reached the US Supreme Court. On March 1, 2005, the high court held that the execution of a minor violated the Eighth and Fourteenth Amendments to the US Constitution and offended “the evolving standards of decency that mark the progress of a maturing society.” Writing for the majority, Justice Anthony Kennedy stated:

When a juvenile offender commits a heinous crime, the State can exact forfeiture of some of the most basic liberties, but the State cannot extinguish his life and his potential to attain a mature understanding of his own humanity.

Fourteen months after that historic decision, in Jacksonville, Florida, Terrance Graham was sentenced to life in prison without the possibility of parole for a home invasion robbery. And two years after that, I received the phone call from the law firm representing Graham. As the only neurologist contributing to the amicus brief, I felt an enormous sense of responsibility to convey all that I knew about the limitations of the teenage brain when it comes to controlling impulses, assessing risk, avoiding peer pressure, and understanding the consequences of one’s actions. Beyond that, it was critical to describe how adults and adolescents use different parts of their brains when acting and reacting in certain situations.

One distinguished legal scholar, Steven Drizin of Northwestern University in Chicago, has even said, “Juveniles function very much like the mentally retarded. The biggest similarity is their cognitive deficit. [Teens] may be highly functioning, but that doesn’t make them capable of making good decisions.”

Teens, we now know, engage the hippocampus and right amygdala when faced with a threat or a dangerous situation—this is why they are prone to being emotional and impulsive—whereas adults engage the prefrontal cortex, which allows them to more reasonably assess the threat. We know that the risk factors for teens committing violent acts include seeing violence and being the victims of it themselves. We know that teens are much more likely to be influenced by peers when they take risks or engage in dangerous and/or criminal behavior. (On average, half of all homicides committed by juveniles involve multiple accomplices.) We know that because of their still-maturing frontal lobes, adolescents have trouble understanding the consequences of their decisions, and therefore they are impaired in their ability to assess things such as their Miranda rights, the competency of their representation, and the consequences of plea bargaining. And finally, we now know that adolescents, especially those between the ages of twelve and seventeen, are particularly vulnerable to making false confessions.

FIGURE 30. A Recap of Brain Development and the Critical Stage of Adolescence: Synaptic learning rises rapidly in infancy and childhood, stays high during the teen years, and tapers off to plateau in adulthood. However, myelination and connectivity do not peak until early adulthood (late twenties), plateauing well into later life. Learning abilities and rates tend to be highest in children and teens, decreasing along with synaptic function in late adulthood, although adolescents are challenged by their relative lack of connectivity of the frontal lobes, the last parts of the brain to connect. The teen years provide an exceptional opportunity to work on strengths and weaknesses.

In a 2004 analysis of 133 false confessions, researchers found that 16 percent came from sixteen- and seventeen-year-olds, the highest concentration of any age group. Valerie Reyna, a teacher and researcher in the Department of Human Development at Cornell University, summed up the competence of adolescents in the juvenile justice system when she wrote in a 2006 journal article:

In the heat of passion, in the presence of peers, on the spur of the moment, in unfamiliar situations, when trading off risks and benefits favors bad long-term outcomes, and when behavioral inhibition is required for good outcomes, adolescents are likely to reason more poorly than adults do.

In our team’s thirty-six-page amicus brief for Graham v. Florida, we wrote:

Well established, growing, and uniform scientific and academic study shows that the purposes of a sentence of life without parole—punishing the culpable, deterring the sensible, and incapacitating the incorrigible—are not reliably or rationally served by the imposition of that sentence upon adolescents.

Thankfully, on May 17, 2010, the United States Supreme Court agreed. Justice Anthony Kennedy wrote, for the majority, “With respect to life without parole for juvenile nonhomicide offenders, none of the goals of penal sanctions that have been recognized as legitimate—retribution, deterrence, incapacitation, and rehabilitation . . . provides an adequate justification. . . . Even if the punishment has some connection to a valid penological goal, it must be shown that the punishment is not grossly disproportionate in light of the justification offered. Here, in light of juvenile nonhomicide offenders’ diminished moral responsibility, any limited deterrent effect provided by life without parole is not enough to justify the sentence.”

Although siding with the majority, Chief Justice Roberts took issue not with the new science behind our understanding of the still-maturing adolescent brain but rather with the Court’s venturing an opinion on that science. “Perhaps science and society should show greater mercy to young killers,” Roberts wrote, “giving them a greater chance to reform themselves at the risk that they will kill again. But that is not our decision to make.”

The Graham case, however, did not fully protect adolescents from excessively harsh treatment by the criminal justice system. It left one thing unaddressed: sentences of life without parole in cases where a juvenile was convicted of homicide. Less than a year following the Supreme Court’s majority decision in Graham v. Florida, the situation presented itself in the form of another case that had wound its way through the justice system and finally landed in the lap of the US Supreme Court. Our group, which had collaborated on the Graham amicus brief, was again asked by the Clifford Chance law firm to step in, examine the evidence, and consider how neurobiology might be used to enlighten the justices of the Supreme Court. The firm sent out a kind of electronic call to arms in mid-2011 for us to once again provide social and biological science to argue that even in cases of homicide, adolescents under the age of eighteen should not be held responsible to the same degree as adults. The prevailing federal law was that, like adults convicted of murder, adolescents could be sentenced to life in prison without the possibility of parole. Our job was to point out that at least in some instances the juvenile may have acted on impulse owing to frontal lobe immaturity (nature) or as a result of a poor educational or stressful childhood environment (nurture). In other words, juveniles are less capable, neurologically, than adults of making mature decisions and are more vulnerable than adults to external influences. A corollary to our argument that there are clear neurological differences between adults and adolescents was the fact that teenagers’ enhanced plasticity helps increase the odds in favor of their successful rehabilitation. In the amicus brief we wrote that “juveniles do typically outgrow their antisocial behavior as the impetuousness and recklessness of youth subside in adulthood. Adolescent criminal conduct frequently results from experimentation with risky behavior and not from deep-seated moral deficiency reflective of ‘bad’ character.”

Up for argument were two cases, each involving a boy sentenced to life without the possibility of parole for a homicide committed when he was only fourteen years old. In the first, Jackson v. Hobbs, Kuntrell Jackson of Arkansas and two older youths tried to rob a video store in 1999, and one of the older youths shot and killed a clerk. In the second, Miller v. Alabama, Evan Miller and a friend beat a fifty-two-year-old neighbor with a baseball bat and then set fire to his trailer. The man died from blunt force trauma and smoke inhalation.

On June 25, 2012, at 3:22 in the afternoon, I received an e-mail from the Clifford Chance firm, thanking the amicus brief team:

The Supreme Court handed down its decision today that it is unconstitutional to mandate a life sentence without the possibility of parole for all juveniles convicted of homicide under 18 years of age at the time of their crime. In doing so, the Court struck down statutes in 29 states with mandatory life-without-parole sentences that precluded consideration of the offender’s age. . . . Congratulations on a job well done as your views were certainly heard!

All statutes that essentially required a person who committed murder as a child to die in prison were thus struck down. The ruling meant that Kuntrell Jackson and Evan Miller, both of whom had been given life in prison without parole for homicides they committed at the age of fourteen, would receive new sentencing hearings. Writing for the majority in the 5–4 decision, Justice Elena Kagan said the problem with mandatory sentences was the inability to distinguish between, say, a seventeen-year-old and a fourteen-year-old, “the shooter and the accomplice, the child from a stable household and the child from a chaotic and abusive one.” Kagan went on to cite the neurological evidence that had informed her decision:

Mandatory life without parole for a juvenile precludes consideration of his chronological age and its hallmark features—among them, immaturity, impetuosity, and failure to appreciate risks and consequences.

Still, the Court was once again deeply divided, a split that points to the complexity of applying neuroscience to legal culpability. Nor did the justices categorically ban juvenile life sentences without parole. Rather, as Kagan wrote in the majority opinion, they determined that “given all that we have said in Roper, Graham, and this decision about children’s diminished culpability, and heightened capacity for change, we think appropriate occasions for sentencing juveniles to this harshest possible penalty will be uncommon.” The Court ruled that a judge or jury must have the opportunity to consider mitigating circumstances, including the offender’s age at the time of the crime, before imposing the harshest penalty on a juvenile.

Although each new ruling by the Supreme Court has reaffirmed that juveniles are constitutionally different from adults and must therefore be punished differently, the Court has left it up to the states to decide whether its rulings should be applied retroactively for all those inmates now in prison who were sentenced to life terms without parole for crimes committed when they were adolescents. (Currently, Michigan, Iowa, and Mississippi have agreed to apply the ruling retroactively.)

The law is finally taking into account the immature brains of adolescent criminals when it comes to sentencing, but as a society we’re still a long way from coming to grips with how to deal with juvenile criminals who suffer from mental disorders. (We certainly haven’t figured this out for adult criminals either.) Part of the problem is distinguishing between an immature brain and a disordered brain, and as Chapter 12 described, this can sometimes be difficult. There is no disputing that horrific juvenile crimes, including mass shootings, seem all too common in the news today. But the reach of the mass media and their saturation of our daily lives obscure the fact that juvenile violent crime rates, which did increase between 1985 and 1994, actually decreased by half between 1995 and 2011. The American Academy of Psychiatry and the Law cites risk factors for adolescent violence that range from access to firearms and drugs to poverty, parental neglect, family conflict, and exposure to violence on television, in movies, and through the Internet. What is also clear, however, is that the prevalence of mental illness is higher among adolescent criminals than in the general population. At the same time, the majority of juveniles who commit crimes are not mentally ill. The more troubling statistic in this regard may be that, of those adolescents who are mentally ill, fewer than 25 percent get treatment.

The central problem is this: Despite the great advances in neuroscience, what we still don’t know about the human brain dwarfs what we do know. Making judgments, even scientific judgments, based solely on what is currently available and known is at best foolhardy and at worst dangerous. That is certainly the case when it comes to pointing to objective evidence for a causal relationship between neuromaturity and real-world activity, especially criminal behavior. Brain scans have the appearance of hard-and-fast empirical data, but they must be interpreted and are therefore far from objective. Scientists studying brain images, especially fMRI images, must weigh the technique used, the clarity of the image, and the choice of subjects in the sample or survey, and then must resist the temptation to find a one-to-one correspondence between a given brain region and a particular cognitive function. There is simply no such correspondence. Every brain region we tap, and in fact every decision we make and every mood we feel, involves multiple cognitive processes. “Further hindering extrapolation from the laboratory to the real world is the fact that it is virtually impossible to parse the role of the brain from other biological systems and contexts that shape human behavior,” wrote Jay Giedd, coauthor of an important article on adolescent maturity and the brain published several years ago. “Behavior in adolescence, and across the lifespan, is a function of multiple interactive influences including experience, parenting, socioeconomic status, individual agency and self-efficacy, nutrition, culture, psychological well-being, the physical and built environments, and social relationships and interactions.” Giedd is chief of the Section on Brain Imaging in the Child Psychiatry Branch of the National Institute of Mental Health. And while his list of influences on adolescent behavior is exhausting, it is not exhaustive. That’s due, at least in part, to something I said earlier: we are still learning about the human brain. Understanding the limits of extrapolating from brain science to behavior is further complicated by the fact that scientists are increasingly pushing back the age of neuromaturity. In other words, there is no bright line, no numerical age, boundary, or demarcation at which we can say someone is neurologically mature. Instead, it is becoming clear that brain maturation extends well into a person’s twenties. As a scientist and a physician, I want to believe there are answers to every question and clear boundaries to every event and stage in life, but I know there are not. It might be tempting to think that once the tumult of the teenage years is over, it’s smooth sailing, but that’s hardly the case either. Meanwhile, our local governments continue to pour money into building additional prison facilities rather than into creating rehabilitation and counseling programs for at-risk adolescents.