This chapter focuses on how people learn to remember things. It will help you understand why certain knowledge sticks while other knowledge is forgotten. For example, at one point you probably learned that System.out.print() is the method that prints in Java. However, you do not have all of Java’s methods memorized, and I’m sure you have sometimes felt the need to look up specific syntax. For example, would you know whether to use addDays(), addTimespan(), or plusDays() to add a day to a DateTime?

You might not care that much about knowing syntax by heart—after all, we can all look up information online, right? However, as the previous chapter showed, what you already know influences how efficiently you process code. Therefore, memorizing programming syntax, concepts, and data structures will help you to process code faster.

This chapter introduces four important techniques that will help you memorize programming concepts better and more easily. This will strengthen your long-term storage of programming concepts, which in turn will allow for better chunking and reading of code. If you have ever struggled with remembering the syntax of Flexbox in CSS, the order of parameters in matplotlib’s boxplot() method, or the syntax of anonymous functions in JavaScript, this chapter has you covered!

In previous chapters, you saw that trying to remember code line by line is hard. Remembering what syntax to use when producing code can also be challenging. For example, can you write code from memory for the following situations?

Reading a hello.txt file and writing all lines to the command line

A regular expression matching words that start with “s” or “season”

Even though you are a professional programmer, you might have needed to look up some of the specific syntax for these exercises. In this chapter, we will explore not only why it’s hard to remember the right syntax, but also how to get better at it. First, though, we will take a deeper look at why it is so important that you can remember code.

Many programmers believe that if you do not know a certain piece of syntax, you can just look it up on the internet and that therefore syntax knowledge is not all that important. There are two reasons why “just looking things up” might not be a great solution. The first reason was covered in chapter 2: what you already know impacts to a large extent how efficiently you can read and understand code. The more concepts, data structures, and syntax you know, the more code you can easily chunk and thus remember and process.

The second reason is that an interruption of your work can be more disruptive than you think. Just opening a browser to search for information might tempt you to check your email or read a bit of news, which may not be relevant to the task at hand. You might also lose yourself in reading detailed discussions on programming websites when you are searching for related information.

Chris Parnin, a professor at North Carolina State University, has extensively studied what happens when programmers are interrupted at work.1 Parnin recorded 10,000 programming sessions by 85 programmers. He looked at how often developers were interrupted by emails and colleagues (which was a lot!), but he also examined what happens after an interruption. Parnin determined that interruptions are, unsurprisingly, quite disruptive to productivity. The study showed that it typically takes about a quarter of an hour to get back to editing code after an interruption. When interrupted during an edit of a method, programmers were able to resume their work in less than a minute in only 10% of cases.

Parnin’s results showed that programmers often forgot vital information about the code they were working on while they were away from the code. You might recognize that “What was I doing again?” feeling after returning to code from a search. Programmers in Parnin’s study also often needed to put in deliberate effort to rebuild the context. For example, they might navigate to several locations in the codebase to recall details before actually continuing the programming work.

Now that you know why remembering syntax is important, we’ll dive into how to learn syntax quickly.

A great way to learn anything quickly, including syntax, is to use flashcards. Flashcards are simply paper cards or Post-Its. One side has a prompt on it—the thing that you want to learn. The other side has the corresponding knowledge on it.

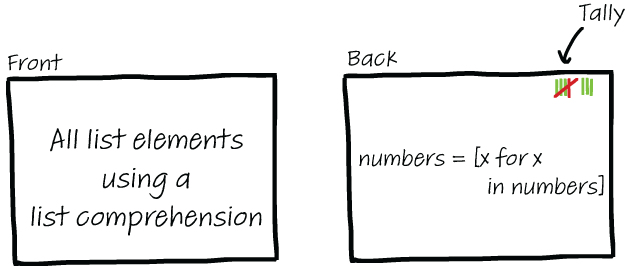

When using flashcards for programming, you can write the concept on one side and the corresponding code on the other. A set of flashcards for list comprehensions in Python might look like this:

Comprehension with filter <-> odd_numbers = [x for x in numbers if x % 2 == 1]

Comprehension with filter and calculation <-> squares = [x*x for x in numbers if x > 25]

You use flashcards by reading the side of the card with the prompt on it and trying your best to remember the corresponding syntax. Write the syntax on a separate piece of paper or type the code in an editor. When you’re finished, flip the card over and compare the code to see if you got it right.
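If you prefer typing to paper, the routine above (read the prompt, write the code, compare) can be sketched in a few lines of Python. This is only an illustration: the deck reuses the two example cards, the helper names normalize and check_answer are made up for this sketch, and ignoring all whitespace is a deliberately crude way to compare an attempt with the back of the card.

```python
# A tiny flashcard deck: the prompt is the front, the code is the back.
FLASHCARDS = {
    "Comprehension with filter":
        "odd_numbers = [x for x in numbers if x % 2 == 1]",
    "Comprehension with filter and calculation":
        "squares = [x*x for x in numbers if x > 25]",
}


def normalize(code: str) -> str:
    """Remove all whitespace so that spacing differences do not count as errors."""
    return "".join(code.split())


def check_answer(prompt: str, attempt: str) -> bool:
    """Flip the card: compare a typed attempt with the code on the back."""
    return normalize(attempt) == normalize(FLASHCARDS[prompt])


# A hard-coded attempt stands in for what you would type from memory.
attempt = "odd_numbers = [x for x in numbers if x%2 == 1]"
print(check_answer("Comprehension with filter", attempt))
```

The important part is the order of operations: you produce the attempt first and only then look at the back of the card.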

Flashcards are commonly used in learning a second language and are tremendously useful for that. However, learning French using flashcards can be a cumbersome task because there are so many words. Even the big programming languages like C++ are much smaller than any natural human language. Learning a good chunk of the basic syntactic elements of a programming language therefore is doable with relatively low effort.

EXERCISE 3.1 Think of the top 10 programming concepts you always have trouble remembering.

Make a set of flashcards for each of the concepts and try using them. You can also do this collaboratively in a group or team, where you might discover that you are not the only one who struggles with certain concepts.

The trick to learning syntax is to practice with the flashcards often. You do not have to use paper cards, though: there are plenty of apps, like Cerego, Anki, and Quizlet, that allow you to create your own digital flashcards. The benefit of these apps is that they remind you when to practice again. If you use the paper flashcards or an app regularly, you will see that after a few weeks your syntactic vocabulary will have increased significantly. That will save you a lot of time on Googling, limit distractions, and help you to chunk better.

There are two good times to add flashcards to your set. First, when you’re learning a new programming language, framework, or library, you can add a card each time you encounter a new concept. For example, if you’ve just started learning the syntax of list comprehensions, create the corresponding card or cards right away.

A second great time to expand your set of cards is when you’re about to Google a certain concept. That is a signal that you do not yet know that concept by heart. Write the concept you decided to look up on one side of a card and the code you found on the other side.

Of course, you’ll need to use your judgment. Modern programming languages, libraries, and APIs are huge, and there’s no need to memorize all of their syntax. Looking things up online is totally fine for fringe syntactic elements or concepts.

When you use your flashcards regularly, after a while you might start to feel that you know some of the cards well. When this happens, you might want to thin out your set of cards a bit. To keep track of how well you know certain concepts, you can keep a little tally on each card of your right and wrong answers, as shown in figure 3.1.

Figure 3.1 Example of a flashcard with a tally of right and wrong answers. Using a tally, you can keep track of what knowledge is already reliably stored in your LTM.

If you get a card right a bunch of times in a row, you can discard it. Of course, if you find yourself struggling again, you can always put the card back in. If you use an app to make flashcards, the apps will typically thin the set for you by not showing you cards that you already know well.
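The tally and the "discard after a streak" rule can also be kept in code. The sketch below is one possible way to do it, not how any particular flashcard app works: the Card class, its field names, and the threshold of five right answers in a row are all assumptions made for illustration.

```python
from dataclasses import dataclass

RETIRE_AFTER = 5  # assumed meaning of "a bunch of times in a row"


@dataclass
class Card:
    prompt: str
    answer: str
    right: int = 0   # tally of right answers
    wrong: int = 0   # tally of wrong answers
    streak: int = 0  # consecutive right answers

    def record(self, correct: bool) -> None:
        """Update the tally after one practice attempt."""
        if correct:
            self.right += 1
            self.streak += 1
        else:
            self.wrong += 1
            self.streak = 0  # a miss resets the streak

    @property
    def retired(self) -> bool:
        """True once the card was answered right often enough in a row."""
        return self.streak >= RETIRE_AFTER


card = Card("Comprehension with filter",
            "odd_numbers = [x for x in numbers if x % 2 == 1]")
for outcome in [True, False, True, True, True, True, True]:
    card.record(outcome)

print(card.right, card.wrong, card.retired)
```

Because a wrong answer resets the streak, a card you start struggling with again stays in the set until you reliably know it.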

The previous section showed how you can use flashcards to quickly learn and easily remember syntax. But how long should you practice? When will you finally know the entirety of Java 8? This section will shine a light on how often you will have to revisit knowledge before your memory is perfect.

Before we can turn our attention to how to not forget things, we need to dive into how and why people forget. You already know that the STM has limitations—it cannot store a lot of information at once, and the information it stores is not retained for very long. The LTM also has limitations, but they are different.

The big problem with your LTM is that you cannot remember things for a long time without extra practice. After you read, hear, or see something, the information is transferred from your STM to your LTM—but that doesn’t mean it is stored in the LTM forever. In that sense, a human’s LTM differs significantly from a computer’s hard drive, on which information is stored relatively safely and durably.

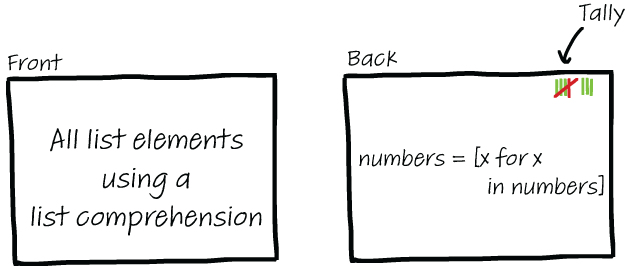

The LTM does not decay in seconds, as the STM does, but it still decays a lot faster than you might think. The forgetting curve is illustrated in figure 3.2. As you can see, in an hour you will typically already have lost half of what you read. After two days, just 25% of what you learned remains. But that’s not the entire picture: the graph in figure 3.2 represents how much you remember if you do not revisit the information at all.

Figure 3.2 A graph illustrating how much information you remember after being exposed to it. After two days, just 25% of the knowledge remains in your LTM.

To understand why some memories are forgotten so quickly, we need to take a deep dive into the workings of the LTM—starting with how things are remembered.

Storage of memories in the brain is not based on zeros and ones, but it does share a name with how we store information to disk: encoding. When they talk about encoding, however, cognitive scientists aren’t precisely referring to the translation of thoughts to storage—the exact workings of this process are still largely unknown—but rather to the changes that happen in the brain when memories are formed by neurons.

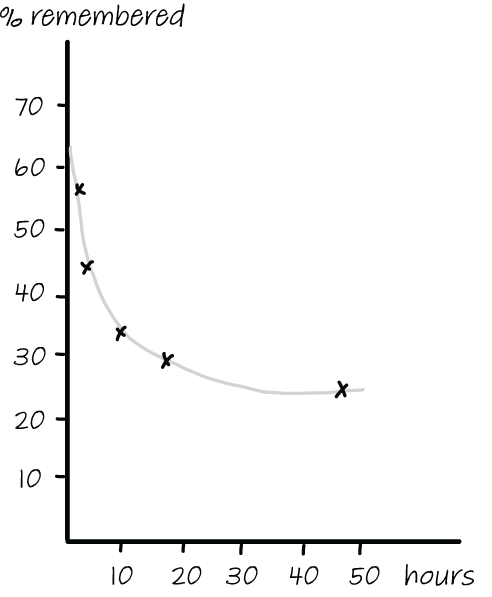

We have compared the LTM to a hard drive, but storage in the brain does not actually work hierarchically, in the way that files are arranged in folders with subfolders. As figure 3.3 shows, memories in the brain are organized more in a network structure. That’s because facts are connected to large numbers of other facts. An awareness of these connections between different facts and memories is important to understand why people forget information, which is the topic we are going to discuss next.

Figure 3.3 Two ways of organizing data: on the left, a hierarchical filesystem; on the right, memories organized as a network

Hermann Ebbinghaus was a German philosopher and psychologist who became interested in studying the capabilities of the human mind in the 1870s. At that time, the idea of measuring people’s mental capacities was relatively unheard of.

Ebbinghaus wanted to understand the limits of human memory using his own memory as a test. He wanted to push himself as far as possible. He realized that trying to remember known words or concepts was not a true test because memories are stored with associations, or relationships to each other. For example, if you try to remember the syntax of a list comprehension, knowing the syntax of a for-loop might help you.

To create a fairer means of assessment, Ebbinghaus constructed a large set of short nonsense words, such as wix, maf, kel, and jos. He then conducted an extensive set of experiments, using himself as a test subject. For years he studied lists of these nonsense words by reading them aloud, with the help of a metronome to keep up the pace, and kept track of how much practice was needed to be able to recall each list perfectly.

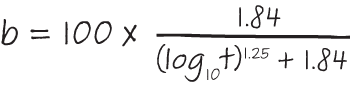

In 1880, after a decade, he estimated that he had spent almost 1,000 hours practicing, and he could recite 150 of his nonsense words a minute. By testing himself at different intervals, Ebbinghaus was able to estimate the time span of his memory. He summarized his findings in his 1885 publication Über das Gedächtnis (Memory: A Contribution to Experimental Psychology). His book contained the formula for forgetting shown in figure 3.4, which lies at the base of the concept of the forgetting curve.

Figure 3.4 Ebbinghaus’s formula to estimate how long a memory will last

A recent study by Dutch professor Jaap Murre of the University of Amsterdam confirmed that Ebbinghaus’s formula is largely correct.2
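To get a feel for the numbers, you can evaluate the formula yourself. The figure is not reproduced in this text, so the sketch below assumes the commonly quoted form of Ebbinghaus’s formula, b = 100k / ((log10 t)^c + k), with t in minutes and the constants k = 1.84 and c = 1.25; the function name retention_percent is made up for this example.

```python
import math

# Constants as commonly quoted from Ebbinghaus (1885); treated here as an
# assumption, since the original figure is not available in this text.
K = 1.84
C = 1.25


def retention_percent(minutes: float) -> float:
    """Estimated percentage retained after `minutes` without any review."""
    if minutes < 1:
        # log10 of values below 1 is negative, which this form cannot handle
        raise ValueError("this sketch only covers t >= 1 minute")
    return 100 * K / (math.log10(minutes) ** C + K)


for label, minutes in [("1 hour", 60), ("1 day", 24 * 60), ("2 days", 2 * 24 * 60)]:
    print(f"{label}: {retention_percent(minutes):.0f}%")
```

Under these assumptions the estimate is a little under half after an hour and a little over a quarter after two days, which is in line with the curve described for figure 3.2.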

We now know how quickly people forget things, but how will that help us not forget the syntax of boxplot() in matplotlib or list comprehensions in Python? It turns out that Ebbinghaus’s experiments in remembering nonsense words not only allowed him to predict how long it would take him to forget things, but also shed light on how to prevent forgetting. Ebbinghaus observed that he could reliably learn a set of 12 meaningless words with 68 repetitions on day 1 followed by 7 the next day (total times: 75), or he could study the set of words just 38 times spaced out over the course of 3 days, which is half the study time.

Extensive research has been done on the forgetting curve, from research on simple mathematical procedures like addition with young kids to biological facts for high schoolers. One study that shed more light on the optimal distance between repetitions was conducted by Harry Bahrick of Ohio Wesleyan University, who again used himself as a test subject but also included his wife and two adult children, who were also scientists and interested in the topic.3

They all set themselves the goal of learning 300 foreign words; his wife and daughters studied French words, and Bahrick himself studied German. They divided the words into 6 groups of 50 and used different spacing for the repetitions of each group. Each group of words was studied either 13 or 26 times at a set interval of 2, 4, or 8 weeks. Retention was then tested after 1, 2, 3, or 5 years.

One year after the end of the study period, Bahrick and his family found that they remembered the most words in the group of 50 that they had studied the most and with the longest intervals between practices—26 sessions, each 8 weeks apart. They were able to recall 76% of the words from that group a year later versus 56% in the group studied at an interval of two weeks. Recall diminished over the following years but remained consistently higher for the same group of words they studied for the longest period.

In summary, you remember the longest if you study over a longer period. That doesn’t mean you need to spend more time studying; it means you should study at more spaced-out intervals. Revisiting your set of flashcards once a month will be enough to help your memory in the long run, and it’s also relatively doable! This is, of course, in stark contrast with formal education, where we try to cram all the knowledge into one semester, or with bootcamps that seek to educate people in three months. Knowledge learned in such a way only sticks if you continue to repeat what you’ve learned frequently afterward.
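If you want a reminder of when to pick up your cards again, a fixed-interval schedule is easy to generate. The following is a minimal sketch, assuming a 30-day interval as a stand-in for "once a month"; the function name review_dates and the start date are invented for the example, and real flashcard apps may schedule reviews differently.

```python
from datetime import date, timedelta


def review_dates(start: date, sessions: int, interval_days: int) -> list[date]:
    """Return `sessions` review dates, each `interval_days` after the previous one."""
    return [start + timedelta(days=interval_days * i)
            for i in range(1, sessions + 1)]


# Six reviews, roughly one per month, starting from an arbitrary date.
monthly = review_dates(date(2024, 1, 1), sessions=6, interval_days=30)
print([d.isoformat() for d in monthly])
```

Changing interval_days lets you compare schedules such as the 2-, 4-, and 8-week intervals used in Bahrick’s study.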

TIP The biggest takeaway from this section is that the best way science knows to prevent forgetting is to practice regularly. Each repetition strengthens your memory. After several repetitions spaced out over a long period, the knowledge should remain in your LTM forever. If you were ever wondering why you’ve forgotten a lot of what you learned in college, this is why! Unless you revisit knowledge, or are forced to think about it, you will lose memories.

You now know that practicing syntax is important because it helps you to chunk code and will save you a lot of time on searching. We have also covered how often you should practice; don’t try to memorize all your flashcards in a day, but spread your study out over a longer period. The remainder of the chapter will talk about how to practice. We will cover two techniques to strengthen memories: retrieval practice (actively trying to remember something) and elaboration (actively connecting new knowledge to existing memories).

You might have noticed earlier that I didn’t tell you to simply read both sides of the flashcards. Rather, I asked you to read only the side containing the prompt and to try to remember the syntax yourself.

That’s because research has shown that actively trying to remember makes memories stronger. Even if you do not know the full answer, memories are easier to find when you have often tried to find them before. The remainder of this chapter will explore this finding in more depth and help you to apply it to learning programming.

Before we dive into how remembering can strengthen memories, we first need to understand the problem in more depth. You might think that a memory either is stored in your brain or isn’t, but the reality is a bit more complex. Robert and Elizabeth Bjork, professors of psychology at the University of California, Los Angeles, distinguished two different mechanisms in retrieving information from LTM: storage strength and retrieval strength.

Storage strength indicates how well something is stored in LTM. The more you study something, the stronger the memory of it is, until it becomes virtually impossible to forget it. Can you imagine forgetting that 4 times 3 is 12? However, not all information you have stored in your brain is as easy to access as the tables of multiplication.

Retrieval strength indicates how easy it is to remember something. I’m sure you’ve had that feeling where you’re sure you know something (a name, a song, a phone number, the syntax of the filter() function in JavaScript), but you can’t quite recall it; the answer is on the tip of your tongue; you just can’t reach it. That is information for which the storage strength is high—when you finally remember it, you can’t believe you ever couldn’t recall it—but the retrieval strength is low.

It is generally agreed that storage strength can only increase (recent research indicates that people never really forget memories4) and that it is the retrieval strength of memories that decays over the years. When you repeatedly study some piece of information, you increase the storage strength of that fact. When you try to remember a fact that you know you know, without extra study, you improve its retrieval strength.

When you’re looking for a particular piece of syntax, the problem often lies with your retrieval strength, not with your storage strength. For example, can you find the right code to traverse a list in C++ in reverse from the options in the following listing?

Listing 3.1 Six options to traverse a list in C++

1. rit = s.rbegin(); rit != s.rend(); rit++
2. rit = s.revbegin(); rit != s.end(); rit++
3. rit = s.beginr(); rit != s.endr(); rit++
4. rit = s.beginr(); rit != s.end(); rit++
5. rit = s.rbegin(); rit != s.end(); rit++
6. rit = s.revbeginr(); rit != s.revend(); rit++

All these options look quite similar, and even for an experienced C++ programmer it can be tricky to remember the right version, despite having seen this code several times. When you are told the right answer, it will feel like you knew it all along: “Of course it’s rit = s.rbegin(); rit != s.rend(); rit++ !”

Hence, the issue here is not the strength with which the knowledge is stored in your LTM (the storage strength) but the ease with which you can find it (the retrieval strength). This example shows that even if you’ve seen code a dozen times before, simply being exposed to the code may not be enough for you to be able to remember it. The information is stored somewhere in your LTM but not readily available when you need it.

The exercise in the previous section showed that it’s not enough to simply store information in your LTM. You also need to be able to retrieve it with ease. Like many things in life, retrieving information gets easier when you practice it a lot. Because you have never really tried to remember syntax, it is hard to recall it when you do need it. We know that actively trying to remember something strengthens memory—this is a technique that goes back as far as Aristotle.

One of the first studies on retrieval practice was done by a schoolteacher called Philip Boswood Ballard, who published a paper on the topic called “Obliviscence and Reminiscence” in 1913. Ballard trained a group of students to memorize 16 lines from the poem “The Wreck of the Hesperus,” which recounts the story of a vain skipper who causes the death of his daughter. When he examined their recall performance, he observed something interesting. Without telling the students that they would be tested again, Ballard had them reproduce the poem again after two days. Because they did not know another test was coming, the students had not studied the poem any further. During the second test, the students on average remembered 10% more of the poem. After another two days, their recall scores were higher again. Suspicious of the outcome, Ballard repeated such studies several times—but he found similar results each time: when you actively try to recall information without additional study, you will remember more of what you learned.

Now that you’re familiar with the forgetting curve and the effects of retrieval practice, it should be even more clear why simply looking up syntax you don’t know each time you need it is not a great idea. Because looking it up is so easy and is such a common task, our brains feel like they don’t really need to remember syntax. Therefore, the retrieval strength of programming syntax remains weak.

The fact that we do not remember syntax, of course, leads to a vicious cycle. Because we don’t remember it, we need to look it up. But because we keep looking it up instead of trying to remember it, we never improve the retrieval strength of these programming concepts—so we must keep looking them up, ad infinitum.

So next time you’re about to Google something, it might be worth first actively trying to remember the syntax. Even though it might not work that time, the act of trying to remember can strengthen your memory and might help you remember next time. If that doesn’t work, make a flashcard and actively practice.

In the previous section you learned that actively trying to remember information, with retrieval practice, makes information stick in your memory better. You also know that practicing memorizing information over an extended period works best. But there is a second way you can strengthen memories: by actively thinking about and reflecting on the information. The process of thinking about information you just learned is called elaboration. Elaboration works especially well for learning complex programming concepts.

Before we can dig into the process of elaboration and how you can use it to better learn new programming concepts, we need to take a closer look at how storage in the brain works.

You’ve seen that memories in your brain are stored in a networked form, with relationships to other memories and facts. The ways in which thoughts and the relationships between them are organized in our minds are called schemas, or schemata.

When you learn new information, you will try to fit the information into a schema in your brain before storing it in LTM. Information that fits an existing schema better will be easier to remember. For example, if I ask you to remember the numbers 5, 12, 91, 54, 102, and 87 and then list three of them for a nice prize of your choice, that will be hard to do, because there are no “hooks” to connect the information to. This list will be stored in a new schema: “Things I am remembering to win a nice prize.”

However, if I ask you to remember 1, 3, 15, 127, 63, and 31, that may be easier to do. If you think about it for a little bit, you can see that these numbers fit into a category of numbers: numbers that, when transformed into a binary representation, consist of only ones. Not only can you remember these numbers more easily, but you might also be more motivated to remember them because doing so is sensible: knowing the largest number that fits in a given number of bits can help in solving certain problems.
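You can verify the pattern with a short Python check. This is just a sketch to make the schema visible; the list names easy and hard and the helper is_all_ones are made up for the example.

```python
easy = [1, 3, 15, 127, 63, 31]    # the second list from the text
hard = [5, 12, 91, 54, 102, 87]   # the first list from the text


def is_all_ones(n: int) -> bool:
    """True if the binary representation of n consists only of ones."""
    # Adding 1 to a number like 0b0111 gives 0b1000, which shares no set
    # bits with the original, so the bitwise AND is zero.
    return n > 0 and (n & (n + 1)) == 0


for n in easy:
    print(f"{n:>3} -> {n:b}")

print(all(is_all_ones(n) for n in easy))  # every number fits the schema
print(any(is_all_ones(n) for n in hard))  # none of these do
```

Seeing the binary form next to each number is itself a hook: each value is one less than a power of two.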

Remember that when the working memory processes information, it also searches the LTM for related facts and memories. When your memories are connected to each other, finding the memories is easier. In other words, retrieval strength is higher for memories that relate to other memories.

When we save memories, the memories can even be changed to adapt themselves to existing schemata. In the 1930s, British psychologist Frederic Bartlett conducted an experiment where he asked people to study a short Native American tale called The War of the Ghosts and then try to recall the story weeks or even months later.5 From their descriptions, Bartlett could see that the participants had changed the story to fit their existing beliefs and knowledge. For example, some participants omitted details they considered irrelevant. Other participants had made the story more “Western” and in line with their cultural norms; for example, by replacing a bow with a gun. This experiment showed that people do not remember bare words and facts but that they adapt memories to fit with their existing memories and beliefs.

The fact that memories can be altered as soon as we store them can have downsides. Two people involved in the same situation might remember it entirely differently afterward because their own thoughts and ideas will affect how they store the memories. But we can also use the fact that memories are adapted as they are saved to our advantage, by storing relevant known information along with the new information.

Using elaboration to learn new programming concepts

As you saw earlier in this chapter, memories can be forgotten when the retrieval strength—the ease with which you can recollect information—is not strong enough. Bartlett’s experiment shows that even on the first save, when a memory is initially stored in LTM, some details of the memory can be altered or forgotten.

For example, if I tell you that James Monroe was the fifth president of the United States, you might remember that Monroe is a former president but forget that he was the fifth one before storing the memory. The fact that you do not remember the number can be caused by many factors; for example, you might think it is irrelevant, or too complex, or you might have been distracted. There are a lot of factors that impact how much is stored, including your emotional state. For example, you are more likely to remember a certain bug that kept you at your desk overnight a year ago than a random bug you fixed in a few minutes today.

While you cannot change your emotional state, there are many things you can do to save as much as possible of a new memory. One thing you can do to strengthen the initial encoding of memories is called elaboration. Elaboration means thinking about what you want to remember, relating it to existing memories, and making the new memories fit into schemata already stored in your LTM.

Elaboration might have been one of the reasons the pupils in Ballard’s study were better able to remember the words of the poem over time. Repeatedly recalling the poem forced the students to fill in missing words, each time committing them back to memory. They also likely connected parts of the poem to other things they remembered.

If you want to remember new information better, it helps to explicitly elaborate on the information. The act of elaboration strengthens the network of related memories, and when a new memory has more connections it’s easier to retrieve it. Imagine you are learning a new programming concept, such as list comprehensions in Python. A list comprehension is a way to create a list based on an existing list. For example, if you want to create a list of the squares of numbers you already have stored in a list called numbers, you can do that with this list comprehension:

squares = [x*x for x in numbers]

Imagine you are learning this concept for the first time. If you want to help yourself remember it better, it helps a great deal to elaborate deliberately by thinking of related concepts. For example, you might try thinking of related concepts in other programming languages, of alternative concepts in Python or other programming languages, or of how this concept relates to other paradigms.
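As one possible way to elaborate on this example, you could write the same computation in several forms you may already know and check that they agree. The sample contents of numbers and the variable names squares_loop, squares_map, and squares_lazy are chosen only for this sketch.

```python
numbers = [1, 2, 3, 4, 5]  # sample data for illustration

# The new concept: a list comprehension.
squares = [x*x for x in numbers]

# Related concept 1: an explicit for-loop that appends to a list.
squares_loop = []
for x in numbers:
    squares_loop.append(x * x)

# Related concept 2: map with an anonymous function, as in functional programming.
squares_map = list(map(lambda x: x * x, numbers))

# Related concept 3: a generator expression, which computes values lazily.
squares_lazy = (x * x for x in numbers)

print(squares == squares_loop == squares_map == list(squares_lazy))
```

Each variant connects the comprehension to something you already know (loops, map, lazy evaluation), which gives the new memory more hooks.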

EXERCISE 3.2 Use this exercise the next time you learn a new programming concept. Answering the following questions will help you elaborate and strengthen the new memory:

What concepts does this new concept make you think of? Write down all the related concepts.

Then, for each of the related concepts you can think of, answer these questions:

What other ways do you know to write code to achieve the same goal? Try to create as many variants of this code snippet as you can.

Do other programming languages also have this concept? Can you write down examples of other languages that support similar operations? How do they differ from the concept at hand?

Does this concept fit a certain paradigm, domain, library, or framework?

It’s important to know quite a bit of syntax by heart because more syntax knowledge will ease chunking. Also, looking up syntax can interrupt your work.

You can use flashcards to practice and remember new syntax, with a prompt on one side and code on the other side.

It’s important to practice new information regularly to fight memory decay.

The best kind of practice is retrieval practice, where you try to remember information before looking it up.

To maximize the amount of knowledge you remember, spread your practice over time.

Information in your LTM is stored as a connected network of related facts.

Active elaboration of new information helps strengthen the network of memories the new memory will connect to, easing retrieval.

1. See “Resumption Strategies for Interrupted Programming Tasks” by Chris Parnin and Spencer Rugaber (2011), http://mng.bz/0rpl.

2. See “Replication and Analysis of Ebbinghaus’ Forgetting Curve” by Jaap Murre (2015), https://journals.plos.org/plosone/article?id=10.1371/journal.pone.0120644.

3. See “Maintenance of Foreign Language Vocabulary and the Spacing Effect” by Harry Bahrick et al. (1993), www.gwern.net/docs/spacedrepetition/1993-bahrick.pdf.